By: Fikrat Azizov | Comments | Related: 1 | 2 | 3 | 4 | > Azure

Problem

Azure Analysis Services management tasks automation requires building cloud applications. In this series of tips, I'm going to share my experience on using Azure Logic App to automate Azure Analysis Services (AAS) model refresh tasks. I explained the design of the core application module for AAS model refresh in my earlier tips (see Automate Azure Analysis Services management using Logic App - Part 1 and Automate Azure Analysis Services management using Logic App - Part 2). I'll show how to build a flexible Logic App, capable of running multiple model refreshes in parallel, in this tip.

Solution

Azure Analysis Services refresh challenges

AAS models often come in different sizes and complexities. A typical enterprise can have number of models with many large tables, alongside with smaller models. While smaller models are relatively easy to process, the large models present the challenges, like:

- Large table sizes -

Large fact tables with historical data may contain many years' worth of

data. Reading large amounts of data can lead to long processing times and timeouts.

I think the best approach to handle this scenario is to create the model partitions.

The model partitions have following benefits:

- Partitions allow processing smaller portions of table and therefore make processing more manageable

- Partitions allow a selective processing (e.g. processing of only recently changed data), as oppose to processing of entire table

- High number of tables - Processing high number of tables may require significant time, even though each table processing is relatively fast. The best approach to handle this scenario is to run the processes in parallel.

- Complex SQL queries - Some SQL queries behind the model tables, may involve joins and aggregations of multiple large tables. This scenario may require query optimization exercise.

The Logic App modules we're going to create in the following sections, will be able address these scenarios.

Here is the description of the parameters, we'll need to pass to the core module:

- ModelName - Represents the model name.

- JSONBody - Represents JSON string with multiple table names.

- TableName - Represents the specific table name to be processed. Note that this module will handle JSonBody parameter, if both JSonBody and TableName parameters supplied.

- PartitionName - Represents specific partition name. Note that TableName parameter is required, if PartitionName is supplied.

- MaxParallelism - Represents the parallelism degree of the processing job (by default equals to 2).

Processing small Azure Analysis Services models

This is the simplest scenario, which allows processing the cubes on full model granularity level. You can choose this approach, if you know that your models operate on small tables and processing completes fast.

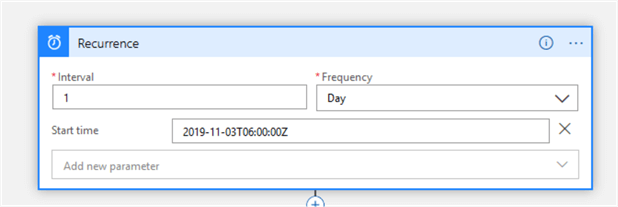

Let's create a new Logic App and add a Recurrence trigger there, with the required run frequency, as follows:

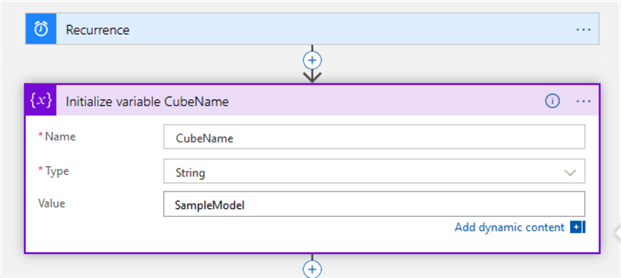

Next, add a Initialize variable action and configure it to assign the name of the cube you want to process, to the variable CubeName, as follows:

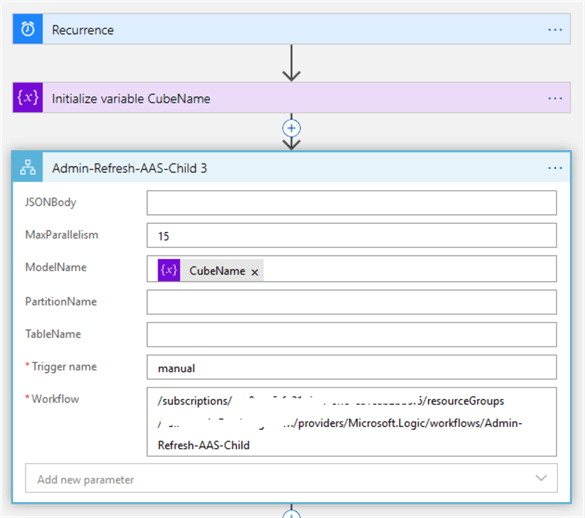

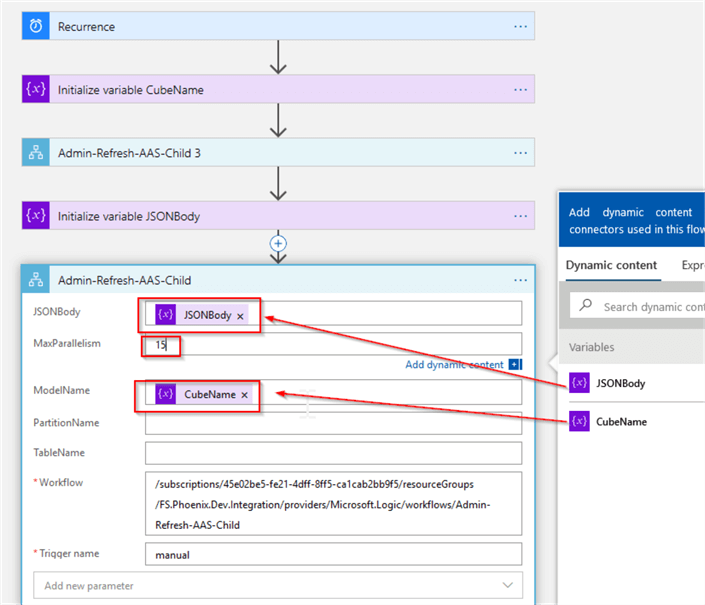

Finally, add Azure Logic Apps action, select the app Admin-Refresh-AAS-Child from the list of available applications and set its MaxParallelism parameter to the desired parallelism degree, as follows:

This is all that is required to process the model. You can add more branches to this app, to process other models in sequence or in parallel, of course.

Processing the Azure Analysis Services models with a high number of small tables

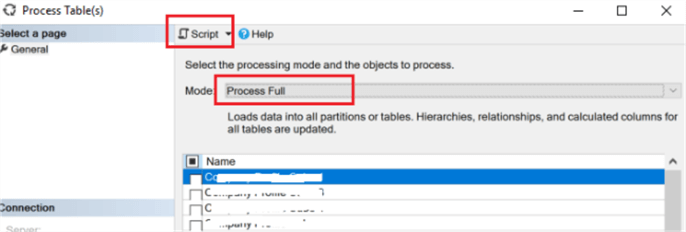

This scenario is like the above described scenario, except it has more tables. Processing such cubes on a full model level may require significant time, because AAS will process sequentially. However, the processing could significantly be accelerated and parallelized, if we pass the names of tables as a JSON string to the core module. You can easily generate JSON string with the names of tables, by connecting to AAS from SSMS, selecting Process table command from the right-click menu, selecting required tables and clicking 'Script' command, as follows:

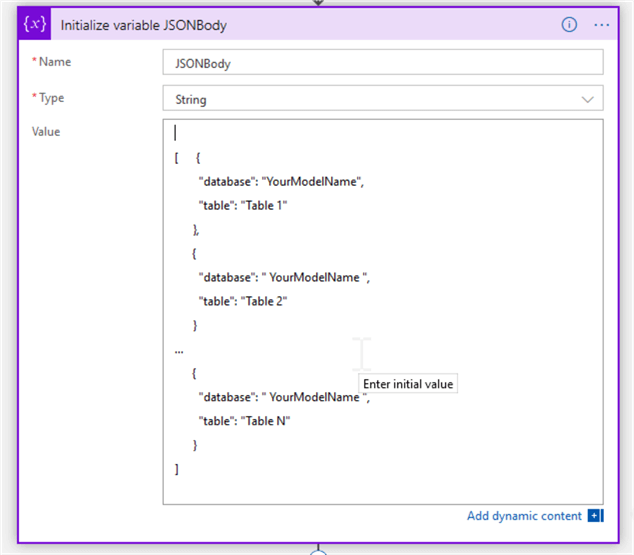

The resulting string should be in the following format:

[

{

"database": "YourModelName",

"table": "Table 1"

},

{

"database": "YourModelName",

"table": "Table 2"

}

…

{

"database": "YourModelName",

"table": "Table N"

}

]

Let's create a Logic App and add a Recurrence and Initialize variable CubeName components, like in the previous scenario, followed by another Initialize variable action with the following configuration:

Finally, add Azure Logic Apps action, select the app Admin-Refresh-AAS-Child and configure it as follows:

Processing Azure Analysis Services models with medium size tables

This scenario includes models with medium size tables, which can be processed within reasonable timeframe. We'll create a looping logic to process these tables sequentially.

We'll need the list of model tables to be processed in the following format:

["Table 1","Table 2",...,"Table N"]

You can use SSMS to generate the list of all model tables the same way, like we did in the previous section.

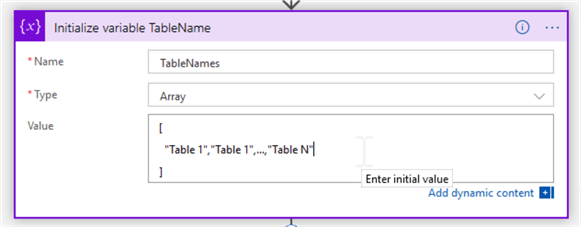

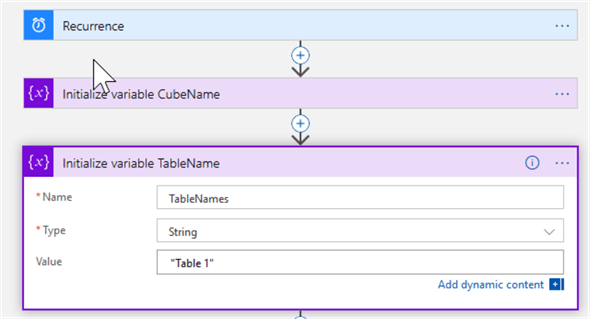

Let's create a Logic App and add a Recurrence and Initialize variable CubeName components, like in previous scenario, followed by another Initialize variable action to assign the table list string to the variable named TableNames, as follows:

Note that I've specified Array data type for this variable.

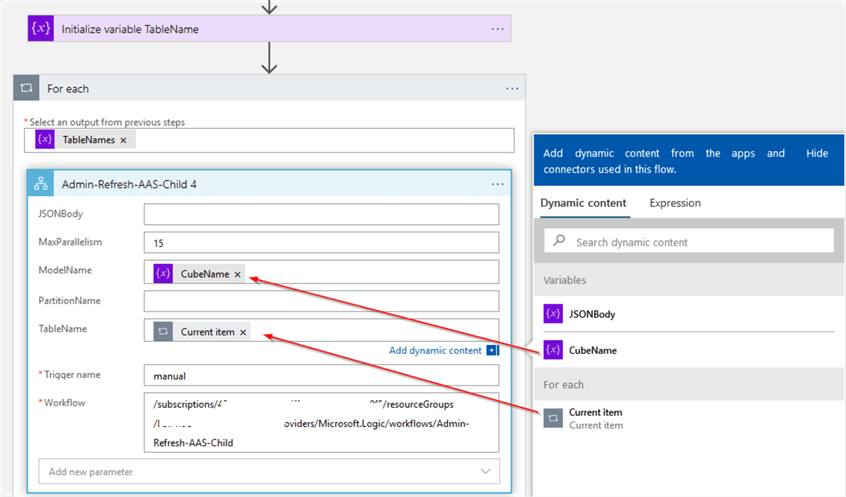

Next, add a ForEach action and include the TableNames variable to its looping set. Add Azure Logic Apps action, select the app Admin-Refresh-AAS-Child and configure it as follows:

Processing Azure Analysis Services models with large size tables

This scenario includes models with large size tables, which are preferred to be processed on a partition level.

We'll need to prepare the list of partition names, in the following format:

["Partition 1"," Partition 2",...," Partition N"]

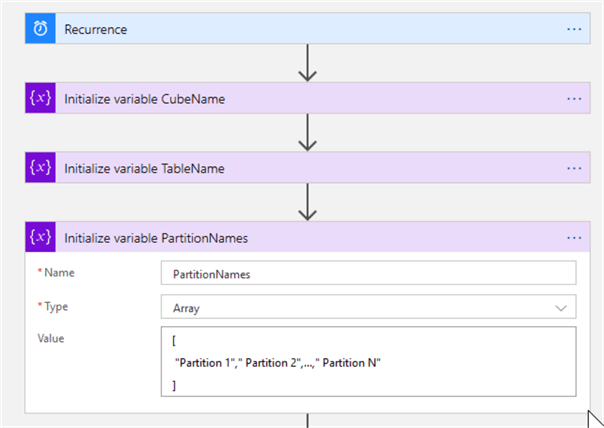

Let's add a Recurrence and Initialize variable CubeName components, like in previous section.

Next, add Initialize variable action with the string data type and use it to assign the name of the table, which will host partitions to the variable TableNames, as follows:

Next, add another Initialize variable action with the array data type and use it to assign partition names to the variable PartitionNames, as follows:

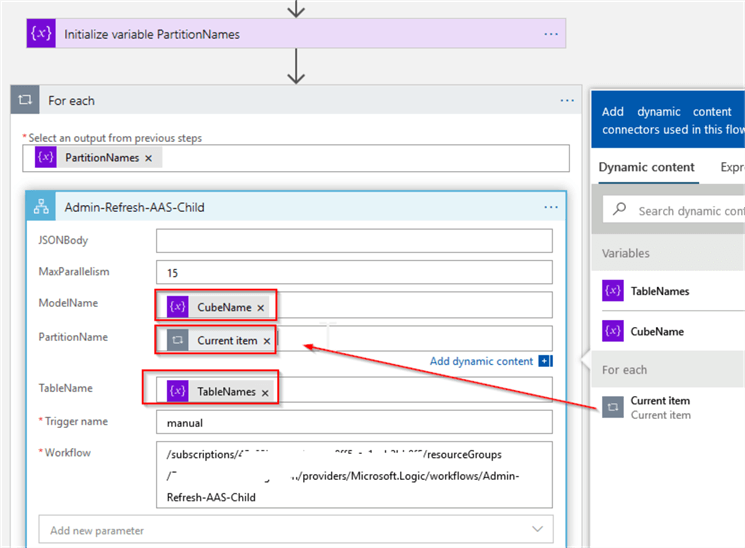

Next, add ForEach action and include PartitionNames variable to its looping set. Include a Azure Logic Apps action into the ForEach action, select the app Admin-Refresh-AAS-Child from the list of available applications and configure it as follows:

Final notes

The Logic App module design I've shared in this and previous tips allows creating flexible data flows to manage processing of multiple models sequentially or in parallel. The only constraint is that AAS will not allow processing the same model table from multiple application instances simultaneously, even if they're trying to process different partitions.

Next Steps

- Read: Refreshing Azure Analysis Services models using Logic Apps By Adam MarcZak

- Read: Logic App tutorials from Microsoft

- Read: Azure Tips

About the author

Fikrat Azizov has been working with SQL Server since 2002 and has earned two MCSE certifications. He’s currently working as a Solutions Architect at Slalom Canada.

Fikrat Azizov has been working with SQL Server since 2002 and has earned two MCSE certifications. He’s currently working as a Solutions Architect at Slalom Canada.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips