By: Jeremy Kadlec | Updated: 2024-01-02 | Comments (4) | Related: > Application Development

Problem

Our organization receives and transmits data on a daily basis for business-critical processes to numerous partners, vendors, customers, suppliers and more. Our data needs to be validated and cleansed prior to loading the data to our Microsoft SQL Server environments. We have made significant investments with our SQL Server DBAs and Developers to automate the processes as much as possible. Unfortunately, we are constantly shooting at a moving target i.e. file formats differ between each system, we often get unexpected file formats (data is added, removed or re-ordered), new data is being requested between the organizations and more unpredictable circumstances. On top of this, we need to ensure all of the data is properly vetted by the Data Stewards intimately knowledgeable on the data.

The constant data management moving target tends to overwhelm the SQL Server DBAs and Developers with the need for immediate response of updating the validation and import code to then load the data to meet the business requirements between the organizations. How can we have the best of both worlds? Have our SQL Server DBAs and Developers build a system to support the organization, while providing our Data Stewards the flexibility to lookup, validate and cleanse the data prior to the production load without having the deep programming knowledge of our technical team.

Solution

Data Quality Suite

With SQL Server Data Platform, most DBAs and Developers turn to either SQL Server Integration Services (SSIS), Azure Data Factory (ADF), BIML, API, XML, JSON or T-SQL code for data integration and data governance needs. With standardized files, formats and data, these technologies are able to automate tedious processes common in data management solutions. The SQL Server technologists are able to shine and show their value in terms of time savings for the entire organization.

Unfortunately, many processes are difficult to automate due daily changes between systems perhaps due to constantly changing marketing programs, systems updates, short term programs, shifting product lines, fulfillment via numerous vendors and more. From a technology perspective, this can be considered an SSIS Nightmare with hundreds of unwieldy packages, version control debacle and countless hours trying to automate processes that are rapidly evolving. These situations quickly become frustrating for the SQL Server technologists and can benefit from the collaboration with Application Owners, Data Stewards and Power Users, who can decipher the data and interface with the other organizations to work through the process.

So how do we avoid the SSIS Nightmare? Let the technologists build an automation solution that standardizes processes and gives the Data Stewards a solution for cleansing, validation, enrichment, standardization and data integration without becoming programmers? One data quality software solution, that I would like to introduce is Unison from Melissa Data, a solution built for Data Stewards enabling them to validate, lookup, cleanse, import and export data via an intuitive project-oriented framework. Unison data quality solutions include:

- Intuitive three step ETL process to perform data cleansing workflows

- Simple point and click interface to profile, cleanse, standardize, enrich, match, merge and validate data in real-time

- Highly refined data quality algorithms to ensure your data is accurate with monthly meta data updates

- Address verification for 240 countries, USPS certified mailings, Name Parsing and more enhancements

- Import and export data from major databases (SQL Server, Oracle and MySQL), Excel and flat files as data sources

- Intuitive web-based interface without programming expertise

- Collaboration among business users to ensure critical business processes are met

- Project oriented paradigm for re-use, modification and recurring scheduling

- Fast data processing - 50 million address records per hour

- Architecture designed for on-premises deployments of container technology to meet internal security and performance requirements

Let’s dive in to see Unison data quality suite in action.

Getting Started with Unison for Data Quality Management

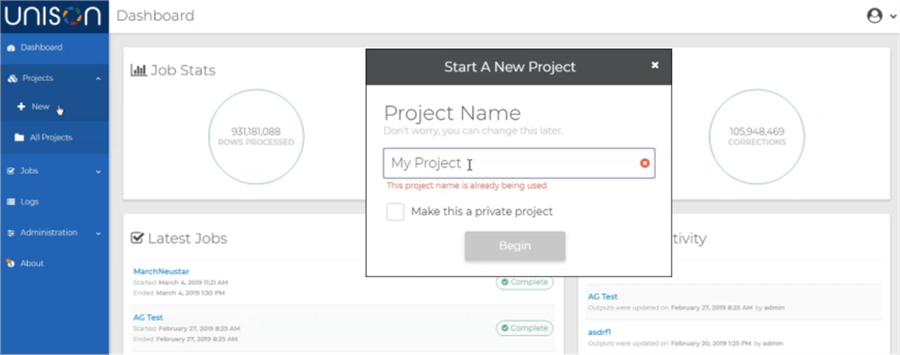

To get started with cleansing and validating your data with Unison from Melissa Data, login to the dashboard to have access to Projects, Jobs and administrative interfaces such as logs, user administration, meta data updates and more. Start a new project by clicking on the Projects link in the left navigation and select the New option. This begins the process by naming the project then specifying whether the project will be public or private.

Once the project is named, three core steps are followed to complete the project:

- Define the Input Source as either a text file or database connection as your data set

- Configure the data validation tasks

- Specify Output Source to either a text file or database connection

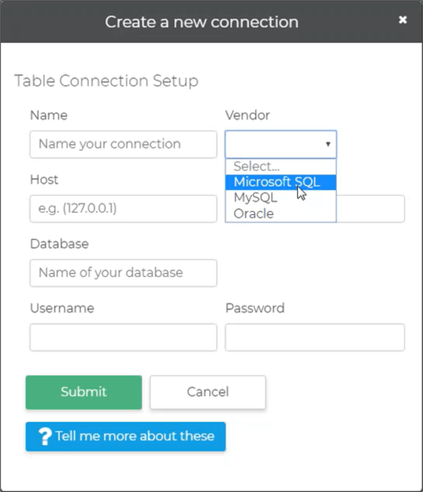

Both the Input and Output Sources can be a flat file or database connection to SQL Server, Oracle or MySQL. Below is the interface to create a database connection in your project.

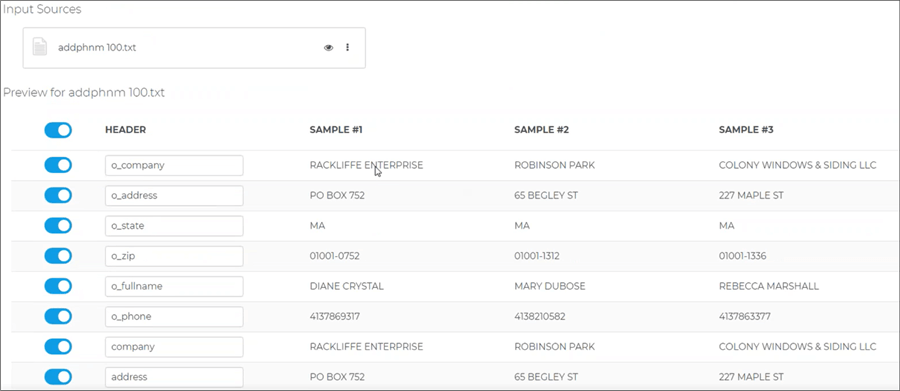

In this project, we will drag and drop a text file from Windows Explorer into Unison and Unison will read the file header as well as preview the first three records as shown below. Unison includes intelligent file detection, but if needed the text file properties can be configured in terms of the delimiter, field enclosure such as double quotes or if the file is a fixed width.

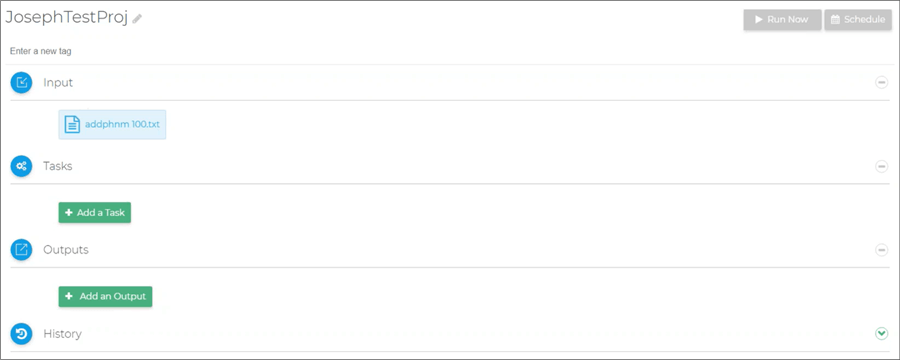

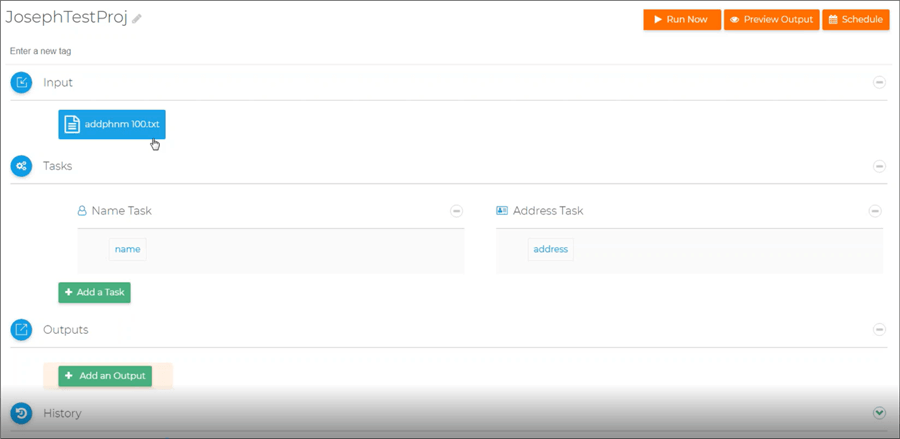

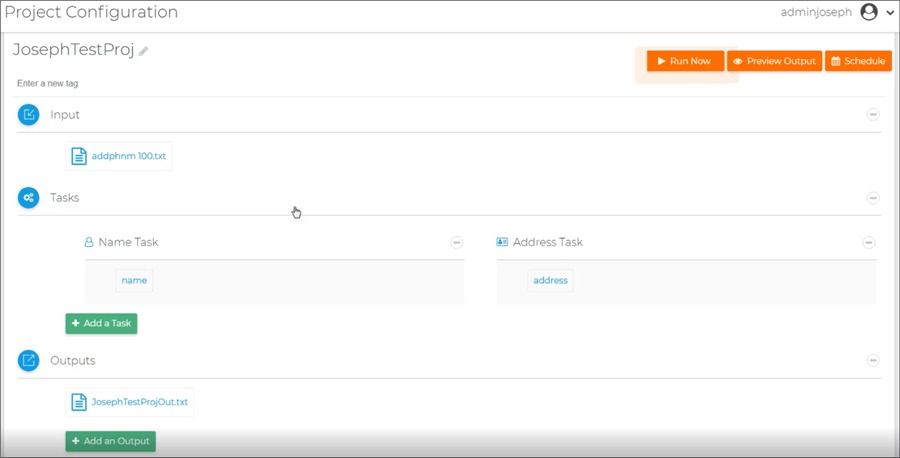

Once configured, the Project looks like this:

Unison Data Validation Tasks

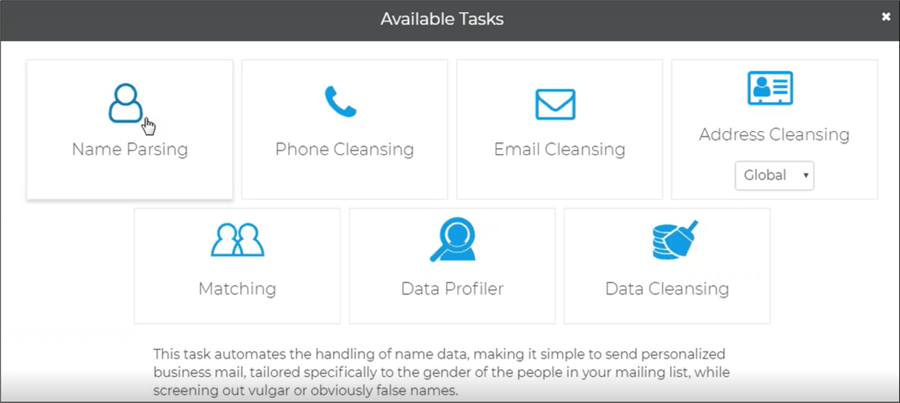

The true value of Unison is apparent with the data validation tasks for contact data. There are currently four tasks available for customer data including Name Parsing, Phone, Email and Address Cleansing which includes 240 countries. Coming soon will be Matching, Data Profiler and Data Cleansing that are currently supported in complimentary Melissa solutions. For Unison, simply click on any task to include it in the project. Let’s start with Address Cleansing for our project.

Address Validation with Unison

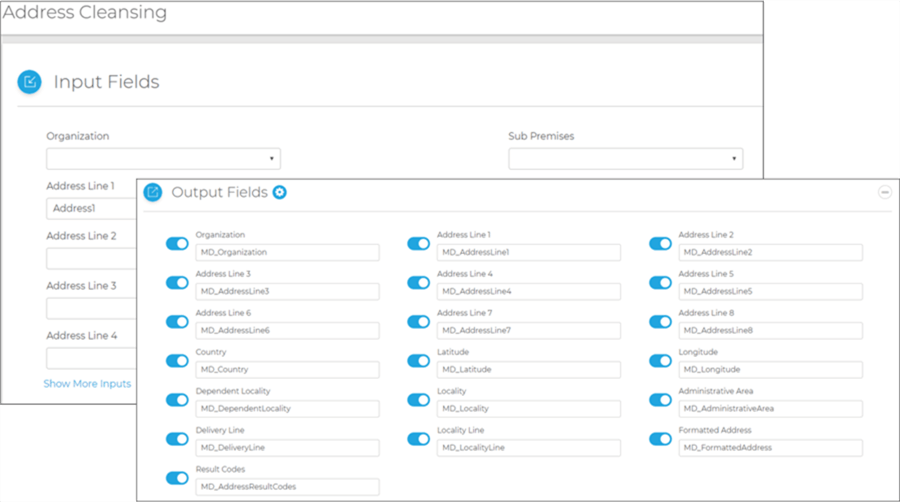

For Address Cleansing, the Task will match the input files to common address fields in Unison. The process starts with identifying the Input Fields then the Output Fields which will be ultimately used in the final step of the process. The Output Fields are the validated Melissa results to ensure the final data is accurate. Once we review and save the configuration, we will return to the main project design window.

Name Parsing with Unison

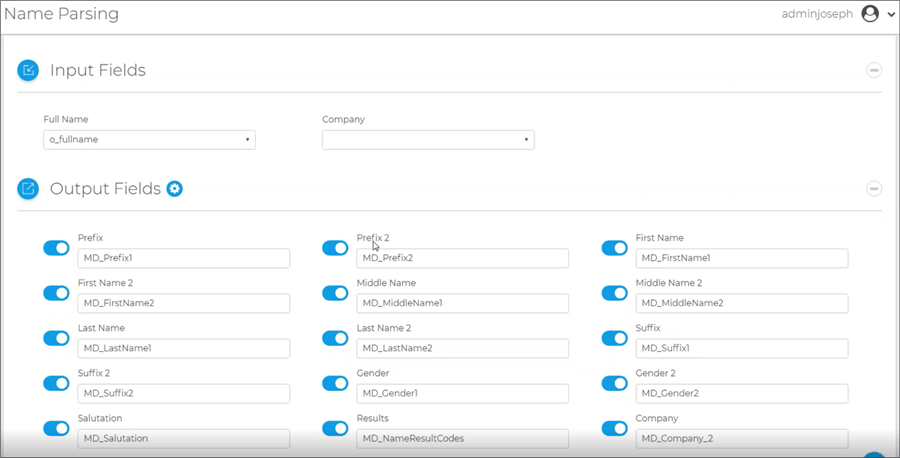

Our next step is to perform Naming Parsing with Unison that follows a similar paradigm as the Address Task. This includes input and output fields with validation as well as separation of each name segment.

When we return back to the project, the current status with the Input and Tasks are completed. Our final configuration step is to define the output.

Unison Outputs

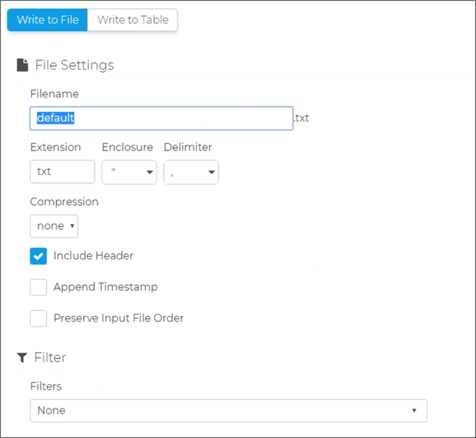

Just like with the Unison Inputs, the Outputs can be both to a File or Table in SQL Server, Oracle or MySQL. With the Write to File option shown below, the name, extension, enclosure and delimited are included. Further, the file can be compressed as a Zip or GZip file once built. Another unique feature is the ability to filter the file output based on Full address matches, full phone number matches, etc.

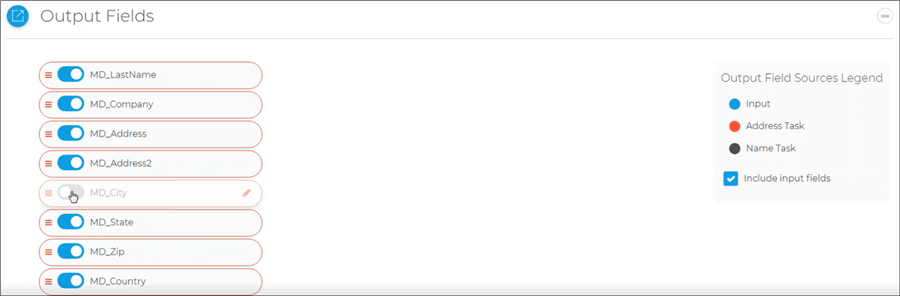

As a final step prior to outputting the data, Unison provides the ability to review the Output Fields, turn columns on / off with the slider and rearrange the column order. The legend on the right specifies the color coding associated with the Output Fields.

Final Project Review

Now that the project is configured, there are three options on the top right of the interface including:

- Run Now

- Preview Output

- Schedule

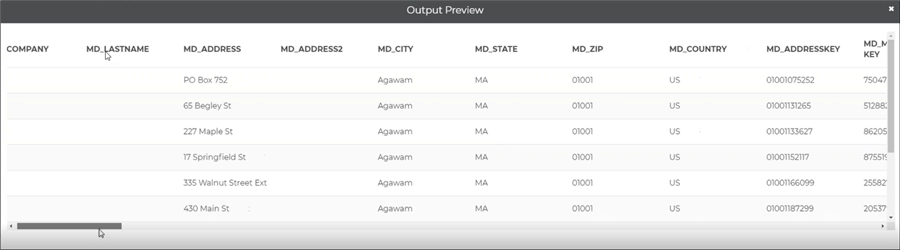

Preview Output

From the Preview Output option, you can review all columns and the first set of rows to ensure the data is going to be delivered in the needed format. If not, you can update the project to your requirements.

Run Now in Unison

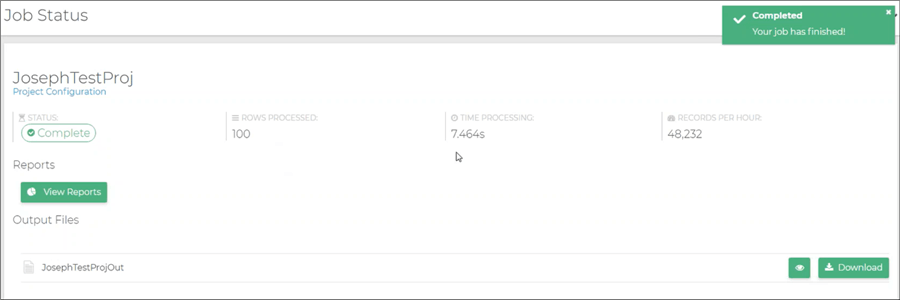

If the data is correct based on the Preview Output, then Run the job, review the status and download the final output file the next steps in your business processes. Whether that means copying the file to a directory for the data to be loaded by an automated process built by SQL Server Professionals, encrypting the file then sharing with team members or partners, etc.

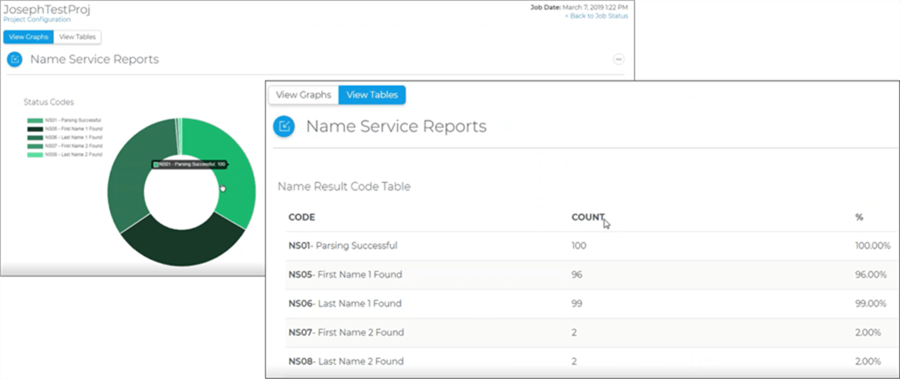

If you click on the View Reports button, you are able to see the results from both the Name and Address Parsing in either a graphical or tabular format for the types of changes made as well as errors recorded.

Unison Scheduling

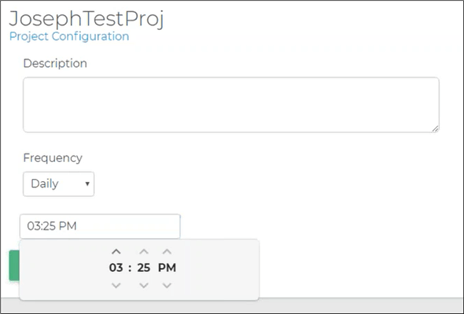

The final option for the project is to schedule the job to run either daily, weekly, monthly or a customized time frame. Below is the intuitive interface to schedule a job daily.

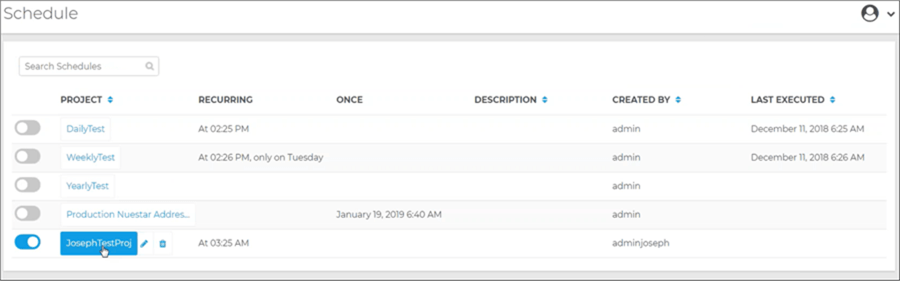

Unison also allows the opportunity to update and review the Jobs to meet daily management and monitoring needs.

How do I get started with Unison?

- Think about the numerous hours and relive the frustration of meeting critical deadlines that your business and technology groups face when trying to cleanse, validate, dedupe and load data among various systems in order to achieve high-quality data.

- If you are experiencing SSIS Nightmares with constantly changing requirements, code and too many SSIS Packages to count, then consider Unison as a technology for data enrichment that you can integrate into your processes. This enables your Power Users to validate the data and your SQL Server Professionals to manage the data in production environments.

- Get a personal demo of Unison from a member of the Melissa team and then put Unison through its paces in your environment with your data to see the ease of use firsthand. See how easy it is to validate your data and streamline tedious business processes that have not included data validation.

- Communicate how Unison can help your organization:

- Time savings for data quality issues, data validation, data enrichment, data matching, data migration, deduplication and data cleansing

- Put the power of data quality and data analysis in the hands of your data stewards

- No programming experience necessary, only knowledge of the company data and business rules

- Reduce the burden of your SQL Server Professionals to focus on production data

- Introduce data quality to new processes to improve the value of your data, customer experience, decision making, data warehouse projects and business intelligence

- After working with Unison, measure the tangible and intangible benefits and determine your next steps to radically streamline business processes and put the power of data quality in the hands of your power users.

Next Steps

- Learn about all of the Unison functionality.

- Get a personal demo of Unison.

- Download Unison.

- Check out the Melissa Wiki on Unison.

- Check out this Unison video.

MSSQLTips.com Product Spotlight sponsored by Melissa, makers of Unison.

About the author

Jeremy Kadlec is a Co-Founder, Editor and Author at MSSQLTips.com with more than 300 contributions. He is also the CTO @ Edgewood Solutions and a six-time SQL Server MVP. Jeremy brings 20+ years of SQL Server DBA and Developer experience to the community after earning a bachelor's degree from SSU and master's from UMBC.

Jeremy Kadlec is a Co-Founder, Editor and Author at MSSQLTips.com with more than 300 contributions. He is also the CTO @ Edgewood Solutions and a six-time SQL Server MVP. Jeremy brings 20+ years of SQL Server DBA and Developer experience to the community after earning a bachelor's degree from SSU and master's from UMBC.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2024-01-02