By: Chad Boyd | Comments | Related: > Hardware

Let's start the SSD blogs off with a comparison of the SQLIO tool running on my laptop and compare the results to some other traditional spindle based systems.

First, let's outline the systems we'll be comparing throughout the SSD blogs:

- First, my laptop. This is a loaded Dell XPS M1330 laptop running Windows Vista Ultimate x64. Specs include a 2.2Ghz Intel Core2Duo T7500 processor (dual-core, 800MHz FSB, 4MB L2 cache), 4gb DDR2 SDRAM @ 667Mhz, and a single 64gb Solid State Drive (Samsung)

- Next, my desktop. This is a Dell Precision Workstation T5400 running Windows Vista Business x64. Specs include a 2.0Ghz Intel Xeon E5405 (quad-core, 1333MHz FSB, 12MB L2 cache), 16gb DDR2 SDRAM @ 667Mhz, a single SATA II 10k RPM 146gb boot-drive (system, swap), and a single SATA II 7.2k RPM 750gb additional drive.

- Server #1. This server is a Dell PowerEdge 2950 running Windows Server Enterprise 2003 R2 x64. Specs include 2 x Intel Xeon 5160 3.0Ghz processors (dual-core, 1333MHz FSB, 4MB L2 cache each (8MB total)), 16gb RAM (8 x 2 gb Dual-Rank), dual PERC 5/E adapters, a RAID-1 boot-drive (system,swap) with 2xSATA II 7.2k RPM 250gb drives, and 2 LUNs attached via an external Dell MD-1000 DAS enclosure. Each external LUN is made up of 7 SATA 7.2k RPM 500gb spindles each in a RAID-5 configuration. For tests, we'll only make use of the 2 externally-attached LUNs.

- Server #2. This server is an HP DL380 running Windows Server Enterprise 64-bit Edition attached to an HP EVA 6100 SAN. Specs include 2 x Intel Xeon X5450 3.0GHz processors (quad-core, 1333MHz FSB, 12MB L2 cache each (24MB total)), 32gb RAM, tri emulex LP1050 HBA's, 2 internal 15k SAS drives RAID 1 for boot/swap, external log LUN with 8 SAS 15k 146gb drives RAID10 configured, 2 external data LUNs each with 20 SAS 15k 146gb drives RAID 10 configured for data files. SAN is connected via 2Gb FC fabric and dual Brocade 4gb silkworm fiber switches.

As you can see, we have quite a range of systems from my laptop, a beefy desktop, a mid-size server/storage configuration, and a larger-size server/storage configuration. This should give us a good range of tests throughout the different scenarios. So, let's get this party started, first with some SQL IO comparisons.

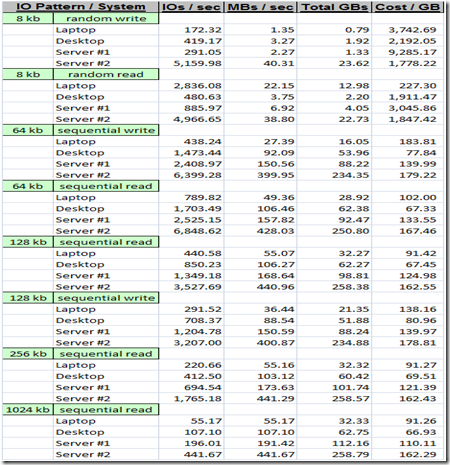

For our SQL IO test, we'll use the following parameters:

- All tests are run back-to-back in a serial manner - each test hits either the LOG file or the DATA files (so any tests that have the log file on the same LUN as a data file are never mixing IO operations)

- All tests use 8 processing threads, run for 10 minutes each, are enabled for multi-io, and allow up to between 16 and 64 outstanding IOs each

- Files used include 2 data files and 1 log file

- On my laptop, all files reside on the solid state drive and are sized at 15gb each.

- On my desktop the log file and one of the data files resides on the 10k boot drive - the other data file resides on the 7.2k drive. All files are sized at 75gb each.

- On Server #1, the log file and one of the data files resides on the first LUN from the DAS - the other data file resides on the 2nd LUN from the DAS. All files are sized at 100gb each.

- On Server #2, the log file resides on the log lun, and the 2 data files each reside on a separate data LUN each. All files are sized at 100gb each.

- The tests include each of the following:

- 8kb random writes to the data files (pattern for checkpoints, tempdb, etc.)

- 8kb random reads to the data files (pattern for random data reads, singleton seeks, etc.)

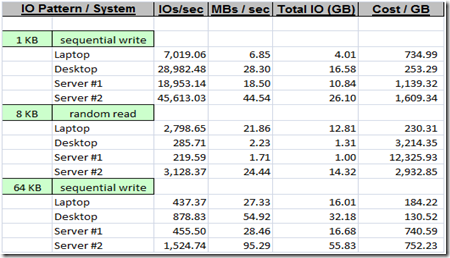

- 64kb sequential writes to the log file (bulk log writes)

- 8kb random reads to the log file (rollbacks, log reader, etc.)

- 1kb sequential writes to the log file (small log writes)

- 64kb sequential writes to the data files (checkpoints, reindex, bulk inserts)

- 64kb sequential reads to the data files (read-ahead, reindex, checkdb)

- 128kb sequential reads to the data files (read-ahead, reindex, checkdb)

- 128kb sequential writes to the data files (bulk inserts, reindex)

- 256kb sequential reads to the data files (read-ahead, reindex)

- 1MB sequential reads to the data files (backups)

So, let's take a look at the results - in each test we've captured the number of IOs performed per second, the number of MBs per second, the total amount of data transferred in GBs, and the Cost per GB where I took the cost of each system and correlated it against the total GBs transferred.

First, here are the results for operations against the simulated DATA files/luns:

And secondly, here are the results for the tests against the simulated LOG files/luns:

So, where did the SSD perform well? Clearly, it is king in the random read scenarios, nearly keeping pace with Server #2 on each LUN - and, if you include costing in the figures, it's a landslide in favor of the SSD. These benefits for random read patterns are great for day-to-day computing, which is why I see such improvements on my laptop for day-to-day use. In the SQL Server world, this would be beneficial for traditional OLTP-like read patterns (i.e. small, singleton type reads of data) and also for something else we've looked at in detail recently - reads against heavily fragmented structures.

For writes, it's a different story - the SSD lagged significantly behind all other systems in nearly every write scenario. The same holds true for sequential read patters as well, which aligns with findings elsewhere. On a side note, I did perform some sequential read/write tests with smaller IO sizes (1kb and 4kb) for the data files on each of the systems except the Server #2 system and the SSD performs quite a bit better with these smaller sized IOs than the larger sized IOs that are performed typically in a SQL Server system - seems to follow findings from other reviews I've found and researched a bit on the web. Of course, we really care about how it performs with SQL for these posts, and as you can see, it really is beneficial for smaller, random IO patterns - with the larger and sequential type patterns, it looks like for now most traditional spindle systems will outperform them for now (at least the commodity versions that you can get in a laptop for example, I'm sure some of the solid-state systems built for enterprise deployments are a bit more valid for server scenario type tests, you can find these types of systems by most major storage manufacturers today like EMC, HP, Hitachi, etc.).

Good start to our SSD posts - next I think will be the impact of these drives on fragmentation (since it's fresh in our minds and all).

Enjoy!

About the author

Chad Boyd is an Architect, Administrator and Developer with technologies such as SQL Server, .NET, and Windows Server.

Chad Boyd is an Architect, Administrator and Developer with technologies such as SQL Server, .NET, and Windows Server.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips