By: Koen Verbeeck | Comments | Related: > SQL Server 2016

Problem

The launch of SQL Server 2016 is accompanied with the release of a new sample database: Wide World Importers. This sample database aims to give a more realistic approach. One of its strongest features is the ability to influence the distribution and the amount of data that resides in the database. In this tip, we will find out how you can keep your Wide World Importers databases up to date.

Solution

The tip Installing the new SQL Server sample databases Wide World Importers explains how you can install and configure the OLTP sample database WideWorldImporters. Part 2 covered the installation and configuration of the OLAP database WideWorldImportersDW. In both tips, scripts were used to generate data right until the 31st of May 2016. Now we will explore how we can load data right until the current date.

WideWorldImporters Sample Data Generation

As always, let's start with the OLTP database, since this database will serve as a source for the data warehouse. The process is quite simple. All we have to do is run the stored procedure DataLoadSimulation.PopulateDataToCurrentDate. This stored procedure will generate random sales and purchase data up to the current date, on a day-to-day basis. Keep in mind that if you have to generate data for a long time range, the script can take a while to run. The stored procedure has the following parameters:

- AverageNumberOfCustomerOrdersPerDay: The average number of orders per day. This is the most important parameter to influence the size of the generated data.

- SaturdayPercentageOfNormalWorkDay and SundayPercentageOfNormalWorkDay: The respective percentages of the average number of customer orders, set by the previous parameter. This also influences the size of the data, but only for the weekends.

- IsSilentMode and AreDatesPrinted: The parameters are for debugging purposes and to keep track of the status of the data generation.

On my system, I used the following script:

EXEC [DataLoadSimulation].[PopulateDataToCurrentDate] @AverageNumberOfCustomerOrdersPerDay = 100, @SaturdayPercentageOfNormalWorkDay = 70, @SundayPercentageOfNormalWorkDay = 50, @IsSilentMode = 1, @AreDatesPrinted = 1;

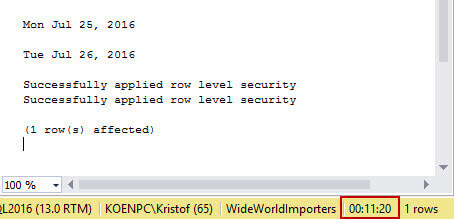

I choose quite high values for the first three parameters, to simulate a busy period for the Wide World Importers company. When the script is running, you can keep track of the days generated so far if you set @AreDatesPrinted to true.

![Run [DataLoadSimulation].[PopulateDataToCurrentDate] to generate data in the SQL Server 2016 sample database](/tipimages2/4431_GenerateData01.jpg)

To generate almost two months of sample data, the script took a bit over 11 minutes. If you use smaller values for the parameters, the script will execute much faster. The official documentation estimates about 10 minutes for a whole year.

The data is generated until the day before today. So in the screenshot above the script was run on the 27th of July 2016. This is important for the loading of the data warehouse, as you'll find out in the next section.

WideWorldImportersDW Sample Data Generation

The next step is to load the data warehouse. The data will be fetched from the OLTP database, so make sure you have run the script from the previous section. The official documentation suggests to do a full load. Since the entire process runs under 2 minutes, this is acceptable. First you have to run the stored procedure [Application].[Configuration_ReseedETL]. This will remove all of the data in the data warehouse and set the cut off times back to the initial date of the 31st of December 2012.

![Run the stored procedue run the stored procedure [Application].[Configuration_ReseedETL]to reseed ETL](/tipimages2/4431_GenerateData04.jpg)

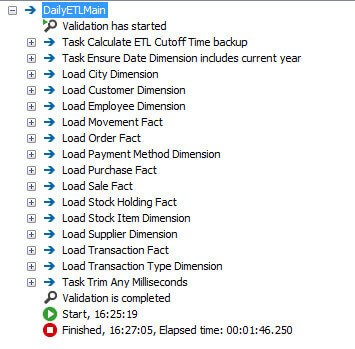

After that, you simply need to run the DailyETLMain package (which can be found on Github) which will bring the data warehouse up to date.

The biggest problem with this approach is that there is no opportunity to build up any history inside the dimension tables. Another method would be to not run the Configuration_ReseedETL stored procedure, but to run only the SSIS package. In this case, the ETL should pick up only the delta changes since the last load. However, at the time of writing there seems to be an issue with the [Integration].[ETL Cutoff] table, where the dates from the last load are stored for each data warehouse table. The problem is that this table stores the point in time when the SSIS package has run. For example the datetime 2016-07-27 15:43:12. But the OLTP load preceding the run of the SSIS package has only loaded data to the 26th of July (see the screenshot in the previous section). This means that the next day, data is loaded until the 27th of July. But since the cutoff time is set to 2016-07-27 15:43:12, you might miss a lot of data. This issue can be resolved by manually updating the cutoff table in order to set the cutoff date to an earlier point in time.

UPDATE [WideWorldImportersDW].[Integration].[ETL Cutoff] SET [Cutoff Time] = DATEADD(DAY,-1,[Cutoff Time]) WHERE [Table Name] <> 'Date';

After running this script, the SSIS package only takes about 10 seconds to run. It has to be noted that it seems only make changes in the fact tables and not any changes in the dimension tables, so there is still no history build-up in the dimension tables. But, this might be because data is only generated by the stored procedure. There are also sample applications available to interact with the OLTP database and those might generate changes that will ripple down to the dimension tables.

Conclusion

Keeping the sample databases up to date is just a matter of running a simple stored procedure and an Integration Services package. It's easy to schedule those in an SQL Server Agent job. However, there seems to be an issue with the logic behind the cutoff dates - necessary for delta loading - and this requires intervention.

Next Steps

- Try it out yourself! You can download all the source code from Github.

- Make sure to check out all of the sample scripts and application workflows as well!

- Read the tips Installing the new SQL Server sample databases Wide World Importers - Part 1 and Part 2 on how to install and configure the sample databases.

- For SQL Server 2016 tips, you can use this overview.

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips