By: Simon Liew

Problem

A SQL Server AlwaysOn Availability Group depends on the Windows Server Failover Cluster (WSFC) as its platform technology. A fundamental understanding of WSFC is essential to designing and troubleshooting high availability and disaster recovery in a SQL Server AlwaysOn solution. This tip explains how Dynamic Quorum works in WSFC, with demonstrations.

Solution

Dynamic Quorum is a WSFC feature introduced in Windows Server 2012, and it is enabled by default. With Dynamic Quorum, the cluster quorum majority is no longer fixed by the initial cluster configuration. NOTE: In this tip, "Windows Cluster" or "cluster" refers to WSFC.

Dynamic Quorum allows the Windows Cluster to recalculate the quorum requirement dynamically, based on the state of the active voters in the cluster. For example, if a node is shut down, its quorum vote is removed and no longer counted towards the quorum. When the node comes back up, Windows Cluster includes it in the quorum calculation again.
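The recalculation described above can be sketched in PowerShell. This is a simplified model, not the cluster's actual algorithm: it assumes a majority means strictly more than half of the votes currently counted, and it ignores the Windows Server 2012 R2 adjustment that keeps the vote total odd. `Get-QuorumMajority` and `Test-Quorum` are hypothetical helper names, and the 5-node cluster is made up for illustration; no cluster or FailoverClusters module is needed to run it.

```powershell
# Simplified model of Node Majority quorum, static versus dynamic.
function Get-QuorumMajority {
    param([Parameter(Mandatory)][int]$TotalVotes)
    # A majority is strictly more than half of the votes currently counted.
    [int][math]::Floor($TotalVotes / 2.0) + 1
}

function Test-Quorum {
    param([int]$VotesUp, [int]$TotalVotes)
    # True when the live votes reach the majority of the counted total.
    $VotesUp -ge (Get-QuorumMajority -TotalVotes $TotalVotes)
}

# Hypothetical 5-node cluster in which nodes are shut down cleanly, one at a time.
$configuredVotes = 5
foreach ($up in 5..1) {
    # Static quorum: the total stays at the configured number of votes.
    $static  = Test-Quorum -VotesUp $up -TotalVotes $configuredVotes
    # Dynamic quorum: the total follows the voters that are still up.
    $dynamic = Test-Quorum -VotesUp $up -TotalVotes $up
    '{0} node(s) up -> static quorum: {1}, dynamic quorum: {2}' -f $up, $static, $dynamic
}
```

In this model, the static column loses quorum once only 2 of the original 5 votes remain, while the dynamic column survives down to a single node because the requirement shrinks with each clean shutdown.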

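One important caveat: Windows Cluster cannot survive the simultaneous failure of a majority of its voting members. Dynamic Quorum only recalculates the votes after the cluster has survived a failure, so it helps when nodes go down one at a time (or a minority at a time), not when a majority is lost at once.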
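The difference between sequential and simultaneous failures can be illustrated with a small simulation. It is a sketch under simplifying assumptions: each failure event is detected and the votes are recalculated before the next event, and the Windows Server 2012 R2 odd-vote adjustment is ignored. `Test-FailureSequence` is a hypothetical function, not part of the FailoverClusters module.

```powershell
# Returns $true if the cluster keeps quorum through every failure event.
function Test-FailureSequence {
    param(
        [int]$StartingVotes,
        [int[]]$FailuresPerEvent   # voters lost simultaneously in each event
    )
    $total = $StartingVotes
    foreach ($lost in $FailuresPerEvent) {
        $remaining = $total - $lost
        $majority  = [int][math]::Floor($total / 2.0) + 1
        # Quorum is lost before Dynamic Quorum gets a chance to adjust.
        if ($remaining -lt $majority) { return $false }
        # The cluster survived, so Dynamic Quorum drops the lost votes.
        $total = $remaining
    }
    $true
}

Test-FailureSequence -StartingVotes 5 -FailuresPerEvent 1,1,1   # one voter at a time
Test-FailureSequence -StartingVotes 5 -FailuresPerEvent 3       # majority at once
```

Both runs lose three voters, but only the run that loses them one event at a time keeps quorum.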

In Windows Server 2012 R2, the recommendation is to always configure a witness, regardless of the number of nodes. However, this tip looks at Dynamic Quorum behavior with the Node Majority quorum type when nodes are added to and shut down in the cluster.

Even Number of Nodes in a Windows Cluster

The Windows Cluster for this tip is built on Windows Server 2012 R2 Standard Edition. Because the cluster hosts a SQL Server AlwaysOn Availability Group, it is not configured with shared storage.

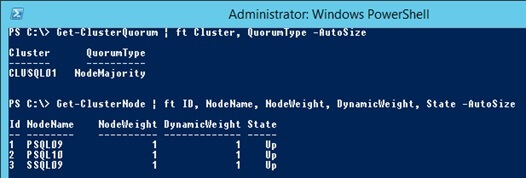

The demonstration starts with a Node Majority quorum type and 3 nodes in the cluster. Nodes with the prefix "P" reside in the primary site and nodes with the prefix "S" reside in the secondary site.

NodeWeight determines whether a node participates in quorum. If you explicitly remove a node's vote, its NodeWeight is 0 and the cluster cannot use that node to determine the quorum majority.

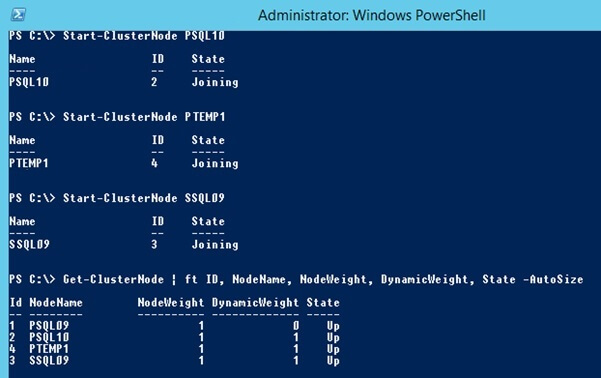

The DynamicWeight property shows the quorum vote state of the node. A value of 0 indicates that the node does not have a quorum vote. A value of 1 indicates that the node has a quorum vote. DynamicWeight controls the current weight that is attributed to the node because of Dynamic Quorum.

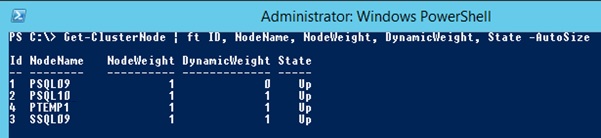

With 3 nodes in the cluster, each node has a DynamicWeight of 1, for an odd total of 3 votes.

Get-ClusterQuorum | ft Cluster, QuorumType -AutoSize
Get-ClusterNode | ft ID, NodeName, NodeWeight, DynamicWeight, State -AutoSize

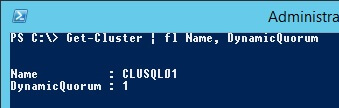
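To total the votes in Get-ClusterNode output like the one above, a small helper can sum NodeWeight and DynamicWeight. This is a sketch: `Get-QuorumVoteSummary` is a hypothetical function, and the demo objects are hand-built PSCustomObject values that only mimic the Get-ClusterNode property names for the initial 3-node cluster.

```powershell
# Summarizes configured votes, current dynamic votes and the majority needed.
function Get-QuorumVoteSummary {
    [CmdletBinding()]
    param([Parameter(Mandatory, ValueFromPipeline)][object[]]$Node)
    begin   { $nodes = @() }
    process { $nodes += $Node }
    end {
        # NodeWeight: configured vote. DynamicWeight: vote currently assigned by Dynamic Quorum.
        $configured = ($nodes | Measure-Object -Property NodeWeight -Sum).Sum
        $current    = ($nodes | Measure-Object -Property DynamicWeight -Sum).Sum
        [pscustomobject]@{
            ConfiguredVotes = [int]$configured
            CurrentVotes    = [int]$current
            MajorityNeeded  = [int][math]::Floor($current / 2.0) + 1
        }
    }
}

# Mock data shaped like the 3-node output above (not read from a real cluster).
$demoNodes = @(
    [pscustomobject]@{ NodeName = 'PSQL09'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
    [pscustomobject]@{ NodeName = 'PSQL10'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
    [pscustomobject]@{ NodeName = 'SSQL09'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
)
$demoNodes | Get-QuorumVoteSummary
```

On a cluster node, the same helper could be fed live data with `Get-ClusterNode | Get-QuorumVoteSummary`, since it only reads the NodeWeight and DynamicWeight properties.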

If you are interested in seeing the DynamicQuorum property, run the PowerShell command below. Because the command is executed on one of the cluster nodes, the cmdlet outputs the name of the local cluster.

Get-Cluster | fl Name, DynamicQuorum

Let’s make the demonstration more interesting by adding a node to the cluster, which gives the cluster an even number of nodes.

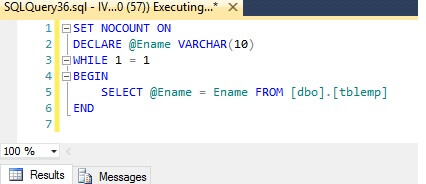

Before adding the new node to the cluster, a simple continuous workload is started against the Availability Group Listener (AGL) to confirm that the AGL stays online while changes are made to the cluster.

SET NOCOUNT ON;
DECLARE @EName VARCHAR(10);
WHILE 1 = 1
BEGIN
    SELECT @EName = Ename FROM [dbo].[tblemp];
END

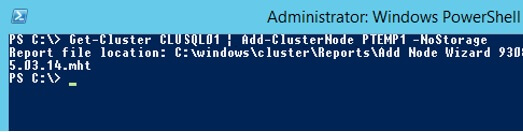

When adding a new node to the cluster, it is important to specify the NoStorage option with the Add-ClusterNode cmdlet so that shared storage on the nodes is not added to the cluster during the join operation. Adding shared storage to the cluster has serious repercussions: all shared storage on all nodes goes OFFLINE when it is added to the cluster, which will crash SQL Server if you have databases residing on that storage.

When node PTEMP1 is added to the cluster, a report is generated and PowerShell prompts for the location of the report. This process is similar to using the Failover Cluster Manager GUI to add a node to the cluster.

Get-Cluster CLUSQL01 | Add-ClusterNode PTEMP1 -NoStorage

PTEMP1 is a node residing in the primary site. All is well, and if we check the cluster quorum votes now, the cluster has assigned the newly added node PTEMP1 a DynamicWeight of 1 and set the DynamicWeight of node PSQL09 to 0, so the 4 nodes still hold an odd total of 3 votes. The cluster uses Dynamic Quorum to adjust the quorum votes dynamically through DynamicWeight.
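The tie-breaking behavior seen after adding PTEMP1 can be modeled as follows, assuming no witness: every node that is up and has a NodeWeight of 1 receives a dynamic vote, and if that leaves an even number of votes, one node gives up its vote. `Update-DynamicWeight` and its `-TieBreakNode` parameter are hypothetical; the real cluster chooses on its own which node loses its vote, and `-TieBreakNode 'PSQL09'` is used here only to reproduce the state described above. The function modifies the objects it is given, so use it only with PSCustomObject copies, never with live Get-ClusterNode objects.

```powershell
# Simplified model of DynamicWeight assignment for a Node Majority cluster without a witness.
function Update-DynamicWeight {
    param(
        [Parameter(Mandatory)][object[]]$Nodes,
        [string]$TieBreakNode   # hypothetical: node that gives up its vote on a tie
    )
    # Every node that is up and has a configured vote gets a dynamic vote.
    foreach ($n in $Nodes) {
        $n.DynamicWeight = if ($n.State -eq 'Up' -and $n.NodeWeight -eq 1) { 1 } else { 0 }
    }
    $voters = @($Nodes | Where-Object DynamicWeight -eq 1)
    # An even number of votes could split 50/50, so remove one vote to make the total odd.
    if ($voters.Count -gt 0 -and $voters.Count % 2 -eq 0) {
        $loser = $voters | Where-Object NodeName -eq $TieBreakNode | Select-Object -First 1
        if (-not $loser) { $loser = $voters[0] }
        $loser.DynamicWeight = 0
    }
    $Nodes
}

# Mock state right after PTEMP1 joins (plain objects, not a real cluster).
$nodes = @(
    [pscustomobject]@{ NodeName = 'PSQL09'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
    [pscustomobject]@{ NodeName = 'PSQL10'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
    [pscustomobject]@{ NodeName = 'SSQL09'; NodeWeight = 1; DynamicWeight = 1; State = 'Up' }
    [pscustomobject]@{ NodeName = 'PTEMP1'; NodeWeight = 1; DynamicWeight = 0; State = 'Up' }
)
Update-DynamicWeight -Nodes $nodes -TieBreakNode 'PSQL09' |
    Format-Table NodeName, NodeWeight, DynamicWeight, State -AutoSize
```

Under this model, a node with NodeWeight 0 never receives a dynamic vote, which matches the description of NodeWeight earlier in this tip.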

Shutting Down Windows Cluster Nodes

Now we will simulate shutting down nodes in the cluster. The primary Availability Group replica and the cluster resources are hosted on PSQL09, so we will keep PSQL09 alive as the last node and shut down all the other nodes. If PSQL09 is shut down, the AGL will go down even if it is the only node being shut down.

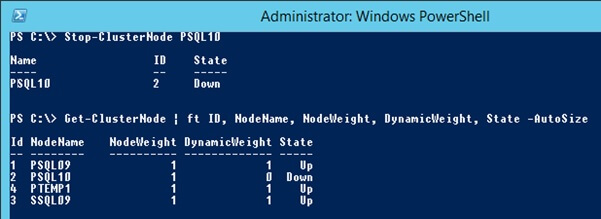

We start by shutting down node PSQL10. Checking the quorum votes afterwards shows that the cluster has removed the quorum vote of node PSQL10. We still have an odd number of quorum votes because PSQL09 is now assigned a DynamicWeight of 1.

Stop-ClusterNode PSQL10
Get-ClusterNode | ft ID, NodeName, NodeWeight, DynamicWeight, State -AutoSize

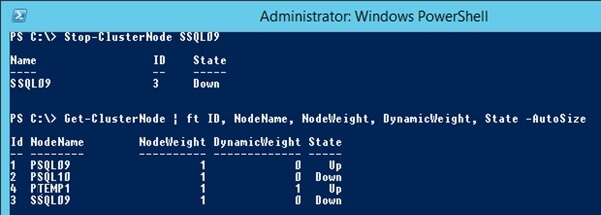

Next, shut down node SSQL09. We now have 2 out of 4 nodes still up, and the DynamicWeight of PTEMP1 has been adjusted dynamically to 1. The workload on the AGL is still running and the cluster is still up.

Stop-ClusterNode SSQL09
Get-ClusterNode | ft ID, NodeName, NodeWeight, DynamicWeight, State -AutoSize

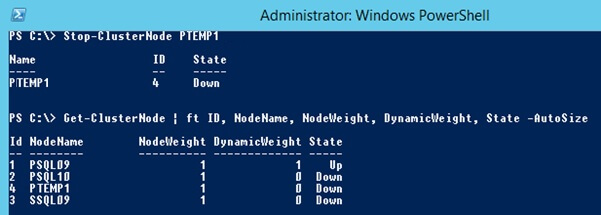

Next, shut down PTEMP1, the second-to-last node. The DynamicWeight of 1 is now set on PSQL09, which is both the last node still up and the node hosting the primary AG replica.

Stop-ClusterNode PTEMP1
Get-ClusterNode | ft ID, NodeName, NodeWeight, DynamicWeight, State -AutoSize

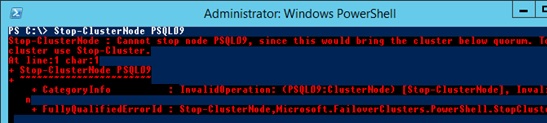
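The vote totals through this shutdown sequence can be traced with an even simpler model, under the assumption that, without a witness, the cluster keeps the vote total odd by removing one vote whenever the number of live voters is even. `Get-DynamicVoteTotal` is a hypothetical helper, and the step labels simply follow the walkthrough above; this is an illustration, not a reading of real cluster state.

```powershell
# Total dynamic votes for a given number of live voting nodes (no witness).
function Get-DynamicVoteTotal {
    param([int]$NodesUp)
    if ($NodesUp -le 0) { return 0 }
    # An even number of live voters is reduced by one so that a 50/50 split cannot tie.
    if ($NodesUp % 2 -eq 0) { $NodesUp - 1 } else { $NodesUp }
}

$steps = 'All 4 nodes up', 'PSQL10 stopped', 'SSQL09 stopped', 'PTEMP1 stopped'
for ($i = 0; $i -lt $steps.Count; $i++) {
    $up    = 4 - $i
    $votes = Get-DynamicVoteTotal -NodesUp $up
    $need  = [int][math]::Floor($votes / 2.0) + 1
    '{0,-16} nodes up: {1}  votes: {2}  majority: {3}' -f $steps[$i], $up, $votes, $need
}
```

Under this model, the cluster needs only 1 vote once two nodes remain, which is consistent with the cluster staying up on PSQL09 alone.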

If you attempt to shut down the last node PSQL09, you will get an error "Cannot stop node PSQL09, since this would bring the cluster below quorum. To stop the entire cluster use Stop-Cluster."

The workload against the AGL is still running: with the Node Majority quorum type, the cluster was able to stay up on the last remaining node.

If you start up all the nodes again, they rejoin the cluster and the quorum is recalculated.

Summary

The introduction of Dynamic Quorum has greatly improved the resilience of Windows Cluster availability and the way a cluster breaks a tie when nodes are split evenly.

Underneath, the cluster uses the DynamicWeight property to determine the quorum majority. So, even in a cluster with an even number of nodes, the quorum votes are adjusted dynamically to an odd number so that the cluster can form a quorum majority and avoid a split-brain situation.

Implementing WSFC might seem easy, but the design considerations can get very complicated when multiple data centers and the public cloud are involved. For example, imagine there are 2 nodes at each site and Dynamic Quorum has assigned 1 vote to the primary site and 2 votes to the secondary site. If the site-to-site network becomes unavailable, the primary site holds only 1 of the 3 votes and goes down, while the cluster stays up at the secondary site, which takes over as the primary. Typically, invoking a full DR failover while the primary site is still up and running is not an ideal situation.
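The site-split example can be checked with the same majority arithmetic. This sketch assumes that, when the site-to-site link fails, each site can count only the votes of its own nodes, and that the majority is measured against the 3 votes assigned before the split. `Test-PartitionQuorum` is a hypothetical helper, and the vote counts are the ones from the example above.

```powershell
# Reports which side of a network partition still holds a quorum majority.
function Test-PartitionQuorum {
    param(
        [System.Collections.IDictionary]$VotesBySite   # site name -> votes held by that site
    )
    $total    = ($VotesBySite.Values | Measure-Object -Sum).Sum
    $majority = [int][math]::Floor($total / 2.0) + 1
    foreach ($site in $VotesBySite.Keys) {
        [pscustomobject]@{
            Site      = $site
            Votes     = $VotesBySite[$site]
            HasQuorum = $VotesBySite[$site] -ge $majority
        }
    }
}

# 2 nodes per site; Dynamic Quorum gave 1 vote to Primary and 2 to Secondary.
Test-PartitionQuorum -VotesBySite ([ordered]@{ Primary = 1; Secondary = 2 }) | Format-Table -AutoSize
```

Reversing the assignment to `Primary = 2; Secondary = 1` would keep the primary site up instead, which avoids the unwanted DR failover described above.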

My next tip will introduce another concept called Dynamic Witness and demonstrate the cluster behavior when a witness is introduced in the cluster as a tie-breaker for a 50% node split situation.


About the author

Simon Liew is an independent SQL Server Consultant in Sydney, Australia. He is a Microsoft Certified Master for SQL Server 2008 and holds a Master’s Degree in Distributed Computing.

This author pledges the content of this article is based on professional experience and not AI generated.
