By: Rakesh Patil | Updated: 2023-10-13 | Comments (5) | Related: > Locking and Blocking

Problem

Data modification in SQL Server is one of the DML operations. For DBA/Developers, it's important to understand its modification workflow and internal lock system.

Solution

This tip explains how data modification happens from the locks and SQL Server engine point of view and to understand each lock initiated at each database object during modification. Additionally, it will describe the details of the data modification process and internal locks acquired during the modification process.

To demonstrate, we have a sample database, AdventureWorks2019, and we want to update two data records from a table Person.Person. We will see how data modification happens internally and which locks are acquired during modification.

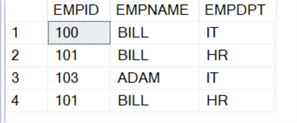

CREATE DATABASE COMPANY GO USE COMPANY CREATE TABLE EMPLOYEE (EMPID INT, EMPNAME VARCHAR(10), EMPDPT VARCHAR(10)) GO SELECT * FROM EMPLOYEE INSERT INTO EMPLOYEE VALUES (100,'BILL','IT') INSERT INTO EMPLOYEE VALUES (101, 'BILL', 'HR') INSERT INTO EMPLOYEE VALUES (103, 'ADAM', 'IT') INSERT INTO EMPLOYEE VALUES (101, 'MIKE', 'HR')

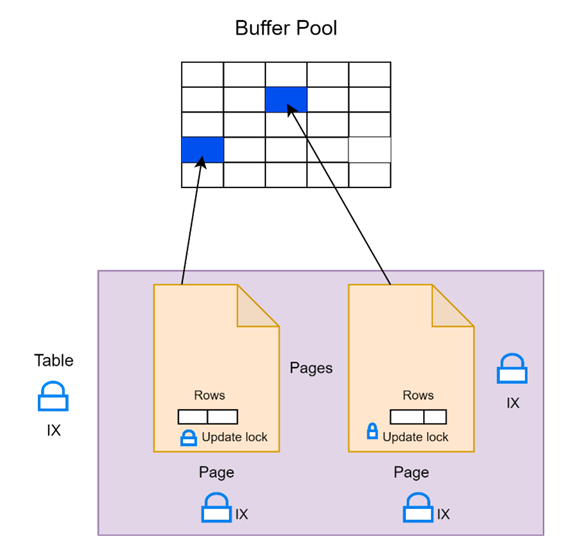

Before any changes are made to the two data pages, the pages will be read into SQL Server's buffer pool. The buffer pool is part of the SQL Server buffer manager, which manages the physical memory SQL Server uses to store data pages. The buffer pool is an area of memory that stores frequently accessed data pages from a database.

When a SQL Server instance reads a data page from disk, it first checks to see if it is already in the buffer pool. If the page is already in memory, the instance can read it directly from the buffer pool instead of from the disk, which is much faster. If the page is not in memory, the instance must read it from the disk and store it in the buffer pool for future use.

Initial SQL Server Locking

Once the pages are in memory, the storage engine's access methods will use a locking hierarchy to acquire locks. There are three objects (Table, Page, and actual rows), and each object acquires one specific lock depending on the operation.

As we update records in the UPDATE query, before actual modification, the following locks get acquired:

- One Intent-exclusive (IX) table-level lock

- Two Intent-exclusive (IX) page-level locks

- Two UPDATE row-level locks

These locks indicate that the storage engine intends to obtain exclusive row-level locks somewhere on the page. Then, it will take two update locks, one for each row. These locks will remain in place until the transaction is either committed or rolled back, and they intend to protect the changes made by the transaction.

Update locks are used instead of exclusive locks because they allow other threads to read the locked row until it is changed. This approach allows for better concurrency, and it is used by the default isolation level in SQL Server, which is read committed.

Two data file pages are highlighted in blue in the buffer pool. These pages contain the table structure and two rows we plan to update.

When locking, SQL Server will obtain an intent-exclusive lock at the table level and on those two pages. After that, we can start acquiring update locks on the individual rows. However, we may encounter a situation where we must wait for an updated lock to be released. During this time, other threads or transactions can still read the row we have locked, as we haven't made any changes.

Only when we convert to an exclusive lock the row will become unreadable. Once we have all the update locks, we can change to exclusive locks and make our modifications.

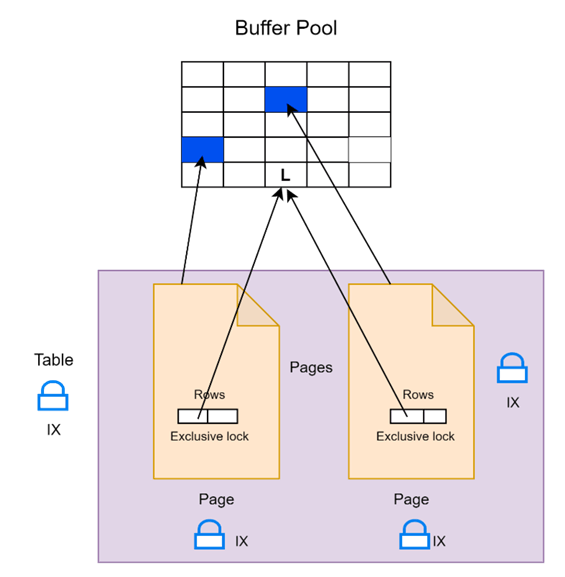

Changing and Logging

Once all the update locks are acquired for the records that need to change, a set of steps is followed for each row. First, the update lock needs to convert into an exclusive lock, which can only happen after other threads have released any shared locks they may have been holding on the same row while reading it.

Once the exclusive lock is obtained, the necessary changes to the data file page currently in memory are made. The lock protects this change and will continue to do so until the end of the transaction.

After the change, a log record is generated to describe the specific alteration made to that page. However, the transaction is not yet committed, which will occur later.

Modification

Each update lock will be converted to an exclusive lock during the modification process. Once it holds an exclusive lock, we could change the row.

Once we've changed the row on the data file page in memory, it generates a log record for each modification, and these log records will be stored in log blocks. The image below shows that each modification generates a log record and is stored in buffer pool memory indicated with "L." In summary, for each modification, the Update lock converts to an exclusive lock, modifies records in a data file in memory, generates log records stored in memory, and so on, and the transaction is still not committed.

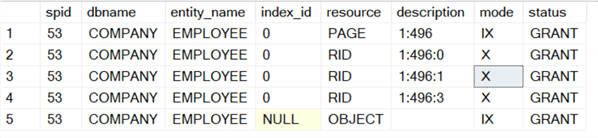

CREATE VIEW Lock_checks AS

SELECT

request_session_id AS SessionID,

DB_NAME(resource_database_id) AS DatabaseName,

CASE

WHEN resource_type = 'OBJECT' THEN OBJECT_NAME(resource_associated_entity_id)

WHEN resource_associated_entity_id = 0 THEN 'Not Applicable'

ELSE OBJECT_NAME(p.object_id)

END AS EntityName,

index_id AS IndexID,

resource_type AS ResourceType,

resource_description AS ResourceDescription,

request_mode AS RequestMode,

request_status AS RequestStatus

FROM

sys.dm_tran_locks t

LEFT JOIN

sys.partitions p ON p.partition_id = t.resource_associated_entity_id

WHERE

resource_database_id = DB_ID() AND resource_type <> 'DATABASE';

BEGIN TRAN

UPDATE EMPLOYEE SET EMPNAME = 'JENNY' WHERE EMPID = '100'

GO

UPDATE EMPLOYEE SET EMPNAME = 'JENNY' WHERE EMPID = '101'

SELECT * FROM Lock_checks WHERE SessionID = @@spid AND ResourceType <> 'METADATA' AND EntityName <> 'Lock_checks'

COMMIT TRAN

Committing the Changes

Although we've made all the changes for this transaction, the transaction is not yet committed. It's ready to commit; however, some steps must be done before the transaction can commit.

All the transaction's log records, including the final commit tran log record, must be written to the transaction log file. And they're written out using a write-through flag that forces the log records to write to disk.

Now, forcing the log records out to disk makes the transaction durable. If a system crashes, for instance, the record of all the changes is on disk so that they can be replayed as part of crash recovery. This write-through nature is by design; there's no way to change or override it.

Suppose synchronous high-availability features are used, like database mirroring or availability groups. In that case, the system will stop and wait for the log records for this particular transaction to be sent across to the database mirror or the availability group replica and then acknowledge back to the main system. That's to ensure the transaction is also durable on those other databases.

Once all the log records are durable on disk, all the locks held by the transaction can be released. Once the locks are released, we can acknowledge to the user or the application that the commit has occurred successfully. So, the user will get back the five rows affected message. The transaction is now committed. It cannot be rolled back. Commit is a final operation that cannot be undone. So, our changes are now durable on disk.

Committing

In memory, we've got our two changed data file pages and a set of log records stored in the buffer pool.

Before committing the change, the log records must be written to the transaction log. The log is doing sequential writes in a write-ahead logging mechanism, meaning that the log records will be written to disk before the data file pages are written out, all the locks will be released, and the transaction can be committed. The user gets two rows of affected messages.

The Transaction Has Committed - Now What?

All the changes made in the transaction have been recorded in the transaction log, ensuring durability. If there is a crash, the effects of the transaction can be replayed. The data file pages with modified data are still stored in memory and haven't been written to disk yet. These modified pages in memory are referred to as "dirty pages." The reason they are not immediately written to disk when the transaction is committed is that it's not necessary. The transaction log already contains a complete description of all the changes made on those pages, ensuring durability. Eventually, these dirty pages will be written to disk, synchronizing the data files with the transaction log. However, immediately writing them out upon transaction commitment would be highly inefficient. A "checkpoint" is used to write out the data file pages periodically to address this.

Next Steps

- Implement complex data modification to understand buffer pool, locking workflow at each database object.

- Read additional articles about locking.

About the author

Rakesh Patil has six years of experience as a SQL Server Database Administrator with a focus on performance tuning, configuration, Disaster Recovery, Log Shipping, Database Mirroring, Replication, AlwaysON and AWS.

Rakesh Patil has six years of experience as a SQL Server Database Administrator with a focus on performance tuning, configuration, Disaster Recovery, Log Shipping, Database Mirroring, Replication, AlwaysON and AWS.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2023-10-13