By: Aaron Bertrand | Updated: 2022-10-03

Problem

When writing T-SQL, a lot of developers use either COALESCE or ISNULL in order to provide a default value in cases where the input is NULL. They have various reasons for their choice, though sometimes this choice may be based on false assumptions. Some think that ISNULL is always faster than COALESCE. Some think that the two are functionally equivalent and therefore interchangeable. Some think that you need to use COALESCE because it is the only one that adheres to the ANSI SQL standard. The two functions do have quite different behavior and it is important to understand the qualitative differences between them when using them in your code.

Solution

The following differences should be considered when choosing between COALESCE and ISNULL:

The COALESCE and ISNULL SQL Server functions handle data type precedence differently

COALESCE determines the type of the output based on data type precedence. Since DATETIME has a higher precedence than INT, the following queries both yield DATETIME output, even if that is not what was intended:

DECLARE @int INT, @datetime DATETIME;
SELECT COALESCE(@datetime, 0);
SELECT COALESCE(@int, CURRENT_TIMESTAMP);

Results:

1900-01-01 00:00:00.000
2012-04-25 14:16:23.360

With ISNULL, the data type is not influenced by data type precedence, but rather by the first item in the list. So, swapping ISNULL in for COALESCE in the above queries:

DECLARE @int INT, @datetime DATETIME;
SELECT ISNULL(@datetime, 0);
--SELECT ISNULL(@int, CURRENT_TIMESTAMP);

For the first SELECT, the result is:

1900-01-01 00:00:00.000

If you uncomment the second SELECT, the batch terminates with the following error, since you can't implicitly convert a DATETIME to INT:

Implicit conversion from data type datetime to int is not allowed. Use the CONVERT function to run this query.

While in some cases this can lead to errors (usually a good thing, since it prompts you to correct the logic), you should also be aware of the potential for silent truncation. I consider this to be data loss without an error or any hint whatsoever that something has gone wrong. For example:

DECLARE @c5 VARCHAR(5);
SELECT 'COALESCE', COALESCE(@c5, 'longer name')
UNION ALL
SELECT 'ISNULL', ISNULL(@c5, 'longer name');

Results:

COALESCE  longer name
ISNULL    longe

This happens because ISNULL takes the data type of the first argument, while COALESCE inspects all of the elements and chooses the best fit (in this case, VARCHAR(11)). You can test this by performing a SELECT INTO:

DECLARE @c5 VARCHAR(5);
SELECT
  c = COALESCE(@c5, 'longer name'),
  i = ISNULL(@c5, 'longer name')
INTO dbo.testing;
SELECT name, t = TYPE_NAME(system_type_id), max_length, is_nullable
FROM sys.columns
WHERE [object_id] = OBJECT_ID('dbo.testing');

Results:

name  t        max_length  is_nullable
----  -------  ----------  -----------
c     varchar  11          1
i     varchar  5           0

As an aside, you might notice one other slight difference here: columns created as the result of COALESCE are nullable, while columns created as a result of ISNULL are not. This is not really an endorsement one way or the other, just an acknowledgement that they behave differently. The biggest impact of this difference shows up with computed columns: if you try to create a primary key or other non-null constraint on a computed column defined with COALESCE, you will receive an error:

CREATE TABLE dbo.works ( a INT, b AS ISNULL(a, 15) PRIMARY KEY );
CREATE TABLE dbo.breaks ( a INT, b AS COALESCE(a, 15) PRIMARY KEY );

Result:

Cannot define PRIMARY KEY constraint on column 'b' in table 'breaks'. The computed column has to be persisted and not nullable.

Msg 1750, Level 16, State 0, Line 1

Could not create constraint. See previous errors.

Using ISNULL, or defining the computed column as PERSISTED, alleviates the problem. Trying again, this works fine:

CREATE TABLE dbo.breaks ( a INT, b AS COALESCE(a, 15) PERSISTED PRIMARY KEY );

Just be aware that if you try to insert more than one row where a is either NULL or 15, you will receive a primary key violation error.
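To see the primary key behavior just described, the sketch below recreates dbo.breaks, inserts a NULL (so b is computed as 15), and then tries to insert 15. Assuming the PERSISTED definition is accepted as described above, the second insert should fail with a primary key violation, which SQL Server reports as error 2627. DROP TABLE IF EXISTS requires SQL Server 2016 or later.

```sql
-- Recreate the table from the example above (DROP TABLE IF EXISTS needs SQL Server 2016+).
DROP TABLE IF EXISTS dbo.breaks;
CREATE TABLE dbo.breaks ( a INT, b AS COALESCE(a, 15) PERSISTED PRIMARY KEY );

INSERT dbo.breaks (a) VALUES (NULL);  -- b is computed as 15

DECLARE @err INT = 0;
BEGIN TRY
  INSERT dbo.breaks (a) VALUES (15);  -- b is 15 again, so this is a duplicate key
END TRY
BEGIN CATCH
  SET @err = ERROR_NUMBER();          -- expected: 2627 (PRIMARY KEY violation)
END CATCH;

SELECT error_number = @err,
       row_count    = (SELECT COUNT(*) FROM dbo.breaks);
```

Catching the error in TRY/CATCH keeps the batch running, so you can inspect both the error number and the single row that survived.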

One other slight difference due to data type conversion can be demonstrated with the following query:

DECLARE @c CHAR(10);
SELECT 'x' + COALESCE(@c, '') + 'y';
SELECT 'x' + ISNULL(@c, '') + 'y';

Results:

xy
x          y

Both results are VARCHAR(12), but COALESCE returns VARCHAR (which has higher precedence than CHAR), so the empty string stays empty, while ISNULL obeys the specification of the first input and converts the empty string to a CHAR(10), that is, ten spaces.
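Both the truncation and the padding shown above come from ISNULL adopting the data type of its first argument. The sketch below is one way to check this without creating a table, and one way to work around it if you want to keep ISNULL: SQL_VARIANT_PROPERTY reports each result's declared maximum length, CONVERT widens the first argument so nothing is cut off, and DATALENGTH (rather than LEN, which ignores trailing spaces) exposes the CHAR(10) padding. The choice of VARCHAR(11) simply matches the length of 'longer name'.

```sql
DECLARE @c5 VARCHAR(5), @c CHAR(10);

-- Silent truncation: ISNULL returns VARCHAR(5); widening the first argument avoids it.
SELECT expr    = 'COALESCE',
       result  = COALESCE(@c5, 'longer name'),
       max_len = SQL_VARIANT_PROPERTY(COALESCE(@c5, 'longer name'), 'MaxLength')
UNION ALL
SELECT 'ISNULL',
       ISNULL(@c5, 'longer name'),
       SQL_VARIANT_PROPERTY(ISNULL(@c5, 'longer name'), 'MaxLength')
UNION ALL
SELECT 'ISNULL (widened)',
       ISNULL(CONVERT(VARCHAR(11), @c5), 'longer name'),
       SQL_VARIANT_PROPERTY(ISNULL(CONVERT(VARCHAR(11), @c5), 'longer name'), 'MaxLength');

-- Padding: ISNULL converts '' to CHAR(10), i.e. ten spaces; COALESCE keeps it empty.
SELECT coalesce_bytes = DATALENGTH(COALESCE(@c, '')),  -- 0
       isnull_bytes   = DATALENGTH(ISNULL(@c, '')),    -- 10
       isnull_len     = LEN(ISNULL(@c, ''));           -- 0, because LEN ignores trailing spaces
```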

The SQL Server COALESCE function supports more than two arguments

If you need to evaluate more than two inputs, you'll have to nest ISNULL calls, while COALESCE can handle any number. The upper limit is not explicitly documented, but for all intents and purposes, COALESCE will handle your needs better in this case. Example:

SELECT COALESCE(a, b, c, d, e, f, g) FROM dbo.[table];
-- to do this with ISNULL, you need:
SELECT ISNULL(a, ISNULL(b, ISNULL(c, ISNULL(d, ISNULL(e, ISNULL(f, g)))))) FROM dbo.[table];

The two queries produce absolutely identical plans; in fact, the output is expanded to exactly the same expression for both queries:

CASE WHEN [tempdb].[dbo].[table].[a] IS NOT NULL THEN [tempdb].[dbo].[table].[a]
ELSE CASE WHEN [tempdb].[dbo].[table].[b] IS NOT NULL THEN [tempdb].[dbo].[table].[b]
  ELSE CASE WHEN [tempdb].[dbo].[table].[c] IS NOT NULL THEN [tempdb].[dbo].[table].[c]
    ELSE CASE WHEN [tempdb].[dbo].[table].[d] IS NOT NULL THEN [tempdb].[dbo].[table].[d]
      ELSE CASE WHEN [tempdb].[dbo].[table].[e] IS NOT NULL THEN [tempdb].[dbo].[table].[e]
        ELSE CASE WHEN [tempdb].[dbo].[table].[f] IS NOT NULL THEN [tempdb].[dbo].[table].[f]
          ELSE [tempdb].[dbo].[table].[g]
END END END END END END

So the main point here is that performance will be identical in this case, and the only issue is the T-SQL itself: it becomes needlessly verbose. And these are very simple, single-letter column names, so imagine how much longer that second query would look if you were dealing with meaningful column or variable names.
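If you want to confirm the equivalence on actual values rather than in the plan, the sketch below compares COALESCE with the nested ISNULL form over a few sample rows. It uses a VALUES derived table in place of the table used above, and the column names are just placeholders. The two forms agree here because every column has the same type (INT); with mixed types or lengths, the data type rules described earlier can make them diverge.

```sql
-- Compare the two forms row by row; via_coalesce and via_isnull should always match.
SELECT a, b, c,
       via_coalesce = COALESCE(a, b, c),
       via_isnull   = ISNULL(a, ISNULL(b, c))
FROM (VALUES (NULL, NULL, 3),
             (NULL, 2,    3),
             (1,    2,    3),
             (NULL, NULL, NULL)) AS v (a, b, c);
```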

COALESCE and ISNULL perform about the same (in most cases) in SQL Server

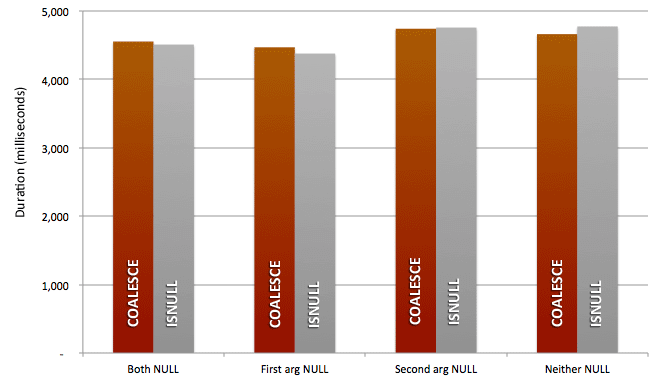

Different people have run different tests comparing ISNULL and COALESCE, and have come up with surprisingly different results. I thought I would introduce a new test based on SQL Server 2012 to see if my results show anything different. So I created a simple test with two variables, and tested the speed of COALESCE and ISNULL in four scenarios: (1) both arguments NULL; (2) first argument NULL; (3) second argument NULL; and, (4) neither argument NULL. I simply assigned the result of COALESCE or ISNULL to another variable, in a loop, 500,000 times, and measured the duration of each loop in milliseconds. This was on SQL Server 2012, so I was able to use combined declaration / assignment and a more precise data type than DATETIME:

DBCC DROPCLEANBUFFERS; DECLARE @a VARCHAR(5), -- = 'str_a', -- this line changed per test @b VARCHAR(5), -- = 'str_b', -- this line changed per test @v VARCHAR(5), @x INT = 0, @time DATETIME2(7) = SYSDATETIME(); WHILE @x <= 500000 BEGIN SET @v = COALESCE(@a, @b); --ISNULL --this line changed per test SET @x += 1; END SELECT DATEDIFF(MILLISECOND, @time, SYSDATETIME());

I ran each test 10 times, recorded the duration in milliseconds, and then averaged the results:

This demonstrates that, at least when we're talking about evaluating constants (and here I only evaluated two possibilities), the difference between COALESCE and ISNULL is not worth worrying about.

Where performance can play an important role, and hopefully this scenario is uncommon, is when the result is not a constant, but rather a query of some sort. Consider the following:

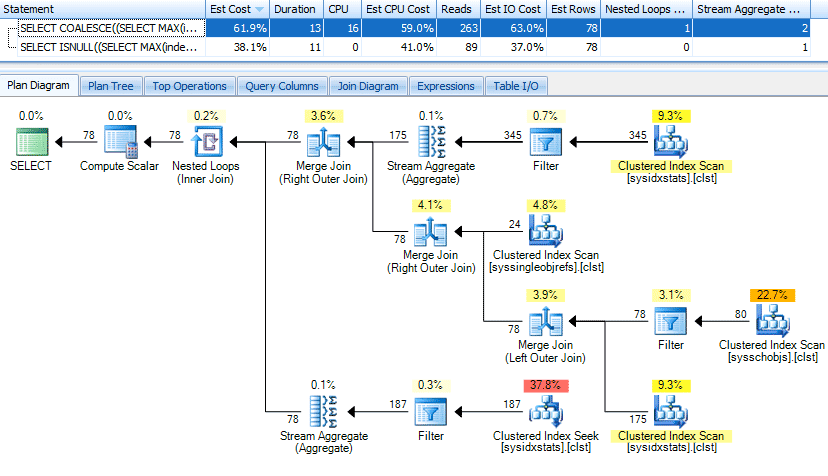

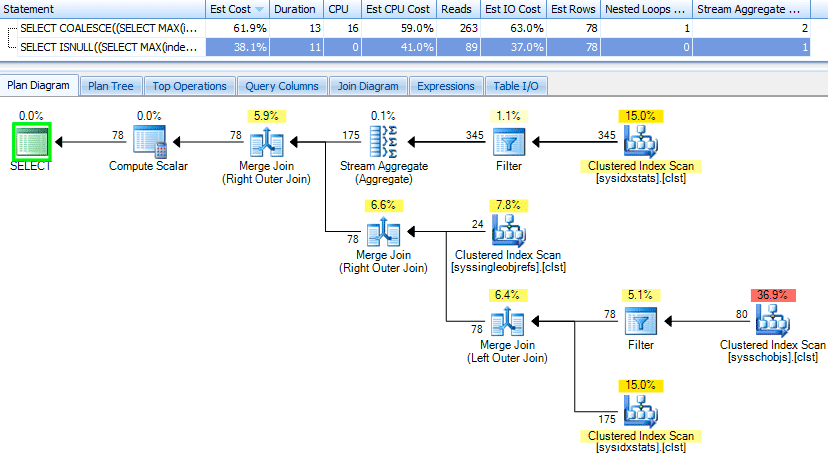

SELECT COALESCE((SELECT MAX(index_id) FROM sys.indexes WHERE [object_id] = t.[object_id]), 0) FROM sys.tables AS t; SELECT ISNULL((SELECT MAX(index_id) FROM sys.indexes WHERE [object_id] = t.[object_id]), 0) FROM sys.tables AS t;

If you look at the execution plans (with some help from SQL Sentry Plan Explorer), the plan for COALESCE is slightly more complex, most noticeably with an additional Stream Aggregate operator and a higher number of reads. The plan for COALESCE:

And the plan for ISNULL:

The COALESCE plan is actually evaluated as something like:

SELECT CASE
  WHEN (SELECT MAX(index_id) FROM sys.indexes WHERE [object_id] = t.[object_id]) IS NOT NULL
  THEN (SELECT MAX(index_id) FROM sys.indexes WHERE [object_id] = t.[object_id])
  ELSE 0
END
FROM sys.tables AS t;

In other words, it evaluates at least part of the subquery twice. To me, this is a bit like counting the rows in a table to determine whether the count is greater than zero and then, if it is, computing the count again. ISNULL, on the other hand, somehow has the smarts to evaluate the subquery only once. To be honest, I think this is an edge case, but it seems to come up in every discussion that involves the two functions.

If you are writing complex expressions using ISNULL, COALESCE, or CASE where the output is either a query or a call to a user-defined function, it is important to test all of the variants to be sure that performance will be what you expect. If your outputs are simple constants, expressions, or columns, the performance difference is almost certainly going to be negligible. But if every last nanosecond is important, the only way to know for sure which will be faster is to test for yourself, on your hardware, against your schema and data.
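One more variant worth including in that testing, when the subquery is the expensive part, is to compute it once in a derived table (here via OUTER APPLY) and apply COALESCE to the resulting column. This is a sketch, not a guaranteed fix: whether the optimizer then evaluates the aggregate only once is something to confirm in the actual execution plan on your own system.

```sql
-- Compute MAX(index_id) once per table in the APPLY, then default it with COALESCE.
SELECT t.name,
       max_index_id = COALESCE(x.max_index_id, 0)
FROM sys.tables AS t
OUTER APPLY
(
  SELECT max_index_id = MAX(i.index_id)
  FROM sys.indexes AS i
  WHERE i.[object_id] = t.[object_id]
) AS x;
```

The results should match the ISNULL and COALESCE subquery versions above; only the plan shape is expected to differ.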

ISNULL is not consistent across Microsoft products/languages

ISNULL can be confusing for developers coming from other languages, since in T-SQL it returns a context-sensitive data type based on exactly two inputs, while in MS Access, for example, it is a function that always returns a Boolean based on exactly one input. In some languages, you can say:

IF ISNULL(something) -- do something

In SQL Server, you have to compare the result to something, since there are no Boolean types. So you have to write the same logic in one of the following ways:

IF something IS NULL
  -- do something
-- or
IF ISNULL(something, NULL) IS NULL
  -- do something
-- or
IF ISNULL(something, '') = ''
  -- do something

Of course, you have to do the same thing with COALESCE, but at least its behavior doesn't change depending on where you're using it. Now, you could also argue the other way, and I'm trying hard not to be biased against ISNULL here: since COALESCE isn't available in MS Access and some other languages, developers coming from them may find it confusing to have to learn about COALESCE once they realize that ISNULL does not work the same way.
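The three T-SQL forms shown above are not quite interchangeable: the last one, ISNULL(something, '') = '', is also true when the value is an empty string rather than NULL. A minimal sketch, with a hypothetical variable @something standing in for the expression being tested:

```sql
DECLARE @something VARCHAR(10) = '';  -- an empty string, not NULL

SELECT is_null_check = CASE WHEN @something IS NULL THEN 1 ELSE 0 END,               -- 0
       isnull_null   = CASE WHEN ISNULL(@something, NULL) IS NULL THEN 1 ELSE 0 END, -- 0
       isnull_empty  = CASE WHEN ISNULL(@something, '') = '' THEN 1 ELSE 0 END;      -- 1
```

If empty strings and NULLs must be treated differently, IS NULL is the form that says exactly what it means.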

COALESCE is ANSI standard

COALESCE is part of the ANSI SQL standard, and ISNULL is not. Adhering to the standard is not a top priority for me personally; I will use proprietary features if there are performance gains to take advantage of outside of the strict standard (e.g., a filtered index), if there isn't an equivalent in the standard (e.g., GETUTCDATE()), or if the current implementation doesn't quite match the functionality and/or performance of the standard (again, a filtered index can be used to enforce a true unique constraint that ignores NULLs). But when there is nothing to be gained from using proprietary functionality or syntax, I will lean toward following the standard.
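To illustrate the filtered index point: a UNIQUE constraint in SQL Server allows only one NULL, whereas a unique index filtered on IS NOT NULL enforces uniqueness only among non-NULL values, which is closer to how the standard treats NULLs in unique constraints. The table dbo.FilteredIndexDemo and its columns are hypothetical; the sketch assumes SQL Server 2016 or later (for DROP TABLE IF EXISTS) and the default SET options that filtered indexes require.

```sql
-- Hypothetical table: TaxID is optional, but must be unique when present.
DROP TABLE IF EXISTS dbo.FilteredIndexDemo;
CREATE TABLE dbo.FilteredIndexDemo
(
  CustomerID INT PRIMARY KEY,
  TaxID      VARCHAR(20) NULL
);

-- Uniqueness is enforced only for rows where TaxID is not NULL.
CREATE UNIQUE INDEX UX_FilteredIndexDemo_TaxID
  ON dbo.FilteredIndexDemo (TaxID)
  WHERE TaxID IS NOT NULL;

-- Multiple NULLs are allowed; a duplicate non-NULL TaxID would still be rejected.
INSERT dbo.FilteredIndexDemo (CustomerID, TaxID)
VALUES (1, NULL), (2, NULL), (3, 'A-100');
```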

SQL Server 2022 Improvements

COALESCE and ISNULL are sometimes used to handle optional parameters, comparing the input (or some token date) with the value in the table (or the same token date). One common pattern looks like this:

DECLARE @d datetime = NULL;
SELECT COUNT(*) FROM dbo.Posts
WHERE COALESCE(CreationDate, '19000101') = COALESCE(@d, '19000101');

The underlying problem leading to this complexity is that you can't use equality or inequality checks to compare anything to NULL (including comparing two NULLs): those checks always return unknown, which a WHERE clause treats the same as false.

SQL Server 2022 adds a new form of predicate, IS [NOT] DISTINCT FROM, that bypasses the problem and considers NULLs equal. Now, in SQL Server 2022, we can simplify and eliminate those token values by using this new predicate form:

DECLARE @d datetime = NULL;
SELECT COUNT(*) FROM dbo.Posts
WHERE CreationDate IS NOT DISTINCT FROM @d;
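The two predicates are not perfectly equivalent when the token value can actually occur in the data: with the COALESCE pattern, a row whose CreationDate really is 1900-01-01 also matches a NULL @d. The sketch below uses a table variable, @Posts, as a stand-in for dbo.Posts to show the difference; it requires SQL Server 2022 or later for IS NOT DISTINCT FROM.

```sql
-- Stand-in for dbo.Posts: one real date, one row that happens to hold the token date, one NULL.
DECLARE @Posts TABLE (CreationDate DATETIME NULL);
INSERT @Posts (CreationDate)
VALUES ('20220101'), ('19000101'), (NULL);

DECLARE @d DATETIME = NULL;

SELECT
  token_pattern = (SELECT COUNT(*) FROM @Posts
                   WHERE COALESCE(CreationDate, '19000101') = COALESCE(@d, '19000101')), -- 2: NULL row and the 1900-01-01 row
  distinct_from = (SELECT COUNT(*) FROM @Posts
                   WHERE CreationDate IS NOT DISTINCT FROM @d);                        -- 1: only the NULL row
```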

Another pattern is to manually check if either side is NULL before making any comparisons, e.g.:

WHERE CreationDate <> @d
   OR (CreationDate IS NULL AND @d IS NOT NULL)
   OR (CreationDate IS NOT NULL AND @d IS NULL);

In SQL Server 2022, the exact same logic can be accomplished like this:

WHERE CreationDate IS DISTINCT FROM @d;
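A quick way to convince yourself that the two forms agree is to evaluate both against the same rows for a NULL and a non-NULL parameter value. In this sketch, the table variables @Posts and @params are hypothetical stand-ins for dbo.Posts and @d; it again requires SQL Server 2022 or later.

```sql
DECLARE @Posts TABLE (CreationDate DATETIME NULL);
INSERT @Posts (CreationDate) VALUES ('20220101'), ('19000101'), (NULL);

-- Try the comparison with both a non-NULL and a NULL parameter value.
DECLARE @params TABLE (d DATETIME NULL);
INSERT @params (d) VALUES ('20220101'), (NULL);

SELECT p.d,
       old_form = SUM(CASE WHEN po.CreationDate <> p.d
                             OR (po.CreationDate IS NULL AND p.d IS NOT NULL)
                             OR (po.CreationDate IS NOT NULL AND p.d IS NULL)
                           THEN 1 ELSE 0 END),
       new_form = SUM(CASE WHEN po.CreationDate IS DISTINCT FROM p.d
                           THEN 1 ELSE 0 END)
FROM @params AS p
CROSS JOIN @Posts AS po
GROUP BY p.d;  -- old_form and new_form should match on every row
```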

This improvement does not render COALESCE or ISNULL obsolete, but it may help clean up some of your more convoluted code samples.

Conclusion

Developers should be well aware of the different programmability characteristics of COALESCE and ISNULL, and should be careful not to draw general conclusions about performance from hearsay or from isolated observations.

Personally, I always use COALESCE, both because it is compliant with the SQL standard and because it supports more than two arguments. Also, I have yet to write a query that uses an atomic subquery as one of the possible outcomes of CASE or COALESCE, so the obscure scenario where performance can matter has not been a concern to date.

Next Steps

- Take an inventory of your T-SQL codebase to see if you are using one or both functions consistently.

- Test some of those scenarios to see if using the other function will work better (particularly cases where the result is a subquery or function call).

- Review the following tips and other resources:

About the author

Aaron Bertrand (@AaronBertrand) is a passionate technologist with industry experience dating back to Classic ASP and SQL Server 6.5. He is editor-in-chief of the performance-related blog, SQLPerformance.com, and also blogs at sqlblog.org.

This author pledges the content of this article is based on professional experience and not AI generated.


Article Last Updated: 2022-10-03