By: Tim Smith | Comments | Related: > PowerShell

Problem

We're looking for techniques to debug our PowerShell scripts, which use values from configuration tables or files, as variables in our script. What are some examples of creating meaningful output when testing so that we can identify if the values being tested are correct without needing to look through each table or file so we can compare?

Solution

When we're reading information and executing an action based off that information, such as reading information from a table and using that information to complete tasks, we may want to validate what that information is in testing. In this tip, we'll look at a technique that we can use for producing the information we read, whether from a file, table, API, or another source, on the screen, in a file, or in output for testing purposes.

PowerShell Output

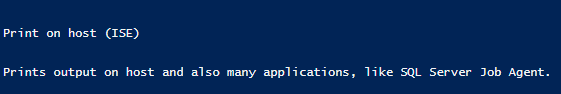

Open PowerShell ISE and run the below code (the image should be similar):

[Environment]::NewLine Write-Host "Print on host (ISE)" [Environment]::NewLine Write-Output "Prints output on host and also many applications, like SQL Server Job Agent." [Environment]::NewLine

There are two options in PowerShell to output comments or capture variables. Both options are valuable to know because in some applications, using Write-Host may not output anything so you can use the alternative Write-Output. If you use PowerShell through the command line or ISE, both will output information.

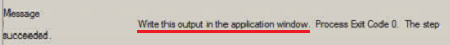

PowerShell Output from SQL Server Agent

If you run the code snippet below in SQL Server Job Agent, the Write-Host will fail because it is not recognized, but you can remove it and execute the same job with the Write-Output and it will pass with the output as expected. Some applications may work in a similar manner with PowerShell - they will output only with one and not the other - it's helpful to know multiple routes to output data or information when debugging. Create a test job in SQL Server Job Agent with the below code, you should see something similar to the below image from the SQL Server Job Agent history:

Write-Output "Write this output in the application window."

Save PowerShell Commands to a Log File

When debugging or tracking commands called in PowerShell, we may want to log what code is executed with and without the variable names replaced (in rare situations we may want both and we'll look at examples with either). As an example using this tip, I might want to log all the variables in the script being used when it's called (see final example below this), if each of those variables change based on a configuration file, table, etc. If several values are permanently set - like an example where the file location, server and database are always the same, I may only want to track the changing values. If we're using a later edition of PowerShell, we can use the debugger, but if we want to keep a log of some commands - especially dynamic commands, we can save these commands with their variables to a file. In the next few examples, we'll look at saving an add content command to a file. In the next two code snippets, we do this in PowerShell ISE by saving the executed code to a log file. In the first snippet, we do not replace the variable. In the second snippet, we replace the variable:

Snippet 1:

$content = "New content"

if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }

Add-Content "C:\ETLFiles\file.txt" $content

[string]$logcommand1 = 'Add-Content "C:\ETLFiles\file.txt" $content'

Add-Content "C:\ETLFiles\log.txt" $logcommand1

Add-Content "C:\ETLFiles\log.txt" ([Environment]::NewLine)

Snippet 2:

$content = "New content"

if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }

Add-Content "C:\ETLFiles\file.txt" $content

[string]$logcommand1 = 'Add-Content "C:\ETLFiles\file.txt" "{0}"' -f $content

Add-Content "C:\ETLFiles\log.txt" $logcommand1

Add-Content "C:\ETLFiles\log.txt" ([Environment]::NewLine)

After I execute each, the log file's output is:

Add-Content "C:\ETLFiles\file.txt" $content Add-Content "C:\ETLFiles\file.txt" "New content"

If I want to stamp the date, so that I can search for a history of commands called:

$content = "New content"

if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }

Add-Content "C:\ETLFiles\file.txt" $content

[DateTime]$lognow = [DateTime]::Now

[string]$logcommand1 = 'Add-Content "C:\ETLFiles\file.txt" "{0}"' -f $content + " | " + $lognow

Add-Content "C:\ETLFiles\log.txt" $logcommand1

Add-Content "C:\ETLFiles\log.txt" ([Environment]::NewLine)Using the example from the other tip:

[string]$logfiledetails = $location + $file + $extension

[string]$logconnectionstring = 'Data Source={0};Database={1};integrated security=true' -f $server,$database

### Save to a log file:

Add-Content "C:\files\log.txt" $logfiledetails

Add-Content "C:\files\log.txt" $logconnectionstringReplacing the variable with the actual value for tracking an issue is generally more helpful, provided the value isn't sensitive (like a password or private key). With later editions of PowerShell, we can use the debugger with breakpoints, so for a one-time debugging situation, we may want to use this instead of saving details to a file. Developers will need to use their intuition as to where they want to keep records of script calls and where they don't - I generally prefer some commands to be saved if those commands are using a configuration table without sensitive data. For an example where I wouldn't log information:

Start-Service $service

Even if this script failed, this script lacks complexity and troubleshooting would be very quick. A good technique to use is how long does the troubleshooting take? These may be scripts where you want to add a log of what commands were called when the script executed. This shows a simple example of how we can troubleshoot what functions are being called with what variables exist in their place, while also seeing what functions are being called when we also want to see the variable's value, if this is the route developers need to use.

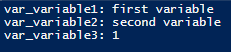

Output all PowerShell Variables

While I would seldom use this technique, it can be helpful to know how to output all variables. If we want to see the output of all our variables (in this case, in PowerShell ISE's window) and we've named our variables in a manner that can be filtered, one way we can do this is to retrieve all (or some of) the variables, filter the variables we're looking for (in this case by starting name), and return it on screen. We can also use this for some variables that may change during the script - and this is for validation of these variables. Like the above code, we could add this to a file for debugging purposes if we needed:

$var_variable1 = "first variable"

$var_variable2 = "second variable"

$var_variable3 = 1

foreach ($var in Get-Variable)

{

if ($var.Name -like "var_*")

{

$write = $var.name + ": " + $var.Value

Write-Host $write

}

}

The above code returns the below:

Combining this by saving the output to a file with the above code that saves the scripts executed, we get:

$var_content = "New content"

if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }

Add-Content "C:\ETLFiles\file.txt" $var_content

[string]$logcommand1 = 'if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }'

[string]$logcommand2 = 'Add-Content "C:\ETLFiles\file.txt" $var_content'

Add-Content "C:\ETLFiles\log.txt" $logcommand1

Add-Content "C:\ETLFiles\log.txt" $logcommand2

Add-Content "C:\ETLFiles\log.txt" ([Environment]::NewLine)

Add-Content "C:\ETLFiles\log.txt" "Variables Used:"

foreach ($var in Get-Variable)

{

if ($var.Name -like "var_*")

{

$write = $var.name + ": " + $var.Value

Add-Content "C:\ETLFiles\log.txt" $write

}

}

Which outputs the below in the log file:

if (!(Test-Path "C:\ETLFiles\file.txt")) { New-Item "C:\ETLFiles\file.txt" -ItemType File }

Add-Content "C:\ETLFiles\file.txt" $var_content

Variables Used:

var_content: New content

Since I've already outputted the values of the "logcommands", I don't name them in a manner which would return them. This provides a few examples of how you can save what commands are executed and what the current variables are when debugging.

Next Steps

- One hint about debugging is that often writing out meaningful logging for troubleshooting when you're experiencing an issue will solve it. By repeating variables or functions, you'll often catch the mistake.

- Using a file or printing out the details on screen (if applicable) can help quickly identify what's not matching. Sometimes it's as simple as forgetting to rename a variable, or add the right one.

- When passing values from an API or table to variables in a script, this is one helpful way to identify if those values are incorrect for the script.

About the author

Tim Smith works as a DBA and developer and also teaches Automating ETL on Udemy.

Tim Smith works as a DBA and developer and also teaches Automating ETL on Udemy.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips