By: Aaron Bertrand | Comments (10) | Related: > SQL Server 2019

Problem

If you've ever waited for a SQL Server database to recover after an unexpected shutdown or failover, you know that the more transactions you have, and the larger they are, the longer it can take for your SQL Server database to become available. In any kind of crash or service interruption, the process of getting the database back online and in a consistent state can be quite complicated under the covers. This can be amplified by the size and complexity of transactions in flight at the time of the interruption, meaning you are waiting longer with no recourse.

Solution

SQL Server 2019 provides a way to limit the impact of large or long-running transactions on the entire process of database recovery with accelerated database recovery.

Summary of the SQL Server Recovery Process

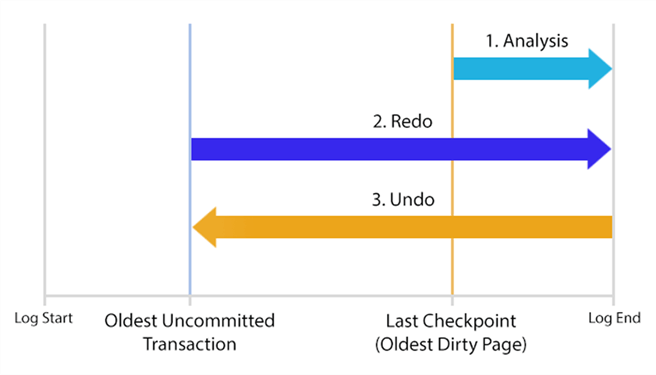

As a quick refresher on the recovery process (much more here and here), there are three phases to recovery:

- Analysis – Reads the transaction log forward from

the last checkpoint, determining the oldest dirty page and the state of all

transactions when the service stopped. All in-flight transactions will fall

into one of two categories; they were either:

- committed to the log but not written to the database, and need to be "redone"; or,

- uncommitted – or already in rollback – and need to be "undone".

- Redo – Starting from the oldest uncommitted transaction, reads the log forward and commits any transactions that were committed to the log, but not to the database.

- Undo – Starting from the end of the log, reads backward and rolls back any transactions that were still open and/or weren't committed to the log.

All of these phases must be complete before the database becomes available, except in Enterprise Edition, where fast recovery is possible. In fast recovery, the database can come online after redo is complete, allowing access to all of the data not protected by the undo phase.

A quick illustration of the process:

The problem with this process, as mentioned above, is that the recovery time is sensitive to the longest-running transactions that must be applied or rolled back. It is necessary to scan potentially significant portions of the log two or even three times, starting from the oldest uncommitted transaction. These factors can create substantial delays in getting a critical database back online.

Enter Accelerated Database Recovery in SQL Server 2019

This new feature in SQL Server 2019 aims to get your databases online faster by eliminating some of the bottlenecks inherent in the current recovery process. This is not just for crash scenarios, but also for cluster failovers and even redo operations within Availability Groups.

One of the key components is the persisted version store, similar to the version store used by Read Committed Snapshot Isolation (RCSI), but stored in the user database rather than tempdb. When a row changes, the prior version of the row is kept either in-row (if it fits; either a diff or a full row, up to 200 bytes) or off-row (using the familiar 14-byte pointer). This allows the previous image of a row to be generated from the current version.

There is a new concept called a logical revert, which is a row-level undo using the persisted version store. When a transaction aborts, the previous version is made available through a pointer as opposed to physically changing the in-row value. This allows all locks to release immediately instead of waiting for the change to complete. Put another way, if a transaction rolls back, logical revert allows a second transaction to use the persisted version store for the same row immediately, when it would otherwise be blocked waiting for the first transaction to finish rolling back.

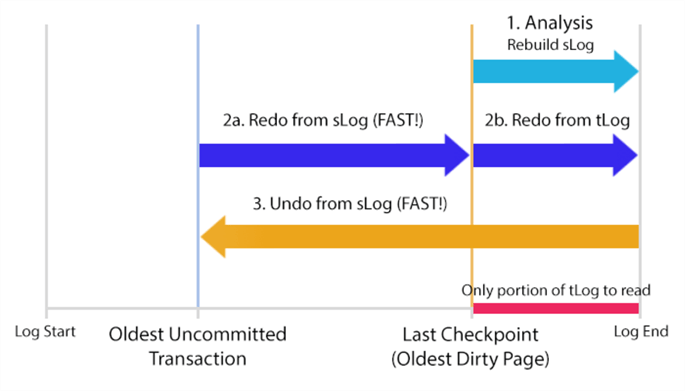

An important component is a new in-memory log stream, called the sLog for "secondary log stream", though I just call it the streaming log. The sLog records low-volume and non-versioned operations – such as acquiring locks for DDL or bulk operations. This helps redo and undo operations run faster because they only have to process non-versioned operations. Since the contents are persisted to the transaction log on checkpoint, this enables aggressive log truncation. Effectively, the only part of the physical transaction log that must be read during a redo or undo operation is the portion since the last checkpoint.

Finally, there is a new asynchronous cleaner process, which iterates through all relevant databases (similar to ghost cleanup) and deallocates unneeded page versions. It runs every minute, but you can call it manually using sys.sp_persistent_version_cleanup should you need to run it more often for a specific database. With more databases and more volume, much like database mirroring, you may want to be careful about how many databases use this functionality. You will be able to use Extended Events to monitor the cleaner activity, and can adjust the timing and even maxdop of the process.

A very quick illustration of the new recovery process (minus the cleaner):

For a lot more depth on this process, please see this Microsoft article. Now that the feature is available outside of Azure SQL Database, surely there will be further articles and deep dives handling all of the internals.

SQL Server 2019 Accelerated Database Recovery in Action

Theory is nice, and that all sounds good, but you're probably wondering how well it works in practice. In my initial testing with SQL Server 2019 CTP 2.3, very well indeed. (This functionality was available earlier, but only under an undocumented trace flag.)

I created two databases, one with accelerated database recovery enabled:

CREATE DATABASE OldStyle;

GO CREATE DATABASE NewStyle;

ALTER DATABASE NewStyle SET ACCELERATED_DATABASE_RECOVERY = ON;

GO

I then set up a query for each database that I knew would take longer than a few minutes, with the intention of killing both, and then restarting the instance to observe the recovery process.

In the first window, at about 15:45, I ran this code:

WAITFOR TIME '15:46:30'; USE OldStyle;

GO DROP TABLE IF EXISTS dbo.fl1, dbo.fl2, dbo.fl3;

SELECT s2.* INTO dbo.fl1 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

SELECT s2.* INTO dbo.fl2 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

SELECT s2.* INTO dbo.fl3 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

A few seconds later, in a second window, I ran this code:

WAITFOR TIME '15:46:30'; USE NewStyle;

GO DROP TABLE IF EXISTS dbo.fl1, dbo.fl2, dbo.fl3;

SELECT s2.* INTO dbo.fl1 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

SELECT s2.* INTO dbo.fl2 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

SELECT s2.* INTO dbo.fl3 FROM sys.all_columns AS s1 CROSS JOIN sys.all_objects AS s2;

In a third window (which I called Killer.sql, for fun), I executed this code, to simulate a crash or failover after two and a half minutes:

WAITFOR DELAY '00:02:30';

SHUTDOWN WITH NOWAIT;

Note: Please do NOT do this in production (or at least call it CareerKiller.sql).

As soon as I did that, killer.sql showed this output:

Server shut down by NOWAIT request from login FONZIE\aaronbertrand.

SQL Server is terminating this process.

Both of the other windows were killed too, but they had different output. One said:

Msg 596, Level 21, State 1, Line 3

Cannot continue the execution because the session is in the kill state.

Msg 0, Level 20, State 0, Line 3

A severe error occurred on the current command. The results, if any, should be discarded.

The other said:

Msg 109, Level 20, State 0, Line 3

A transport-level error has occurred when receiving results from the server.

(provider: Shared Memory Provider, error: 0 - The pipe has been ended.)

I don't think there is anything particularly interesting about the fact that these two sessions had different error messages; likely just the way Management Studio handled the death of the server. Something to perhaps investigate another time; for now, I had an instance to start up, and an error log to review!

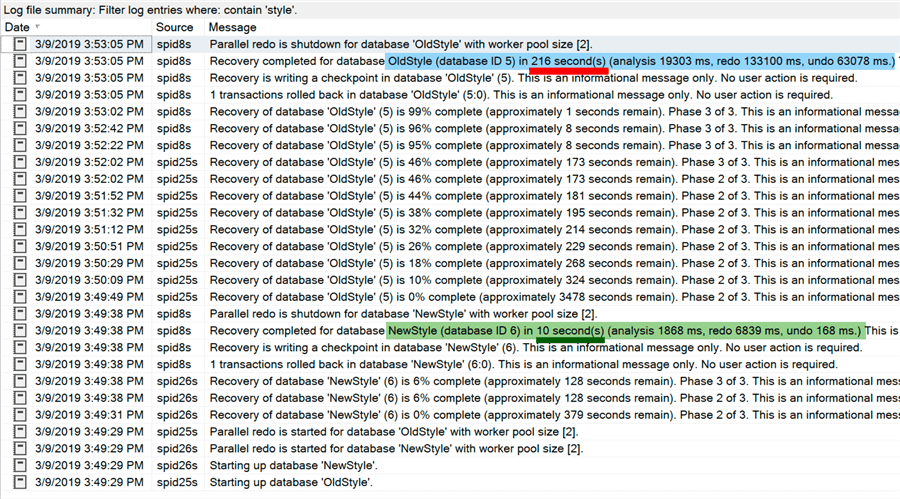

I went to the Configuration Manager and started my SQL Server instance. Using SSMS was noticeably sluggish as I re-established my connection and started navigating into Object Explorer – as you might expect when databases are recovering and rolling back rather large transactions. The differences in the error log, when both databases were finally available, were astounding:

That's a big difference: the database using Accelerated Database Recovery was fully online after just 10 seconds. You can see that it spent under 2 seconds in analysis, 7 seconds in redo, and a fraction of a second in undo. The other database, however, took over 3 minutes to come online; this is longer than the progress of the only in-flight transaction at the time of the crash. It took almost 20 seconds to perform analysis, over 130 seconds on redo, and 63 seconds on undo. It took 20X longer for essentially the same database to be restored to the same state after the same sequence of events. (You can dig much further into what is happening during these phases using Extended Events – and there should be new diagnostics available that distinguish between sLog and tLog activity.)

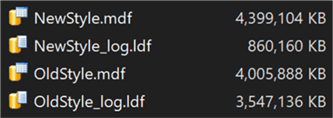

The aggressive log truncation now in effect also shows some impact here. Examining the data and log files in File Explorer shows that the new architecture exhibits substantially less log file growth:

The extra versioning information leads to about a 10% increase in the data file size, but roughly a 75% reduction in log file size. Forget about the edge case where recovery is a necessity and databases come back online more quickly; this is a much more observable benefit, all the time, for systems of all sizes.

Next Steps

This was just an initial peek at what these changes might mean for you. I still have some research to do in order to investigate all of the other effects, such as data file space, fragmentation, less thrashing in tempdb, and how all of it works in a much more highly concurrent environment. It may be that, for certain workloads, the trade-offs are not beneficial – this is part of the reason it isn't simply enabled by default outside of Azure SQL Database. But like other settings, such as Optimize for Ad Hoc Workloads, I think this is one that will usually be one that you'll want to turn on. In a future tip, I'll look at how you can monitor the various impacts of Accelerated Database Recovery.

In the meantime, read on for related tips and other resources:

- SQL Server Recovery Models

- Selecting the SQL Server database recovery model to ensure proper backups

- Understanding SQL Server Recovery Models and Transaction Log Use

- Understanding database recovery with SQL Server 2016 Extended Events

- Accelerated Database Recovery (Microsoft SQL Docs)

- The Transaction Log (Microsoft SQL Docs)

About the author

Aaron Bertrand (@AaronBertrand) is a passionate technologist with industry experience dating back to Classic ASP and SQL Server 6.5. He is editor-in-chief of the performance-related blog, SQLPerformance.com, and also blogs at sqlblog.org.

Aaron Bertrand (@AaronBertrand) is a passionate technologist with industry experience dating back to Classic ASP and SQL Server 6.5. He is editor-in-chief of the performance-related blog, SQLPerformance.com, and also blogs at sqlblog.org.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips