Problem

In the previous tip, Auditing for Azure SQL Database, we explored the process to audit an Azure SQL Database using the Azure Portal and Azure PowerShell cmdlets. In this article we look at how you can leverage Kusto Query Language (KQL) for querying the audit data.

Solution

Kusto Query Language (KQL) is a read-only query language for processing real-time data from Azure Log Analytics, Azure Application Insights, and Azure Security Center logs. SQL Server database professionals familiar with Transact-SQL will see that KQL is similar to T-SQL with slight differences.

For example, in T-SQL we use the WHERE clause to filter records from a table as follows.

SELECT * FROM Employees WHERE firstname='John'

We can write the same query in KQL with the following syntax. Like PowerShell, it uses a pipe (|) to pass values to the next command.

Employees | where firstname == 'John'

Similarly, in T-SQL we use the ORDER BY clause to sort data in ascending or descending order as follows.

SELECT * FROM Employees WHERE firstname='John' ORDER BY empid

The equivalent KQL code is as follows.

Employees | where firstname == 'John' | order by empid

The query syntax for KQL language looks familiar, right.

Enable Audit for Azure SQL Database

In the previous tip, we configured audit logs for Azure SQL Database using Azure Storage. If you have the bulk of the audit data in Azure Storage, it might be complex to fetch the required data. You can use the sys.fn_get_audit_file() function for fetching data, but it also takes longer for a large data set. Therefore, for critical databases you should store audits in Azure Log Analytics.

To configure the Azure SQL Database Audit logs in Azure Log Analytics, login to the Azure portal using your credentials and navigate to Azure Server.

As shown below, server-level auditing is disabled. It is also disabled for all databases in the Azure server.

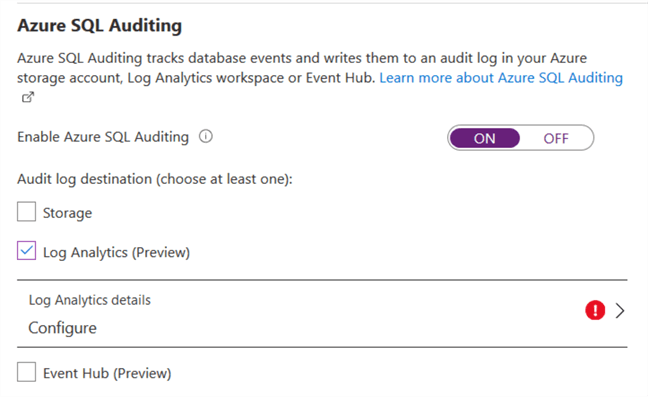

Enable the server-level auditing and put a tick on Log Analytics (Preview) as the audit log destination.

This enables the configuration option for log analytics. Click on Configure and it opens Log Analytic Workspaces.

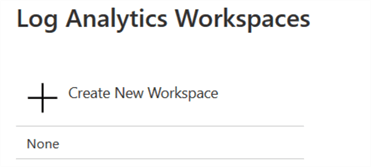

Click on the Create New Workspace and in the new workspace, enter the following values:

- Enter a name for log analytics workspace

- Select your Azure subscription

- Resource group

- Azure region

- Pricing tier

As shown below, the auditing is configured for Azure SQL Database.

Save the audit configurations for Azure SQL Database. It enables server-level auditing for the Azure SQL Database. The database auditing is still disabled because if we enable server auditing, it applies to all databases.

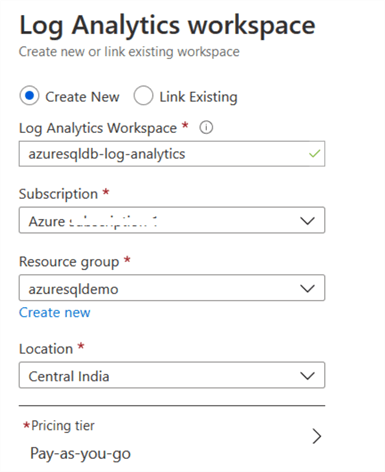

Configure the diagnostic telemetry

We need to configure the diagnostic settings for SQL Database for gathering the metrics using the Azure portal. You can configure the data for errors, blocking, deadlocks, query store, and automatic tuning in the diagnostics.

- SQL Insights: It captures Intelligent Insights performance.

- AutomaticTuning: It contains automatic tuning recommendations for your Azure SQL Database.

- QueryStoreRunTImeStatics: It captures query statistics such as CPU usage, query duration.

- QueryStoreWaitStatistics: It captures query wait statistics such as locking, CPU, memory stats.

- Errors: It contains information for errors on a database.

- DatabaseWaitStatistics: It captures information for database wait statistics.

- Timeouts: It captures timeouts on a database.

- Blocks: It captures database blocking information.

- Deadlocks: It captures deadlocks events for Azure databases.

- Basic: It contains information for DTU\CPU, failed or successful connections, storage usage etc.

- InstanceAndAppAdvanced: It captures data for TempDB data and logs usage.

You can refer to this Microsoft doc for detailed information.

Click on Add diagnostics setting. Let us enable diagnostics for errors and InstanceAndAppAdvanced. Send this data to the log analytics workspace using your subscription and log analytics workspace. Click on Save for the configuration.

Use KQL for Azure SQL database log analysis

Navigate to the Azure database and click on Logs. You get the welcome page for Log Analytics.

Click on Get Started and it opens the query editor for KQL queries. In the left-hand side, it shows a SQL database AzureDiagnostics.

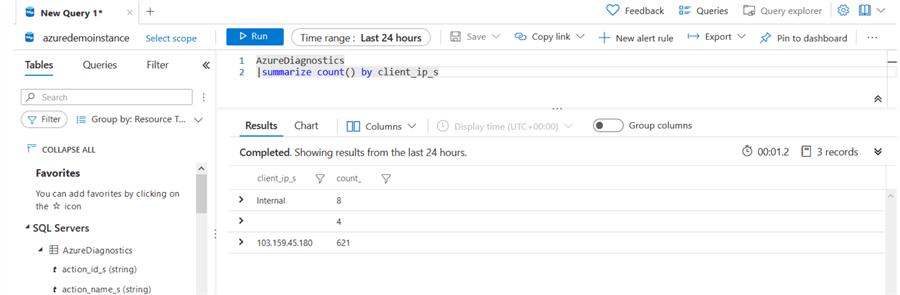

Kusto Query Language (KQL) to summarize the client IP Connections

Suppose we want to identify the client IP address and a number of connections for Azure SQL Database. In the below KQL query, we use the followings.

- Summarize function for generating an output table from the input table aggregate.

- Count() operator to return the number of records.

- It uses the client_ip_s table as the data source for data ingestion.

AzureDiagnostics |summarize count() by client_ip_s

In the KQL query output, we get an IP address and aggregate count.

KQL query for finding out login failures count by IP address

In a traditional SQL Server, we get login failure messages in the error logs. Similarly, in Azure SQL Database, we can use KQL to determine the IP address from where these connections are originating.

In the below KQL query, we have the following arguments.

- It filters the category for the SQLSecurityAuditEvents.

- The query uses LogicalServerName_s argument for filter records for my Azure SQL Server (azuredemoinstance)

- DBAF – Database Authentication Failure.

- It uses summarize and count() by function and returns login failures count for each server principal. In the below query output, it shows [sqladmin] login and its failure count as 7.

AzureDiagnostics | where Category == 'SQLSecurityAuditEvents' and LogicalServerName_s == "azuredemoinstance" | where action_id_s == 'DBAF' | summarize count() by client_ip_s, OperationName, server_principal_name_s

KQL query for listing events for Azure SQL Database

In the below KQL query, we filter records for the [labazuresql], my Azure SQL database.

AzureDiagnostics | where DatabaseName_s == "labazuresql"

By default, it gives output for last hour. You can click on the time range and select the appropriate period such as 12 hrs, 24 hrs, etc. You can expand the result set for detailed information such as the TenantId, TimeGenerated datetime, Resourceid, etc. which are all different data types.

Suppose someone executed an INSERT and SELECT statement for the Azure database. As a database administrator, you may want to get SQL statements. You can use KQL language and filter records from the statement_s table that have INSERT statements.

AzureDiagnostics | where statement_s contains "insert"

As shown below, we get the complete INSERT statement from the audit logs.

Similarly, you can fetch data from other diagnostic tables with the help of the KQL language. You can also monitor performance data such as CPU, Memory using the diagnostics configuration.

Next Steps

- Implement audit for Azure SQL Database in a storage account or Azure Log Analytics.

- You can go through Microsoft docs for KQL reference.

- Explore more on the Kusto Query Language.

Hi! I am Rajendra Gupta, Database Specialist and Architect, helping organizations implement Microsoft SQL Server, Azure, Couchbase, AWS solutions fast and efficiently, fix related issues, and Performance Tuning with over 14 years of experience.

I am the author of the book “DP-300 Administering Relational Database on Microsoft Azure.” I can be reached at: Rajendra.gupta16@gmail.com for any consulting help.

- MSSQLTips Awards:

- Author of the Year – 2022 | Author Contender – 2021/2023/2024 | Champion Award (100+ tips) – 2020