By: Rajendra Gupta | Updated: 2022-02-08 | Comments | Related: > Azure Cosmos DB

Problem

The tutorial, Introduction to the Azure Cosmos DB, helped you get familiar with Microsoft Azure Cosmos DB as a scalable NoSQL database. We used Azure Cosmos DB for Free to create a new container, database, insert JSON documents, and query data stored in the container. In this tutorial we look at how to migrate SQL relational data into Azure Cosmos DB.

Solution

The Azure Cosmos DB Data Migration tool can import data from various sources into Cosmos DB. The supported formats are outlined below:

- JSON

- CSV

- SQL

- MongoDB

- Azure Table Storage

- DynamoDB

- Azure Cosmos DB SQL API Collections

Prerequisites

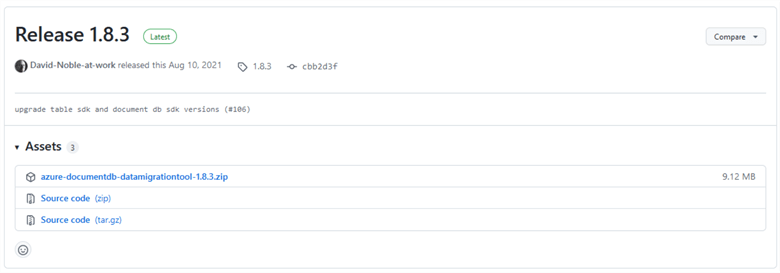

The Azure Cosmos DB Data Migration tool requires Microsoft .Net framework 4.51 or higher to run the tool. Download the executable package from GitHub.

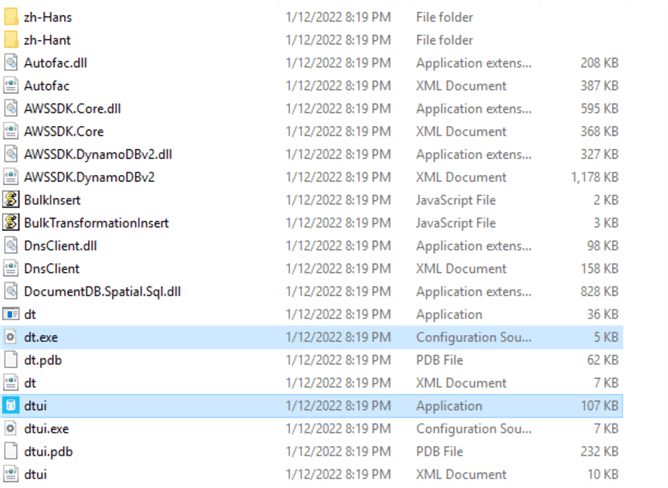

Extract the compressed data migration tool files and the folder container to access the following:

- dt.exe: Command-line version of data migration tool

- dtui.exe: Graphical user interface version of data migration tool

Migrate SQL Data into Azure Cosmos DB

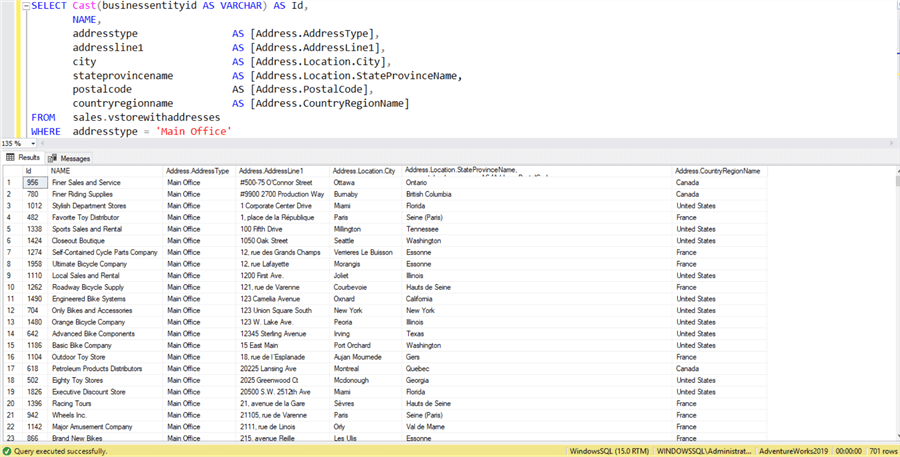

First, you need SQL relational data that you want to migrate to Azure Cosmos DB. This tip uses the [AdventureWorks2019] database and runs the following T-SQL statement that retrieves data for migration.

SELECT CAST(businessentityid AS VARCHAR) AS Id,

NAME,

addresstype AS [Address.AddressType],

addressline1 AS [Address.AddressLine1],

city AS [Address.Location.City],

stateprovincename AS [Address.Location.StateProvinceName,

postalcode AS [Address.PostalCode],

countryregionname AS [Address.CountryRegionName]

FROM sales.vstorewithaddresses

WHERE addresstype = 'Main Office'

Note: If you are doing this with production data it would make sense to tune your SQL query to retrieve relevant columns and records for optimized migration.

The query returns 701 rows for data migration as shown below.

We use the column alias such as [Address.AddressType] or [Address.Location.City]. The "." is a nesting operator to create the subdocuments. We will explore the generated JSON data later in this tutorial.

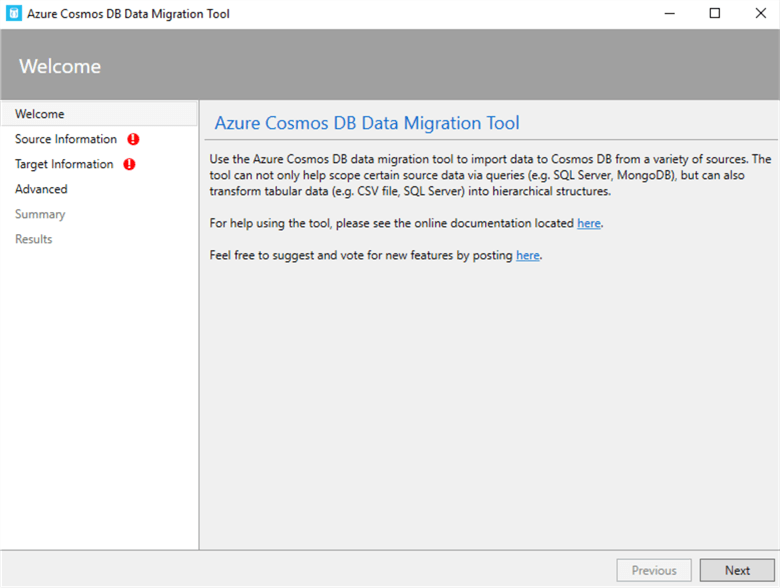

Double-click on dtui.exe to launch the Azure Cosmos DB Migration Tool. The Welcome page gives tool introductory information, an external link for documentation, and new feature voting.

Click Next.

Source Information:

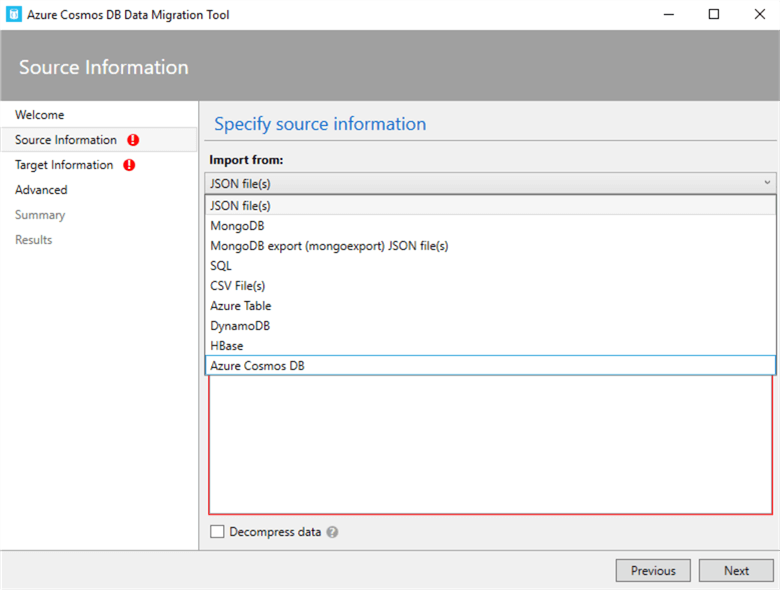

The source information page asks what type of data you want to import. It specifies JSON files, MongoDB, MongoDB export JSON file, SQL, CSV files, Azure Table, DynamoDB, HBase, and Azure Cosmos DB.

Choose SQL.

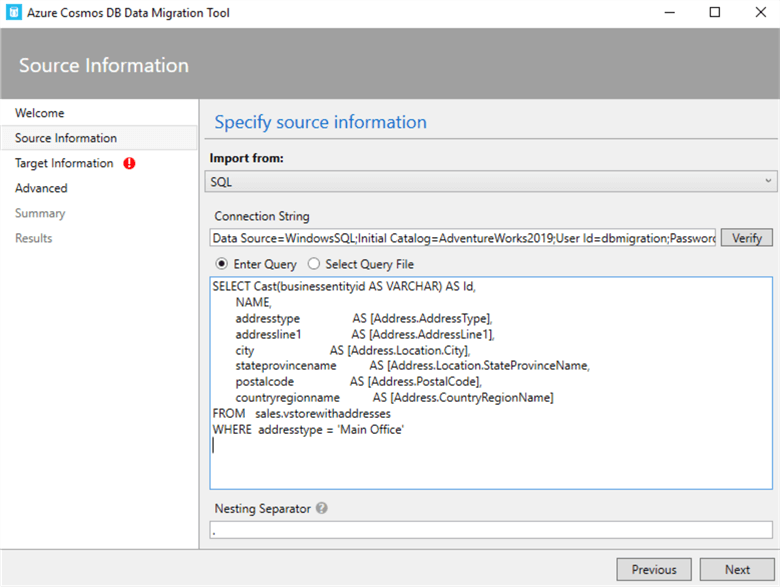

Then enter a standard SQL connection string like below with the appropriate values.

Data Source=myServerAddress;Inital Catalog=myDataBase;UserID=myUsername;Password=myPassword;

Specify your connection string with correct values and click Verify to see if the tool can connect to your SQL database. In the Enter Query section paste the query to fetch the data required for migration. You can also use a SQL file with the option "Select Query File".

The Nesting Separator is for modifying the document structure based on the specified operator. Specify "." as the nesting operator as shown below which we used above.

Click Next.

Target Information:

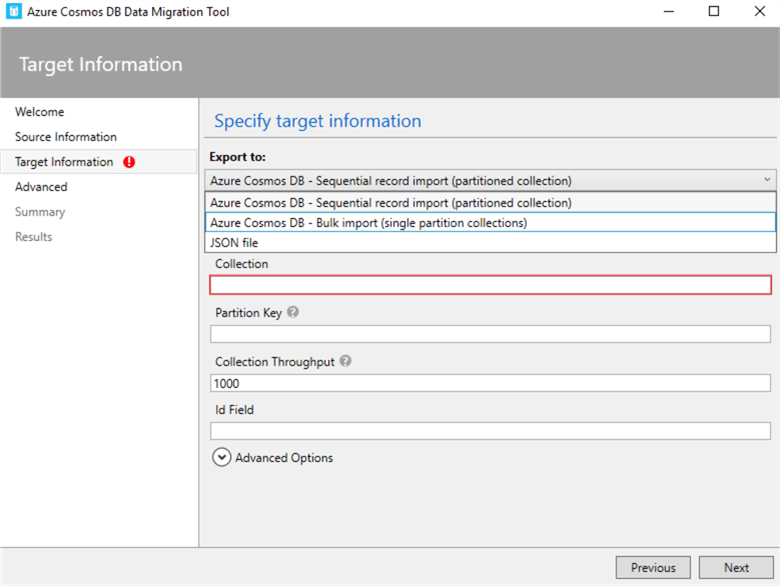

The target information page requires the following user inputs.

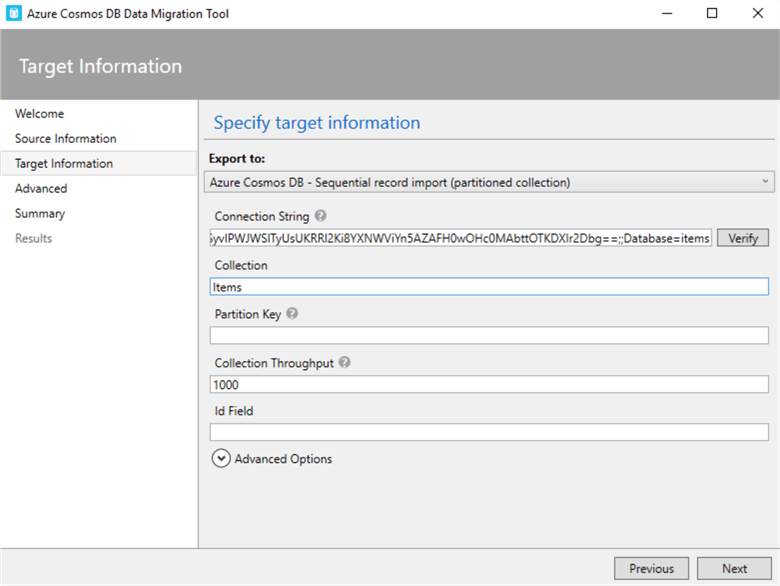

Export To:: You need to specify the export mode to the Azure Cosmos DB container using the sequential or bulk import method. The sequential method copies the records one by one. Therefore, it can migrate data to single or multiple partitions container. The bulk importer method uses a stored procedure to migrate data into single partition collections during the import operation. We will use Azure Cosmos DB – Sequential record import (partitioned collection) in this tip.

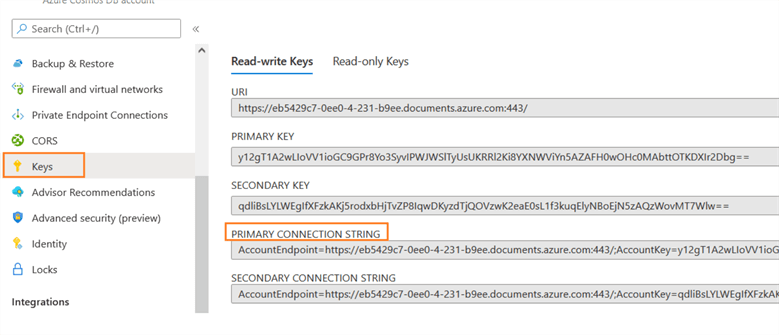

Connection String: The data migration tool requires Cosmos DB connection string in the following format.

AccountEndpoint=<CosmosDB Endpoint>;AccountKey=<CosmosDB Key>;Database=<CosmosDB Database>;

For the connection string (AccountEndpoint=<CosmosDB Endpoint>; AccountKey=<CosmosDB Key>), navigate to the Azure Cosmos DB account and copy the primary connection string from the key tab as shown below.

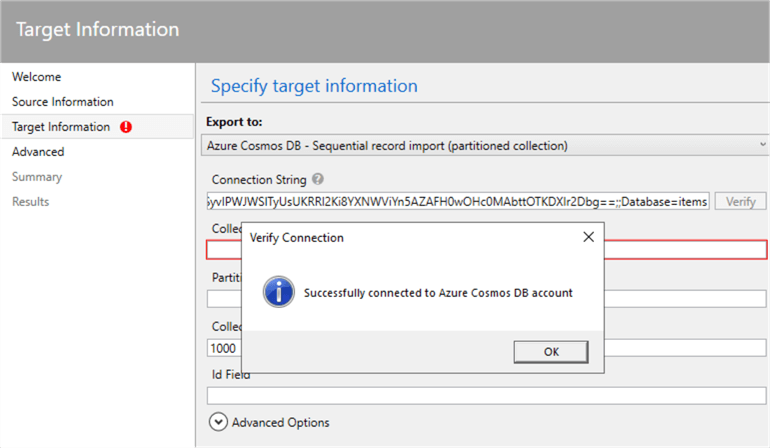

Append the database name on the right side of the primary connection string. Click Verify to validate the connection status for the Azure Cosmos DB account. As shown below, it successfully connected to the Azure Cosmos DB account.

Collection: Specify the container name in the collection field.

The target information field has additional configurations such as Partition Key, Collection Throughput, Id Field, and Advanced Options.

Advanced Options

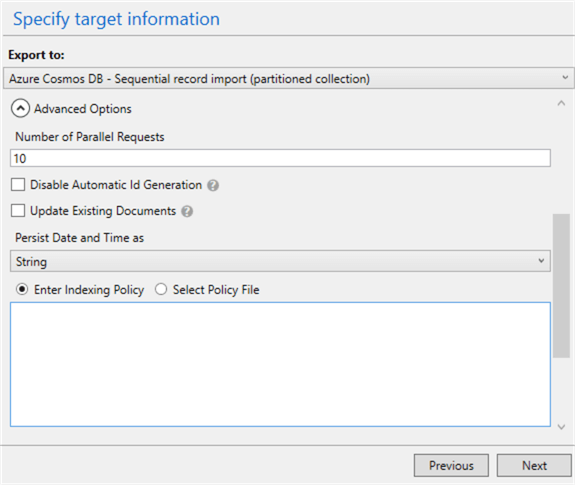

The advanced options has the following configurations.

- Number of parallel requests: The migration tool uses two parallel requests by default. You can increase the parallel requests in case the documents are small.

- Disable automatic id generation: We can select this option to disable automatic ID generation. Usually, you should select it if the document has an ID field that uniquely defines it.

- Number of retries on failure: If the data migration fails due to transient failures (network interruption), the number of retries can help to restart the process automatically. Its default value is 30.

- Retry Interval specifies the wait type between retrying connection to Cosmos DB during network interruptions.

- Connection mode: The connection mode supports DirectTcp, DirectHttps, and Gateway modes. By default, it uses DirectTcp mode for connection.

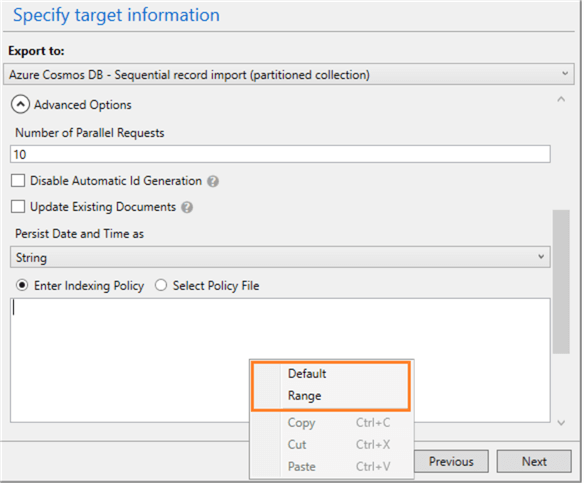

- Enter Indexing policy: The data migration tool allows to specify indexing

policy for the collections. It provides the following policy templates.

- Default: is suitable for performing equality queries against strings or using ORDER BY, range or equalities for numbers.

- Range: The range policy is suitable for using ORDER BY, range queries on numbers and strings.

We are not specifying an indexing policy, therefore the tool uses the default indexing policy.

Click Next.

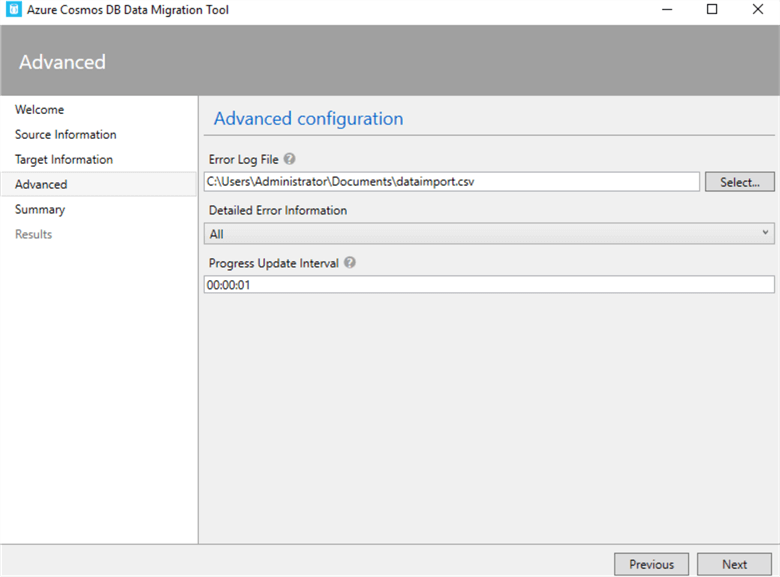

Advanced:

You can specify an error log file, control the detail in the log file, and progress update interval as shown below.

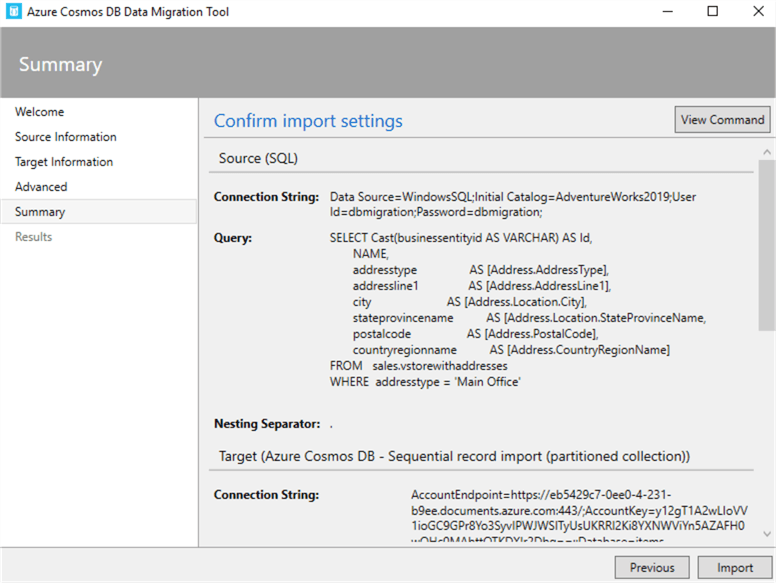

Summary:

We can review the migration summary and generate the resulting migration command.

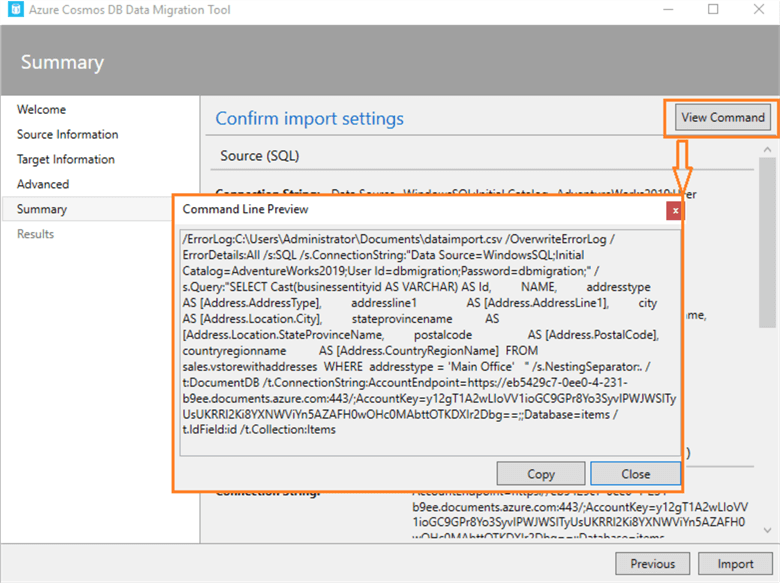

Click on the View Command button to generate the commands if we do not want to run it in graphical mode.

Once we have verified the source and target configurations, click Import.

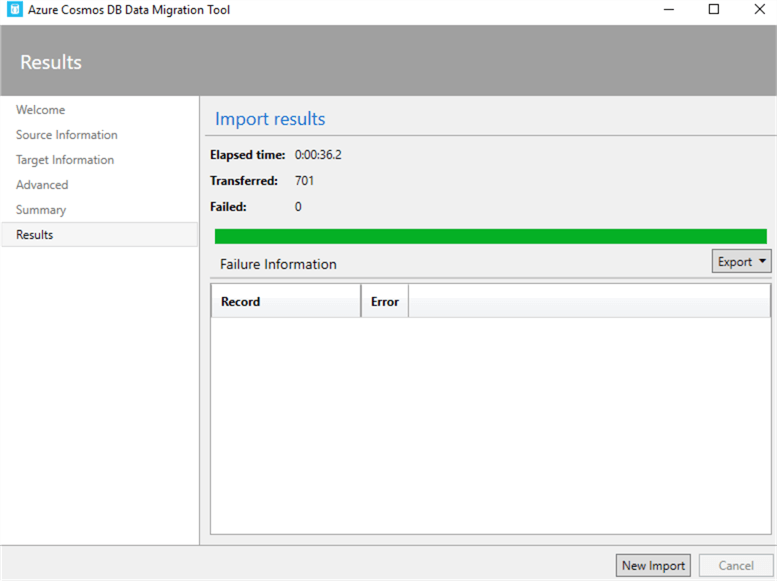

Results:

It starts the SQL data migration to Azure Cosmos DB and reports the elapsed time, transferred count, and failure information if any. The following screenshot states that it transferred 701 documents into Azure Cosmos DB without failure.

Query Imported Data

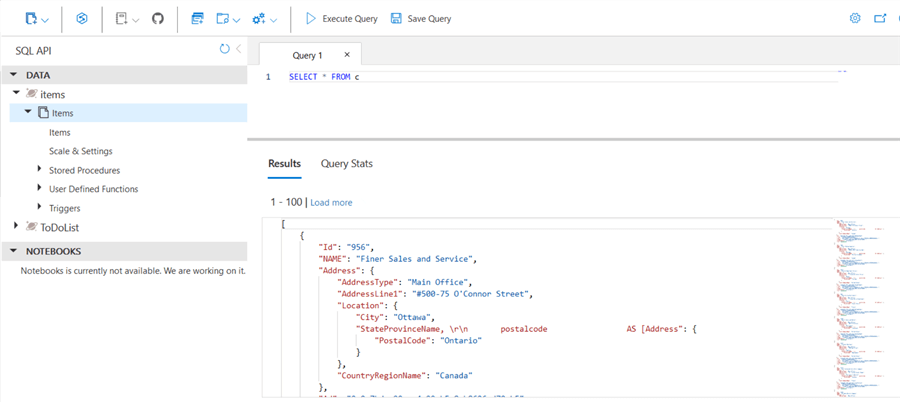

Let’s use data explorer to query the imported data into Azure Cosmos DB.

Start a new query tab and it displays the default query that retrieves all records. As shown below, the SQL data is imported into JSON format into Cosmos DB.

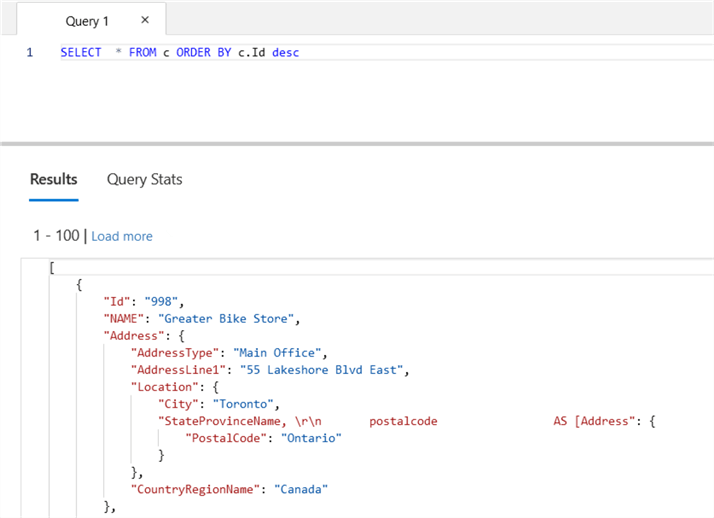

We can sort documents using an ORDER BY clause similar to the T-SQL statement.

Wrapping Up

That's it. That was a simple use case of how to import SQL data into Azure Cosmos DB.

Next Steps

- Implement an Azure Cosmos DB container and database for migrating a SQL Database into it.

- Refer to the Cosmos DB documentation for further details.

- Go through existing tips on Microsoft Azure.

- Stay tuned for more articles on Azure Cosmos DB.

About the author

Rajendra Gupta is a Consultant DBA with 14+ years of extensive experience in database administration including large critical OLAP, OLTP, Reporting and SharePoint databases.

Rajendra Gupta is a Consultant DBA with 14+ years of extensive experience in database administration including large critical OLAP, OLTP, Reporting and SharePoint databases.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2022-02-08