By: Pablo Echeverria | Updated: 2022-08-26 | Comments | Related: > SQL Server and Oracle Comparison

Problem

We want to perform sentiment analysis on data. How can we do that in Oracle 19 on Windows and SQL Server 2019? Check out this article to learn how to set up machine learning for Oracle and SQL Server as well as learn about the similarities and differences between these two database platforms.

Solution

We will perform sentiment analysis using the R tool, but note that both SQL Server and Oracle use a different version. We're not going to use Python because Oracle 19 on Windows doesn't support it, only SQL Server supports it. Also, it's worth noting that the Intel Math Kernel Library (MKL) is supported by both Oracle and SQL Server but requires a separate installation and licensing, so this won't be covered in this article.

Once everything is set up, we will analyze tweets discussed previously when we loaded them into the database, identified common words, misspellings and stemming, and when we identified common phrases and removed bias. Now we will do a better sentiment analysis on the data using Machine Learning.

Oracle

Oracle R Enterprise (ORE) is now named Oracle Machine Learning for R (OML4R), an environment for analytical processing in the R language, with the benefit of query optimization, parallel processing, and scalability of the Oracle database.

Remember, Oracle Text Search allows you to perform sentiment analysis but learning how to do it using Machine Learning will enable you to get better performance and resolve different kinds of problems, not only with text.

Due to the Oracle version being freely available to download (19.3) and we're testing it on Windows, some caveats will be explained in each step. These are not found in Oracle documentation nor My Oracle Support, and they're supposedly not required in version 19.7+ or Linux. The steps are detailed below.

Step 1

Set up the Windows Server Core 2019 container in Docker and prepare the Oracle installation described in this article. However, Oracle 19.3 Machine Learning in Windows can't work with a virtual account. You need to create a local user who will own the Oracle binaries, or you'll get this error ORA-28575 "unable to open RPC connection to external procedure agent":

vim C:\app\Oracle19c\install\response\db_install.rsp

You need to run the following instructions to create the user without administrative privileges and assign a password to it:

$password = Read-Host -AsSecureString New-LocalUser -Name MyOracle -Password $Password -AccountNeverExpires -UserMayNotChangePassword | Set-LocalUser -PasswordNeverExpires $true

The first command is to store the password in a secure variable, and the second line creates a username named MyOracle. Take note of the password, as it will be asked when you create a new service during the database creation process and if you manually start the listener. Once that is done, you can use vim to edit the db_install.rsp file and set the following variables at the bottom, replacing "YourPassword" with your own:

oracle.install.IsBuiltInAccount=false oracle.install.IsVirtualAccount=false oracle.install.OracleHomeUserName=MyOracle oracle.install.OracleHomeUserPassword=YourPassword

Then you can continue installing the software. Once installed, you need to restart your container.

Step 2

Create the database as described in this article, but instead run the following commands:

$init=@" db_name=ORCL "@ Add-Content C:\APP\ORACLE19C\DATABASE\INITORCL.ORA $init

You need to change them as below:

$content=@" db_name=ORCL local_listener='(ADDRESS = (PROTOCOL = IPC)(KEY = EXTPROC1))' java_pool_size=256M shared_pool_size=512M "@ Add-Content C:\app\Oracle19c\database\INITORCL.ORA $content

This is because OML4R uses IPC to communicate with extproc.exe to execute libraries external to Oracle, and you need more memory than the default. Continue with the database creation, but take note of your features tablespace (SYSAUX by default) and temporary tablespace (SYSTEM by default, but TEMP is the most common), as those names are required later. If you shut down your container after starting it, remember to start the database with the commands below, if your database is named ORCL:

net start OracleServiceORCL sqlplus / as sysdba startup

Step 3

Download Oracle R Distribution from this link. The filename is ORD-3.6.1-win.zip with a size of 43.7 MB. Unzip it with the command below (in my case, I saved it to my host C:\temp folder, which is mapped to my container C:\setup folder):

Expand-Archive C:\setup\ORD-3.6.1-win.zip c:\app\ORD -force

Then install it with the command below:

& c:\app\ORD\R-3.6.1-win.exe /SILENT /DIR="C:\app\ORD"

Wait a few seconds and confirm it is installed by running the following command:

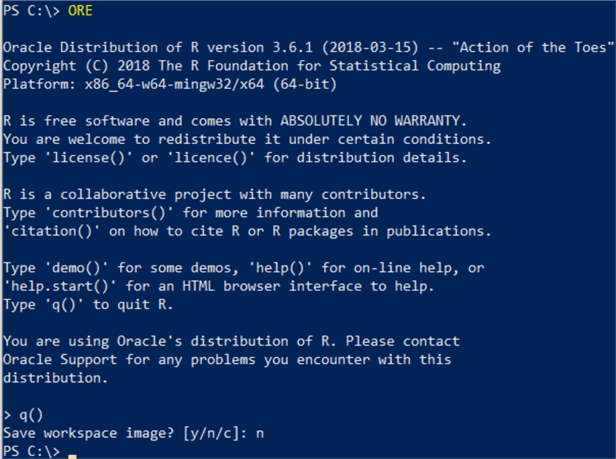

ORE

Once it succeeds, exit with the command below:

q()

And finally, because Oracle 19.3 in Windows Server 2019 is only able to search for libraries inside the ORACLE_HOME\bin folder (no matter if you set up your environment variables, set the PATH correctly, and specify the DLL locations in the extproc.ora file), you need to copy the R libraries with the command below, otherwise you'll get error ORA-06520 "Error loading external library":

cp C:\app\ORD\bin\x64\*.dll C:\app\Oracle19c\bin

This is undocumented, and unfortunately, you can't run procmon (Process Monitor) inside this Docker container, so figuring this out required some time and testing it in VMWare Workstation Pro running Windows Server 2019 Datacenter Edition with graphical UI.

Step 4

From this link, download Oracle Machine Learning for R server (ore-server-win-x86_64-1.5.1.zip (13.8MB)) and Oracle Machine Learning for R supporting (ore-supporting-win-x86_64-1.5.1.zip (4.37MB)). Before installing them, you need to take a backup of the existing R libraries with the commands below:

cd C:\app\Oracle19c\R\library

(dir) | rename-item -NewName {$_.name+".orig"}

Unzip the server libraries with the command below:

Expand-Archive C:\setup\ore-server-win-x86_64-1.5.1.zip c:\app\ORD -force

And install them with these commands:

cd C:\app\ORD\server ORE CMD INSTALL OREbase_1.5.1.zip ORE CMD INSTALL OREcommon_1.5.1.zip ORE CMD INSTALL OREdm_1.5.1.zip ORE CMD INSTALL OREdplyr_1.5.1.zip ORE CMD INSTALL OREeda_1.5.1.zip ORE CMD INSTALL OREembed_1.5.1.zip ORE CMD INSTALL OREgraphics_1.5.1.zip ORE CMD INSTALL OREmodels_1.5.1.zip ORE CMD INSTALL OREpredict_1.5.1.zip ORE CMD INSTALL OREserver_1.5.1.zip ORE CMD INSTALL OREstats_1.5.1.zip ORE CMD INSTALL ORExml_1.5.1.zip ORE CMD INSTALL ORE_1.5.1.zip

You also need to unzip the supporting libraries with the command below:

Expand-Archive C:\setup\ore-supporting-win-x86_64-1.5.1.zip c:\app\ORD -force

And install them with the commands below:

cd C:\app\ORD\supporting ORE CMD INSTALL ROracle_1.3-2.zip ORE CMD INSTALL arules_1.5-0.zip ORE CMD INSTALL Cairo_1.5-9.zip ORE CMD INSTALL DBI_0.6-1.zip ORE CMD INSTALL png_0.1-7.zip ORE CMD INSTALL randomForest_4.6-12.zip ORE CMD INSTALL statmod_1.4.29.zip

Step 5

Enable the OML4R Server components that are part of the database by first logging in as sysdba:

cd C:\app\Oracle19c\ sqlplus / as sysdba

Then run the PL/SQL commands below:

spool c:\setup\rqcfg.out ALTER PROFILE DEFAULT LIMIT PASSWORD_VERIFY_FUNCTION NULL; @C:\app\Oracle19c\R\server\rqcfg.sql

It will prompt you for four parameters in this order: 1) permanent tablespace, 2) temporary tablespace, 3) Oracle home, and 4) R home. In my case, I specified them in this order: SYSAUX, SYSTEM, C:\app\Oracle19c\ and C:\app\ORD\, but remember if your TEMP tablespace is named TEMP, you need to put it instead of SYSTEM. Once that is installed, verify there are no invalid objects with the query below:

COL OWNER FOR A20 COL OBJECT_TYPE FOR A15 COL OBJECT_NAME FOR A30 SET PAGESIZE 500 SELECT OWNER, OBJECT_TYPE, OBJECT_NAME FROM DBA_OBJECTS WHERE STATUS <> 'VALID' ORDER BY OWNER, OBJECT_TYPE;

And all installed components are valid with the query below:

SET LINESIZE 300 COL COMP_ID FOR A7 COL COMP_NAME FOR A40 COL VERSION FOR A10 SELECT COMP_ID, COMP_NAME, VERSION, STATUS FROM DBA_REGISTRY;

Also, outside of the database, confirm there were no errors during the installation with the command below:

Get-Content "c:\setup\rqcfg.out" | Select-String "(ORA)|(ERR)" | Select LineNumber, Line | Format-Table

Step 6

Create the R user logged in as SYSDBA running the PL/SQL commands below and replacing the password with your own:

sqlplus / AS SYSDBA CREATE USER MYDB IDENTIFIED BY MyPwd; GRANT CREATE SESSION, CREATE TABLE, CREATE VIEW, CREATE PROCEDURE, CREATE MINING MODEL TO MYDB; ALTER USER MYDB QUOTA UNLIMITED ON SYSTEM;

Step 7

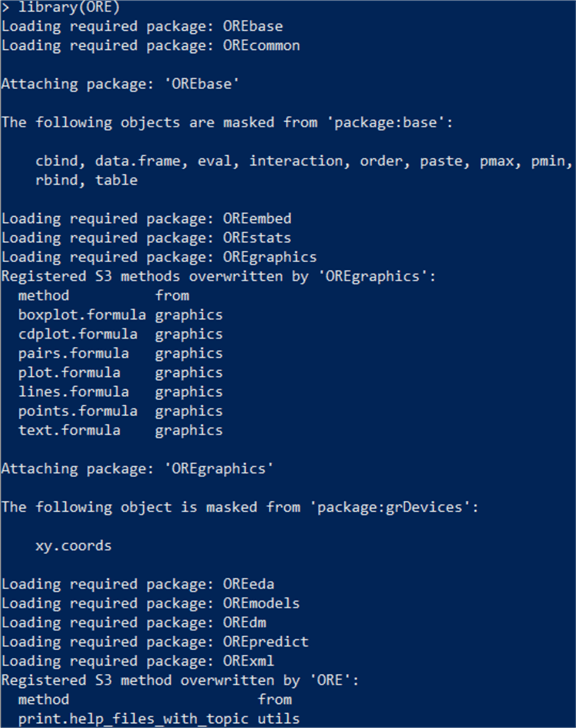

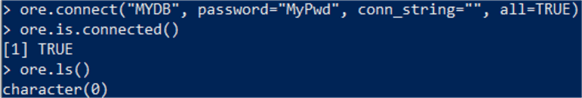

Confirm the R user can run scripts from ORE, entering ORE and running the commands below:

ORE

library(ORE)

ore.connect("MYDB", password="MyPwd", conn_string="", all=TRUE)

ore.is.connected()

You can also run the examples to confirm everything works as expected with the R commands below:

example("ore.glm") ## Builds an OML4R generalized linear model.

example("ore.stepwise") ## Builds an OML4R stepwise least squares regression model.

example("ore.odmAI") ## Builds an OML4SQL attribute importance model.

example("ore.doEval") ## Runs an embedded R execution function.

Note the last example, "ore.doEval," requires that you grant RQADMIN role to the MYDB user, but this role must be granted only if/when required, not to every user.

Step 8

Confirm the R user can run scripts from PL/SQL, logged in as MYDB running the PL/SQL commands below (thanks to Sherry Lamonica, who blogs about Oracle Machine Learning and answered a related question):

sqlplus MYDB/MyPwd /*Test OML4R extproc*/ select rqGamma(0.1) from dual; /*Test OML4R embedded R*/ select rqBesselI(1,1,1) bes1, rqBesselI(1,1,0) bes2 from dual;

Everything is set up at this point, and we can perform sentiment analysis on the previously loaded data.

We're going to start in R. To perform the sentiment analysis, I'm following the guide in this link, which has a very good explanation of each command, but I had to fix some things. The script is below with comments:

# install packages

install.packages("tm", repos='http://cran.us.r-project.org') # for text mining

install.packages("SnowballC", repos='http://cran.us.r-project.org') # for text stemming

install.packages("RColorBrewer", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("wordcloud", repos='http://cran.us.r-project.org') # word-cloud generator

install.packages("textshape", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("syuzhet", repos='http://cran.us.r-project.org') # for sentiment analysis

install.packages("lifecycle", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("R6", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("withr", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("scales", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("pillar", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

install.packages("ggplot2", repos='http://cran.us.r-project.org', type='binary') # for plotting graphs and binary because source is newer and compile fails

install.packages("generics", repos='http://cran.us.r-project.org', type='binary') # because source is newer and compile fails

# load libraries

library("tm")

library("SnowballC")

library("wordcloud")

library("RColorBrewer")

library("syuzhet")

library("ggplot2")

library(ORE)

ore.connect("MYDB", password="MyPwd", conn_string="", all=TRUE)

ore.is.connected()

ore.ls()

df_tweets <- ore.pull(MYTBL)

df_tweets <- data.frame(doc_id=row.names(df_tweets),

text=df_tweets$TEXT)

class(df_tweets) # debug

head(df_tweets) # debug

TextDoc <- Corpus(DataframeSource(df_tweets))

#Replacing "/", "@" and "|" with space

toSpace <- content_transformer(function (x , pattern ) gsub(pattern, " ", x))

TextDoc <- tm_map(TextDoc, toSpace, "/")

TextDoc <- tm_map(TextDoc, toSpace, "@")

TextDoc <- tm_map(TextDoc, toSpace, "\\|")

# Convert the text to lower case

TextDoc <- tm_map(TextDoc, content_transformer(tolower))

# Remove numbers

TextDoc <- tm_map(TextDoc, removeNumbers)

# Remove english common stopwords

TextDoc <- tm_map(TextDoc, removeWords, stopwords("english"))

# Remove your own stop word

# specify your custom stopwords as a character vector

TextDoc <- tm_map(TextDoc, removeWords, c("https", "t.co", "amp")) # these are found in my dataset

# Remove punctuations

TextDoc <- tm_map(TextDoc, removePunctuation)

# Eliminate extra white spaces

TextDoc <- tm_map(TextDoc, stripWhitespace)

# Text stemming - which reduces words to their root form

TextDoc <- tm_map(TextDoc, stemDocument)

# Build a term-document matrix

TextDoc_dtm <- TermDocumentMatrix(TextDoc)

dtm_m <- as.matrix(TextDoc_dtm)

# Sort by descearing value of frequency

dtm_v <- sort(rowSums(dtm_m),decreasing=TRUE)

dtm_d <- data.frame(word = names(dtm_v),freq=dtm_v)

# Display the top 5 most frequent words

head(dtm_d, 10)

# regular sentiment score using get_sentiment() function and method of your choice

# please note that different methods may have different scales

syuzhet_vector <- get_sentiment(DataframeSource(df_tweets), method="syuzhet")

# see the first row of the vector

head(syuzhet_vector)

# see summary statistics of the vector

summary(syuzhet_vector)

# bing

bing_vector <- get_sentiment(DataframeSource(df_tweets), method="bing")

head(bing_vector)

summary(bing_vector)

#affin

afinn_vector <- get_sentiment(DataframeSource(df_tweets), method="afinn")

head(afinn_vector)

summary(afinn_vector)

rbind(

sign(head(syuzhet_vector)),

sign(head(bing_vector)),

sign(head(afinn_vector))

)

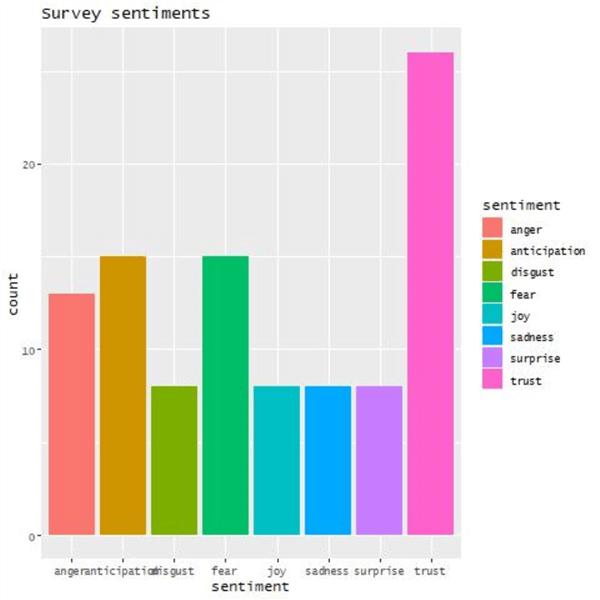

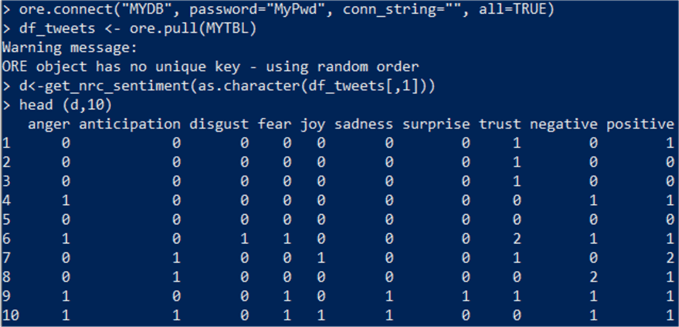

# run nrc sentiment analysis to return data frame with each row classified as one of the following

# emotions, rather than a score:

# anger, anticipation, disgust, fear, joy, sadness, surprise, trust

# It also counts the number of positive and negative emotions found in each row

df_tweets <- ore.pull(MYTBL)

print(as.character(df_tweets[,1])) # debug

d<-get_nrc_sentiment(as.character(df_tweets[,1]))

# head(d,10) - to see top 10 lines of the get_nrc_sentiment dataframe

head (d,10)

#transpose

td<-data.frame(t(d))

#The function rowSums computes column sums across rows for each level of a grouping variable.

td_new <- data.frame(rowSums(td[2:72]))

#Transformation and cleaning

names(td_new)[1] <- "count"

td_new <- cbind("sentiment" = rownames(td_new), td_new)

rownames(td_new) <- NULL

td_new2<-td_new[1:8,]

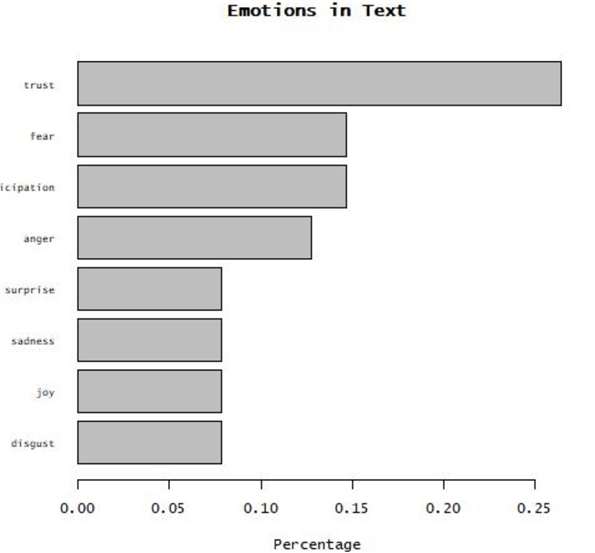

#Plot One - count of words associated with each sentiment

jpeg(filename="C:\\setup\\Plot1.jpg")

quickplot(sentiment, data=td_new2, weight=count, geom="bar", fill=sentiment, ylab="count")+ggtitle("Survey sentiments")

dev.off()

#Plot two - count of words associated with each sentiment, expressed as a percentage

jpeg(filename="C:\\setup\\Plot2.jpg")

barplot(

sort(colSums(prop.table(d[, 1:8]))),

horiz = TRUE,

cex.names = 0.7,

las = 1,

main = "Emotions in Text", xlab="Percentage"

)

dev.off()

Note: In the last steps, I generated two graphs in a shared path to view them outside of my container:

From the database, I'm only interested in the numeric values from each tweet, so the only instructions and output I want are here:

And now I can run those instructions from the database. Because I need to create a script, I'm going to grant RQADMIN role to the MYDB user with the instructions below (another option is to create another user with RQADMIN role, create the scripts, and then use rqGrant to allow the MYDB user to run it):

sqlplus / as sysdba GRANT RQADMIN TO MYDB; exit

And now, I can convert the previous R script to PL/SQL:

sqlplus MYDB/MyPwd

BEGIN

FOR i IN (SELECT 1 FROM ALL_RQ_SCRIPTS WHERE OWNER='MYDB' AND NAME='MyNRCSentimentAnalysis') LOOP

EXECUTE IMMEDIATE 'BEGIN sys.rqScriptDrop(''MyNRCSentimentAnalysis''); END;';

END LOOP;

sys.rqScriptCreate('MyNRCSentimentAnalysis',

'function(df_tweets) {

library("syuzhet")

get_nrc_sentiment(df_tweets)

}');

END;

/

The first lines remove the script if it exists, then create a new script as follows:

- Name MyNRCSentimentAnalysis

- The script is a function that receives data from the database in the variable df_tweets

- The script loads the only library I need, which is syuzhet

- The output is the last line ran: get_nrc_sentiment

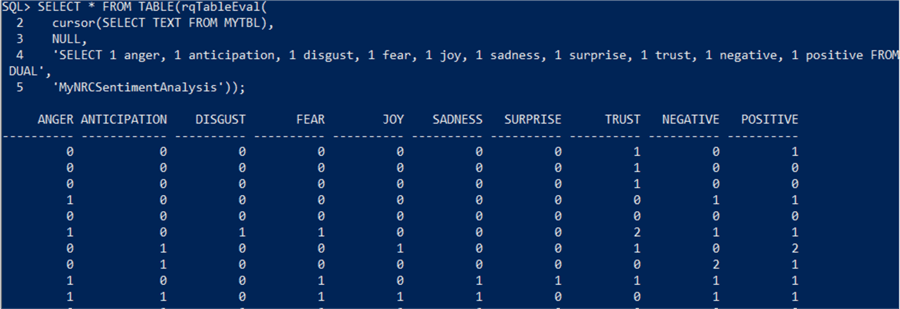

Then the script can be called as follows:

SET LINESIZE 300 SET PAGESIZE 100 SELECT * FROM TABLE(rqTableEval( cursor(SELECT TEXT FROM MYTBL), NULL, 'SELECT 1 anger, 1 anticipation, 1 disgust, 1 fear, 1 joy, 1 sadness, 1 surprise, 1 trust, 1 negative, 1 positive FROM DUAL', 'MyNRCSentimentAnalysis'));

The script is executed using rqTableEval. The first parameter is the table (which needs to be a cursor). The second parameter is NULL as there are no parameters to send. The third parameter is how the output should be returned with column names and data types. (If it is NULL, it returns a serialized object; if it is XML, it can return data and images; if it is PNG, it returns a single image.) And the last parameter is the script name. Part of the output is below, one line per row:

With this output, you can update the rows, load into another table, pass to another Machine Learning script, etc.

Note: There are other functions for different functionality: rqEval, rwqGroupEval, and rqRowEval. The official documentation can be found here:

- https://docs.oracle.com/en/database/oracle/machine-learning/oml4r/1.5.1/oread/index.html

- https://docs.oracle.com/en/database/oracle/machine-learning/oml4r/1.5.1/oreug/index.html

SQL Server

Machine Learning Services in SQL Server allows you to run R scripts with relational data, allowing you to use open-source packages and frameworks in-database without moving data outside SQL Server or over the network.

Note: Enabling this feature requires a working firewall in Windows, so this couldn't be tested in Docker. For this tip, it was done in VMWare Workstation Pro running Windows Server 2019 Datacenter Edition.

The steps are detailed below, and you can find them in the official documentation in graphical mode:

Step 1

Install the feature running the command below:

& "E:\setup.exe" /Q /Action=Install /IAcceptSQLServerLicenseTerms /IndicateProgress /Features=SQLEngine,Conn,AdvancedAnalytics,SQL_INST_MR /IAcceptROpenLicenseTerms /MRCacheDirectory=C:\MRCache /InstanceName=MSSQLSERVER /TcpEnabled=1 /SecurityMode=SQL /SaPwd=YourPassword /SqlSysAdminAccounts="Administrator"

The main feature is AdvancedAnalytics, and SQL_INST_MR installs the R supporting libraries; others are similar for Python and Java. You need to accept R Open license terms and specify a Machine Learning R cache directory. Once installed, make sure there is a service named MSSQLLaunchpad, and it is in the "Running" state. Note: This doesn't install the R runtime. It needs to be done manually in another step.

Step 2

Enable external script execution with the T-SQL commands below:

EXEC sp_configure 'external scripts enabled', 1 RECONFIGURE WITH OVERRIDE GO

Restart the SQL Server service and check the status for all services with the Powershell commands below. This will also restart the MSSQLLaunchpad:

net stop MSSQLSERVER net start MSSQLSERVER get-service -name *sql*

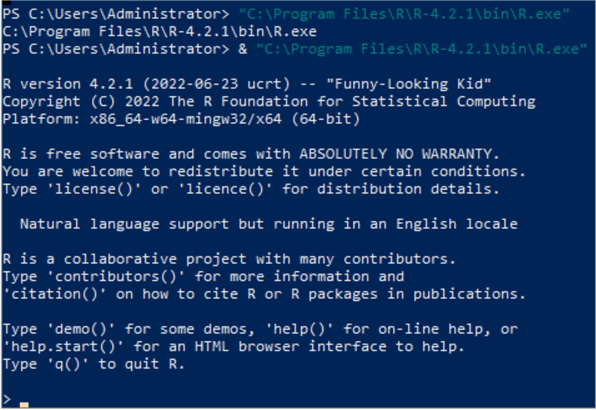

Step 3

Install R as described here. You need to download R-4.2.1 with a size of 79MB and install graphically or with PowerShell:

& c:\R-4.2.1-win.exe /SILENT /DIR="C:\Program Files\R\R-4.2.1"

Open R and install the packages described in the guide (iterators, foreach, R6, jsonlite):

Download CompatibilityAPI and RevoScaleR packages as described in the guide. Install them with the R commands below:

install.package("C:\CompatibilityAPI_1.1.0.zip")

install.package("C:\RevoScaleR_10.0.0.zip")

And configure the installed R runtime with SQL Server with the command below:

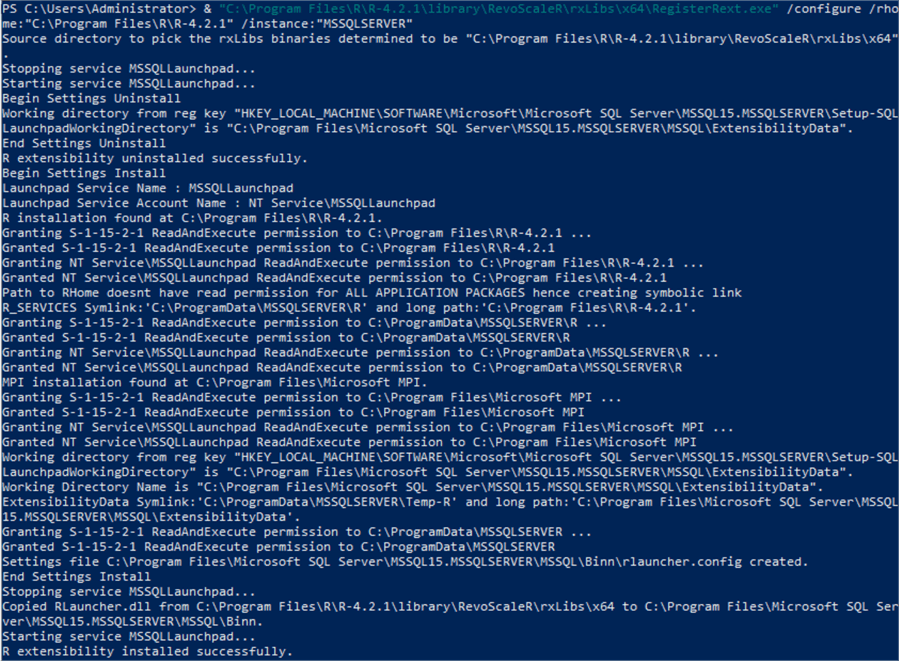

& "C:\Program Files\R\R-4.2.1\library\RevoScaleR\rxLibs\x64\RegisterRext.exe /configure /rhome:"C:\Program Files\R\R-4.2.1" /instance:"MSSQLSERVER"

The output is as follows:

Step 4

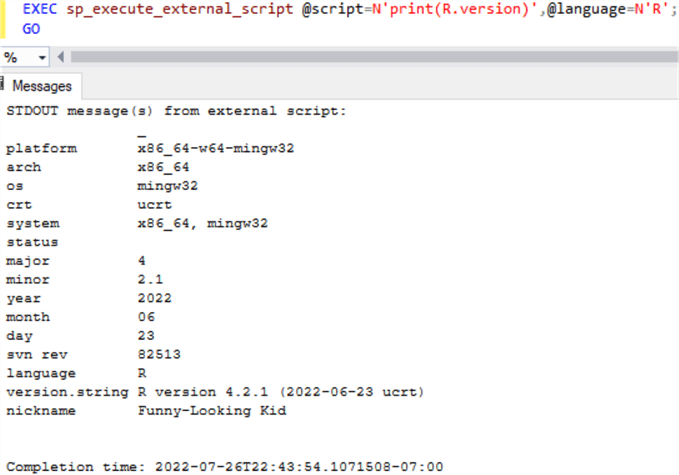

Confirm SQL Server can run R scripts with the T-SQL command below:

EXEC sp_execute_external_script @script=N'print(R.version)',@language=N'R'; GO

The output is below:

Step 5

Check the amount of memory assigned for external script execution and adjust as needed as described in the documentation. In my case, I had to increase from the default 20% to 40% because when executing an R script with the "syuzhet" library, I was getting the error: "Error: cannot allocate vector of size 29.6 Mb." Note: I only assigned 4 GB to the VMWare virtual machine:

SELECT * FROM sys.resource_governor_external_resource_pools WHERE name = 'default'; ALTER EXTERNAL RESOURCE POOL "default" WITH (max_memory_percent = 40); ALTER RESOURCE GOVERNOR RECONFIGURE;

Everything is set up at this point, and we can perform sentiment analysis on the previously loaded data.

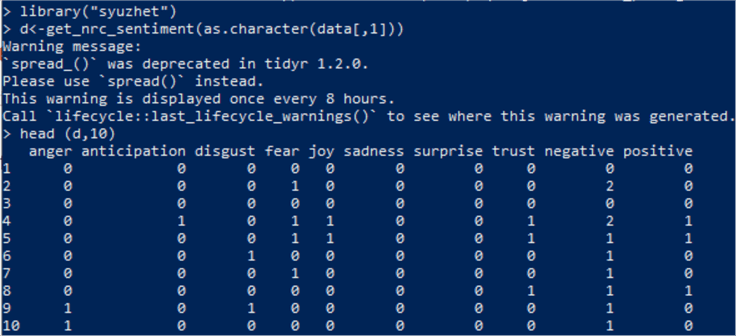

We're going to start in R. To perform the sentiment analysis, I'm following the guide in this link, which has a very good explanation of each of the commands but I had to use other commands for SQL Server as described in this tip. As it was explained earlier, I'm only showing the output from these R commands:

install.packages("RODBC")

install.packages("syuzhet")

library(RODBC);

sqlcnn <- odbcDriverConnect("Driver={SQL Server Native Client 11.0}; Server=.; Trusted_Connection=yes;");

data <- sqlQuery(sqlcnn, "SELECT TEXT FROM MyDb.dbo.MyTbl;");

close(sqlcnn);

head(data);

library("syuzhet")

d<-get_nrc_sentiment(as.character(data[,1]))

# head(d,10) - to see top 10 lines of the get_nrc_sentiment dataframe

head (d,10)

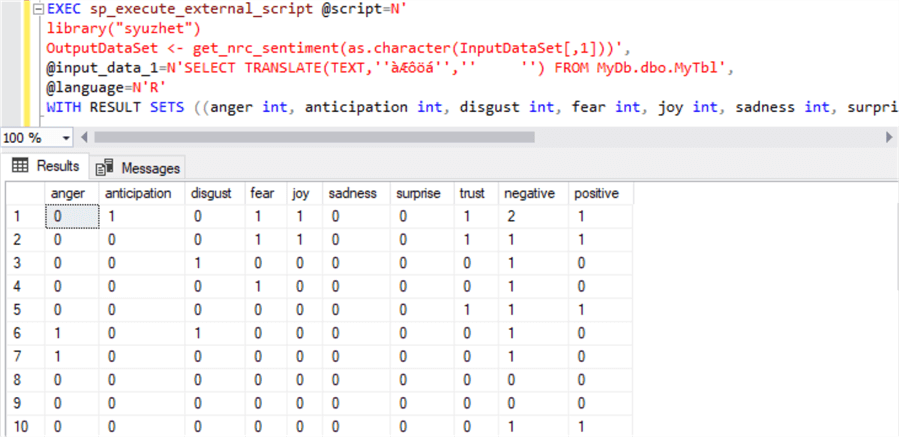

And now, I can run the corresponding commands in T-SQL:

EXEC sp_execute_external_script @script=N'

library("syuzhet")

OutputDataSet <- get_nrc_sentiment(as.character(InputDataSet[,1]))',

@input_data_1=N'SELECT TRANSLATE(TEXT,''àÆôöá'','' '') FROM MyDb.dbo.MyTbl',

@language=N'R'

WITH RESULT SETS ((anger int, anticipation int, disgust int, fear int, joy int, sadness int, surprise int, trust int, negative int, positive int));

Note several things here:

- To pass data, you must put a SQL query in the variable @input_data_1.

- The default name assigned to this dataframe within R is named InputDataSet, but this can be changed.

- The default name assigned to the output dataframe within R to be passed back to SQL Server is OutputDataSet. Without this, nothing gets returned from the query.

- Passing data from T-SQL to R converts special characters to an illegible character that R can't decode. So, you need to replace them first or you'll get the error "invalid input ' ' in 'utf8towcs' Calls: source ... withVisible -> eval -> eval -> get_nrc_sentiment -> tolower".

- If you don't specify WITH RESULT SETS, you'll get the columns without name. It's better to specify which columns are returned and their data types.

The output is below:

The official documentation can be found here:

- https://docs.microsoft.com/en-us/sql/machine-learning/install/sql-ml-component-commandline-install

- https://docs.microsoft.com/en-us/sql/database-engine/install-windows/install-sql-server-from-the-command-prompt

- https://docs.microsoft.com/en-us/sql/machine-learning/install/sql-machine-learning-services-windows-install

- https://docs.microsoft.com/en-us/sql/machine-learning/administration/create-external-resource-pool

Conclusion

There are some similarities between Oracle and SQL Server: 1) the database feature needs to be installed separately, 2) the R runtime needs to be installed separately, 3) after installation, you need to download and install database-specific R libraries, and the whole security schema is strengthened as described for SQL Server and Oracle. In SQL Server, this can run from virtual accounts, but in the Oracle version I tested, this required a local user account. SQL Server can use the latest R version 4.2.1, and I didn't encounter any issue loading libraries, while Oracle provides their own tested R version 3.6.1. Still, I had some issues with newer versions of several libraries. SQL Server supports Python, but Oracle 19c in Windows doesn't. Oracle was able to be tested in a Docker container. It didn't require a service restart after installing the feature. We didn't have to remove special characters from the data sent to the R function, variable name mapping between PL/SQL and R is more straightforward, and Oracle can receive serialized dataframes and pictures. SQL Server security schema makes it hard to work with pictures.

Next Steps

Here is the official documentation for Oracle Machine Learning:

- https://docs.oracle.com/en/database/oracle/machine-learning/oml4r/1.5.1/oread/index.html

- https://docs.oracle.com/en/database/oracle/machine-learning/oml4r/1.5.1/oreug/index.html

Here is the official documentation for SQL Server Machine Learning:

- https://docs.microsoft.com/en-us/sql/machine-learning/install/sql-ml-component-commandline-install

- https://docs.microsoft.com/en-us/sql/database-engine/install-windows/install-sql-server-from-the-command-prompt

- https://docs.microsoft.com/en-us/sql/machine-learning/install/sql-machine-learning-services-windows-install

- https://docs.microsoft.com/en-us/sql/machine-learning/administration/create-external-resource-pool

Here are more tips regarding SQL Server Machine Learning:

- How to get key phrases from Azure SQL using Artificial Intelligence

- Machine Learning Terms and Concepts

- Introduction to SQL Server Machine Learning Services with Python

- SQL Server Monitoring Meets Machine Learning

- Introduction to Microsoft Azure Machine Learning

- Basics of Machine Learning with SQL Server 2017 and R

- Announcing Extraordinary SQL Optimization: Advanced Machine Learning Analytics Meets SQL Server Domain Insight

- Machine Learning Development Lifecycle

- Synapse Analytics vs Snowflake for Delta Format Files, Machine Learning, Table Relationships and Constraints

About the author

Pablo Echeverria is a talented database administrator and C#.Net software developer since 2006. Pablo wrote the book "Hands-on data virtualization with Polybase". He is also talented at tuning long-running queries in Oracle and SQL Server, reducing the execution time to milliseconds and the resource usage up to 10%. He loves learning and connecting new technologies providing expert-level insight as well as being proficient with scripting languages like PowerShell and bash. You can find several Oracle-related tips in his LinkedIn profile.

Pablo Echeverria is a talented database administrator and C#.Net software developer since 2006. Pablo wrote the book "Hands-on data virtualization with Polybase". He is also talented at tuning long-running queries in Oracle and SQL Server, reducing the execution time to milliseconds and the resource usage up to 10%. He loves learning and connecting new technologies providing expert-level insight as well as being proficient with scripting languages like PowerShell and bash. You can find several Oracle-related tips in his LinkedIn profile.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2022-08-26