By: Koen Verbeeck | Updated: 2023-07-25

Problem

Lately, I've seen many blogs and videos talking about Microsoft Fabric. Is this a new data analytics product? Or is it a new name for Azure Synapse Analytics? I'm confused as there seems to be a lot of overlap between features.

Solution

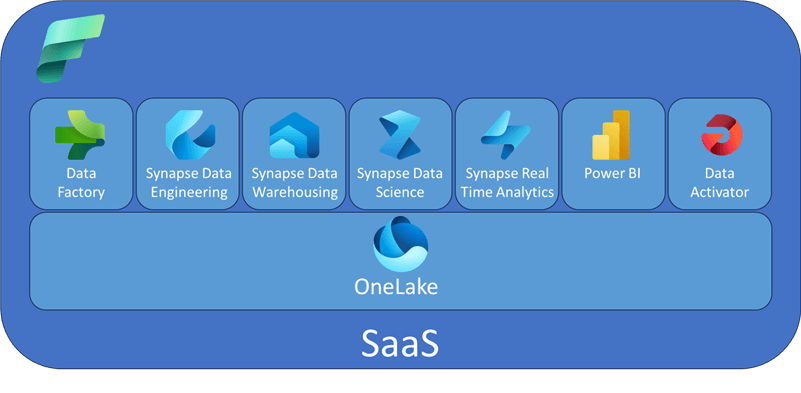

Microsoft Fabric is a new end-to-end unified analytics platform announced at Microsoft Build 2023. "New" is a bit of a stretch because Fabric integrates existing technologies into a single platform: Azure Data Factory, Azure Synapse Analytics, and Power BI. Aside from those existing products, new features are also included, such as OneLake, Copilot, DirectLake mode for Power BI, and Data Activator. Some of those features are still in private preview at the time of writing, while Fabric itself is in public preview.

Architectural Overview

In contrast with previous offerings, Fabric offers a single environment to develop your entire analytics solution: ingestion of data, transformation using one of the available engines (SQL, Spark, or Kusto), and reporting with Power BI. Everything is offered as a Software-as-a-Service (SaaS) solution.

Previously, you had to use several tools in the Microsoft Data Platform, either on-premises or in Azure. For example, if you wanted to create a data warehouse solution with reporting, you needed the following products:

- Azure Data Factory for ingestion. Development was done in ADF studio using a browser.

- Azure SQL Database for storing the data. The data was transformed using SQL queries, and the data warehouse was modeled in the database. Development was done in SQL Server Management Studio (SSMS) or Visual Studio.

- Power BI for reporting. Development was done in Power BI Desktop or a browser. Buying licenses for users was done through Office 365.

With Microsoft Fabric, the development experience is much more unified and less fragmented. Azure Synapse Analytics already made an effort towards that goal, but Fabric goes much further. The entirety of Fabric can be visualized in the following figure:

At the foundation of Fabric, there's OneLake. All data is stored in your OneLake data hub. The name comes from the motto, "OneLake is like OneDrive for your data." Since all data is stored in one central location, collaboration, security, and governance should improve because the various systems need less data integration. OneLake is built on top of Azure Data Lake Storage, and data can be stored in any format. All compute engines store their data in OneLake, which means any compute engine can read data written by another. For example, if a table was written by a Spark notebook (Synapse Data Engineering or Synapse Data Science), it can be read by the SQL endpoint (Synapse Data Warehousing).

Tabular data is stored in the delta format (originally developed by Databricks), which keeps the data in parquet files alongside a transaction log. To be clear, every delta table is made up of parquet files, but not every parquet file belongs to a delta table. Microsoft refers to the file format as "delta-parquet".
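To make the relationship between delta and parquet concrete, here is a minimal sketch in Python. It builds two folders with empty placeholder files and checks which one looks like a delta table by testing for a `_delta_log` directory. The folder names, the helper functions, and the simplified commit file are assumptions for illustration only. No real parquet data is written, and a real commit file contains more fields than shown here.

```python
import json
import tempfile
from pathlib import Path
from typing import Tuple


def is_delta_table(folder: Path) -> bool:
    """Return True if the folder contains a Delta transaction log directory."""
    return (folder / "_delta_log").is_dir()


def build_demo_folders(root: Path) -> Tuple[Path, Path]:
    """Create a plain parquet folder and a delta-style folder (hypothetical names).

    The data files are empty placeholders; only the folder layout matters here.
    """
    plain = root / "plain_parquet"
    plain.mkdir()
    (plain / "part-00000.parquet").touch()

    delta = root / "sales_delta"
    (delta / "_delta_log").mkdir(parents=True)
    (delta / "part-00000.parquet").touch()
    # Simplified stand-in for a commit file: it records that a data file was added.
    commit = {"add": {"path": "part-00000.parquet", "dataChange": True}}
    log_file = delta / "_delta_log" / "00000000000000000000.json"
    log_file.write_text(json.dumps(commit))
    return plain, delta


with tempfile.TemporaryDirectory() as tmp:
    plain_dir, delta_dir = build_demo_folders(Path(tmp))
    plain_is_delta = is_delta_table(plain_dir)
    delta_is_delta = is_delta_table(delta_dir)
    print("plain folder is delta:", plain_is_delta)
    print("delta folder is delta:", delta_is_delta)
```

Both folders contain a parquet file, but only the one with a transaction log is treated as a delta table.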

As mentioned earlier, there are different compute engines in Microsoft Fabric:

- Synapse Data Engineering: Like Azure Databricks and Azure Synapse Analytics, you can transform data at scale using Spark notebooks. You can, for example, build a delta lakehouse with this compute engine.

- Synapse Data Warehouse: Use the familiar T-SQL language for your data transformations. This engine is like Azure Synapse Analytics Dedicated SQL Pools and Serverless SQL Pools. You can build a more "traditional" data warehouse, but it's also a delta lakehouse since OneLake uses delta files. Remember that the concept of dedicated SQL pools – where you define a distribution key to distribute data over different compute nodes – doesn't exist anymore because everything is SaaS in Microsoft Fabric.

- Synapse Data Science: Use Azure Machine Learning or Spark notebooks for creating and training models.

- Synapse Real-Time Analytics: Query and analyze large volumes of data in real time. This compute engine is like Azure Data Explorer (a time-series database) or Data Explorer Pools in Azure Synapse Analytics. Queries are written using the Kusto Query Language (KQL).

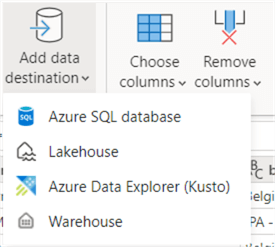

- Data Factory: Data integration with pipelines (like those in Azure Data Factory) or with (Power Query) Dataflows Gen2. Dataflows Gen2 are similar to Power BI Dataflows, but instead of writing to a Power BI dataset, they can write to several destinations (Azure SQL DB, Fabric Lakehouse, Fabric Warehouse, or Azure Data Explorer).
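The list above can be summarized as a small lookup table. The sketch below is plain Python, not a Fabric API: the `ENGINES` dictionary and the `engines_for` helper are hypothetical names, and the tool labels simply paraphrase the descriptions in the list.

```python
# Workloads from the list above, mapped to the languages or tools they use.
ENGINES = {
    "Synapse Data Engineering": {"Spark"},
    "Synapse Data Warehouse": {"T-SQL"},
    "Synapse Data Science": {"Spark", "Azure Machine Learning"},
    "Synapse Real-Time Analytics": {"KQL"},
    "Data Factory": {"Pipelines", "Power Query"},
}


def engines_for(skills):
    """Return the workloads (sorted by name) that match at least one skill."""
    wanted = set(skills)
    return sorted(name for name, tools in ENGINES.items() if tools & wanted)


sql_team = engines_for(["T-SQL"])
spark_team = engines_for(["Spark"])
print(sql_team)
print(spark_team)
```

A team that knows only T-SQL lands on the data warehouse workload, while a Spark team can pick between the data engineering and data science workloads.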

With all these different compute engines, you have a wide array of options for how you want to work with your data. Microsoft Fabric has a true separation of storage and compute, popularized by products such as Databricks and Snowflake.
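The idea of separating storage from compute can be illustrated with a toy model. In the sketch below, one function plays the role of a Spark notebook that writes a table, and another plays the role of a SQL endpoint that aggregates the same table. Everything here is an assumption for illustration: `SharedStore` is an in-memory stand-in, not OneLake, and neither function uses Spark or T-SQL.

```python
from collections import defaultdict


class SharedStore:
    """Toy stand-in for a single storage layer; this is not a OneLake API."""

    def __init__(self):
        self._tables = {}

    def write(self, name, rows):
        self._tables[name] = list(rows)

    def read(self, name):
        return list(self._tables[name])


def notebook_style_load(store):
    """Plays the role of a notebook: cleans raw records and writes a table."""
    raw = [("north", "10"), ("south", "5"), ("north", "7")]
    rows = [{"region": region, "amount": int(amount)} for region, amount in raw]
    store.write("sales", rows)


def warehouse_style_totals(store):
    """Plays the role of a SQL endpoint: sums the amount per region."""
    totals = defaultdict(int)
    for row in store.read("sales"):
        totals[row["region"]] += row["amount"]
    return dict(totals)


store = SharedStore()
notebook_style_load(store)
totals = warehouse_style_totals(store)
print(totals)
```

The two functions never call each other; they only share the store. That is the property the paragraph above describes: any engine can work on data that another engine produced, because the data lives in one place.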

The availability of the different compute engines, the separation of storage and compute, and the scalability and performance of Fabric are very promising.

What Does This Mean for You?

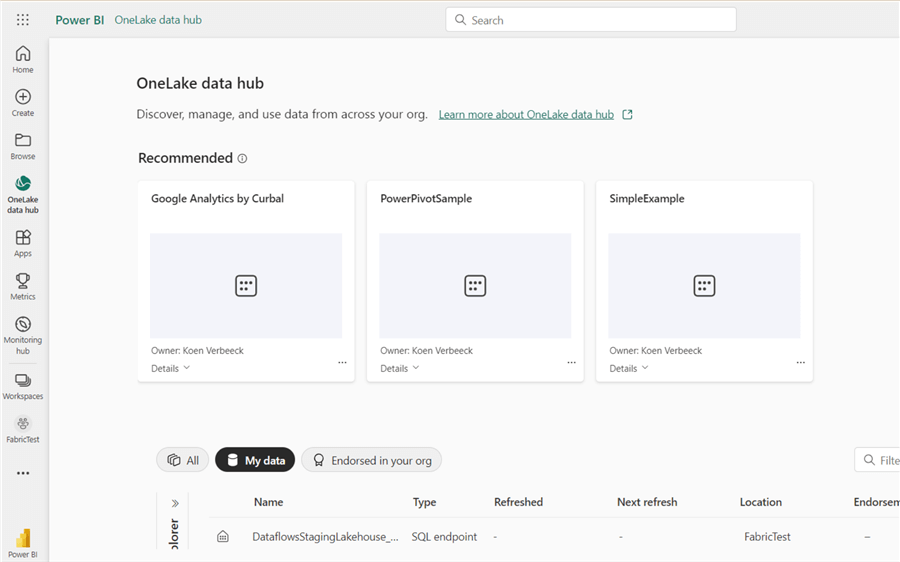

The answer is "it depends," as usual. Not much may change if you're a Power BI developer and only deal with Power BI Desktop, datasets, and reports. You can keep working with Power BI just like you did before. It might become a bit confusing, as online resources will sometimes refer to Microsoft Fabric instead of Power BI (some user groups have rebranded themselves from Power BI user groups to Fabric user groups, for example). You might also notice the OneLake data hub popping up in your Power BI environment.

If you are more of a "back-end" data developer and you're working with tools like Azure Data Factory, Azure SQL Database, Azure Synapse Analytics, and so on, you can continue working with those tools just like you did before. All these products will continue to exist for years to come. Or you can migrate your solution to their Microsoft Fabric counterparts. Microsoft disclosed in the Build keynotes that there will be migration paths.

For new projects (Power BI or data platform), you have the same options: use the existing frameworks or start using Fabric. Keep in mind that at the time of writing, Fabric is still in public preview, and not all pricing details have been published yet. Once they are available, you can do a cost-benefit analysis of which platform will yield the best results. If you start with Microsoft Fabric, you must assess which compute engine will be used. If your team has been building a data warehouse in SQL Server for years, they will be most familiar with the data warehousing SQL engine, for example. But the advantage of Fabric is that every user can choose the compute engine that fits their use case best. After all, the data is in one central location.

Next Steps

- If you're interested in what Fabric offers, more articles about this subject are in the pipeline.

- Check out the official website and the official documentation of Microsoft Fabric for admins.

- If you want to get your hands dirty, a Microsoft Learning Path is available.

- There's already a ton of community content available.

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

This author pledges the content of this article is based on professional experience and not AI generated.

Article Last Updated: 2023-07-25