By: Arshad Ali | Comments | Related: > In Memory OLTP

Problem

SQL Server 2014 will be released with an In-Memory OLTP feature for OLTP workloads to significantly improve performance and reduce processing time when you have a plentiful amount of memory and multi-core processors. In-memory OLTP allows you to create a memory optimized table which resides completely in memory and is resilient if the server crashes. But what about data durability of memory optimized tables? I heard there is new type of stored procedure which improves performance even further when working with memory optimized tables? What about transactions or isolation level support for these types of tables? Check out this tip to learn more.

Solution

Looking at declining hardware prices, SQL Server 2014 is releasing an In-memory OLTP feature which leverages today's servers with large amounts of memory and multi-core processors. The In-memory OLTP feature allows us to create a table which resides completely in physical memory, significantly improving the performance of the OLTP workload and reduces the time required for processing. With this new feature, SQL Server 2014 is well positioned to take full advantage of changing hardware trends and modern applications.

In my first tip in this series, "SQL Server 2014 In-Memory OLTP Architecture and Data Storage", I talked about these features in detail:

- How In-Memory OLTP is different from DBCC PINTABLE

- What were architectural design principals for SQL Server team

- In-memory OLTP architecture and memory optimized tables

- How memory optimized tables are different from traditional disk based tables

- Data structure for a row when storing data in physical memory

In my second tip, "Overview of Applications, Indexes and Limitations for SQL Server 2014 In-Memory OLTP Tables", I explained the following:

- Types of applications that can take advantage of In Memory OLTP features

- Different types of indexes that we can create on memory optimized tables and in which scenario each index is preferred

- Considerations, restrictions and limitations you need to keep in mind when working with memory optimized tables

In this tip, I am going to discuss different types of memory optimized tables that you can create with respect to the durability of the data. I am also going to explain a new type of stored procedure, called natively compiled stored procedures, which you can create while working with memory optimized tables. This type of stored procedure should significantly improve performance. At the end of this tip, I am going to talk about transaction and isolation level support with memory optimized tables. Let's jump in.

Durability of SQL Server memory optimized tables (SCHEMA_ONLY vs. SCHEMA_AND_DATA)

In-memory OLTP provides two options to create memory optimized tables:

- Durable with logging - You can create a memory optimized table using the DURABILITY = SCHEMA_AND_DATA (default) option. In this case, data changes are logged in SQL Server transaction log and data gets stored in a filestream based filegroup. In other words, with this option you are actually instructing the in-memory OLTP engine to persist the data so that it can be recovered after an unexpected crash or server restart.

- Non-durable without logging - When you create a memory optimized table using the DURABILITY = SCHEMA_ONLY option, data changes are not logged or stored on disk for persistence, but rather only the structure/schema of the table is saved as part of the SQL Server meta data. In other words, after a server restart the table will be available, but no data will be in it.

Now if you are wondering why someone needs a table without data after restart, there are certain scenarios for temporary storage such as storing web sessions, data staging, etc. Remember, these tables don't require any disk IO (as no data gets stored) during transaction processing operations on these tables and meets all the other transactional requirements; i.e. they are atomic, isolated, and consistent.

SQL Server Natively Compiled Procedures vs. Interpreted T-SQL Access

The In-memory OLTP engine introduces a new type of stored procedure, called natively compiled stored procedures. As its name implies, these stored procedures are compiled, by Microsoft's Visual C/C++ compiler, to native code via a C code generator. During compilation it uses aggressive optimization to improve performance. These natively compiled stored procedures can only access memory optimized tables. As these are natively compiled, they provide maximum performance though it has limited T-SQL surface area (for example, no sub-queries, no outer joins, etc.). You can consider creating natively compiled stored procedures for OLTP type queries or when you want to optimize the performance of a critical business process.

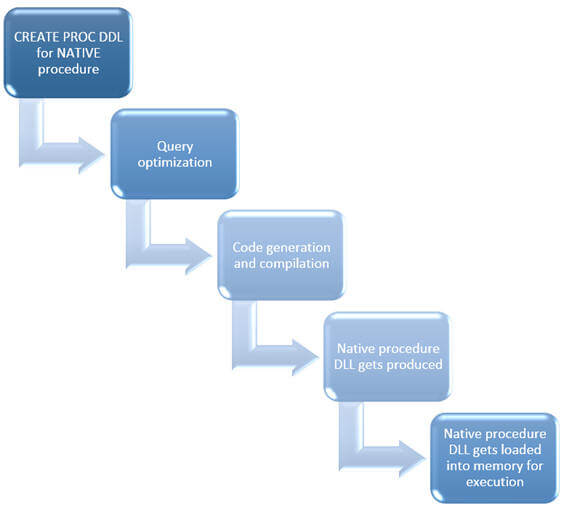

When you create a natively compiled stored procedure, you need to specify the NATIVE_COMPILATION option as part of your procedure creation syntax (you can learn more about this in this tip). The process flow for creating natively complied stored procedure is very similar to the process of creating a memory optimized table as shown below. When you execute the CREATE PROC DDL command, the query gets optimized (please note, queries get optimized at the time of procedure creation) and the C code is generated followed by compilation into machine\native code. A native procedure DLL produced after the compilation then gets loaded into memory for execution.

Unlike natively compiled stored procedure, an interpreted T-SQL or traditional T-SQL (both query or stored procedure) can access both traditional disk based tables as well as memory optimized table and with that you have access to the full T-SQL surface area. But as said before, this will not yield performance like natively complied stored procedures. You can consider using interpreted T-SQL when you want to run some ad-hoc or reporting style queries, or you want to speed up the process of the application migration.

Regular interpreted stored procedures can call natively compiled stored procedures and they can both be part of a transaction; though there are only certain isolation levels supported. Refer to the next section for more detailed information on supported isolation levels for memory optimized tables.

SQL Server Transaction isolation level support for memory optimized table

The lowest isolation level supported for memory optimized tables is Snapshot; it means all transactions are a snapshot based on the memory optimized table. If you remember, these tables are based on a multiversion of rows, which are stored in the memory as part of table data itself. Whereas if you are using Snapshot Isolation or Read Committed Snapshot Isolation for disk based tables the database engine stores a multiversion of the records in the tempdb database. These are the types of isolation levels supported for memory optimized tables, which can be specified in natively compiled stored procedures or when using interpreted T-SQL. Also the isolation level can be specified using the following table-level hints:

- Snapshot - Reads are consistent as of start of the transactions and writes are as always consistent

- Repeatable Read - Read operations yield same row versions if repeated (read same row multiple times) at commit time

- Serializable - Transaction is executed as if there are no concurrent transactions (no new rows in the database) - all actions happen at a single serialization point or commit time.

For all above supported isolation levels, the logical read time of a transaction is set to the start time of the transaction.

The In-memory OLTP engine has built-in conflict detection and validation mechanism to ensure consistency by failing one of the transactions and hence you need to use retry logic to handle conflict and validation failures.

If you have a transaction spanning across disk based tables and memory optimized tables (called cross container transaction), you need to be aware that there are certain combinations of isolation levels supported and not supported as described below:

|

Disk based tables |

Memory optimized tables |

Supported |

|---|---|---|

| Read committed | Snapshot | Yes |

| Read committed | Repeatable Read | Yes |

| Read committed | Serializable | Yes |

| Repeatable Read | Snapshot | Yes |

| Serializable | Snapshot | Yes |

| Snapshot | Any isolation level | No |

| Repeatable Read | Repeatable Read | No |

| Repeatable Read | Serializable | No |

| Serializable | Repeatable Read | No |

| Serializable | Serializable | No |

Next Steps

- Review Understanding In-Memory OLTP feature of SQL Server 2014 - tips Part 1 and Part 2 in this series.

- Review Migrate to Natively Compiled SQL Server Stored Procedures for Hekaton tip.

- Review Native Compilation of Tables and Stored Procedures article on msdn.

- Review Transactions in Memory-Optimized Tables article on msdn.

- Review Migrating to In-Memory OLTP article on msdn.

- Review my previous tips.

About the author

Arshad Ali is a SQL and BI Developer focusing on Data Warehousing projects for Microsoft.

Arshad Ali is a SQL and BI Developer focusing on Data Warehousing projects for Microsoft.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips