By: Sergey Gigoyan | Comments (12) | Related: > SQL Server Management Studio

Problem

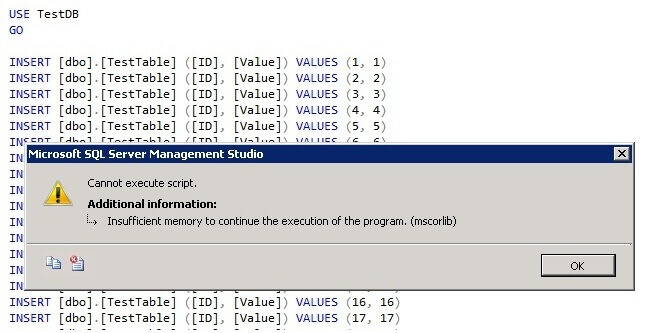

Have you ever faced a situation when executing a large script file in SQL Server Management Studio (SSMS) that raises this error - "Cannot execute script. Insufficient memory to continue the execution of the program. (mscorlib)" ?

The problem is that SSMS is unable to handle large script files and this problem exists even in SQL Server 2014. In this tip we will describe how to overcome this problem using the SQLCMD utility from the command line instead of SSMS.

Solution

Let's illustrate a situation when we are trying to execute a large T-SQL script in SQL Server Management Studio (SSMS) and the above mentioned issue occurs.

First we will create a TestDB database and TestTable:

USE master GO --Creating the database CREATE DATABASE TestDB GO USE TestDB GO --Creating the TestTable CREATE TABLE TestTable ( ID INT, Value INT )

SSMS Fails to Execute Large Scripts

Now we will execute a T-SQL script file in Management Studio to load data into the TestTable table. In our experiment I used SQL Server 2014 SP2 and the size of script file is 55 MB. After executing the script we receive this error:

This is the situation when file is so large for SSMS to handle that an error arises.

Using SQLCMD to run large T-SQL Scripts

As an alternate to SSMS, we will use the SQLCMD utility from the command line to execute this script. SQLCMD is a utility which allows us to execute T-SQL statements, script files, stored procedures from the command line. Note that unlike SSMS, which uses the Microsoft .NET Framework SqlClient for execution, SQLCMD being run from the command line uses the ODBC driver.

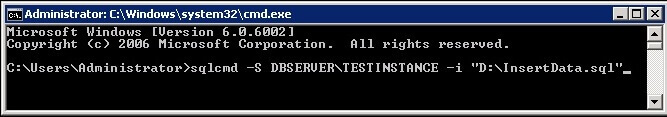

To specify an input file and run it on our instance we use the following syntax:

sqlcmd -S\ -i

Suppose that our server name is DBSERVER, instance name is TESTINSTANCE and file name is "InsertData.sql" which is located on the "D" drive. Our command will be the following:

sqlcmd -S DBSERVER\TESTINSTANCE -i "D:/InsertData.sql"

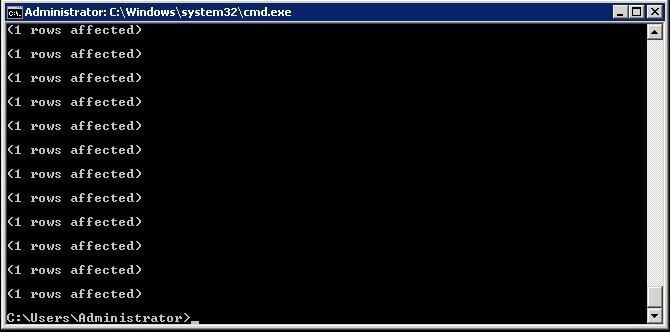

Pressing "Enter" executes this file and after completion we can see the script successfully completes and the table is filled:

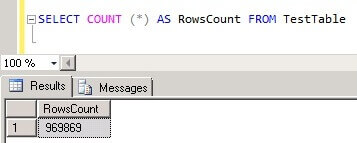

We can check in SSMS that table contains the data:

SELECT COUNT (*) AS RowsCount FROM TestTable

Conclusion

SQL Server Management Studio is unusable for executing large script files. However, sometimes we need to handle large files (for example loading a large amount of data, executing create database scripts generated from large databases and so on) and by using the SQLCMD utility from the command prompt allows us to solve these issues.

Next Steps

Read more about SQLCMD:

- SQLCMD Utility

- Use the SQLCMD Utility

- Start the SQLCMD Utility

- Connecting to SQL Server Using SQLCMD Utility

- SQL Server Management Studio SQLCMD mode option

About the author

Sergey Gigoyan is a database professional with more than 10 years of experience, with a focus on database design, development, performance tuning, optimization, high availability, BI and DW design.

Sergey Gigoyan is a database professional with more than 10 years of experience, with a focus on database design, development, performance tuning, optimization, high availability, BI and DW design.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips