By: Daniel Calbimonte | Comments (7) | Related: 1 | 2 | More > Import and Export

Problem

I need to import an Excel file to SQL Server that has 1 million rows. Do you recommend using T-SQL or SSIS? Can you provide a comparison of the options? Which option is easier to code? Which option performs better?

Solution

We are going to try both options (T-SQL and SSIS) and you will be able to compare the results!

Requirements

- SQL Server relational database engine installed

- SSIS installed

- SQL Server Data Tools Installed (SSDT) or BIDS (Business Intelligence Development Studio) installed.

- You can use SQL Server 2005 or later versions. In this example, we are using SQL Server 2014.

- Microsoft Excel 2013 installed.

Import 1,000,000 Rows with SQL Server Integration Services

- In this fist example, we are going to import a million rows from Excel to SQL Server.

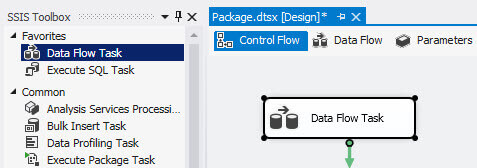

- In the SSDT, create a new SSIS project.

- Drag and drop the Data Flow Task to the design pane.

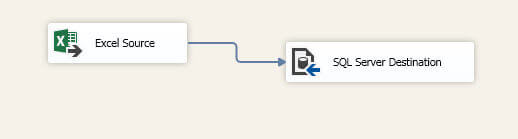

- Double click on the task and drag and drop the Excel source and the SQL Server Destination and join the tasks.

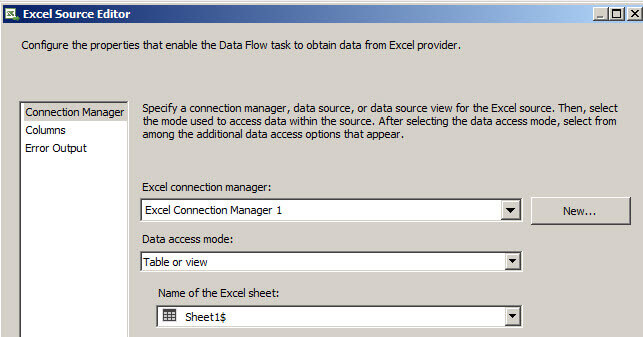

- In the Excel Source add a new connection to the Excel file.

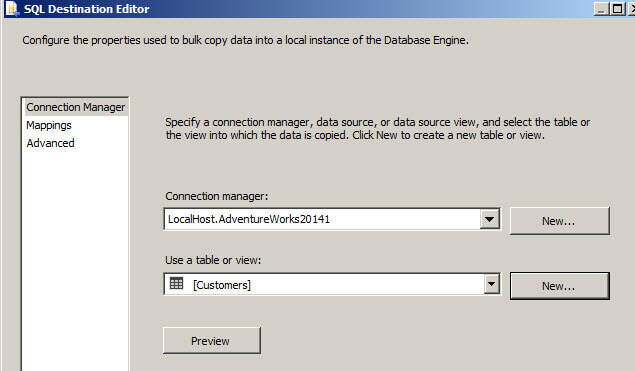

- Double click on the SQL Destination Task and select a SQL connection and in the use a table or view, press the new button to create a new table. Click on the Mappings page to match columns.

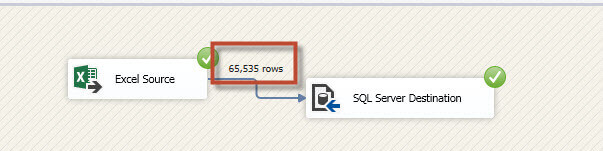

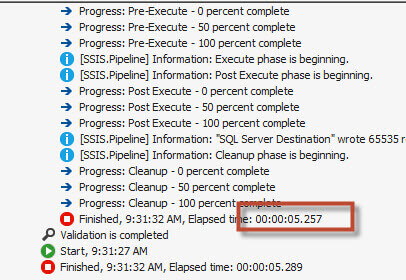

- If you run the package, you will notice that only 65,535 rows were copied.

- It took just 5 seconds, but we need to import a million rows!

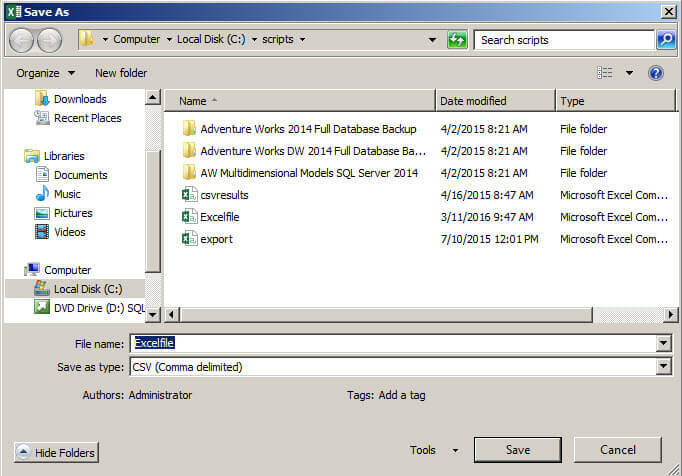

- The easiest way to solve this problem is to save the Excel file as a CSV file.

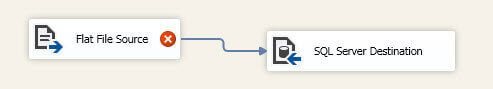

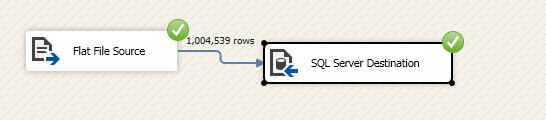

- Let's remove the other tasks and add the Flat File Source and a SQL Server Destination.

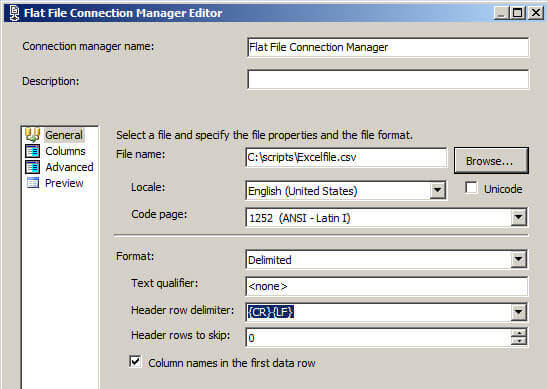

- Press the browse button and select the CSV file.

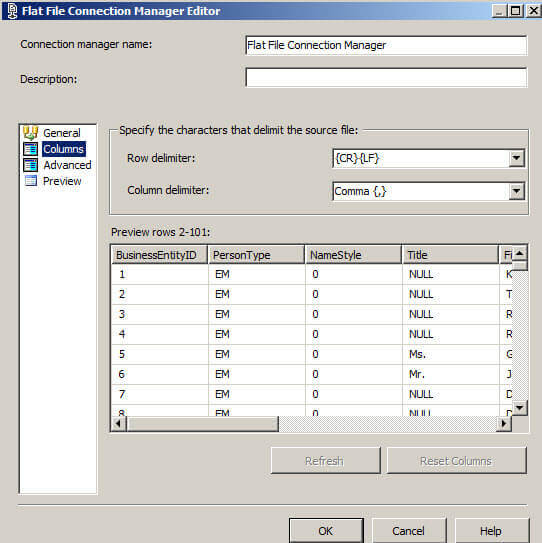

- Go to the columns page to match the columns.

- If you run the package, you will see that the million rows were imported to SQL Server successfully.

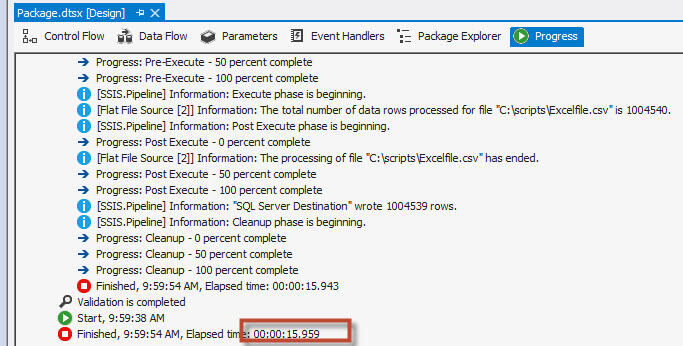

- If we check the time in the progress, we will notice that it took 15.959 seconds.

Import 1,000,000 Rows with T-SQL

- Let's try the same with T-SQL. We are going to import the data using Linked Servers.

- If you are not familiar with Linked servers, we strongly recommend you to check our tip about Linked Servers for Microsoft Excel.

- The following code will create the Linked Server:

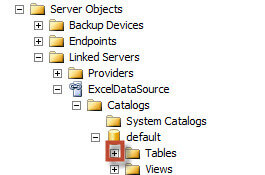

- Try to expand the tables of the Linked Server:

- A typical error is this: The OLE DB provider "Microsoft.ACE.OLEDB.12.0" for linked server "ExcelDataSource" reported and error. Access denied. Cannot obtain the required interface ("IID_IDBSchemaRowset") from OLE DB provider "Microsoft.ACE.OLEDB.12.0" for linked server "ExcelDataSource". (Microsoft SQL Server, Error: 7399)

- One of the solutions is to install the following driver for MS Office. By default, this driver is not installed. You will need to restart the machine after installing this driver.

- If that does not work, run the following commands. The AllowInProcess is used to run the process as a SQL Server Process and the DynamicParameter option is used to run parameterized queries.

USE[master] GO EXEC master.dbo.sp_MSset_oledb_prop N'Microsoft.ACE.OLEDB.12.0',N'AllowInProcess',1 GO EXEC master.dbo.sp_MSset_oledb_prop N'Microsoft.ACE.OLEDB.12.0',N'DynamicParameters',1 GO

- Also, make sure that the query is running under an account with access to the Excel file.

- Once the Linked Server is working, run the following query to create a SQL Server table named MylinkedExcel.

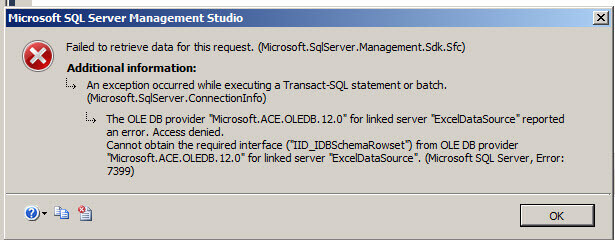

- If we measure the time, we will notice that it takes more than 7 minutes and 47 seconds!

- OK, now we know that using a Linked Server to import data from Excel is very slow. We are going to use the CSV we created above and then use the Bulk Insert command.

- To use the Bulk Insert command we will need to first create the table manually:

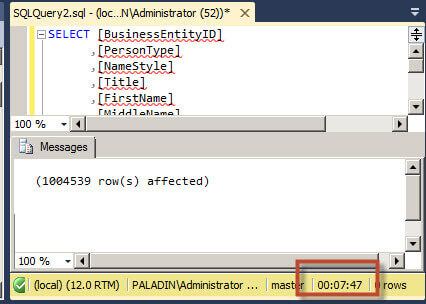

- This code will import the data from the CSV file to the table we just created.

- If we check the time, we will notice that it took 31 seconds to import the data.

EXEC sp_addlinkedserver @server = N'ExcelDataSource', @srvproduct=N'ExcelData', @provider=N'Microsoft.ACE.OLEDB.12.0', @datasrc=N'C:\scripts\Excelfile.xlsx', @provstr=N'EXCEL 12.0' ;

SELECT [BusinessEntityID] ,[PersonType] ,[NameStyle] ,[Title] ,[FirstName] ,[MiddleName] ,[LastName] INTO MylinkedExcel FROM [ExcelDataSource]...[Sheet1$]

CREATE TABLE [dbo].[excelcustomer2]( [BusinessEntityID] [varchar](50) NULL, [PersonType] [varchar](50) NULL, [NameStyle] [varchar](50) NULL, [Title] [varchar](50) NULL, [FirstName] [varchar](50) NULL, [MiddleName] [varchar](50) NULL, [LastName] [varchar](50) NULL, [Column 7] [varchar](50) NULL, [Column 8] [varchar](50) NULL, [Column 9] [varchar](50) NULL ) ON [PRIMARY] GO;

BULK INSERT excelcustomer2 FROM 'c:\scripts\excelfile.csv' WITH ( FIELDTERMINATOR = ',', ROWTERMINATOR = '\n' ) GO;

Conclusion

To summarize, SSIS has an Excel limitation of 65535 rows. We had to work with the flat file component and convert from Excel to a CSV file to import a million rows.

On the other hand, T-SQL had several problems to access the Linked Server file from Excel, but finally when it was working it took more than 7 minutes to import the data. We then tried again with the CSV file and used the Bulk Insert command to improve the performance.

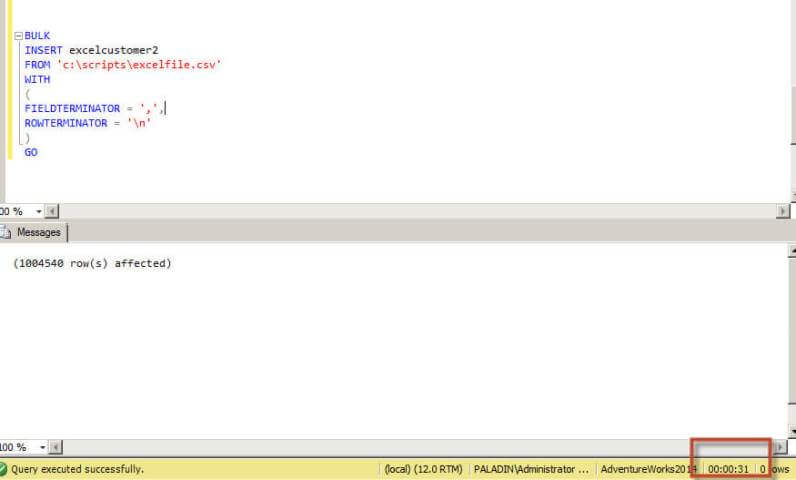

Who is the winner?....the table summarizes the results:

| Solution | Time |

|---|---|

| SSIS using the Excel component | 5 seconds, but it is limited to only 65535 rows |

| SSIS converting the Excel to CSV and using the flat file component | 16 seconds |

| Linked Server to Excel | 7 minutes, 47 seconds (but it worked) |

| The Bulk Insert statement converting the Excel file to a CSV file | 31 seconds |

As you can see, SSIS has very fast tools to import and export files. It also has the advantage that the destination table is created automatically while the Bulk Insert statement requires creating the table manually. In this example SSIS is the winner. Using SSIS can be hard at the beginning and you will find some limitations, but T-SQL has also limitations, so we recommend studying both options to find the best solution for your problem.

Next Steps

Here are several links that will be useful to you:

- Importing SQL Server Data Using SSIS - Which Option is Fastest.

- Using a SQL Server Linked Server to Query Excel Files

- SQL Server Integration Services Excel Tips

- Options to Improve SQL Server Bulk Load Performance

About the author

Daniel Calbimonte is a Microsoft SQL Server MVP, Microsoft Certified Trainer and 6-time Microsoft Certified IT Professional. Daniel started his career in 2001 and has worked with SQL Server 6.0 to 2022. Daniel is a DBA as well as specializes in Business Intelligence (SSIS, SSAS, SSRS) technologies.

Daniel Calbimonte is a Microsoft SQL Server MVP, Microsoft Certified Trainer and 6-time Microsoft Certified IT Professional. Daniel started his career in 2001 and has worked with SQL Server 6.0 to 2022. Daniel is a DBA as well as specializes in Business Intelligence (SSIS, SSAS, SSRS) technologies.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips