By: Siddharth Mehta | Comments (8) | Related: > SQL Server 2017

Problem

Portable Document Format (PDF) is one of the most prominent office document file formats apart from other formats like Word, Excel and PowerPoint and needs no introduction. Almost everyone who works with office documents would have worked with PDFs at least once. A very common usage pattern is that documents are generally authored in Microsoft Word, then at a certain stage they are converted into a PDF document and circulated / archived. This is done generally when these documents are no longer required to be edited frequently. Over a period of time, many organizations have accumulated a large repository of such documents.

These documents hold a large repository of data which can be very useful to different information processing applications like text mining, data archiving, data warehousing, etc. Usually content management systems hold PDF documents. When a need arises for performing some search or analytics on these documents, they often need to be processed by some data processing tools or technologies. This requires reading of such PDF documents and loading them into a database in an automated fashion.

In this tip, we will learn how to extract textual data from PDF documents and load the data into a SQL Server table without the use any external front-end or integration tools. Instead we will accomplish this just by using SQL Server 2017 and R.

Solution

R can be used to read PDF documents and extract the textual information, which can be loaded into SQL Server tables. SQL Server 2017 ships with an option to install and use the R language inside T-SQL to analyze data. We will use R with T-SQL for the above-mentioned purpose.

Before we start with the implementation, first let's briefly understand the exercise we will perform in this tip to demo the loading of data from PDF in SQL Server. We will be using a sample PDF file that would contain text as well as a graphic. This file will be stored on the same machine as the SQL Server instance. Generally, it is not advisable to have a file server and database server on the same disk subsystem. You can store the file on a different system then the database server, but ensure that the machine on which the SQL Server instance as well as R server is installed, has network connectivity and accessibility to the machine on which the file is stored. We will be reading the text from this file and loading the text into a SQL Server table. Follow the below steps to perform this exercise.

Download the sample PDF file from here. This file contains some text as well as a graphic. You can use any PDF files that you may have. This sample PDF looks as shown below.

We need to create a table in SQL Server in which we will load the data. The table need not be too complex. We just need a field in which we will load large textual values. So preferably we will have the datatype of this field to be varchar(max). Open a new query window in SSMS, point to the database of your choice, and create a new table using the code shown below.

create table test (id int identity, PDFText varchar(max))

It is assumed that you have installed and configured R on this instance of SQL Server. If not, you can follow the installation section of the R tutorial, and install or configure R on SQL Server.

To read the PDF files, we need to use a package named pdftools on the R installation. You can read the instructions from this link on how to install packages on a R server.

Read PDF File Using R and SQL Server

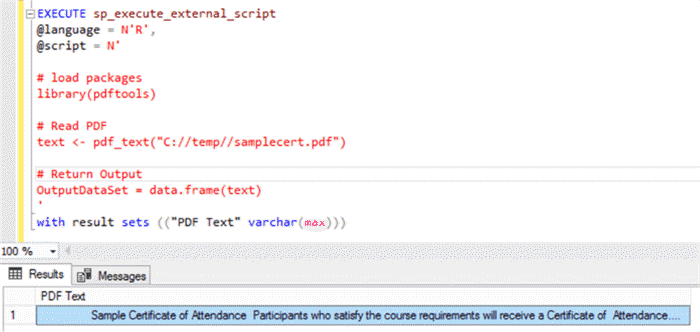

Once you have installed these packages, open a new query window and type the following code.

EXECUTE sp_execute_external_script

@language = N'R',

@script = N'

# load packages

library(pdftools)

# Read PDF

text <- pdf_text("C://temp//samplecert.pdf")

# Return Output

OutputDataSet = data.frame(text)

'

with result sets (("PDF Text" varchar(max)))

Let’s try to understand this code.

- sp_execute_external_script is the store procedure which external scripts can be executed in SQL Server.

- @language parameter signifies the script language being used, which is R in this case.

- @script parameter contains the actual R script which will be executed. In the script, the first part uses the library R function to load library from the package that we installed in the previous step. Then we are executing the pdf_text function of the pdftools library and passing the path of the PDF file that we wish to read. The output of this function is assigned to “text” variable. In the last step, we are converting this variable to a data frame which is the required format to emit any data output from R to SQL Server.

- with result sets clause defines the schema in which the output result will be received by SQL Server.

You can use this approach to read PDF files in bulk by wrapping this code inside a loop. Once you execute this code, the output would look as shown below.

When you expand the output field, you will find that the text in the graphic is not captured, which is expected. But the contents in text have been captured and returned successfully.

Read PDF File and Break Apart Lines Using R and SQL Server

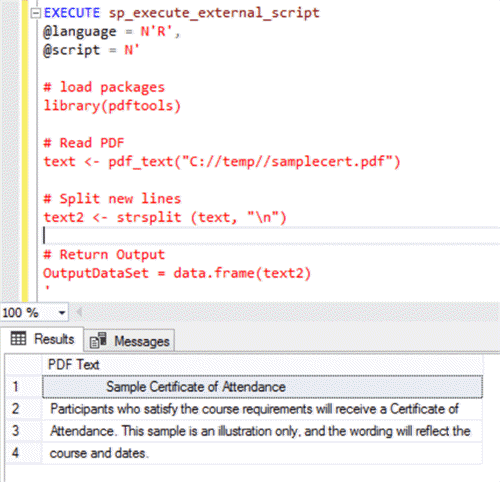

In the above code, the entire output is in a single line, which is desirable while loading data in a table where we may want to save the entire output from a given file in a single field of a single record. But there can be cases where we may want to preserve the format too. In this case, we can use the strsplit function with a new-line character to break down the extracted text in different lines and preserve the same order as it is in the original document.

Type and execute the following code:

EXECUTE sp_execute_external_script

@language = N'R',

@script = N'

# load packages

library(pdftools)

# Read PDF

text <- pdf_text("C://temp//samplecert.pdf")

# Split new lines

text2 <- strsplit (text, "\n")

# Return Output

OutputDataSet = data.frame(text2)

'

with result sets (("PDF Text" varchar(max)))

After you execute this code, the output should look as shown below:

Read PDF File and Load to a Table Using R and SQL Server

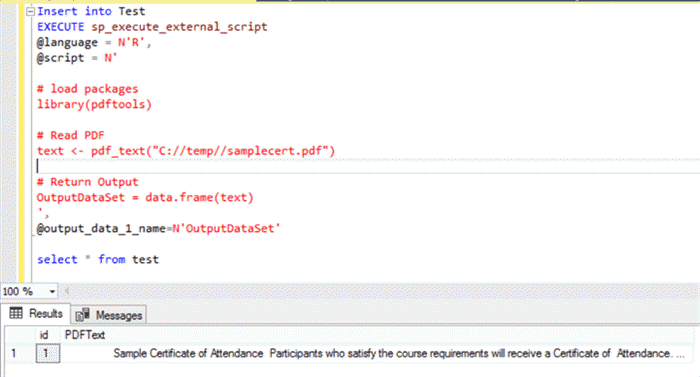

Now that we can extract the data from PDF, it’s now time to insert this data in the test table that we created earlier. This can be done with a simple insert command as shown below.

Insert into Test

EXECUTE sp_execute_external_script

@language = N'R',

@script = N'

# load packages

library(pdftools)

# Read PDF

text <- pdf_text("C://temp//samplecert.pdf")

# Return Output

OutputDataSet = data.frame(text)

',

@output_data_1_name=N'OutputDataSet'

select * from test

Executing this code would insert the extracted data from the PDF file into the table as shown below.

In this way, using SQL Server 2017 and R, you can perform a bulk load of data from PDF files into SQL Server. Once this data repository is created, you can perform free text search and text mining related processing tasks on this data. The beauty of this approach is that we can load data from a PDF file to a SQL Server table with just a couple of lines of code, without the need of using any external data extraction and integration tools.

Next Steps

- Try bulk testing your PDF files containing diverse types of textual data, wrap the code in a Stored Procedure or Function, and test it on a variety of volumes.

About the author

Siddharth Mehta is an Associate Manager with Accenture in the Avanade Division focusing on Business Intelligence.

Siddharth Mehta is an Associate Manager with Accenture in the Avanade Division focusing on Business Intelligence.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips