Problem

We were recently introduced to a data storage approach where we never remove or update data, but flag data that is no longer true or active along with sometimes replacing a value with a new value (a technique that is sometimes referred to as soft deletes and updates). While this may help some of our applications, since we have a tendency to reverse a data change and the time to restore is costly or causes other data dependencies to fail, what are some considerations of using this approach?

Solution

This can be a useful solution where we approach storing data by disabling the delete and update operation on the back-end. For a delete, we treat the record with an update to a flag column as false (such as IsActive, IsExists, or IsCurrent another derivative). For an update, we add a new record with our flag column as true, while the old record is labeled as false. For explanation purposes, we’ll first look at what soft transactions are with an example where we perform them on a new table derived from an existing table and look at situations where we may want to consider them or avoid them completely.

What Is A Soft Transaction?

In our first step, we will take a table with a primary key and create a copy of this table with either all of the columns or a selection of columns. I suggest testing this by copying an existing table to test how soft transactions will function rather than adding a column to an existing table and assuming that this will function.

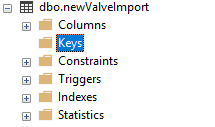

In the below example, we create a full copy of an existing table by using the SELECT * INTO approach, which will copy a full table into a new table. From there, we generate the create table script to verify that no primary key exists on the new table (we could also check through the interface as shown in the below image). After that’s verified, we add a new column with a default bit value of 1 to the table called true, which reflects whether the current record in the database is the true value.

Why do we want the default value to be 1 as opposed to 0? Because every new value needs to be true when we perform a soft transaction – we’re never removing records: in an update, we’re adding a new record (true) and updating the old record to false and in a delete transaction we’re updating an existing record to false. In addition, we do not allow for NULL values on this column, as a row is either true or false.

In review, our steps were:

- Create a new table from an existing table, where the existing table has a primary key using the SELECT * INTO syntax (for testing to validate our design will function correctly)

- Verify the new table has no primary key

- Add a new bit column called True (or another derivative) with a default value of 1 and make sure it’s not NULL.

/*

-- Some data for testing in this example

CREATE TABLE [dbo].[ValveImport](

[ValveOutput] [int] NULL,

[ValveInput] [int] NULL,

[MeasurementTime] [datetime] NULL,

[ImportDate] [datetime] DEFAULT GETDATE(),

[ValveId] [varchar](12) PRIMARY KEY,

[ImportFlag] [int] NOT NULL

) ON [PRIMARY]

GO

INSERT INTO ValveImport (ValveOutput,ValveInput,MeasurementTime,ValveId,ImportFlag)

VALUES (91,100,'2017-01-01 01:35:28.277','00a6f80450',0,1)

, (91,100,'2017-01-01 01:35:28.277','02037071a7',0,1)

, (91,100,'2017-01-01 01:35:28.277','02b33372ec',0,1)

, (91,100,'2017-01-01 01:35:28.277','04e42273c2',0,1)

*/

---- Create new table with primary key from existing table:

SELECT *

INTO newValveImport

FROM ValveImport

/*

---- (Do not run - the table is created from above). Notice the new table (scripted) has no primary key :

CREATE TABLE [dbo].[newValveImport](

[ValveOutput] [int] NULL,

[ValveInput] [int] NULL,

[MeasurementTime] [datetime] NULL,

[ImportDate] [datetime] DEFAULT GETDATE(), ---- updated to match original table

[ValveId] [varchar](12) NOT NULL,

[ImportFlag] [int] NOT NULL

) ON [PRIMARY]

GO

*/

---- Add new column

ALTER TABLE newValveImport ADD True BIT DEFAULT (1) NOT NULL

SELECT *

FROM newValveImport

Now that we have our soft transaction table, we’ll demonstrate how we would run transactions against it. The nature of soft transaction eliminates the DELETE operation – we will no longer run a DELETE command, as a soft delete only updates a record to True equaling 0 (false). A soft UPDATE adds a new record (true) and changes the previous existing record to false. Therefore, we’ve reduced our write operations to INSERTs and UPDATES, with the latter being more nuanced than a straight update.

When we add a new record with a new primary key value (ValveId in this example), we can simply run an INSERT statement, as our table will have no ValveIds with that new value. If a ValveId exists already when we want to insert a new record, we must first set the existing value to false (True = 0), then insert the new record. This means that we run an update and an insert for an soft update transaction. Because we cannot allow one part of this to succeed while the other part fails (such as the update part succeeding, while the insert fails), we wrap this in 1 transaction with a begin transaction.

The below query shows our two scenarios: in situation 1 the ValveId doesn’t exist, so we would run an INSERT after the check. In situation 2, we would first need to update the existing record before inserting the new record. It should be of note that we should only have one true distinct ValveId at a time – a duplicate in this table would be considered a ValveId where two or more of its records have True values of 1.

---- Situation 1: Returns true because the valveid exists

DECLARE @valveid VARCHAR(12) = 'newrecord111'

SELECT ValveId FROM newValveImport WHERE ValveId = @valveid AND True = 1

---- Situation 2: Returns false because the record exists

DECLARE @valveid VARCHAR(12) = '00a6f80450'

SELECT ValveId FROM newValveImport WHERE ValveId = @valveid AND True = 1

In our next step, we handle the logic for these two situations:

---- We will run this twice:

DECLARE @output INT = 90, @input INT = 100

DECLARE @valveid VARCHAR(12) = 'newrecord111'

BEGIN TRAN

IF EXISTS (SELECT ValveId FROM newValveImport WHERE ValveId = @valveid AND True = 1)

BEGIN

UPDATE newValveImport

SET True = 0

WHERE ValveId = @valveid

END

INSERT INTO newValveImport (ValveId, ValveOutput, ValveInput, MeasurementTime, ImportDate, ImportFlag)

---- The output, input, time and import values are contrived for example purposes

VALUES (@valveid, @output, @input, GETDATE(),GETDATE(), 0)

COMMIT TRAN

SELECT *

FROM newValveImport

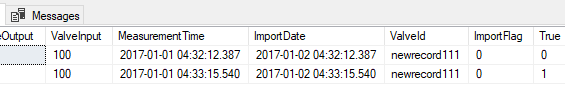

WHERE ValveId = 'newrecord111'

Our result shows two records with a ValveId of newrecord111, and only one record is True while the other is false. Every time we add a new record, our logic checks if a True value of the ValveId exists, updates True to 0 (false) if a record exists, and adds the record. If we call the transaction again, we’ll see 3 records with the same ValveId, 2 which have True values of 0 (false) and the latest record with the same ValveId with a True value of 1.

Should We Use Soft Transactions?

Now that we see how soft transactions work and the required logic we’d need, what should we consider if we want to use them?

The strength of soft transactions is that we never remove a record or update a record without a new record being created, which means we have an entire history of changes. Without a delete, we can optimize for two write transactions – insert and update. The drawbacks are the same – since we never remove a record, our tables can become huge. In addition, we structure our primary key differently, as any ID column may have duplicates in the table because the ID is no longer the primary key. The primary key is the ID and automatic timestamp column when a new record is added to the table, whereas the previous table only had the ID as the primary key because we would directly update or remove a record by the ID.

Situations where soft transactions work well.

If we need to quickly reverse a change or restoring data could be too costly and the data set is small, soft transactions work the best. This includes the fact that we’d still have the record of the original data and the new data, so that if we reverse to the original data, we can still see why the new data value failed – since it would only be set to a True value of 0. Think of an example where we have a configuration table that many of our applications use. We could remove old values, or update existing values, but if we ever need to revert, this may cost us.

Soft transactions work very well with configuration tables. Some historical data may also be useful, provided that the historical values do not change that often and our tendency is to get newer data (in other words, 99% of writes are inserts, 1% of writes would be updates or removals). Finally, if initially scaled correctly, soft transactions can be helpful in situations where updating data or removing data carry high costs. This cost still has to be balanced with a possible size cost though. Before we consider using soft transactions, we want to consider the data growth in time in the database. Reindexing, CHECKDB, updating statistics, etc. will all be impacted if we have a table that grows because of our use of them.

One both false and true popular statement about soft transactions is that an environment will need fewer backups. If the soft transactions are managed in a robust high availability strategy (multiple copies), it may be true that we don’t have to run a backup as often (assuming that the size of the backup is irrelevant), as we have the entire history of our data across multiple nodes. However, we still need to be frequently running integrity checks against the data and we will still need backups. Regardless of whether we have the full history, drive and memory corruption can still happen and taking a risk of never backing up a database could be costly. Consider if CHECKDB failed? What would we restore to without a backup? The frequency of backing up the data heavily depends on the high availability strategy: I would not feel comfortable with infrequent backups if I only had one instance of the data, even with the full history.

Situations where we may want to look at alternatives, or avoid using them.

If we have a large data set and we see a significant number of deletes or updates – for instance, rows that are constantly changing values based on an ID. In our soft transaction scenario, these wouldn’t be updated directly, as we’d set their True set to 0 and add a new record. Suppose that we get 1 billion updates a day – that’s 1 billion new records and 1 billion updates on the old record if we use soft deletes. We also have to consider that every query we run must also now include the True = 1 in the WHERE clause and we must account for appropriately indexing our table to reflect this column. With billions of new records, this cost increases and if it doesn’t offset the benefits that we get from soft transactions, it’s not worth it. If we initially scaled our table by date, an id or some field that allowed us to partition the 1 billion new records across 100 or more tables, we may be in a better situation, but we still have to think about adding addition operations to our where clauses and how we’d have to further reduce the size of even the smaller set of tables in the long run.

Old data points can be archived, such as old records where the True column is 0, meaning it’s not active, but this will introduce limits to our ability to roll back. We may experience situations where a record from three years ago should be true and if we archive records over a year or more, this may not be present. This means that archiving old records should be considered carefully, as an old record may need to be the revert point.

Finally, we should also consider the below questions as well in our environment as any of these may be drawback points:

- What will we consider redundant data? If we have backups and log backups as well, along with the soft transactions for every record, does this create too much redundancy? Note that this doesn’t apply to smaller tables, like lookup or configuration tables, where a reversion may need to happen in seconds, but also means the cost of redundancy is low.

- For larger tables, what is our growth in the past month? What is our growth in the past year? What is our expected growth?

- How will we enforce soft transactions in our environment for developers? Should we revoke all access, except execute on stored procedures which run these transactions on the tables for soft transactions?

- What performance configurations do we want to use, from indexing to maintenance jobs to design, to ensure transactions occur quickly, especially with large tables?

- In addition to situations where we may not want to consider this design, we should also consider how we would answer these questions for our environment in situations where we may be considering using soft transactions.

Next Steps

- For a starting use case, I suggest testing configuration tables with soft transactions if developers are completely new to them.

- What data would be very costly to reverse, if you needed to in an emergency? How do soft transactions here compare with restores or archiving?

- To avoid duplicates where we have two same record IDs that are both active, check out this tip on removing duplicates. Remember that a duplicate involves two or more IDs that are both (or all) set to True, when only 1 should be. In the example where we run a new transaction, note how we do this within a BEGIN TRAN, so that if the update fails, the insert will as well. We never want an update to pass, with the insert failing.

- Smaller data environments will experience less issues with soft deletes, provided that storage and performance are too troublesome for larger environments.

Tim consults for FinTek Development and teaches the course Automating ETL on Udemy. He’s worked with technology since high school, helping his school win its first TCEA award and continues to work in automation, data architecture, back-end development, and smart contract architecture. Tim enjoys testing new technologies early in the diffusion of innovation curve and was an early adopter of NoSQL and smart contract development. He has a blog at http://www.fintekdev.com/ and helps contribute to local technical and financial events in Texas.