By: Koen Verbeeck | Comments | Related: > Azure Integration Services

Problem

I have created an SSIS project in Visual Studio. In this project, I have a master package starting multiple child packages. These child packages run in parallel. When executing my project on a local server, everything runs fine and performance is optimal. However, after migrating the project to the Azure cloud using the Azure-SSIS runtime, performance has degraded. What can be the cause of this issue?

Solution

As explained in the tips Configure an Azure SQL Server Integration Services Integration Runtime and Executing Integration Services Packages in the Azure-SSIS Integration Runtime you can easily lift and shift your existing SSIS projects to the Azure-SSIS runtime with minimal or no changes. However, to make sure performance stays optimal, some changes do have to be made to make sure packages keep running in parallel. In this tip, we’ll share a solution on how to ensure your packages use the maximum of the available resources on the Azure-SSIS cluster. If you haven’t already read the two mentioned tips, please do so as this tip will built upon the knowledge shared in those tips.

Executing Packages in Parallel in the Azure-SSIS Runtime

Test Set-up

First, we need a package performing a task that takes a little while so we can monitor the process. We’re going to use a query to generate 5 million rows and insert them into a table in an Azure SQL database. We’re using the SSIS data flow, to make sure data is transferred over the network to the cluster in the Azure-SSIS runtime where it will consume CPU and memory. The query uses a tally table to generate the rows and is based on the article Creative Solutions by Using a Number Table by Greg Larsen.

WITH

L0 AS(SELECT 1 AS C UNION ALL SELECT 1 AS O), -- 2 rows

L1 AS(SELECT 1 AS C FROM L0 AS A CROSS JOIN L0 AS B), -- 4 rows

L2 AS(SELECT 1 AS C FROM L1 AS A CROSS JOIN L1 AS B), -- 16 rows

L3 AS(SELECT 1 AS C FROM L2 AS A CROSS JOIN L2 AS B), -- 256 rows

L4 AS(SELECT 1 AS C FROM L3 AS A CROSS JOIN L3 AS B), -- 65,536 rows

L5 AS(SELECT 1 AS C FROM L4 AS A CROSS JOIN L4 AS B), -- 4,294,967,296 rows

Nums AS(SELECT ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS N FROM L5)

SELECT TOP 5000000 N AS N1, N AS N2 FROM Nums;

This query generates two integer columns, with values ranging from 1 to 5 million. That’s 8 bytes per row, or 40 Megabytes in total.

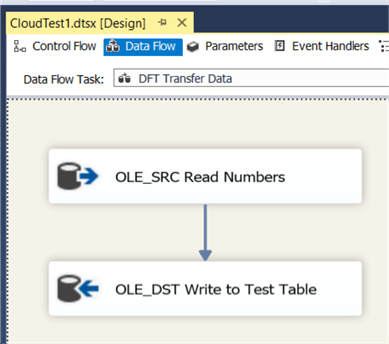

We’re going to run three identical packages in parallel. The data flow looks like this:

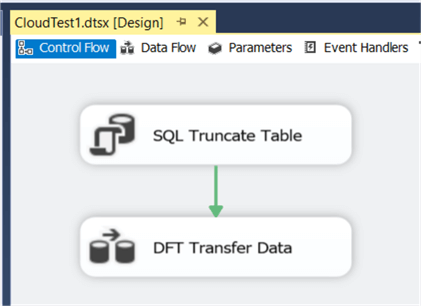

The control flow simply truncates the destination table and loads the generated data into the database.

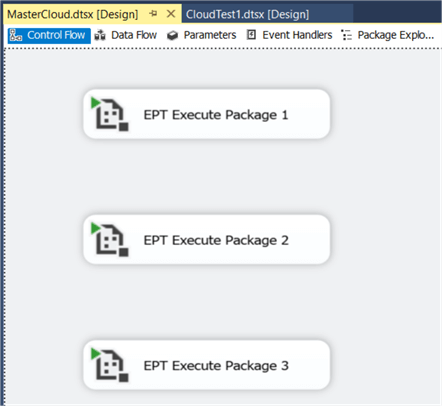

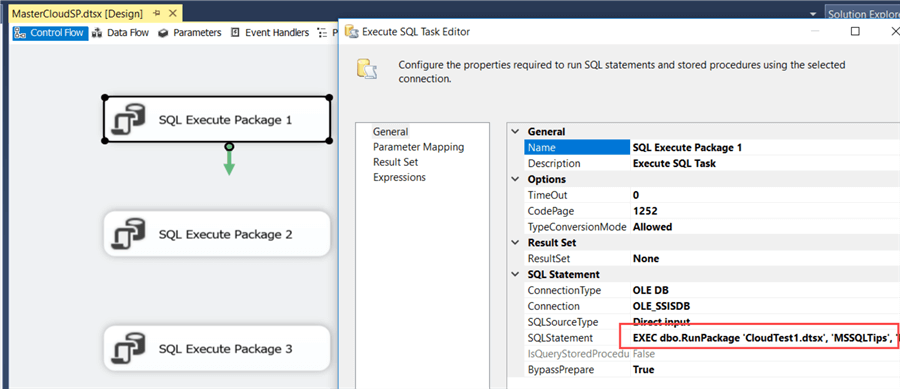

One master package kicks off the three packages in parallel using Execute Package Tasks.

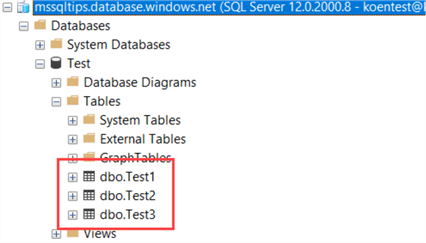

Each package writes to its own destination table, so there is no concurrency and locking on a single table.

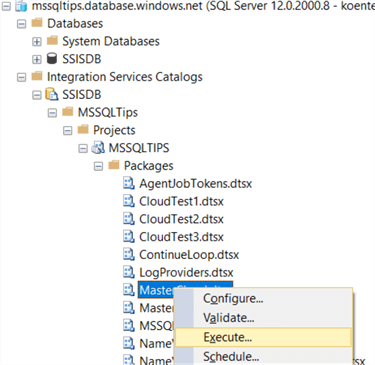

After we’ve deployed the SSIS project, we can start the master package in the SSIS catalog.

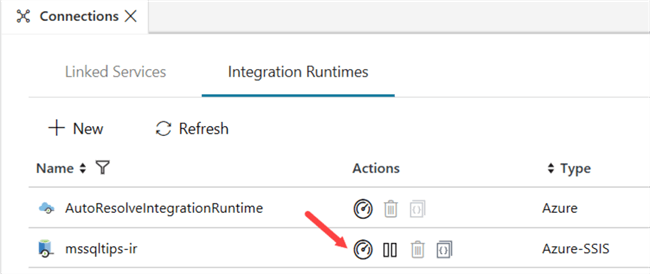

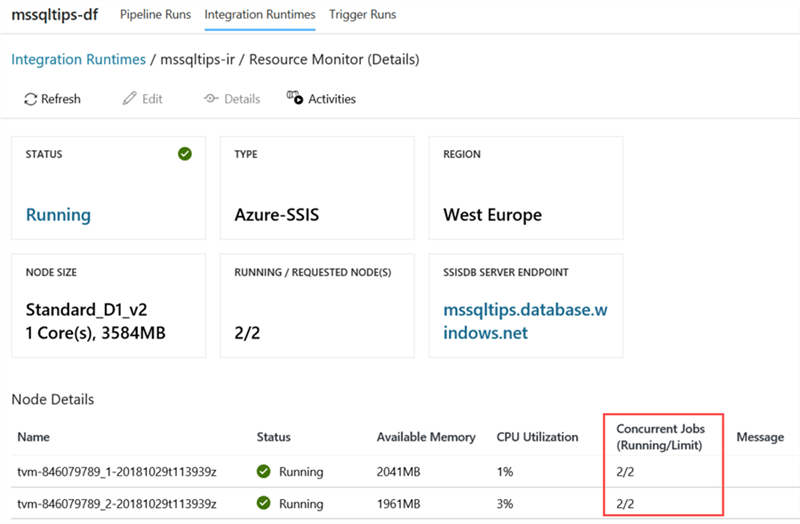

In Azure Data Factory, we can monitor the IR environment to see how many resources are consumed and how many packages are running.

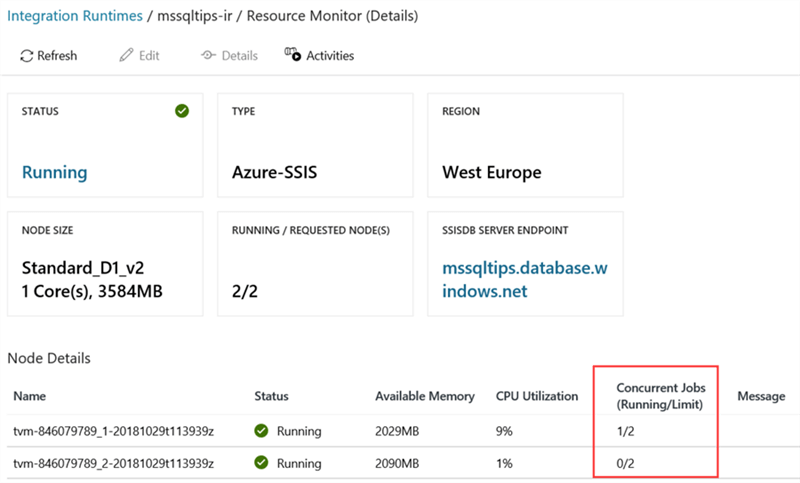

At the resource monitor page, we can see only one job is running, while there are actually 4 packages running (the master package and the three child packages).

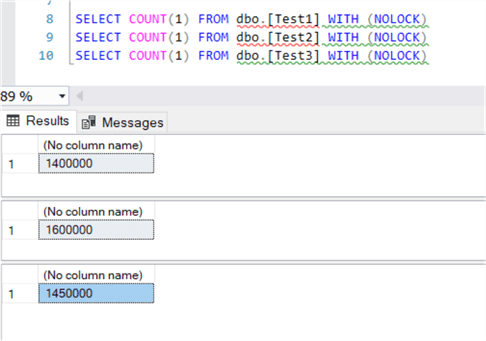

We can verify if all packages are running at the same time by doing a count on the destination tables with the NOLOCK hint.

The problem is all the child packages are being executed inside the execution of the master package. In other words, they are being treated as part of the master package, so their executions are not spread out over the cluster.

Solution to Solve Problem

Luckily, this can be fixed. Instead of using Execute Package Tasks, we using the SSIS catalog stored procedures to start the child packages. The following stored procedure can be used to simplify the T-SQL needed to create and start an execution:

CREATE PROC [dbo].[RunPackage]

(@PackageName VARCHAR(50)

,@FolderName VARCHAR(50)

,@ProjectName VARCHAR(50)

,@Synchronized BIT = 1 -- run synchronously by default

)

AS

BEGIN

DECLARE @execution_id BIGINT;

EXEC [SSISDB].[catalog].[create_execution]

@package_name = @PackageName

,@execution_id = @execution_id OUTPUT

,@folder_name = @FolderName

,@project_name = @ProjectName

,@use32bitruntime = False

,@reference_id = NULL;

--SELECT @execution_id;

EXEC [SSISDB].[catalog].[set_execution_parameter_value]

@execution_id

,@object_type = 50

,@parameter_name = N'SYNCHRONIZED'

,@parameter_value = @Synchronized;

EXEC [SSISDB].[catalog].[start_execution] @execution_id;

END

This stored procedure creates an SSIS execution, sets the Synchronized parameter and starts the execution. By default, the Synchronized parameter is set to true, which means the stored procedure will wait till the SSIS package has finished executing. A sample T-SQL script to call this stored procedure would be:

EXEC dbo.RunPackage 'CloudTest1.dtsx', 'MSSQLTips', 'MSSQLTIPS', 1;

Since Azure SQL databases don’t allow cross-database queries, this stored procedure must be created inside the SSISDB database.

Now we need to replace the Execute Package Tasks with Execute SQL Tasks. Each of those tasks will call the stored procedure to start a child package.

Let’s deploy this master package to the catalog and execute it to monitor its behavior. When running this master package, the resource monitor now shows that every node of the IR environment is executing jobs:

Conclusion

By abandoning Execute Package Tasks and rather using the SSIS catalog stored procedures, we can optimally spread out the execution of packages over the Azure-SSIS integration runtime. This tip provides you with an easy to use stored procedure that creates and starts an SSIS package execution. Keep in mind this stored proc has to be deployed in the SSISDB database.

Next Steps

- To set up your Azure-SSIS IR environment, check out the following tips:

- If you want to try it out yourself, you can get a free Azure trial here.

- You can find more Azure tips in this overview.

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips