By: Aleksejs Kozmins | Comments | Related: More > Integration Services Development

Problem

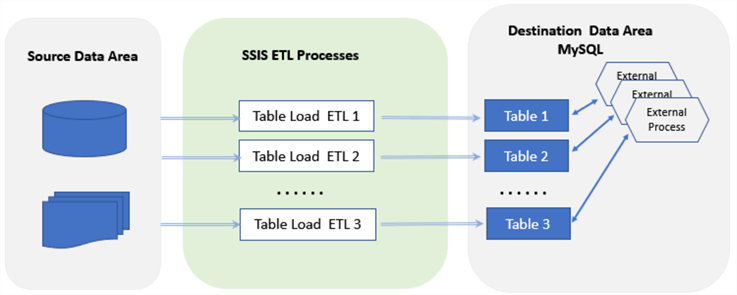

I participated in a project to test a new BI solution for media data. The ETL process included a remote MySQL database as a destination and the ETL processes can start many times per day along with external tasks at the destination performing additional processing on the MySQL data (see Fig.1) independently from the ETL load process.

Figure 1 - The SSIS based ETL with destination tables affected by external processes.

The destination database contained around 10 tables to load and the data in these tables could be processed by external tasks at unpredictable times. According to the project requirements, the table loading process has to pause for the run period of this external processing and should continue again when it finishes.

The question is, how can the SSIS data flow task be paused for a period of time if SSIS does not have a component providing this functionality? The answer is given below.

Solution

The described sample SSIS package illustrates the essence of the approach given in simplified way, using a local database without any remote connection.

The approach is based on control signals the data flow task should receive from the outside. The signals are as follows:

- To freeze data transfer and start waiting for another signal

- To stop waiting and continue data transfer

SSIS delay component controlled by multicast task

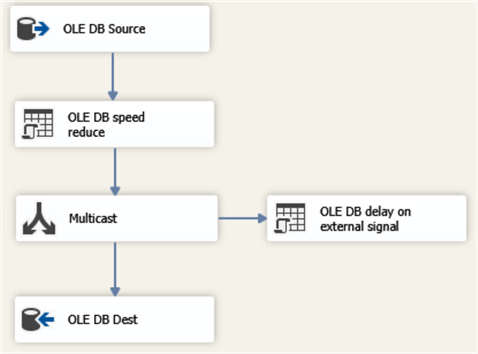

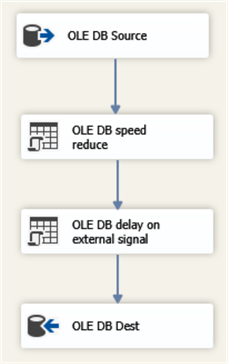

The structure of the SSIS package Data Flow is shown below.

Figure 2 - The data flow task with the delay component outside of the Data Path.

The first OLEDB command is used to reduce the data transfer speed just for illustrative purposes:

WAITFOR DELAY '00:00:00.001'

The component 'OLEDB delay on external signal' is controlled by a Multicast task and uses the following script:

IF (SELECT TOP 1 StopInd FROM dbo.ETLcontrolDelay WHERE ID=1) =1

BEGIN

-- THE SIGNAL TO FREEZE DATA FLOW RECEIVED

-- FREEZE TIMESTAMP LOGGING

UPDATE dbo.ETLcontrolDelay SET StopTime =getdate() WHERE ID=1

-- DELAY FUNCTIONALITY

WHILE (SELECT TOP 1 StopInd FROM dbo.ETLcontrolDelay WHERE ID=1) =1

BEGIN

-- AWAITING FOR THE SIGNAL TO CONINUE

WAITFOR DELAY '00:00:01'

END

-- CONTINUATION TIMESTAMP LOGGING

UPDATE dbo.ETLcontrolDelay SET ContTime =getdate() WHERE ID=1

END

Initially, the control table dbo.ETLcontrolDelay contains StopInd = 0. When the external process starts, this field gets a value 1 that turns on the delay functionality (the WHILE cycle). When the external process is over, the data flow receives StopInd = 0 and continues working.

The SSIS package

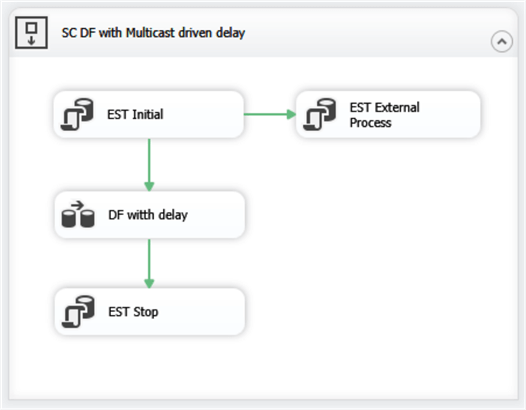

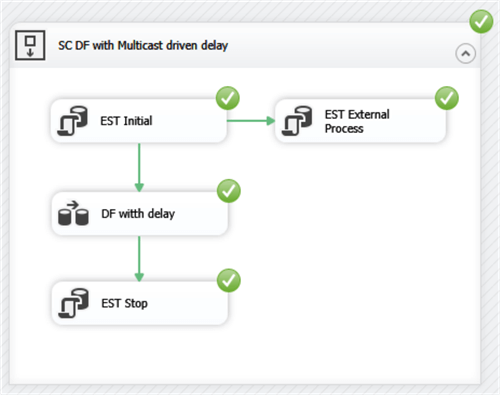

Below is the structure of the SSIS package Control Flow.

Figure 3 - The structure of the SSIS package, emulating interaction with the external process.

The 'EST Initial' component truncates the destination table and sets StopInd = 0 in the control table. Next, it starts the data flow task and the component 'EST External Process' that uses the following script:

WAITFOR DELAY '00:00:05'; -- INITIAL DELAY -- A SIGNAL TO FREEZE DATA FLOW TASK UPDATE dbo.ETLcontrolDelay SET StopInd =1 WHERE ID =1; -- THE EXTERNAL PROCESS STARTED WAITFOR DELAY '00:00:30' ; -- THE EXTERNAL PROCESS COMPLETED -- A SIGNAL TO CONTINUE DATA FLOW TASK UPDATE dbo.ETLcontrolDelay SET StopInd =0 WHERE ID =1;

This is just an emulation of an external process. After a certain delay it sets StopInd = 1 (the external process started) that causes the data flow task to freeze. After 30 seconds it sets StopInd = 0 that is the signal for data flow to continue working.

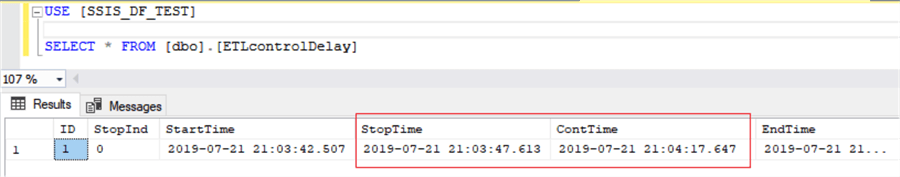

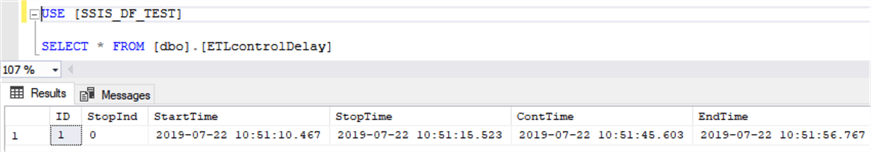

The ETL result is shown in the image below (see Fig.4).

Figure 4 - The ETL result with dataflow freeze and continuation timestamps (the fields StopTime and ContTime).

Here you can see that the dataflow freezes for a period of 30 seconds - the same that is used in the external process emulation.

Delay SSIS component within data path

The same effect can be achieved by including the delay component 'OLEDB delay on external signal' within the Data Path:

Figure 5 - The data flow task with the delay component included into the Data Path.

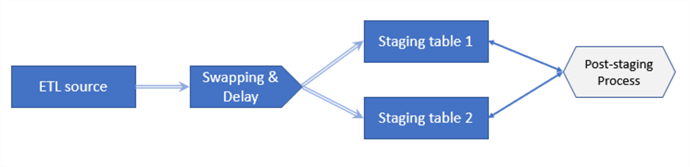

Both approaches provide the same functionality from the point of view of the delay, but there is one significant difference. The delay component within the Data Path can process both the external signals and the incoming source data. The advantage of this combined approach can be illustrated by the following diagram (see Figure 6).

Figure 6 - The combined ETL with delay and swapping out functionality.

It is a bit more complicated than the example given in the introductory part. According to the requirements, the source data should be transferred to the staging layer into one of two staging tables depending on which of them is available, i.e. non-locked. A staging table may be locked by the post-staging processing that can start occasionally during the ETL run. In this case, in order not to interrupt the ETL process, the data flow swaps out the data destination to another staging table. Meanwhile, the ETL process is still running, but the post-staging locks this table. In this case, the data flow should check if another staging table is available. If yes, it should swap out data destination to this table, if not, it should switch on the ETL delay process to wait until either of the staging tables are available. An explanation of how the data flow can swap out the destination points is given in my previous tip.

Conclusion

The data flow delay functionality can be used in different situations that may occur in a data integration process. For example, the data flow speed may be too low because of the network overload. When it is detected, a short pause of the data transfer may help the situation.

Another example is when a destination table should take data from two or more mutually independent SSIS tasks. The tasks can work in overlap and the requirement is not to mix the data going to the table from these data flows. If one of the processes captures the table for loading, the other(s) should wait for its completion.

Setup Test Environment

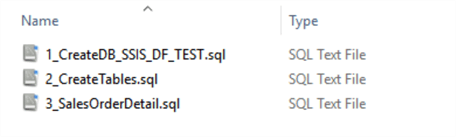

To reproduce what is given in the article download ths scripts and sample project and perform the following steps.

- Create and populate the training database with the SQL scripts given in CreateTrainingDB.zip.

- Run the SQL-scripts following the numbers in the file names.

The created database should contain the following tables:

- dbo.ETLcontrolDelay

- dbo.SalesOrderDetail

- dbo.SalesOrderDetailStg

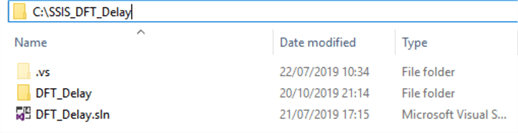

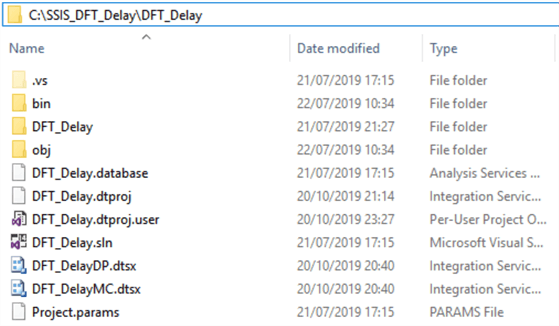

Create the folder C:\ SSIS_DFT_Delay and unzip SSIS_DFT_Delay.zipto this folder (see Fig.7).

Figure 7 - The unzipped ETL components.

- Open Visual Studio, find and open C:\SSIS_DFT_Delay\SSIS_DFT_Delay.sln.

- Expand the solution view, find the packages DF_DelayMC.dtsx that includes Multicast delay control.

- Execute the package. The ETL result should look as given below:

Figure 8 - The executed package and the final look of the data in the log and control table.

The same result is reached after the execution of another package DF_DelayDP.dtsx having a delay component within the Data Path of the data flow.

Next Steps

- Download the sample SSIS project and database scripts.

- The approach described in this tip can be successfully applied to coordinate independently working integration processes.

- Watch for future tips on this subject.

About the author

Aleksejs Kozmins is a Data Warehousing and Business Intelligence professional, focused on efficient SQL Server Integration Services processes.

Aleksejs Kozmins is a Data Warehousing and Business Intelligence professional, focused on efficient SQL Server Integration Services processes.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips