By: Haroon Ashraf | Comments (6) | Related: 1 | 2 | More > Integration Services Development

Problem

As a SQL Server Business Intelligence Developer I would like to implement and investigate error handling mechanism by manually debugging an SQL Server Integration Services (SSIS) package error to find the cause before deciding to keep or discard the error rows.

Solution

We can implement an error handling strategy by redirecting the error output to store the inconsistent data for further investigation.

We can then debug an SSIS Package manually by working on a copy of its Data Flow Task while keeping the original Data Flow Task intact at the same time.

The difference between the original and copied version is that one handles or redirects the error while other throws it.

Problem Breakdown

Let us simplify the problem scenario by breaking it into the following parts:

- The data needs to be transferred from source to destination

- The data flow must not terminate even if an inconsistent record is received

- The inconsistent data must be stored for later investigation

- The inconsistent data must be justified before it is discarded by finding the reason of its being rejected

Solution Breakdown

We are proposing the solution of above problems as follows:

- An SSIS Package can be used to copy data from source to destination

- Redirecting error output can help us to keep the data transfer running even if an inconsistent or invalid record is received

- Storing the error output to another storage such as table or file can help us to investigate it later

- Replicating the error by removing the error output helps us to understand the reason behind the error

The first part of this tip is focused on creating an SSIS Package to copy the data from source to destination along with creating an error handling mechanism to store the error and to ensure that the process does not terminate even if an invalid record is received.

About the Problem Scenario

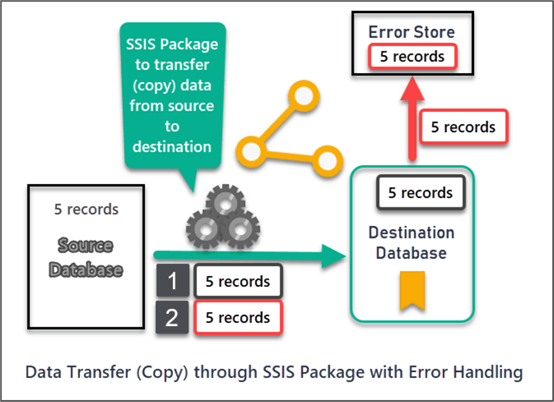

Please consider a data transfer (copy) scenario where an SSIS Package has been designed to copy the data from source to destination.

Let us discuss the SSIS Package and the related things required to be setup for the problem(s) to exist and the solution(s) to work.

The Role of Data Flow Task

The data between source and destination is copied by the help of a Data Flow Task in the SSIS Package.

Error Handling Mechanism

The Data Flow Task offers an error handling mechanism by redirecting errors to a predetermined output so that the data transfer process continues to work even after encountering an error for it to be consistently doing its job.

The philosophy behind error handling is to catch the invalid rows to be investigated later on while making sure that general data transfer does not fail in the middle of the process because of one or more invalid rows.

This does not make sense when we have a handful of records, but in real world scenarios millions of rows are getting transferred (copied) from source to destination and then the importance of error handling mechanism becomes evident.

Setup Source Database (WatchesV5)

Please create a sample database called WatchesV5 to represent source by running the following T-SQL script:

-- (1) Create WatchesV5 database

CREATE DATABASE WatchesV5;

GO

-- (2) Connect to WatchesV5 database

USE WatchesV5

-- (3) Creating Watch table

CREATE TABLE [dbo].[Watch]

(

[WatchId] INT NOT NULL IDENTITY(1,1),

[WatchType] VARCHAR(40),

[Brand] VARCHAR(40),

[Colour] VARCHAR(20),

[Price] DECIMAL(10,2)

CONSTRAINT [PK_WatchType] PRIMARY KEY (WatchId),

);

-- (4) Populating Watch table

SET IDENTITY_INSERT dbo.Watch ON

INSERT INTO [dbo].[Watch] ([WatchId], [WatchType], [Brand], [Colour], [Price]) VALUES (1, N'Digital', N'Timex Ironman ', N'Black', CAST(150.00 AS Decimal(10, 2)))

INSERT INTO [dbo].[Watch] ([WatchId], [WatchType], [Brand], [Colour], [Price]) VALUES (2, N'Analogue', N'Casio G-Shock', N'Blue', CAST(200.00 AS Decimal(10, 2)))

INSERT INTO [dbo].[Watch] ([WatchId], [WatchType], [Brand], [Colour], [Price]) VALUES (3, N'Smart', N'Garmin Smartwatch', N'Yellow', CAST(300.00 AS Decimal(10, 2)))

INSERT INTO [dbo].[Watch] ([WatchId], [WatchType], [Brand], [Colour], [Price]) VALUES (4, N'Sports', N'Timex Expedition', N'Black', CAST(150.00 AS Decimal(10, 2)))

INSERT INTO [dbo].[Watch] ([WatchId], [WatchType], [Brand], [Colour], [Price]) VALUES (5, N'Digital', N'Seiko Superrunner', N'Brown', CAST(185.00 AS Decimal(10, 2)))

SET IDENTITY_INSERT dbo.Watch OFF

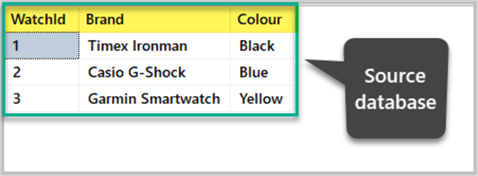

Quick View Source Database

Check the newly created sample source database as follows:

-- View source database SELECT TOP 3 w.WatchId,w.Brand,w.Colour FROM dbo.Watch w

The output is shown below:

Setup Destination Database (WachesV5DW)

We are now setting up the destination database running the following T-SQL script against master database:

-- (1) Create WatchesV5DW database

Create DATABASE WatchesV5DW;

GO

-- (2) Connect to WatchesV5DW database

USE WatchesV5DW;

GO

-- (3) Creating Watch table in Staging environment

CREATE TABLE [dbo].[Watch] (

[WatchId] INT NOT NULL,

[WatchType] VARCHAR (40) NULL,

[Brand] VARCHAR (40) NULL,

[Colour] VARCHAR (20) NULL,

[Price] DECIMAL (10, 2) NULL,

CONSTRAINT [PK_Watch] PRIMARY KEY ([WatchId])

);

The destination database is empty because we are going to populate the database by copying the data through SSIS Package.

Setup SSIS Package for Data Transfer Task

We have to create an Integration Services Project in Visual Studio to setup a data copy task from source to destination.

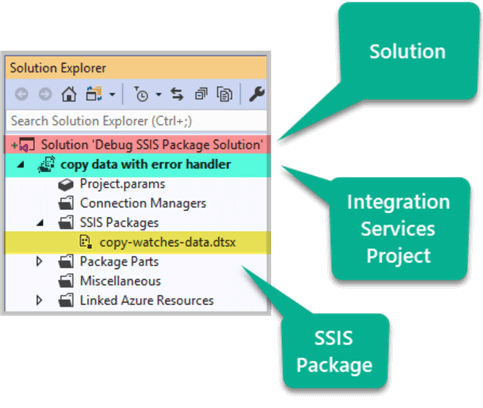

Create Integration Services Project and Package

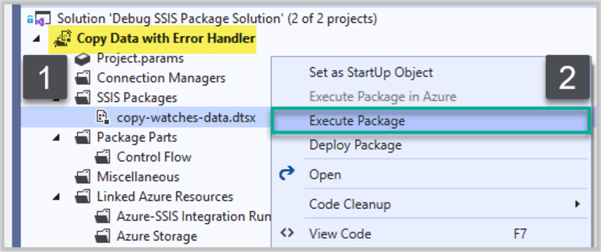

Create a new Integration Services Project called copy data with error handler under a new solution Debug SSIS Package Solution in Visual Studio.

Name the SSIS Package as copy-watches-data as follows:

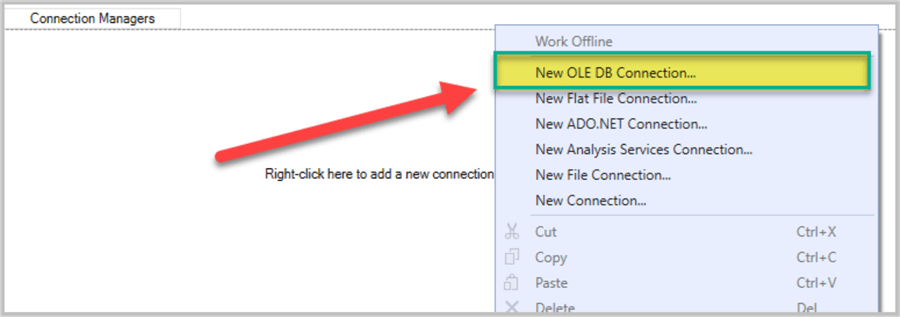

Create Source and Destination Connections

Right click anywhere inside the Connection Managers area and Click New OLE DB Connection… as shown below:

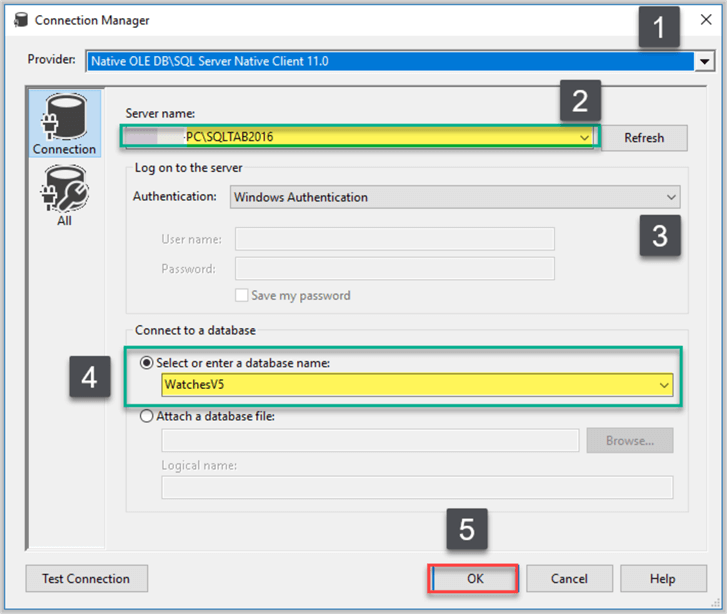

Create a new connection manager pointing to the source database after inputting the provider, server and database information and clicking OK as shown below:

Rename the connection as WatchesV5-Source.

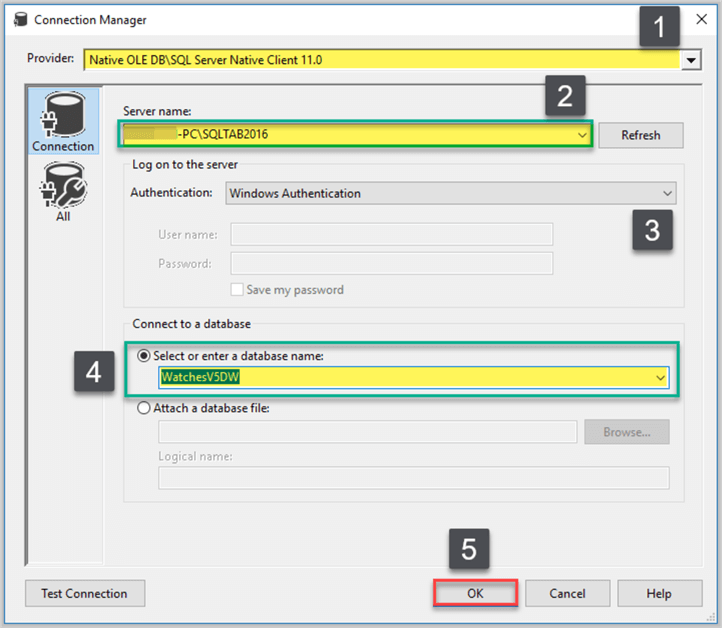

Create a second connection for the destination database in the same way you created the connection for the source database, but please remember to point it to Watches5DW this time:

Rename the connection as WatchesV5DW-Destination.

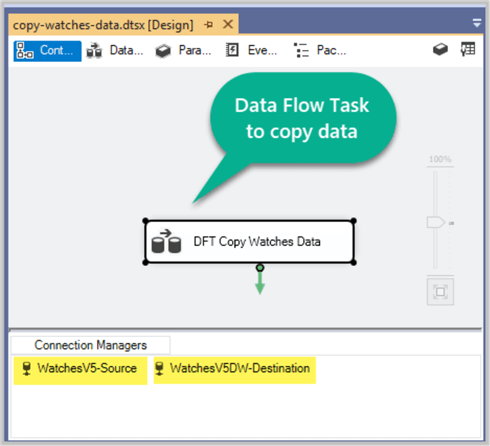

Create Data Flow Task to copy data

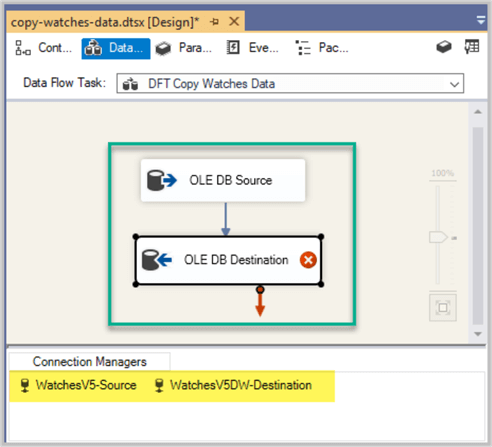

Next please drag Data Flow Task component from SSIS Toolbox and drop it onto the Control Flow surface and rename it DFT Copy Watches Data:

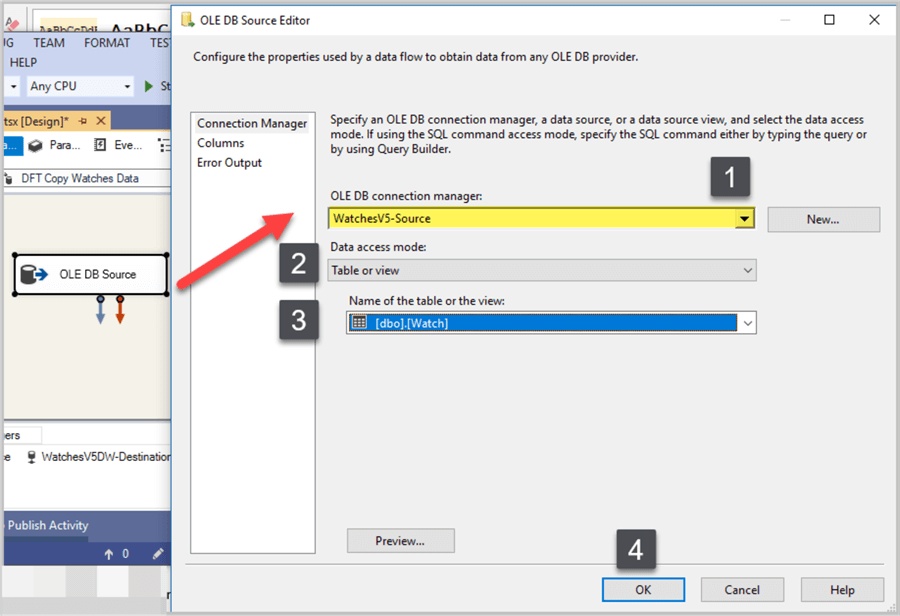

Double click Data Flow Task and add an OLE DB Source component pointing to WatchesV5-Source connection and selecting dbo.Watch table as follows:

Create OLE DB Destination component by bringing it forward from the SSIS Toolbox followed by joining OLE DB Source with it:

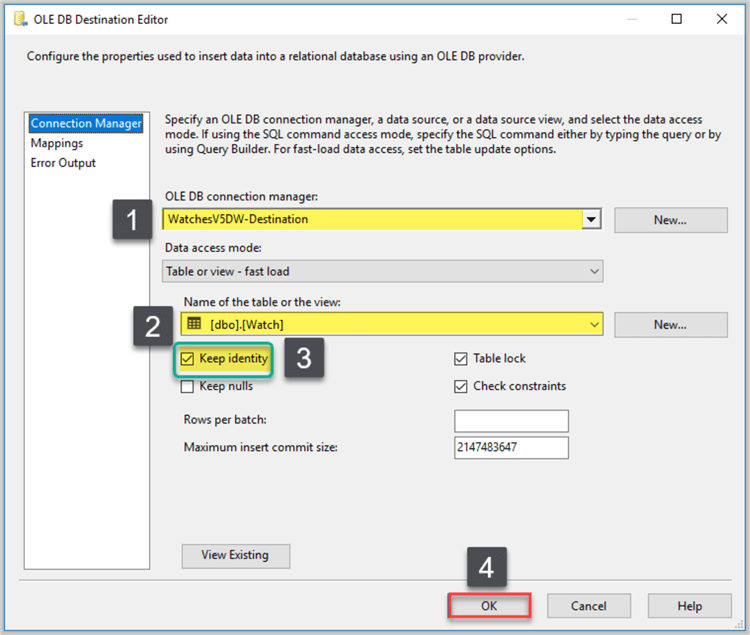

Configure OLE DB Destination and point it to the destination database WatchesV5DW:

Please make sure the following things are done:

- Keep Identity option has been checked

- Mappings are correct (which should be fine if you have followed all the steps)

Please refer to Create Custom SSIS Execution Log - Part 1 tip to get see how column mappings are checked.

Rename OLE DB Source as SRC Watch followed by renaming OLE DB Destination as DST Watch.

Create Error Handler for the SSIS Package

It is recommended to have some error handling mechanism in place to ensure smooth transferring of data even if an invalid row occurs.

Redirecting Errors from OLE DB Destination

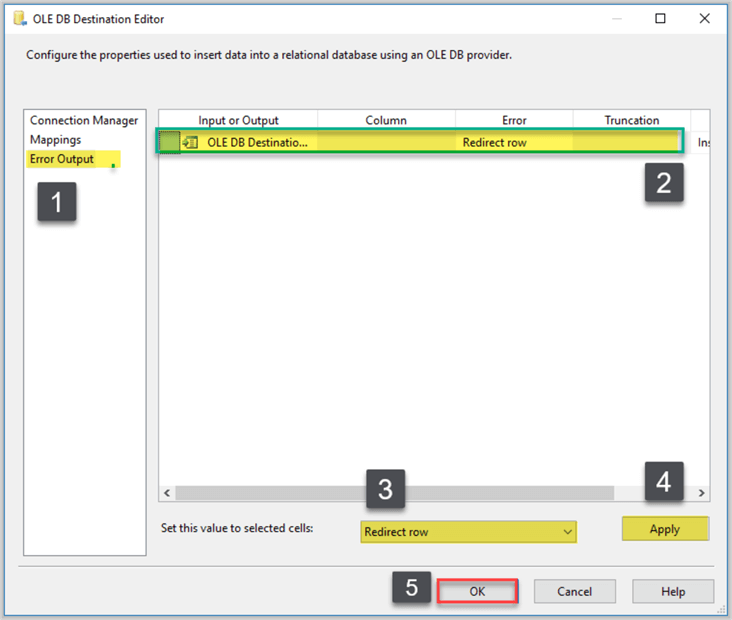

Double click OLE DB Destination component (DST Staging Watches) and go to Error Output from the left navigation bar.

Select Redirect row from Error dropdown box and then select Redirect row at the bottom of the window. Click Apply to apply the changes and then Click OK as shown below:

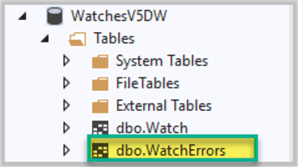

Creating Error Table

The errors can be sent to any output including text files, but to keep simple when starting off. Create an error table called WatchErrors in the destination database as follows:

USE WatchesV5DW

-- Creating WatchErrors table

CREATE TABLE [WatchErrors] (

[WatchErrorId] int primary key identity(1,1),

[WatchId] int,

[WatchType] varchar(40),

[Brand] varchar(40),

[Colour] varchar(20),

[Price] numeric(10,2),

[ErrorCode] int,

[ErrorColumn] int

);

GO

Go to SQL Server Object Explorer in Visual Studio to check the newly created table after refreshing the database:

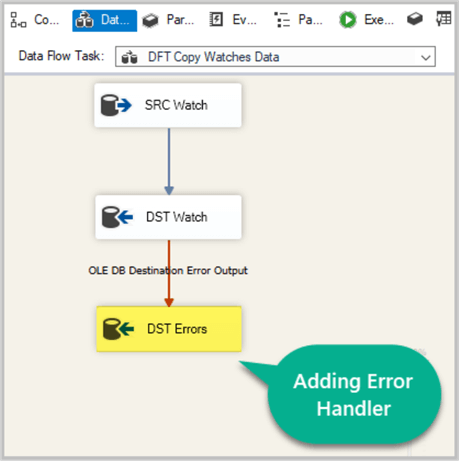

Adding Error Handler

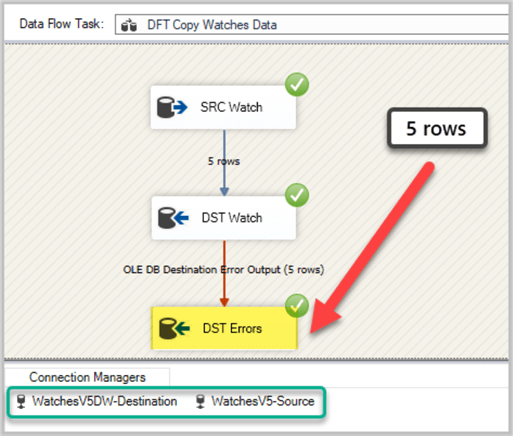

Drag another OLE DB Destination component onto the Data Flow Task designer and rename it DST Errors after joining DST Watch error output with it as shown below:

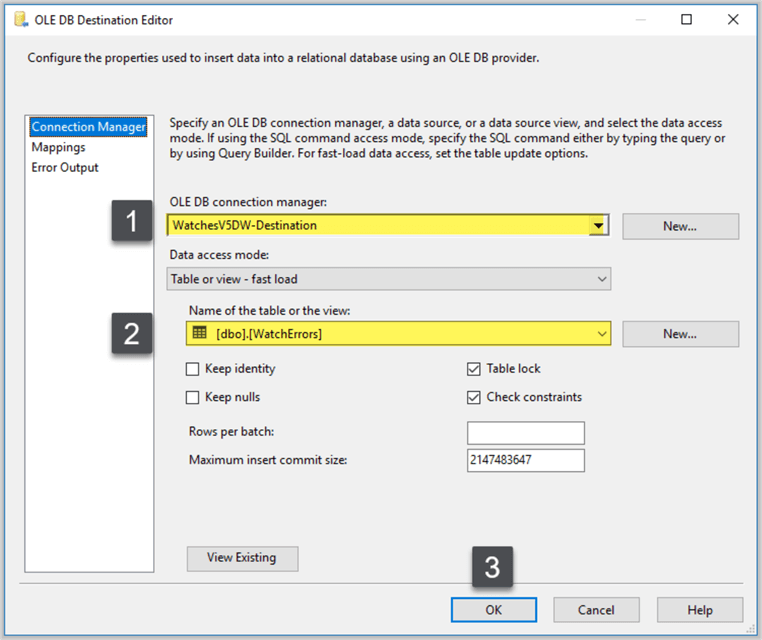

Select the same connection manager we selected for the destination database and select WatchErrors table in the next section and then click OK:

We are all set now since the error handling mechanism is ready to be used when required.

Run SSIS Package to Copy Data from Source to Destination

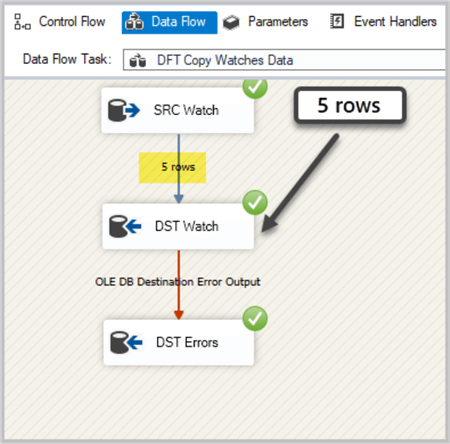

It is time to run the SSIS Package, so right click on the package and click Execute:

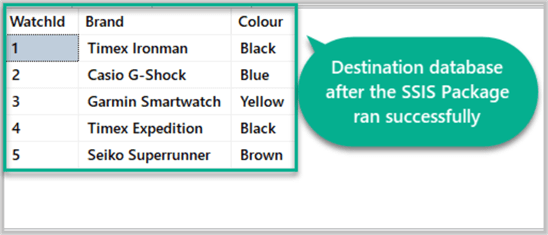

The package has run successfully so please check the destination database by running the following script:

USE WatchesV5DW -- View destination database after the SSIS Package to copy the data has run SELECT sw.WatchId,sw.Brand,sw.Colour FROM dbo.Watch sw

The output as follows:

Error Handling Mechanism Check

Next, we are going to check if the error handling mechanism is working or not.

There are many ways to check if the error handling strategy in place, but we are using a simple approach by re-running the SSIS Package.

Please remember if we re-run the package the same rows are going to be loaded again into the destination database and this is when we are able to see if the error handler is working or not since the target table has primary key constraint in place to reject such data and in the absence of error handler the rejected rows are going to terminate the package as running abnormally.

Re-run SSIS Package to copy the same data

Right click on the SSIS Package and click Execute Package to run it:

The output is as follows:

The package has run successfully by redirecting the duplicate data into the Error table.

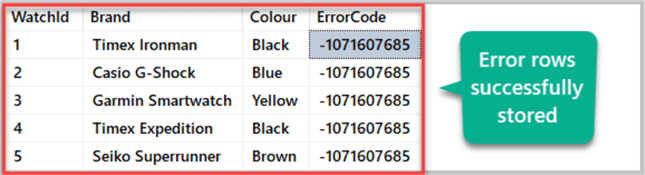

Stored Error Rows Check

Please check the error table where error rows were sent during the SSIS Package run:

-- Connect to destination database USE WatchesV5DW -- View destination database after the SSIS Package to copy the data has run SELECT we.WatchId,we.Brand,we.Colour,we.ErrorCode FROM dbo.WatchErrors we

The results are as follows:

Congratulations, we have implemented following parts of the solution:

- SSIS Package has been designed and tested to copy data from source to destination (database)

- The error handling mechanism works well when inconsistent data is inserted into the destination (table)

- The error rows are also getting stored for later investigation

However, the final and crucial bit of the solution which helps us in investigating the error rows by manually debugging the SSIS Package is yet to be explored in the next part of the tip.

Next Steps

- Please add another table called WatchOrder to the source and destination databases followed by adding and test running its error handling mechanism by following the examples in this tip.

- Please try to disable error handler mentioned in the above tip and run the package with consistent data followed by loading inconsistent data to see it terminating abnormally.

About the author

Haroon Ashraf's interests are Database-Centric Architectures and his expertise includes development, testing, implementation and migration along with Database Life Cycle Management (DLM).

Haroon Ashraf's interests are Database-Centric Architectures and his expertise includes development, testing, implementation and migration along with Database Life Cycle Management (DLM).This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips