By: Haroon Ashraf | Comments (2) | Related: 1 | 2 | More > Integration Services Development

Problem

As a SQL Business Intelligence developer, I need to investigate why errors are occurring in my SQL Server Integration Services (SSIS) package. The package is setup to route errors to a different table, but I can't tell what the error is by looking at the data and the error codes are not always helpful. I need a simple way to manually debug the package without having to redo a lot of rework in the package to find out the actual issues.

Solution

It is vital to know the reason why rows are sent to an error output so you have a level of confidence the package is working correctly and also to get the error rows back (in rare cases) or discard them (in most cases) and the cause of these errors can be found by manual intervention (debugging the package).

Debugging an SSIS package manually is challenging once an error handler is in place. One possible solution is to replicate the process and not route the errors, but let the package fail to show the actual error messages.

Prerequisites

This is the second part of the tip and you should read the first part before proceeding.

For those who have already gone through the first part and implemented the walkthrough, the following things should be in place:

- Source database WatchesV5 has been setup

- Destination database WatchesV5DW has been also setup

- Integration Services Project to copy data from source to destination has been created

- Error table has also been added to the destination database

- Error handling mechanism in the SSIS Package has been setup

- SSIS Package has successfully run and copied records from source to the destination

- Rerunning of SSIS Package has caused records to be sent to error output

Now you have to debug the package manually to replicate the error to find the cause of the error rows so that you can decided to keep or discard the error rows.

Debug SSIS Package Manually to Replicate Error

To debug an SSIS Package manually to replicate the error and keeping the error handler in place may be slightly confusing, so I will explain it further to give a better understanding of the solution.

The work done so far

In the first part of this tip, we successfully copied data from the source to the destination and have successfully sent error rows to the error handler. So, we have data in the destination table and in the error table as well.

The Need to Replicate Error

You might ask, why do we need to replicate the error if inconsistent data has been successfully caught by the error handler? The answer is to find out whether to keep those rows by pulling them back into the database or not. This seems like a lot of work when you have a handful of records, but it makes a lot of sense when there are millions of rows.

Why we cannot fully refer to Error Table

Another point is why not just look at the error table and decide whether those records are good enough to be brought back into the database. There is also a solid reason behind this and the best way to find out the reason is following the steps below.

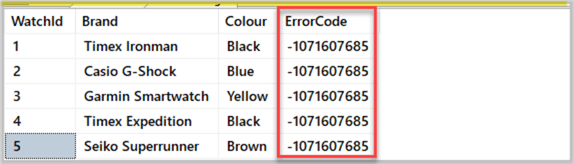

Run the following T-SQL script to see the error table which stored the error output as a result of the SSIS package run from the first part of this tip:

-- Connect to destination database USE WatchesV5DW -- View destination database after the SSIS Package to copy the data has run SELECT we.WatchId, we.Brand, we.Colour, we.ErrorCode FROM dbo.WatchErrors we

The result set is shown below:

The error table clearly shows us an error code, so finding the details of the error code must give us enough information whether to keep the rows or discard them. However, the information provided by the error table this time is not enough as we try to decode the error.

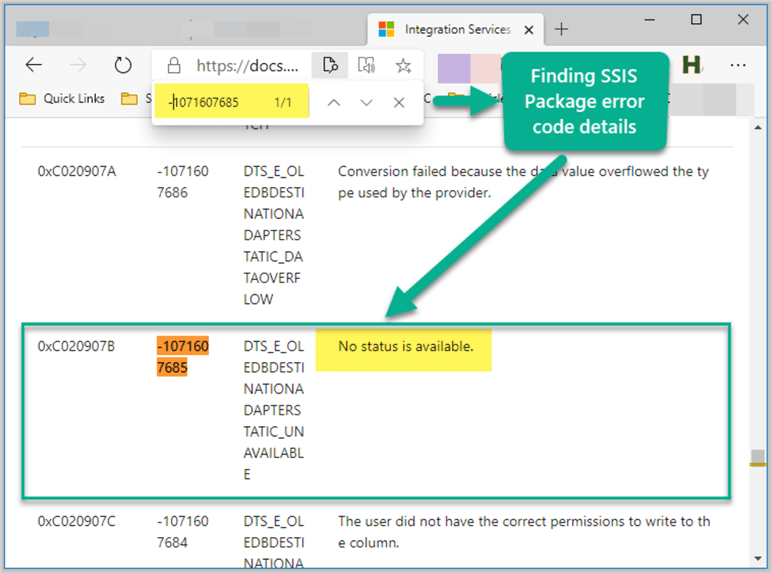

You can go to Integration Services Error and Message Reference and press Ctrl+F to find -1071607685 error code details on the page:

As we can see the error code is translated as “No status is available” which does not help much. That’s why we need to replicate the error without the error handler so we can get better information if the error terminates abnormally, but at the same time we have to make sure that we do not remove the error handler.

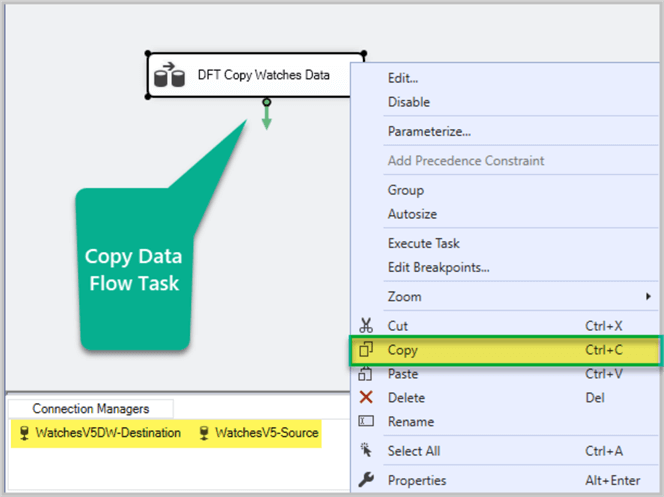

Copying Data Flow Task without Error Handler

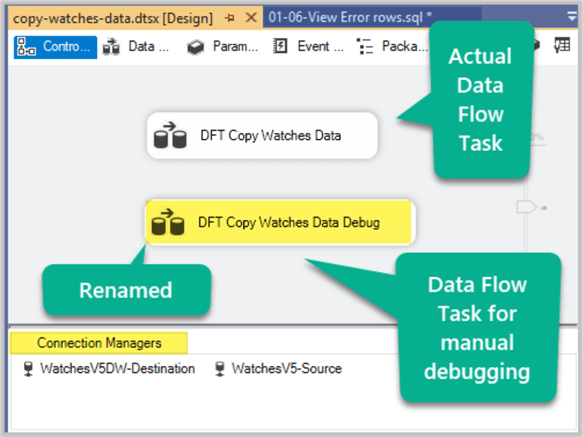

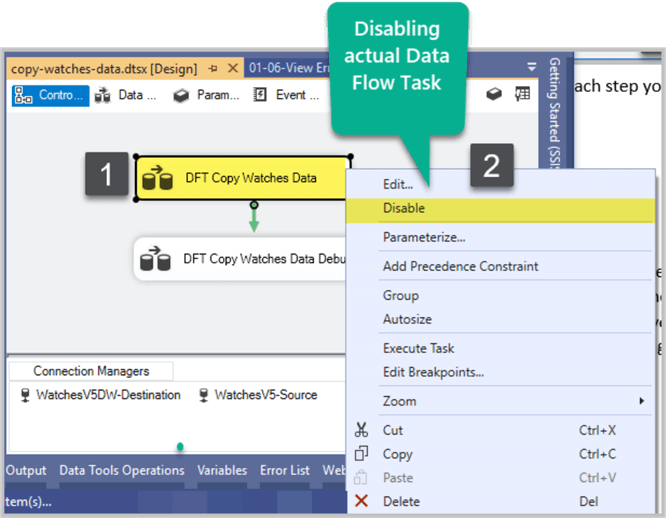

We can replicate the error by copying the Data Flow Task. Right click on the existing Data Flow Task (DFT Copy Watches Data) in the Control Flow of SSIS Package and click Copy as shown below:

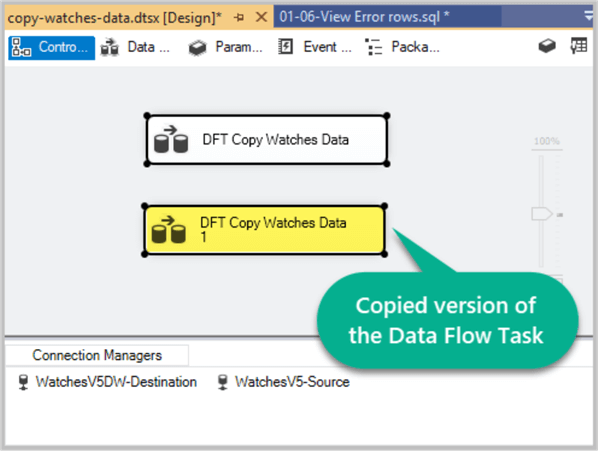

Right click anywhere on the Control Flow surface and click Paste to create a duplicate version of the existing Data Flow Task as follows:

Rename the copied version as DFT Copy Watches Data Debug to make sure you remember this is used for manual debugging as shown below:

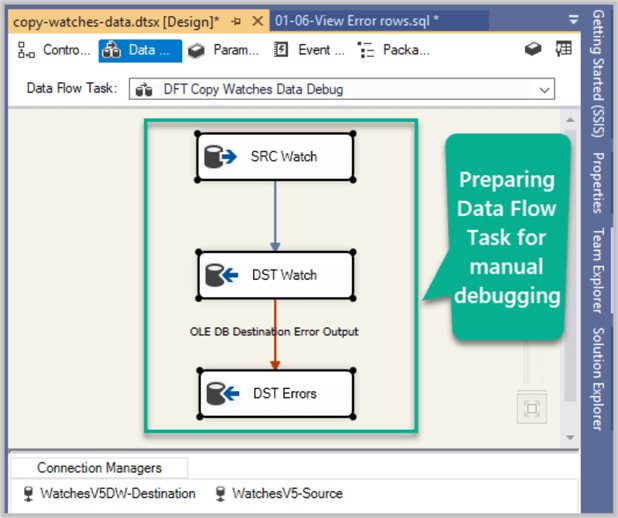

Double click on the Data Flow Task to be used for manual debugging to view this task details which contains an error handler as well:

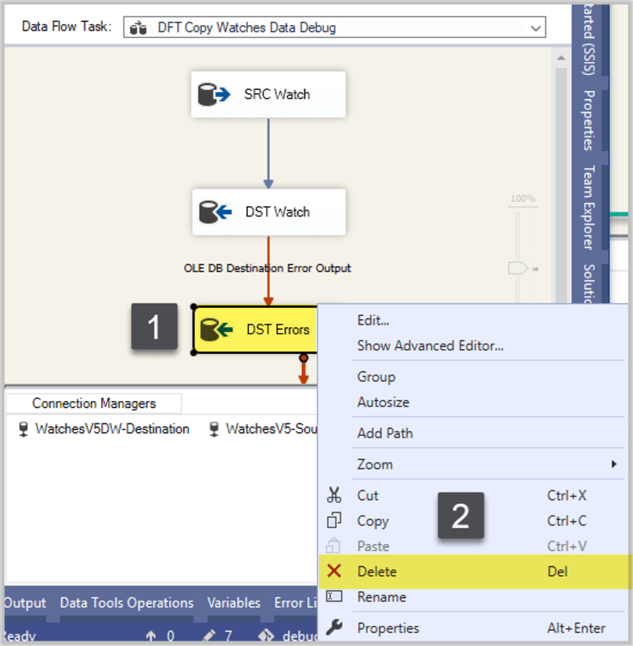

Right click on Destination Component DST Errors and click Delete to remove error handler:

You are left with one source component SRC Watch and one destination component DST Watch, but there is a warning next to the destination component because you have removed the error output, but the destination component DST Errors is not aware of this change:

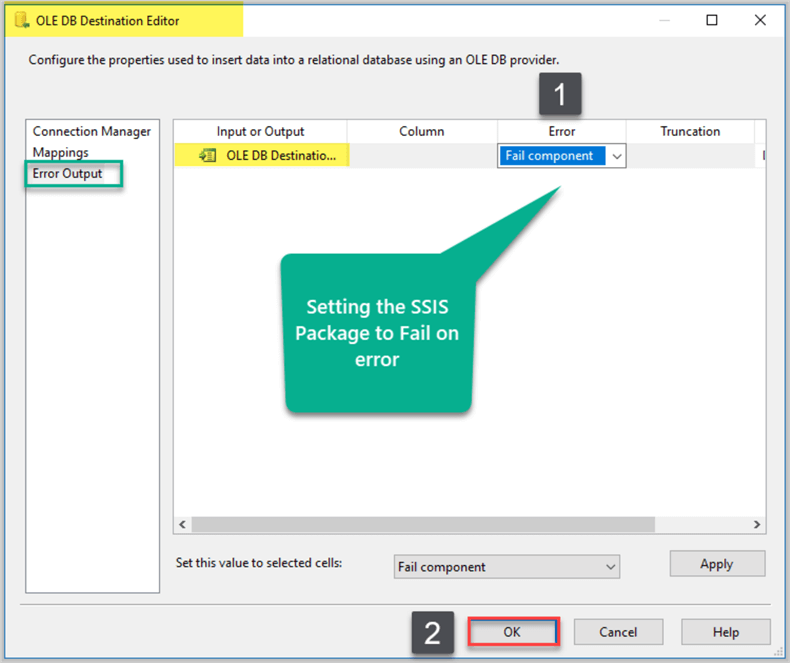

Double click on DST Watch and then select Error Output from left side options and select Fail component from the drop down box next to OLE Destination Input and click OK as shown below:

Please remember to save your changes at the end of each step.

Replicating Error by Running Data Flow Task without Error Handler

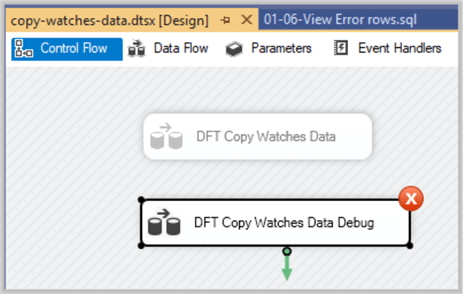

Right click on the actual Data Flow Task DFT Copy Watches Data and click Disable to make sure it does not execute during the manual debugging of the SSIS Package.

Right click DFT Copy Watches Data Debug task and click Execute to run it:

The package has failed and this means we have successfully terminated the package abnormally to get more details of the error which were not available when we ran it with the error handler.

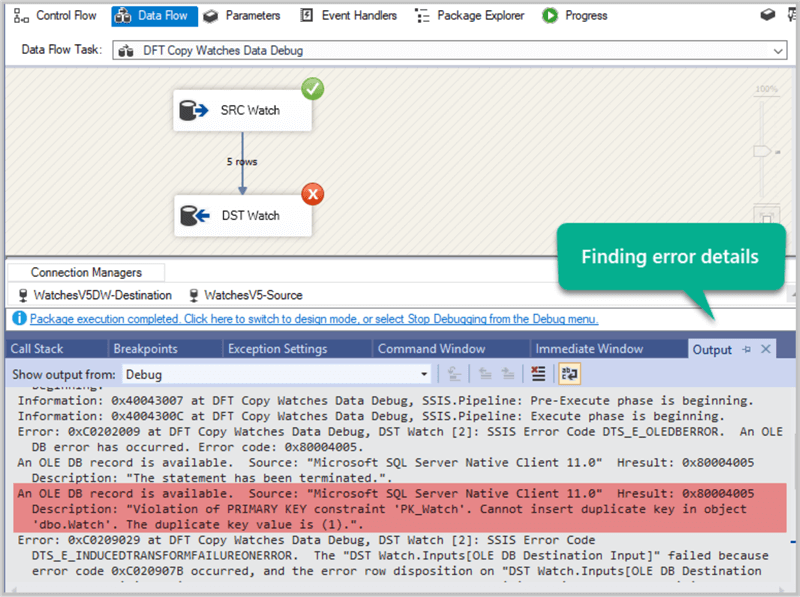

Double click on DFT Copy Watches Data Debug failed task to open it and then click CTRL+ALT+O to view the output window (normally at the bottom of the Visual Studio IDE) to see the error details:

The output windows shows the required error details:

Description: "Violation of PRIMARY KEY constraint 'PK_Watch'. Cannot insert duplicate key in object 'dbo.Watch'. The duplicate key value is (1).".

So, it is clear that the rows which were inserted last time were rejected by the error handler due to the fact that they already existed in the database and it would have been a primary key violation if they had managed to find their way into the database.

Congratulations, we have successfully found the cause of the error without losing the error handler and debugged the package manually.

We don’t want to insert duplicate data into our database so it is easy to decide that these rows need to be discarded as they do not need to be put back into the destination table.

This is a very handy and quick way to find the details of errors especially when a batch consisting of thousands of rows is being sent to the error output and the error code does not give you enough information.

You can later the remove or disable the Data Flow Task created to debug the SSIS package manually and enable the actual Data Flow Task which contains an error handle to keep on running as usual.

Next Steps

- Please try to add another table called WatchOrder a and replicate the error in the same way and see how easy you can debug it manually.

- Please try to add foreign key relationship between WatchOrder and Watch table and then deliberately add WatchId to WatchOrder which does not exist in Watch to first see if this gets caught by the error handler followed by finding error code and details and finally debugging it manually to find out more details.

- Please try to mix and match primary key and foreign key errors to see if they can be caught by the error handler and then try to debug the package manually to find the error details using the above tip as a reference.

About the author

Haroon Ashraf's interests are Database-Centric Architectures and his expertise includes development, testing, implementation and migration along with Database Life Cycle Management (DLM).

Haroon Ashraf's interests are Database-Centric Architectures and his expertise includes development, testing, implementation and migration along with Database Life Cycle Management (DLM).This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips