Problem

“Go NoSQL”, “No need for DBA”, “No need to think about data modeling”, “Just write the code and the database will work and tune itself.” I am sure you have heard all of these things, but the more I work with document stores, the more data modeling techniques I see and implement.

For data that grows exponentially, no performance improvement is superfluous. One document store challenge is to choose the data modeling technique that will work best. Schema design in a document database is flexible, but this does not mean there should be no schema at all. It is most important to figure out what you plan to do with the data BEFORE choosing the document schema.

Time has proved that there is still a great need for people who understand how to build data models properly and avoid common pitfalls. Things can go wrong in NoSQL. Documents sometimes grow huge and reach the document size limit for the specific datastore. Developers do data migrations frequently because there is a need to change the document structure to support evolving requirements. The datastore might fail to do what the logic developer planned to execute, therefore in such cases, the application will need to filter the data on the client side. One of the most frequent problems with an open schema is the same property can come from different application components sometimes in upper or lower case. In such cases, the solution would be either filtering on both properties using the “OR” operator or filtering on only one property, getting less accurate results.

In this article, I will share a series of document store modeling techniques that I have learned on my Data Engineering journey.

Solution

There are various document store modeling techniques and it is beneficial to choose the correct model. Here is an overview of the various models.

Embedded Data Model

In this data model, entities are nested, one inside another. For instance, in the below twitter example, each tweet is persisted as a document with all its properties, like post time, author details, etc. There is another entity, tweet comments and this entity is embedded as a list of documents inside each tweet. The advantage of this technique is that when we filter for a specific tweet, we get all information related to it, including the comments. It is like joining two entities in a SQL JOIN. This technique should be used with caution and only when the embedded entity will not grow too large. Otherwise, the document may reach the document size limit of the data store and inserts will start failing. Another challenge in this data model is performing searches based on multiple comment properties. It’s always a challenge for document data stores to index lists, and the performance of complex queries on lists will usually be quite slow.

Composite Key Data Model

We can add an additional field that contains a combination of properties in a document that can identify it uniquely. When we add such a surrogate key, it can be used for simple searches to speed up the reads. You can use a pattern search on this field instead of an OR search. The disadvantage of this method is remembering to update this field when any properties get changed.

Inverted Index Data Model

Sometimes there are properties in the document with unstructured text, like newspaper articles, blog posts, or book abstracts. The inverted index is easy to build and is similar to data structures search engines use. Usually, the documents are “parents,” and the words inside the document are “children.” To build an inverted index, we invert this relation to make the words “parents” and documents “children”:

- Take all or a subset of keywords from the document and pair it with the document ID

- DocId1: keyword1

- DocId1: keyword2

- DocId1: keyword3

- DocId2: keyword4

- DocId2: keyword1

- Revert the order by taking all unique keywords and making a list of documents where those keywords appear.

Such document structures can help in various complex search patterns, like common word detection, full-text searches, or document similarity searches, using humming distance or l2distance algorithms. Inverted indexes are useful when the number of keywords is not too large and when the existing data is either totally immutable or rarely changed, but frequently searched.

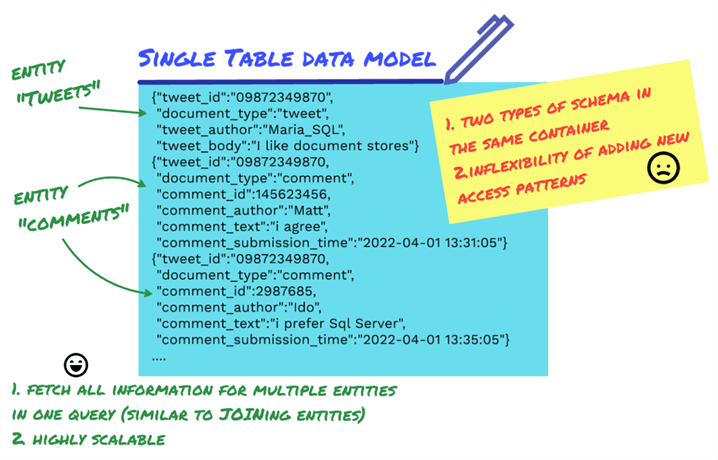

Single Table Data Model

When there are multiple data entities, you must figure out how to model them. If you put each data entity into a separate container, you end up “joining” the data by making multiple network requests, using data from the first request in the second request.

The Single Table data model is helpful to lower the number of requests to the database engine by storing all entities in one container and having the same key in each data entity. This way the data is fetched from multiple data entities in a single request. This data model is highly scalable and works great if there are huge documents. The documents can be split into smaller ones and when filtered by document type, can receive some or all of them.

Different document types in the same container may complicate data analysis when the data analytics engine tries to infer the container schema. A possible correction for this is defining the schema manually to include all document properties.

Next Steps

In the next post we will talk about four more document data models.

Check out these articles for more information:

Maria Zakourdaev is a Data Platform Microsoft MVP and a technology expert with more than 20 years of experience, a community leader with a profound knowledge of Microsoft products and services, while also being able to bring together diverse platforms, products, and solutions to solve real-world problems.

Maria has a hands-on experience managing various data management technologies in Azure, AWS and Google public cloud platforms, including SQL Server, Azure CosmosDB, AWS DynamoDB, MemSQL, MySQL, Postgresql, Snowflake, Redis, Amazon Redshift, Couchbase and Elasticsearch, Spark, Databricks, Big Query.

Maria is an organiser of the annual conference “Data TLV” ( ex “SQL Saturday Israel”), a free training event for any data professionals: http://datatlv.com.

- MSSQLTips Awards: Trendsetter (25+ tips) – 2022 | Author Contender – 2022 | Rookie Contender – 2018

Hi Roman, see this article:

https://www.mssqltips.com/sqlservertip/7358/document-data-store-data-models-and-proximity-queries/

Where I can find the mentioned “the next post we will talk about four more document data models.” ? I looked in article search and on author’s page, there’s only this article about NoSQL?