By: Koen Verbeeck

Overview

Now that we've created our first pipelines, it is time to delve a bit deeper into the inner working of ADF. Let's start with linked services.

The Purpose of Linked Services

In the previous step of the tutorial, every time we created a new connection in the Copy Data tool, we were creating a new linked service. A linked service is a connection to a specific service or data store that can either be a source of data, or a destination (also called target or sink). People who have worked with Integration Services (SSIS) before will recognize this concept; a linked service can be compared with a project connection manager in SSIS.

A linked service will store the connection string, but also any method on how to authenticate with the service. Once a linked service is created, you can reuse it everywhere. For example, if you have a data warehouse in Azure SQL database, you will only need to define this connection once.

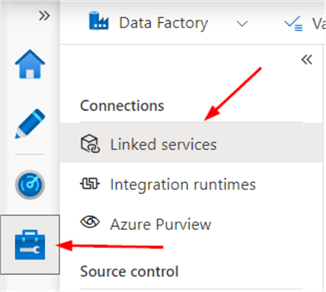

Linked services can be found in the Manage section of ADF Studio (lowest icon in the left menu bar).

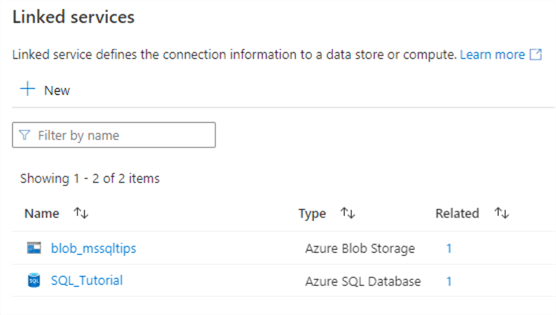

There we can find the two linked services we created in the previous part:

Which Linked Services are there?

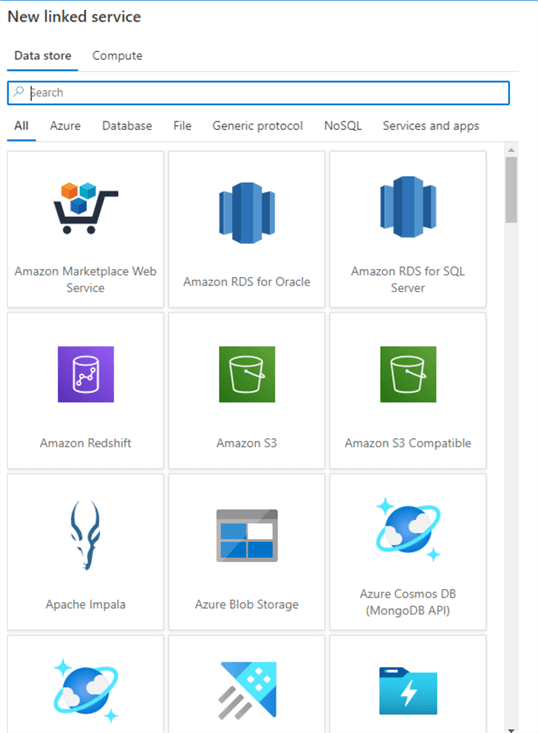

There are many different types of linked services. There are a couple of categories available:

- Azure. Services like Blob Storage, Cosmos DB (with each different API), Data Explorer, Data Lake Storage, Key Vault, Databricks Delta Lake, Table Storage, Synapse Analytics and so on.

- All the Azure databases (Azure SQL DB, Azure SQL DB Managed Instance, Azure Database for MySQL/PostgreSQL/MariaDB …), but also on-premises SQL Server and other vendors like Amazon RDS, Amazon Redshift, Apache Impala, DB2, Google Bigquery, Hive, PostgreSQL, Oracle, SAP BW, SAP HANA, Spark, Sybase, Teradata and many others.

- File. Amazon S3, FTP, File System (on-premises), Google Cloud Storage, HDFS, HTTP, Oracle Cloud Storage and SFTP.

- NoSQL. Cassandra, Couchbase and MongoDB.

- Services and apps. Dataverse, Dynamics, Github, Jira, Office 365, PayPal, REST, Salesforce, Snowflake and many others.

- Generic protocol. When all else fails. ODBC, OData, REST and SharePoint Online List.

This list is not exhaustive and is continuously updated. Keep an eye on the official documentation for updates.

Keep in mind that for on-premises data sources (and some online data sources) we need a special integration runtime, which will be covered later in the tutorial.

Creating a Linked Service Manually

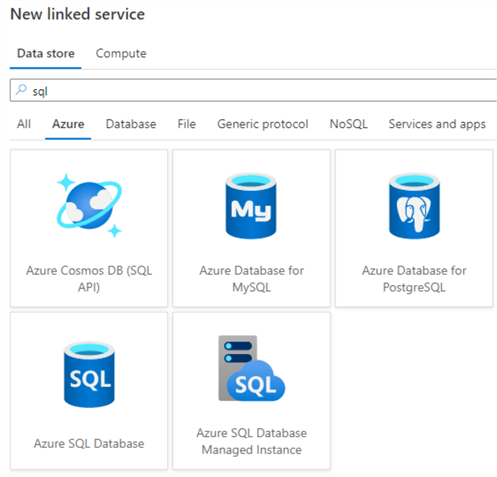

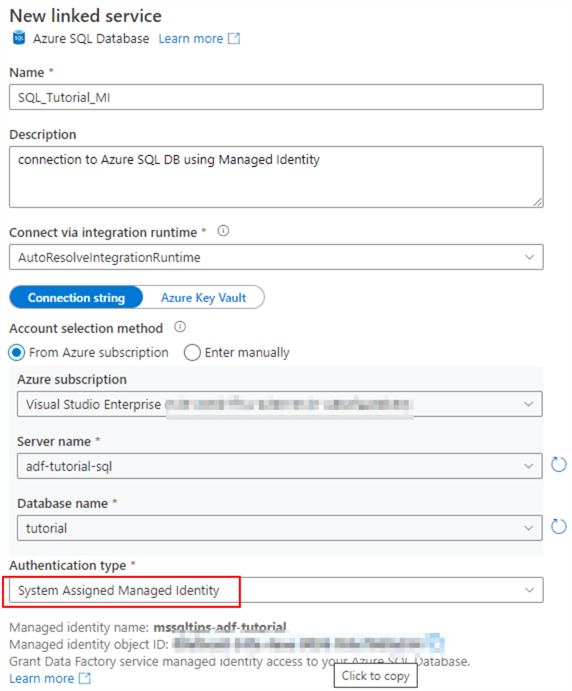

In the Manage section, go to Linked Services and click on New. Search for Azure SQL Database.

Give a name to the new linked service and use the default integration runtime. Instead of choosing SQL authentication or Azure AD authentication, this time we're going to use System Assigned Managed Identity. This means we're going to log into Azure SQL DB using the user credentials of ADF itself. The advantage here is we don't need to specify users or passwords in the linked service.

However, to make this work, we need to add ADF as a user into our database. When logged into the database using an Azure AD user with the necessary permissions, open a new query window and execute the following query:

CREATE USER [mssqltips-adf-tutorial] FOR EXTERNAL PROVIDER;

Next, we need to assign permissions to this user. Typically, ADF will need to read and write data to the database. So we will add this user to the db_datareader and db_datawriter roles. If ADF needs to be able to truncate tables or to automatically create new tables, you can add the user to the db_ddladmin role as well.

ALTER ROLE db_datareader ADD MEMBER [mssqltips-adf-tutorial]; ALTER ROLE db_datawriter ADD MEMBER [mssqltips-adf-tutorial]; ALTER ROLE db_ddladmin ADD MEMBER [mssqltips-adf-tutorial];

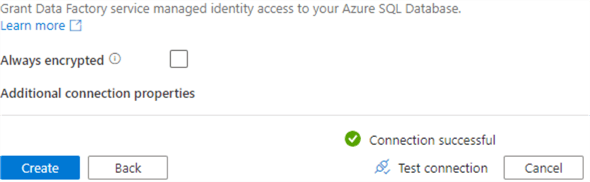

Now we can test our connection in ADF and create it:

Click on Publish to persist the new linked service to the ADF environment.

Linked Services Best Practices

A couple of best practices (or guidelines if you want) for creating linked services in ADF:

- Use a naming convention. For example, prefix connection to SQL server with SQL_ and connection to Azure Blob Storage with BLOB_. This will make it easier for you to keep apart the different types of linked services.

- If you have multiple environments (for example a development and a production environment), use the same name for a connection in all environments. For example, don't call a connection to your development data warehouse "dev_dwh", but rather "SQL_dwh". Having the same name will make it easier when you automate deployments between environments.

- If you cannot use managed identities and you need to specify usernames and passwords, store them in Azure Key Vault instead of directly embedding them in the Linked Service. Key Vault is a secure storage for secrets. It has the advantage of centralizing your secrets. If for example a password or username changes, you only need to update it at one location. You can find an introduction to Azure Key Vault in the tip Microsoft Azure Key Vault for Password Management for SQL Server Applications.

Additional Information

- The tip Create Azure Data Lake Linked Service Using Azure Data Factory explains how to create a linked service for Azure Data Lake Analytics.

- Recently a linked service for Snowflake was introduced. You can check it out in the tip Copy Data from and to Snowflake with Azure Data Factory.

- If you want to create a connection to a file, such as Excel or CSV, you need to create a linked service to the data store where the file can be found. For example: Azure Blob Storage or your local file system.

- For the moment, only SharePoint Lists are supported for SharePoint Online. Reading documents inside a SharePoint library is currently not supported by ADF.