Problem

As more traditional on-premises data warehouses are now being designed, developed and delivered in the cloud, the data integration, transformation, and orchestration layers are being constructed in Azure Data Factory, which is Microsoft’s on-premises SQL Server Integration Services (SSIS) equivalent offering in the Azure Cloud platform.

As I begin to get more comfortable with writing these data transformation scripts in Azure Data Lake Analytics using the U-SQL language, I am now interested in integrating these transformation scripts in my Azure Data Factory ETL Pipeline. I know that there is a Data Lake Analytics U-SQL activity task in the pipeline, however when I try to add this U-SQL Task to my pipeline, I am running in to issues and errors configuring the New Azure Data Analytics Linked Service. This article pin-points these issues and offers a step to step solution to configuring a New Azure Data Lake Analytics Linked Service using Azure Data Factory.

Solution

Azure Data Factory is a Microsoft Azure Cloud based ETL service offering that has the potential of designing and orchestrating cloud-based data warehouses, data integration and transformation layers. Specifically, the Data Lake Analytics activity, containing the U-SQL Task allows us to transform data using custom U-SQL scripts stored in Azure Data Lake. However, the configuration of this U-SQL Task might seem like a daunting task since it is not seamless as it should be at the time of this article. This tip is intended to walk through an example of how to configure this U-SQL task in Azure Data Factory to then allow us to transform big data with U-SQL scripts orchestrated in Azure Data Factory.

Provisioning Azure Resources

Before I begin creating the linked service, I will need to provision a few Azure resources prior to building my Azure Data Factory pipeline that will run a U-SQL script to transform my data and then load it to Azure SQL Database. Let’s get started.

Creating a Data Factory

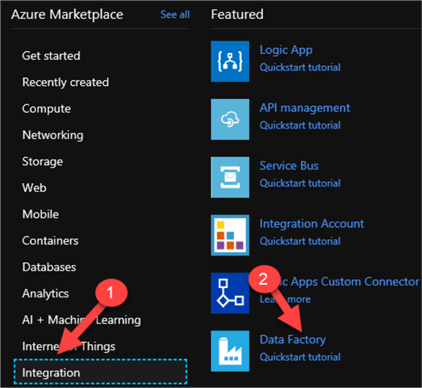

Let’s start by Creating a Data Factory by Navigating to the Azure Marketplace in the Azure Portal and then clicking Integration > Data Factory.

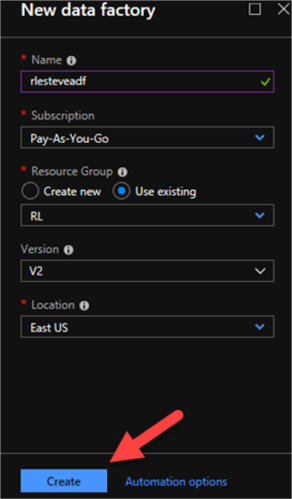

Once I enter the necessary details related to my new data factory, I will click Create.

Creating an Azure Data Lake

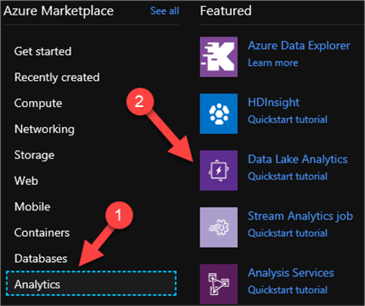

The last resource that I will need to provision for this process is my Azure Data Lake, which will consist of an Azure Data Lake Store and an Azure Data Lake Analytics account. Once again, I will navigate to the Azure Marketplace in the Azure Portal, click Analytics > Data Lake Analytics.

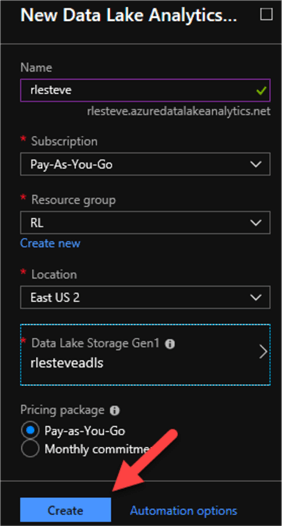

After I enter the following configuration details for the New Data Lake Analytics account, I will click create.

Creating and Azure Data Lake Analytics Linked Service

To create a new Azure Data Lake Analytics Linked Service, I will launch my Azure Data Factory by clicking on the following icon which I have pinned to my dashboard.

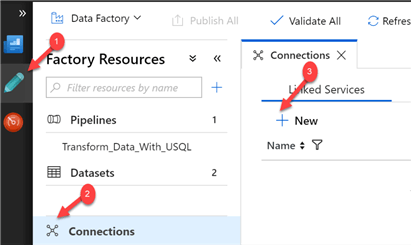

Once my Data Factory opens, I will click Author > Connections > New Linked Service as follows:

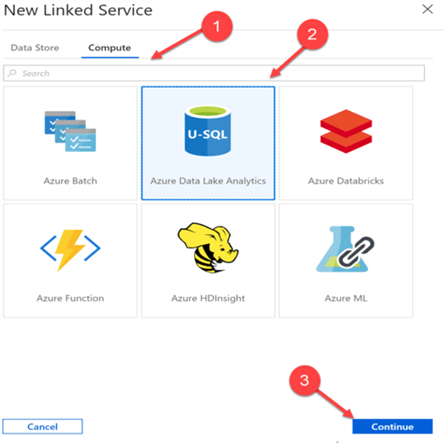

From there, I will select Compute > Azure Data Lake Analytics > Continue.

I’m now ready to begin creating my Azure Data Analytics Linked Service by entering the following details:

Note that I I’m being asked for a Service Principal ID and Service Principal Key. Since I don’t have these credentials yet, I will need to work on getting them in the following steps so that I can complete the configuration of the linked service to allow Azure Data Factory to execute my Data Lake U-SQL Scripts.

To acquire the Service Principal ID and Key, I will need a Service Principal which is similar to a proxy account which allows Azure services to connect to other services.

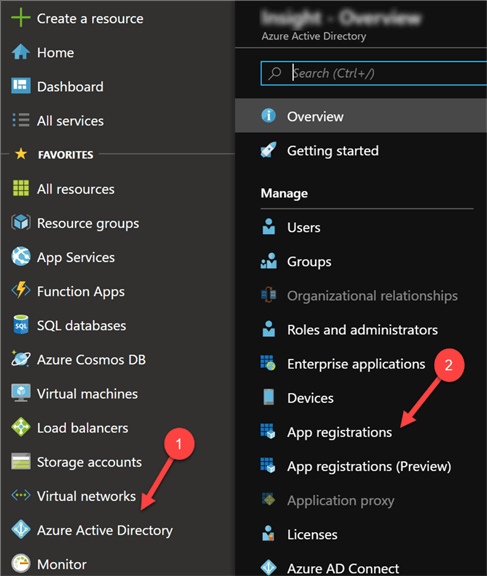

I’ll start by navigate to my Azure Active Directory in the Azure Portal and then click ‘App Registrations’ as seen in the image below.

I’ll then click ‘New Application Registration’ to create a new Service App.

Upon doing so, a new blade will reveal itself, allowing me to name my app and its Sign-on URL. When I first attempted this process, I created a new Web app in the portal. However, after additional discovery I realized I could enter a placeholder URL to achieve the same results.

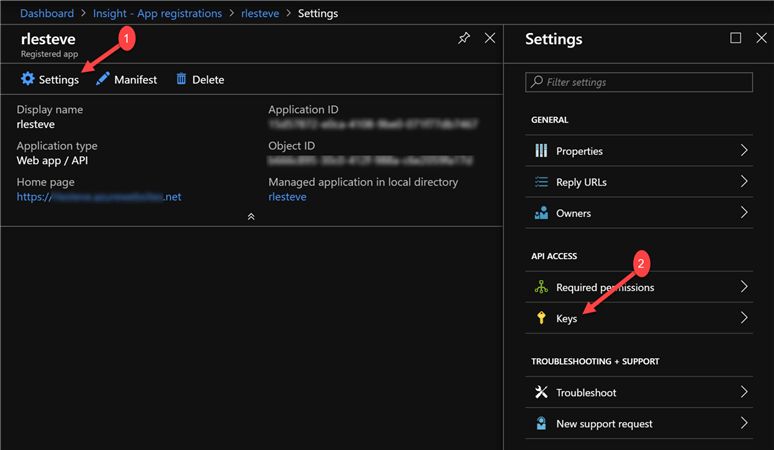

After entering my app details, I’ll click the Create button to move on to the next step. Once my app is created, I will be able to see the Registered app details. The Application ID is the Service Principal ID, so I will copy this ID and paste it in my New Linked Service Dialog box in Data Factory.

Next, I will need to generate my Service Principal Key to complete the New Linked Service registration. To do this, I will click settings on my Registered app and then click Keys.

When the Keys blade opens, I will enter a new key description, the expiration Duration, and then click Save. As I click save, the new Key Value will be generated and visible. Note the warning which reminds me that the key value will no longer be visible after I leave this black so I will Copy and save the Key Value.

Once again, I will navigate back to my New Linked Service registration in Data Factory and Enter the Key Value in the Service Principal Key section of the Linked Service:

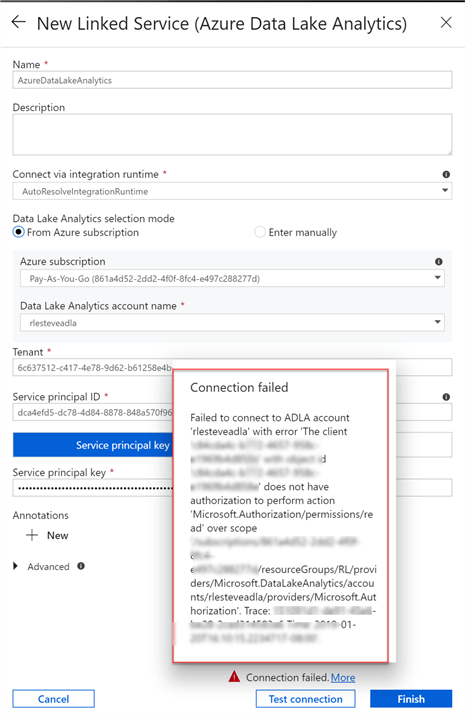

Now that I have entered my Service Principal ID and Service Principal Key, I would think that I should be able to successfully complete the process of adding a New Data Lake Analytics linked service. Unfortunately, there are a few more permissions that will need to be granted to complete this configuration since the connection still failed at this point.

Additional Permissions

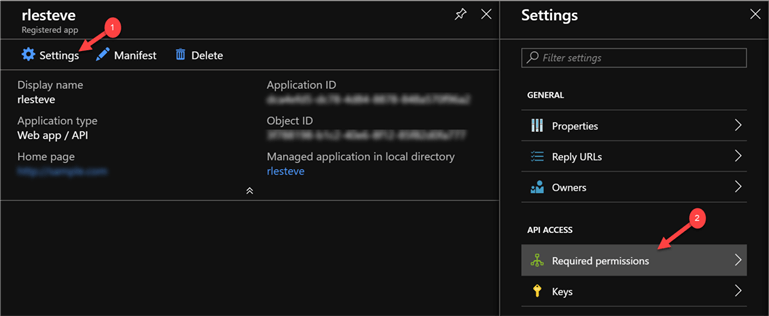

The first step of these additional permissions will be to navigate back to my Registered app and click Settings > Required Permissions.

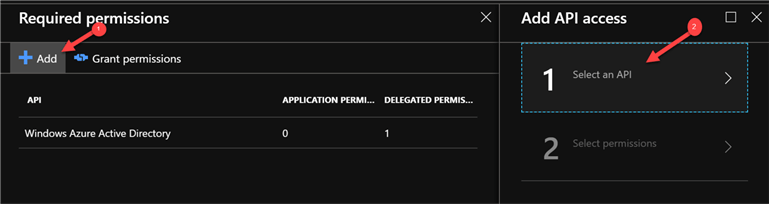

Next click Add to begin Adding an API.

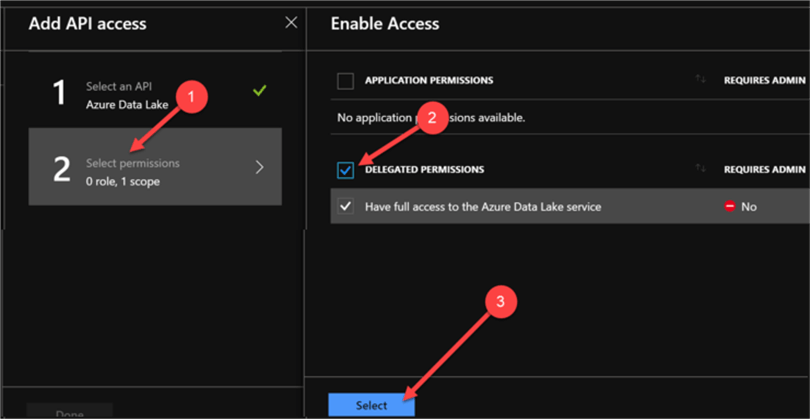

I will then select Azure Data Lake as my API and click select.

I’ll then move on to the next step of selecting permissions and will click Delegate permissions and then click select.

When the Required permissions box is displayed, I notice that Azure Data Lake is set as a Delegated Permission.

This completes our work with the Registered App.

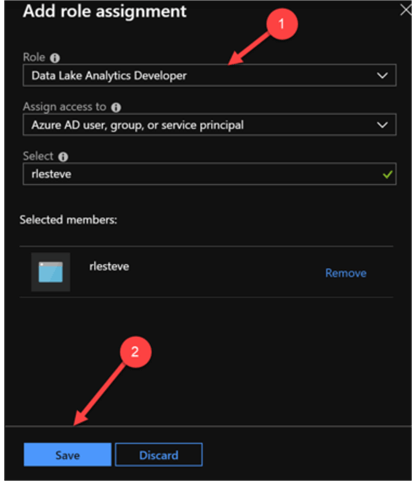

Next, I will need to do a few more final configurations in my Data Lake Analytics Account to give my registered app permissions to my data lake account. I’ll navigate to my Data Lake Analytics Account and then click Access Control (IAM) and click Add role assignment.

In the Add role assignment blade, I will select Data Lake Analytics Developer as my role and I will select my registered app and then click save.

Now that the Azure resource level IAM Access Control is complete, I can proceed to create my Data Lake level permissions.

To do this, I will click Add User Wizard and then select my registered app name and then click select.

Next, I will proceed to select Data Lake Analytics Developer as the role.

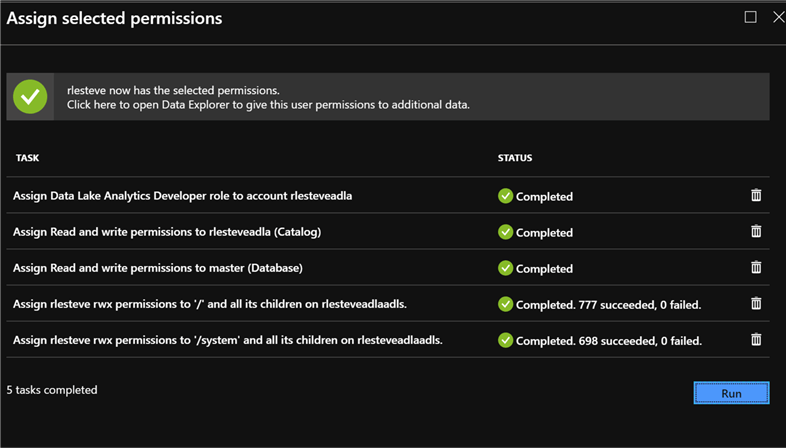

As I move on to the catalog permissions, I will grant Read and write permissions to the Data Lake Analytics catalog and then click Select.

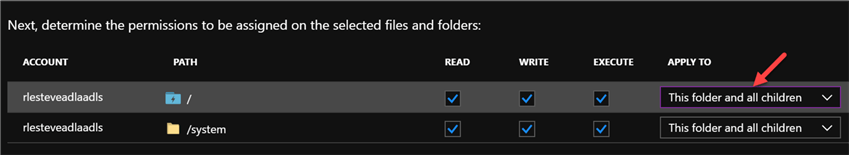

Next, I will be asked to determine the permissions to be assigned on the selected files and folders. I will click ‘This folder and all children’ and click select.

I will then be presented with the Assign Permissions task list base on my previous selections that will have a status of ‘Pending’.

When I click Run, the jobs will begin running as the tasks complete.

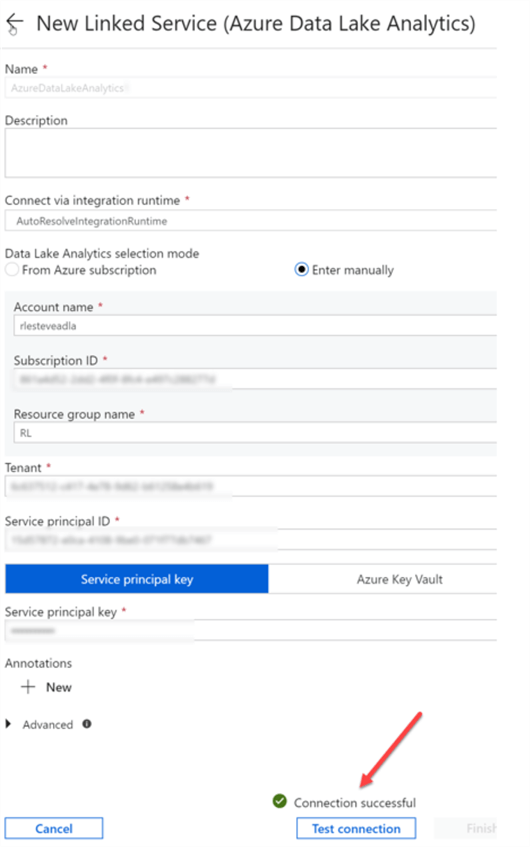

As soon as the job completes, I will click Done to finally complete to Service Principal registration process. Now I can return to my Data Factory and re-test my connection to my New Azure Data Lake Analytics Linked Service. Note that since my Data Factory Linked Service had been open for so long, it will most probably need to be re-created to refresh the new setting and permissions.

After I re-enter my save Service Principal ID and Key, I’ll click Test Connection one last time, and I finally see a ‘Connection Successful’ status. I’ll then click finish and now I am ready to begin transforming data with U-SQL using Azure Data Factory.

Next Steps

- In this article, I walked through a step-by-step example on how to create an Azure Data Lake Linked Service in Azure Data Factory v2.

- As a next step, my article on Using Azure Data Factory to Transform Data with U-SQL will go into detail on how to now utilize this new Azure Data Analytics Linked Service to create pipelines which will use Linked Services and Data Sets to process, transform and schedule data with U-SQL and Data Lake Analytics.

Ron C. L’Esteve is a Data and AI Leader and professional Author residing in Chicago, IL, USA. He is well-known for his impactful books and article publications on Azure Data & AI Architecture, Engineering, and Leadership. Ron holds a Master of Business Administration (M.B.A.) and Master of Science in Corporate Finance (M.S.F.) from Loyola University Chicago. Ron possesses deep technical skills and experience in designing, implementing, leading, and delivering modern Azure Data & AI projects for numerous clients around the world. He is a Motorola Certified Six Sigma Green Belt, a Microsoft Global Challenger, and also holds numerous additional Microsoft, Snowflake, and Databricks professional certifications in Big Data Engineering, Artificial Intelligence, Apache Spark and Data Platform Architecture.

- MSSQLTips Awards: Champion (100+ tips) – 2023 | Author of the Year – 2020-2021 | Rookie of the Year – 2019