By: Koen Verbeeck

Problem

I've created a data pipeline in Azure Data Factory. I would like to send an e-mail notification if one of the activities fails or if the end of the pipeline has been reached successfully. However, there doesn't seem to be a "send e-mail" activity in Azure Data Factory. How can I solve this issue?

Solution

When building ETL pipelines, you typically want to notify someone when something goes wrong (or when everything has finished successfully). Usually this is done by sending an e-mail to the support team or to someone else who is responsible for the ETL. In SQL Server Agent, this functionality comes out of the box. See, for example, the tip How to setup SQL Server alerts and email operator notifications for more information. In Azure Data Factory (ADF), you can build sophisticated data pipelines for managing your data integration needs in the cloud, but there's no built-in activity for sending an e-mail. In this tip, we'll see how you can implement a workaround using the Web Activity and an Azure Logic App.

Sending an Email with Logic Apps

Logic Apps allow you to easily create a workflow in the cloud without having to write much code. Since ADF has no built-in mechanism to send e-mails, we are going to send them through a Logic App.

In the top left corner of the Azure Portal, choose to create a new resource.

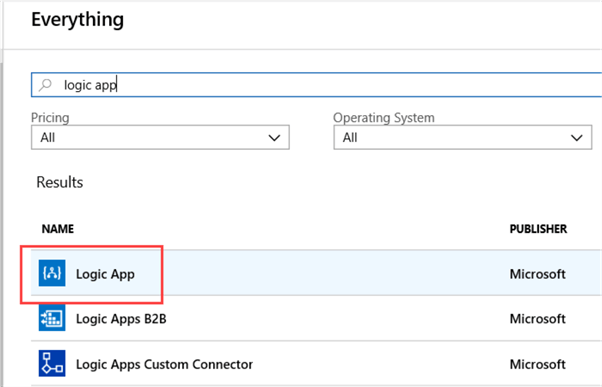

Choose the Logic App resource from the list and click on Create in the next blade.

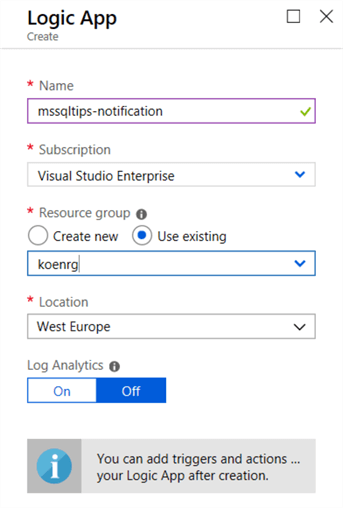

You will be asked to specify some details for the new Logic App:

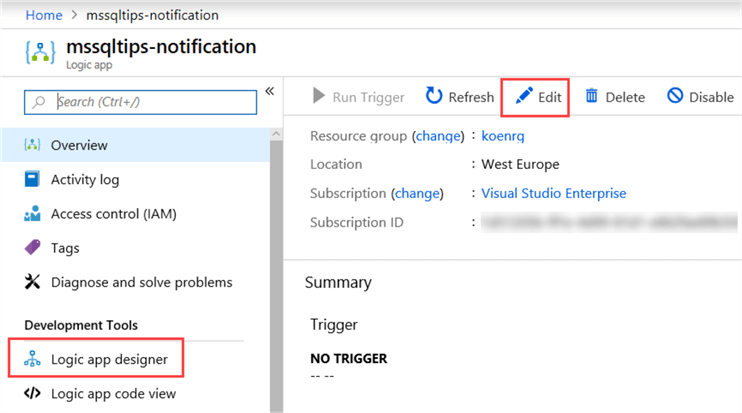

Click on Create again to finalize the creation of your new Logic App. After the app is deployed, you can find it in the resource menu. Click on the app to open it. From there, you can go to the editor by clicking on Edit or on the Logic app designer link.

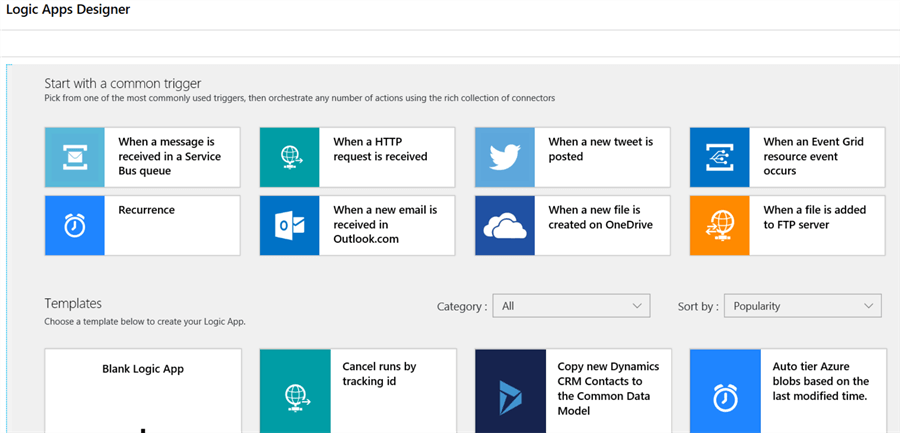

When the designer is opened for the first time, you can either start with a blank canvas using a common trigger (the event that starts the workflow) or choose one of the many available templates.

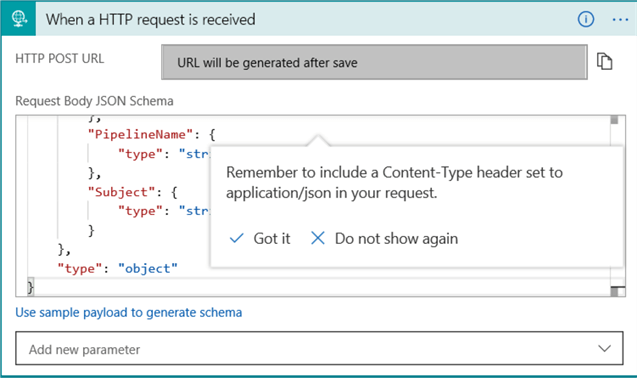

In this tip, we need the HTTP request trigger ("When a HTTP request is received"), since we're going to use the Web Activity in ADF to start the Logic App. From ADF, we're going to pass along some parameters in the HTTP request, which we'll use in the e-mail later on. This is done by sending JSON in the body of the request.

The following JSON schema is used:

```json
{
  "properties": {
    "DataFactoryName": {
      "type": "string"
    },
    "EmailTo": {
      "type": "string"
    },
    "ErrorMessage": {
      "type": "string"
    },
    "PipelineName": {
      "type": "string"
    },
    "Subject": {
      "type": "string"
    }
  },
  "type": "object"
}
```

We're sending the following information:

- The name of the data factory. Suppose we have a large environment with multiple instances of ADF; we want to know which ADF instance contains the pipeline with the error.

- The e-mail address of the receiver.

- An error message.

- The name of the pipeline where there was an issue.

- The subject of the e-mail.

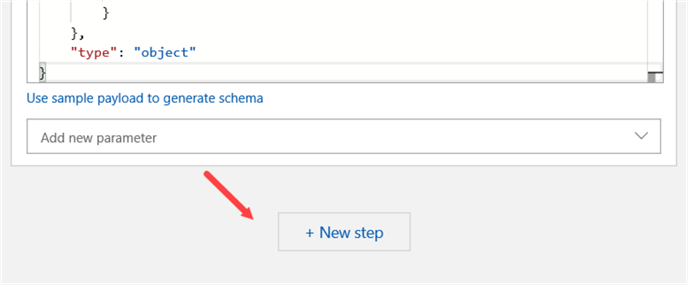
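To make the contract between ADF and the Logic App concrete, here is a minimal Python sketch of a request body that matches the JSON schema of the trigger, plus a small hand-rolled check that every property is present and is a string. The field values are made-up placeholders, and `build_payload` and `check_payload` are hypothetical helper names, not part of ADF or Logic Apps. Note that the schema as written doesn't mark any property as required, so this check is deliberately stricter than the schema itself.

```python
import json

# Property names taken from the JSON schema of the HTTP request trigger.
SCHEMA_PROPERTIES = ("DataFactoryName", "EmailTo", "ErrorMessage", "PipelineName", "Subject")


def build_payload(data_factory, email_to, error_message, pipeline, subject):
    """Build the request body the Logic App trigger expects (hypothetical helper)."""
    return {
        "DataFactoryName": data_factory,
        "EmailTo": email_to,
        "ErrorMessage": error_message,
        "PipelineName": pipeline,
        "Subject": subject,
    }


def check_payload(payload):
    """Return a list of problems: missing properties or non-string values.

    Stricter than the schema itself, which marks no property as required.
    """
    problems = []
    for name in SCHEMA_PROPERTIES:
        if name not in payload:
            problems.append(f"missing: {name}")
        elif not isinstance(payload[name], str):
            problems.append(f"not a string: {name}")
    return problems


if __name__ == "__main__":
    # Placeholder values for illustration only.
    body = build_payload(
        "adf-demo",
        "support@example.com",
        "Copy activity failed",
        "LoadSalesData",
        "ADF pipeline failure",
    )
    print(check_payload(body))  # []
    print(json.dumps(body, indent=2))
```

An empty list means the body has all five string properties that the e-mail action will later read through dynamic content.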

In the editor, click on New step to add a new action to the Logic App:

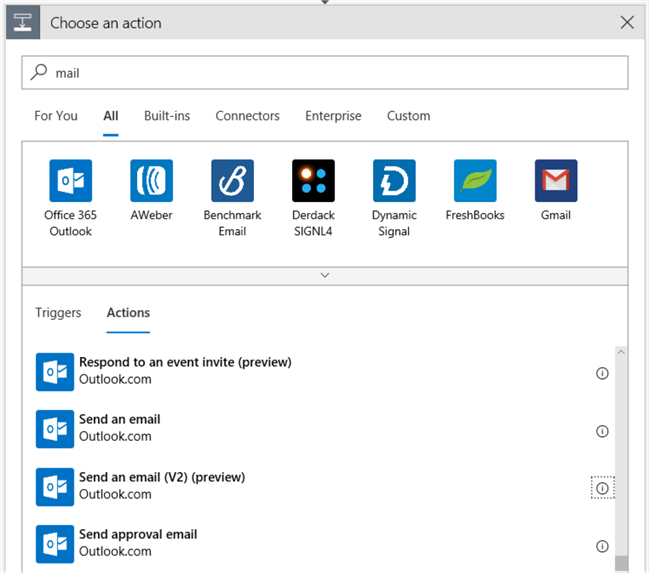

This new step will send the e-mail. When you search for "mail", you'll see there are many different actions:

There is support for Office 365, but also for Outlook.com (formerly Hotmail) and for Gmail. Even plain SMTP is available. In this tip, I'll use the Outlook.com connector.

First, you'll need to sign in:

Make sure pop-ups are allowed. The Edge browser gave me a lot of trouble with this; even turning off the pop-up blocker didn't seem to help. Chrome worked without issues:

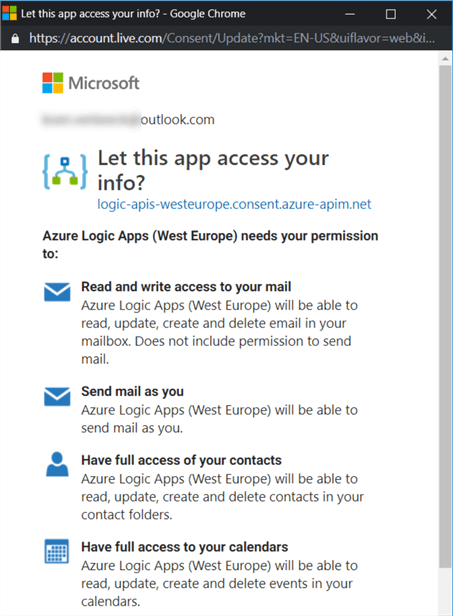

Log in and give consent for Logic Apps to access your mailbox. In a production environment, you'll probably want to use a mail-enabled service account whose mailbox (and calendar, contacts, ...) is empty. If you have two-factor authentication set up, you might also get a notification on your mobile device.

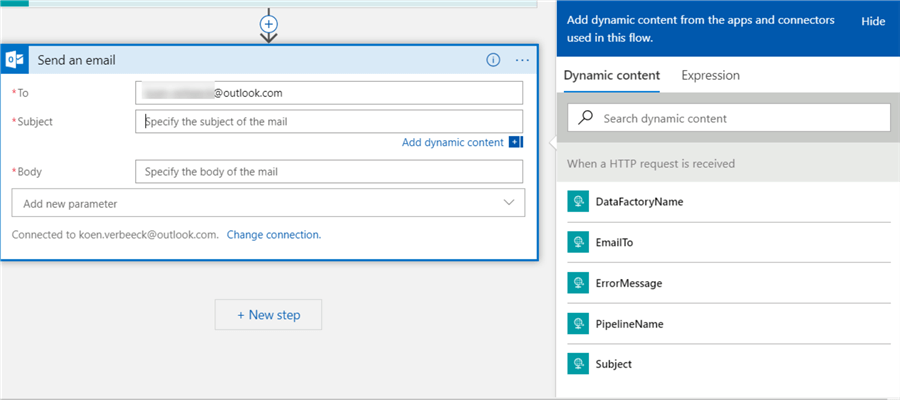

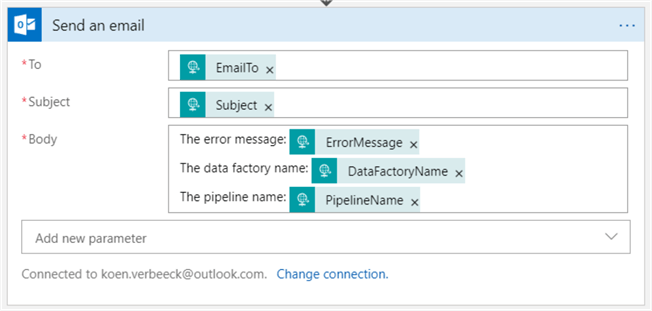

Once you're logged in, you can configure the action. We're going to use dynamic content to populate some of the fields.

The result looks like this:

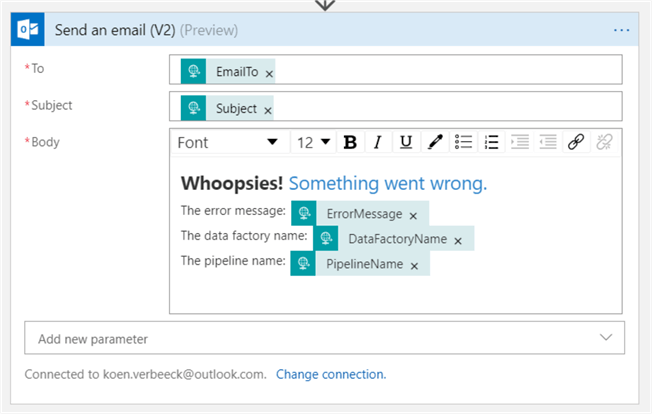

For Outlook.com, there's also a V2 of the send mail action. The difference is that V2 lets you send rich content. An exaggerated example:

You can format text, but also include hyperlinks (maybe a link to a log file?). This action is still in preview at the time of writing and, as such, might be buggy from time to time. For example, using bullet points put the entire body in a bullet point instead of just a portion of the text. For the remainder of this tip, we'll use V1 of the send mail action.

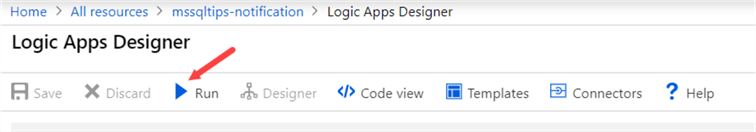

At this point, the Logic App is finished. If you try to test it using the Run button, the Logic App will fail because no body is passed along in the HTTP request.

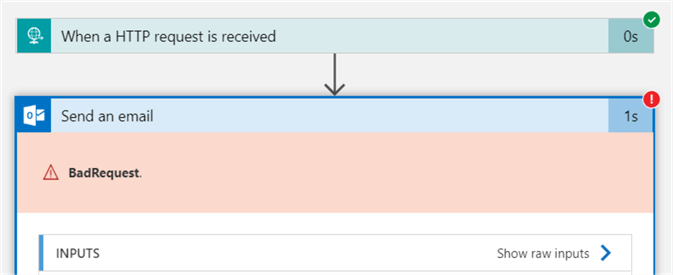

The following error is returned:

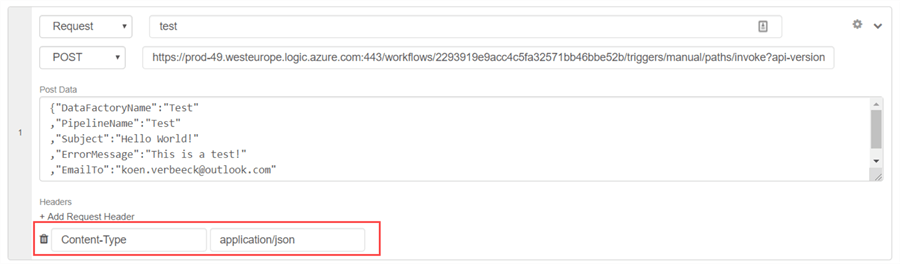

So how can we test our app? We can use an online API tester, like https://apitester.com/. Using this website, you can construct a POST HTTP request where you pass along a body with a content of your choosing.

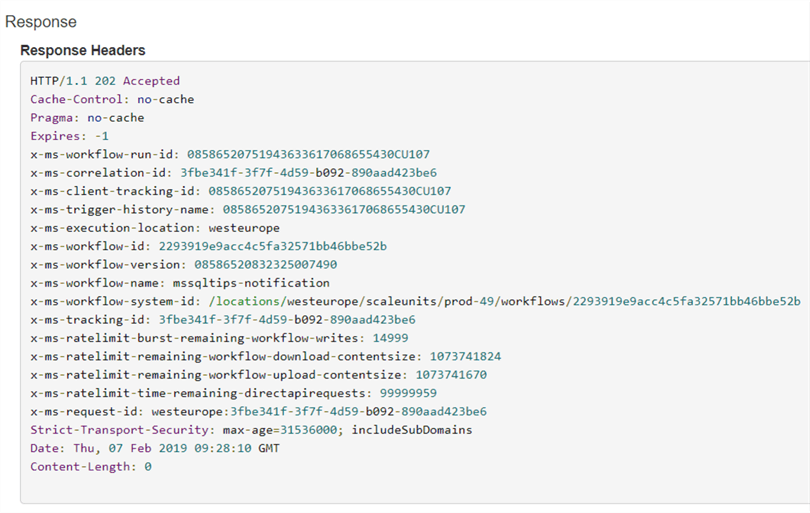

Don't forget to add a request header that sets the content type to application/json (Content-Type: application/json), or the Logic App will fail again. When you click Test, the POST request is sent to the Logic App endpoint. The website will tell you whether the HTTP request was successful, and it will also show the response headers:

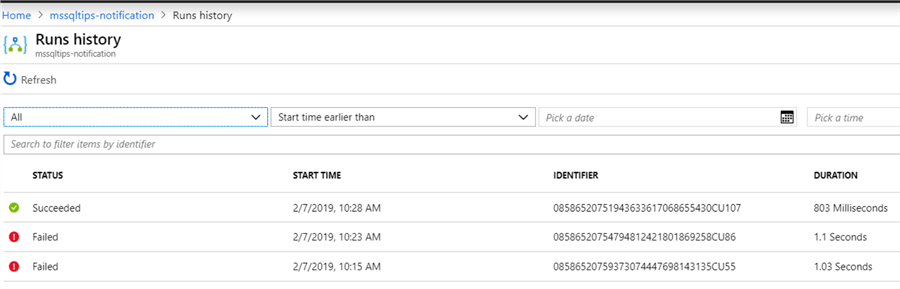
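If you'd rather not paste the Logic App URL into a third-party website, the same test can be scripted. Below is a minimal Python sketch using only the standard library (`urllib.request`). `LOGIC_APP_URL` is a placeholder you would replace with the HTTP POST URL shown in the trigger of the Logic App designer, the field values are made up, and `build_request` and `send` are hypothetical helper names. The Content-Type header is set explicitly, since leaving it out makes the Logic App fail.

```python
import json
import urllib.request

# Placeholder: replace with the "HTTP POST URL" copied from the Logic App trigger.
LOGIC_APP_URL = "https://<your-logic-app-trigger-url>"


def build_request(url, payload):
    """Create a POST request with a JSON body and an application/json Content-Type header."""
    data = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the HTTP status code (urlopen raises HTTPError on 4xx/5xx)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


if __name__ == "__main__":
    req = build_request(LOGIC_APP_URL, {
        "DataFactoryName": "adf-demo",
        "EmailTo": "support@example.com",
        "ErrorMessage": "Test message",
        "PipelineName": "LoadSalesData",
        "Subject": "Logic App test",
    })
    # urllib normalizes header names, so "Content-Type" is stored as "Content-type".
    print(req.get_method(), req.get_header("Content-type"))
    # Uncomment once LOGIC_APP_URL points at your trigger:
    # print(send(req))
```

As written, the script only builds the request and prints `POST application/json`; the actual call stays commented out so nothing is sent until the placeholder URL has been replaced.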

You can check the run history of the Logic App in the Azure Portal:

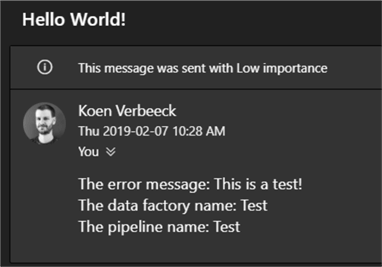

And of course, you can also verify whether the e-mail has arrived in your inbox:

In the next part of the tip, we'll explain how you can integrate the Logic App into the ADF pipeline.

Next Steps

- An interesting article comparing Flow, Logic Apps, Webjobs and Azure Functions.

- More tips about Logic Apps:

- You can find more Azure tips in this overview.

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

This author pledges the content of this article is based on professional experience and not AI generated.
