By: Jeffrey Yao | Comments (4) | Related: More > Import and Export

Problem

As a SQL Server Business Intelligence Professional, I work with numerous large Excel files every day. I need to either submit files to others or upload the files to my SQL Server data warehousing system. To ensure the quality of my SQL Server data, I want to do Excel file data validation of the data in these files per business requirements before I even start to process them. What can I do?

Solution

To validate data, we can rely on Regular Expression for Excel files. One common way to process Excel files is to use the COM Interop interface. In PowerShell, that code is like the following:

$ExcelApp = new-object -com Excel.Application; #then you can handle Excel files using this $ExcelApp

However, this approach requires that the Excel application be installed. In many server environments, Microsoft Office is not installed. On the other hand, the performance of this COM approach is usually slow when handling big Excel files and is not supported:

Microsoft does not currently recommend, and does not support, Automation of Microsoft Office applications from any unattended, non-interactive client application or component (including ASP, ASP.NET, DCOM, and NT Services), because Office may exhibit unstable behavior and/or deadlock when Office is run in this environment.

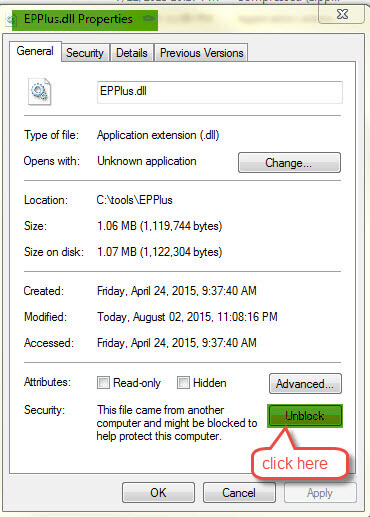

So in this tip, I will use a community-tested and approved approach that does not rely on an Excel installation to work with Excel files. This approach relies on an open-sourced DLL file, EPPlus.dll. After downloading the zip file and extracting the EPPlus.dll to a folder, say C:\Tools\EPPlus.dll, right-click the file and from the pop-up menu click properties and then Unblock it as shown below:

This solution has two key points:

- Use EPPlus.dll to read Excel files

- Use Regular Expression to perform validations on the cells of interest

PowerShell Source Code for Excel File Data Validation

Below is the PowerShell source code to be used for the Excel file data validation.

add-type -path c:\tools\EPPlus\EPPlus.dll

<#

.SYNOPSIS

Validate data in Excel files (for Excel 2007 and later versions only)

.DESCRIPTION

Validate data in Excel files via Regular Expression or just common strings. The validation can be on

multiple columns, each with different validation criteria.

.INPUTS

ExcelFile: The full UNC path to the Excel file;

Condition: Hashtable format, put ColumnName and its corresponding verification criteria in such format as

@{ColumnName='criteria'; ColumnName2='criteria2'; ...}

Sheet: indicates the Excel file sheet number, so that the verification will be carried on this sheet. The default value is 1

.OUTPUTS

System.Data.DataTable

The data table will contain all abnormal data (i.e. failing the verification criteria), The data table will have 3 columns, Rows | ColumnName | Text, and this

will assist quickly locating the abnormal data in the Excel sheet.

.EXAMPLE

Validate-ExcelData -ExcelFile C:\temp\Person.xlsx -Condition @{Phone='^\d{3}-\d{3}-\d{4}$'; Province='^\w{2}$'} -Sheet 1;

This command validates two columns in the first worksheet of c:\Temp\Person.xlsx, to verify that column [Phone] has the format of ddd-ddd-dddd (d is a digit number, 0 to 9).

It also verifies that column [Province] should have a value of two characters, like AB, ON, BC (these are all Canadian province name abbreviations)

#>

function Validate-ExcelData

{

param(

[Parameter(Position=0, Mandatory=$true)] [ValidateScript ({test-path $_ -pathtype 'leaf'})] [string]$ExcelFile,

[Parameter(Position=1, Mandatory=$true)] [ValidateNotNullOrEmpty()] [hashtable]$Condition=@{}, # The format is "ColumnName='regular-expression'"

[Parameter(Position=2, Mandatory=$false)] [ValidateScript ({ ($_ -gt 0) -and ($_ -le 100)})] [int]$Sheet=1

)

try

{

$result = New-Object "System.Data.DataTable"

$c = New-Object "System.Data.DataColumn" ('Row', [System.Int32]);

$result.Columns.Add($c);

$c = New-Object "System.Data.DataColumn" ('Col', [System.String]);

$result.Columns.Add($c);

$c = New-Object "System.Data.DataColumn" ('Text', [System.String]);

$result.Columns.Add($c);

$xl = New-Object OfficeOpenXml.ExcelPackage $ExcelFile;

$wb = $xl.Workbook

if ($wb.Worksheets.count -lt $sheet) #$sheet cannot be beyond the existing WorkSheets

{

Write-Error "There is no [$sheet] sheet in this Excel file." -ea stop;

}

$ws=$wb.Worksheets[$Sheet]

$dm=$ws.Dimension

$Rows=$dm.Rows;

$Columns=$dm.Columns;

[string[]]$array_col= 1..$Columns| %{$ws.Cells[1, $_].Text.toupper()};

[hashtable]$valid_cond=@{};

$condition.keys | % {$valid_cond.add($_, $([System.Array]::indexof($array_col, $_.toupper())))}

$valid_cond.GetEnumerator() | % {

if ($_.Value -eq -1)

{

write-error "Column [$($_.Key)] cannot be found in Excel file [$ExcelFile]" -ea Stop;

}

}

foreach ($itm in $valid_cond.GetEnumerator())

{

foreach ($row in 2..$rows)

{

if ($ws.Cells[$row, ($itm.value+1)].text -notmatch $($Condition.get_item($itm.Key)))

{

$r = $result.NewRow();

$r.Row = $row;

$r.Col = $itm.key;

$r.Text = $ws.Cells[$row, ($itm.value+1)].Text;

$result.rows.add($r);

}

}

}#itm

Write-Output $result;

}#try

catch

{

Write-Error $_;

}

finally

{

$result.Dispose();

$result = $null;

$xl.Dispose();

$xl=$null;

}

}

#uncomment and modify the following parameters to do you test in a PS ISE window

#Validate-ExcelData -ExcelFile C:\temp\Person.xlsx -Condition @{Phone='^\d{3}-\d{3}-\d{4}$'; Province='^\w{2}$'} -Sheet 1;

Example

I ran the following test:

Step 1

Generate test data, I used AdventureWorks2012 to dump data out as csv file.

# generate test data import-module sqlps -DisableNameChecking set-location c:[string]$qry =@" select p.Name ,SalesOrderID, SalesOrderDetailID, CarrierTrackingNumber, OrderQty, s.ProductID, s.UnitPrice, s.LineTotal, s.ModifiedDate from sales.salesorderdetail s inner join Production.Product p on p.ProductID =s.ProductID; "@ # tp_w520 is my laptop name, change it to your own invoke-sqlcmd -server tp_w520 -database AdventureWorks2012 -query $qry | Export-Csv -Path c:\temp\Test.csv -Force -NoTypeInformation;

Step 2

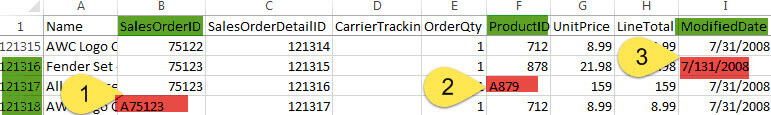

The test data has 120,000+ rows. I used Excel 2013 to open the c:\temp\Test.csv

file and modified the last three lines as shown below to make the data abnormal and

then I saved the modified file as c:\temp\Test.xlsx

- 1st change: [SalesOrderID] should be a number(RegEx check='^\d+$'), but I add a letter "A" in cell (121318, B) to make it invalid

- 2nd change: [ProductID} should be a number(RegEx check='^\d+$'), but I add a letter "A" in cell (121317, F) to make it invalid

- 3rd change: [ModifiedDate] should be a date as MM/DD/YYYY((RegEx check='^\d{1,2}/\d{1,2}/\d{4}$'), but I made cell (121316, I) to be 7/131/2008 to make it an invalid data.

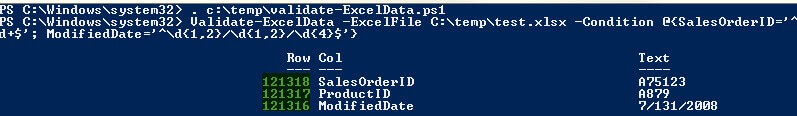

Step 3

Open the PowerShell command line window (ISE is OK as well) and run the following 2 lines of code

. c:\temp\validate-ExcelData.ps1 #dot source the function script, which is saved in c:\temp\ folder

Validate-ExcelData -ExcelFile C:\temp\test.xlsx -Condition @{SalesOrderID='^\d+$'; ProductID='^\d+$'; ModifiedDate='^\d{1,2}/\d{1,2}/\d{4}$'}

The result is shown below:

Summary

This tip suggests a solution to validate Excel data via PowerShell and Regular Expressions. The performance is pretty good, for the example above it took less than 15 seconds to run on my 5 year old laptop (Win 7, 8GB). In the ETL world, another common file type is a CSV file, this generally can be done via Import-CSV and then applying regular expressions to check the corresponding columns.

Next Steps

Please read the following tips that have some similar topics:

- SQL Server Regular Expressions for Data Validation and Cleanup

- Pre-Validating Data with PowerShell Before Importing to SQL Server

- Using a Simple SQL Server Bulk Insert to View and Validate Data

About the author

Jeffrey Yao is a senior SQL Server consultant, striving to automate DBA work as much as possible to have more time for family, life and more automation.

Jeffrey Yao is a senior SQL Server consultant, striving to automate DBA work as much as possible to have more time for family, life and more automation.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips