Problem

Copying data from a table in one instance to a table in another instance is a common task. You could do this with T-SQL and a Linked Server, but what if you can’t use a Linked Server. To make it more difficult, say I have three servers: A, B, C and I want to copy data from a table on A to a table on B by running a T-SQL script on C, how can I do that?

Solution

There are many ways one can copy data across instances, the most common are:

- Creating a Linked Server to the target instance on the source instance, and then using an INSERT … SELECT … FROM [linked_server].

- Using OpenRowSet

- Using BCP.exe to export data out of the source table and then using BCP to import it to source table

- Using latest SQL Server PowerShell cmdlets, i.e. read-SQLTableData and Write-SQLTableData

- Using SQL Server Integration Services (SSIS)

These methods all have their pros and cons. To answer the question of copying tables between different instances by running a T-SQL on a separate centralized instance without Linked Servers, the only alternative way is to use an SSIS Package and create a SQL Server Agent Job to execute it, but this is tedious in both implementation and maintenance.

With R integration in SQL Server 2016, this difficult question has a perfect solution now.

Algorithm Description

SQL Server R service has included a default R package RevoScaleR, which contains lots of functions that are helpful for loading data from various sources, such as the following:

- Using RxSQLServerData to create two objects each connecting the source and the target

- Using RxDataStep to dump the data from the source to the target.

We will see how we can use these to solve our problem.

Environment Setup

There are three SQL instances on my computer, the default instance is SQL Server 2012, another is [localhost\sql2014] and the last is [localhost\sql2016]. What I will do is to run the T-SQL script on [localhost\sql2016] and copy data from default instance [localhost] to [localhost\sql2014] instance and [localhost\sql2014].

On [localhost], I will create a simple table and populate it with a few records

--on [localhost], i.e. source instance

use TestDB

if object_id('dbo.t', 'U') is not null

drop table dbo.t;

go

create table dbo.t (a int, b varchar(100), d datetime);

go

insert into dbo.t (a, b, d)

select 1, 'hello', '2017-01-01'

union all

select 2, 'world', '2017-01-02'

union all

select 3, 'happy FireRooster year', '2017-01-28'

go

-- assume we have created a sql login on this [localhost] instance

Use TestDB

--drop user xyz;

--drop login xyz;

create login [xyz] with password='pa$$W0rD_xyz', check_policy=off;

go

create user xyz for login xyz;

alter role [db_datareader] ADD MEMBER [xyz]; -- give it db_datareader role

go

On the target instance, we set up the destination table.

-- on [loclahost\sql2014], i.e. the destination instance

USE TestDB

if object_id('dbo.t', 'U') is not null

drop table dbo.t;

go

create table dbo.t (a int, b varchar(100), d datetime);

go

-- also assume, we have created a sql login on this [localhost\sql2014] instance

use TestDB;

--drop user xyz;

--drop login xyz;

create login [xyz] with password='pa$$W0rD_XyZ', check_policy=off;

go

create user xyz for login xyz;

go

alter role [db_datareader] ADD MEMBER [xyz]; -- give it db_datareader role

alter role [db_datawriter] ADD MEMBER [xyz]; -- give it db_datawriter role

go

Implementation

The coded needed is relatively simple as shown below:

-- run on the central instance, i.e. [localhost\sql2016]

-- declare variables, so we can change the source/target tables on the fly when doing the

declare @src_conn varchar(300), @dest_conn varchar(300);

declare @src_tbl varchar(200), @dest_tbl varchar(200);

-- set variables

select @src_conn = 'server=localhost; database=TestDB; UID=xyz; PWd=pa$$W0rD_xyz;'

, @dest_conn = 'server=localhost\sql2014; database=TestDB; UID=xyz; PWd=pa$$W0rD_XyZ;'

, @src_tbl = 'dbo.t'

, @dest_tbl = 'dbo.t';

-- run the R code inside t-sql

exec sp_execute_external_script @language = N'R'

, @script = N'

indata <- RxSqlServerData(table = src_tbl, connectionString = src_conn) # when src and target columns have same names otherwise make some modification

outdata <- RxSqlServerData(table = dest_tbl, connectionString = dest_conn);

rxDataStep(inData = indata, outFile = outdata, append = "rows")'

, @params = N'@src_conn varchar(300), @dest_conn varchar(300), @src_tbl varchar(200), @dest_tbl varchar(200)'

, @src_conn = @src_conn

, @dest_conn = @dest_conn

, @src_tbl = @src_tbl

, @dest_tbl = @dest_tbl

with result sets none;

go

After running the script in a SSMS query window, we will see the following:

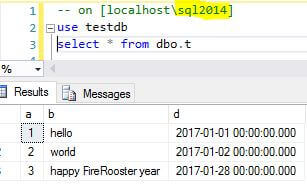

In [localhost\sql2014], we can see that 3 records have been copied from the source table.

Limitations and Workarounds

R integration with SQL Server is still in its early stages, so there is no surprise there were lots of limitations during my research.

The first limitation is both the source table and the target table should have the exact same column names. Otherwise, there will be error like the following.

If we do not have the same column names, we can make a change to indata database source as follows. For example, the source table has columns [a], [b], [c] and the target table has columns [x], [y], [z], then we can alias the source columns as the following:

indata <- RxSqlServerData(sqlQuery="select x=a, y=b, z=c from [src table]", connectionString = src_conn)

The second limitation is that many current SQL data types are not supported, such as XML, varbinary etc, for details see [Restrictions] section.

There is no alternative here. The only way to by-pass it is to run the R code in its native environment (instead of inside T-SQL), for example, you run R code in RStudio IDE.

The third limitation is that if the target table has an identity column, there is no way to turn the identity insert on inside R code.

An alternative is that we load data into a staging table without identity column and later load data from staging to the target table via T-SQL.

One Advantage

There is one specific feature I like most about RxDataStep. In this function, there is a parameter overwrite, if it is set to True, the function can automatically create a target table if it is not there. This is very helpful when we want to just load data into the target instance as staging tables, which we do not need to create beforehand.

Summary

In this tip, we explored a way to copy table data across different SQL instances by running pure T-SQL on a central server, and this tip can be easily customized to do other types of data loading, such as reading CSV file, Excel file, or any ODBC accessible data sources and then writing to destination. In some way, this can be considered a T-SQL command line version of some of the SSIS features.

With T-SQL as a hosting language for R (hopefully Python or others in vNext SQL Server), T-SQL may be more than just a language, it could be a platform.

Although the R language is mainly for data science work, it still brings lots of benefits to DBAs. In some way, it opens up another dimension for database administration and makes some previously tedious easier and some impossible tasks possible.

Next Steps

- We can easily create a stored procedure based on this tip with parameters for the source and target tables, and in scenarios like copying one source table to multiple target tables or vice versa. We can also easily loop through the stored procedure by providing different values to achieve the work efficiently.

- The following links may be helpful for your to dive deep into R language

Jeffrey Yao is a senior SQL Server consultant with 15 years of database administration, his current interests are on database administration automation and visualization. He enjoys reading the concise and smart sql tips and loves R&D work in DBA Engineering topics. His blog is at: http://www.sqlservercentral.com/blogs/jeffrey_yao/.