By: Aleksejs Kozmins | Comments | Related: More > Integration Services Development

Problem

In my SQL Server Integration Services (SSIS) projects I faced a situation where my data flow tasks that were running in parallel and transferring large volumes of data lost their data transfer speed which impacted the entire process. When a data flow task is running much slower with an unpredictable completion time, it may occupy server resources for a long time preventing usage of these resources by other data flow tasks which may heavily impact the productivity of the ETL process.

Solution

In this tip I am going to cover an approach that allows stopping a data flow task in the middle of the process without having to cancel execution for the entire SSIS package. This cancellation request could be because the process is taking a lot longer than normal, there are issues with data quality or for some other reason. The approach below will allow you to trigger the data flow task to stop executing by executing a stored procedure from SQL Server Management Studio (SSMS) or the command line to update a control table that triggers the data flow task to stop running.

General Approach

Below outlines how I am using this process in my environment. I covered a way to load data in parallel in this previous tip which could then be enhanced by the process below.

The data import process requires that each data flow task in the SSIS package is dedicated to a specific table. This way we can control data imports individually.

The following approach was used to resolve the situation:

- When the data transfer speed of a certain table is very slow, the execution of the appropriate data flow task should be interrupted and its name logged in a special stop control table. All other processes will continue to work and run faster because additional server resources will be released.

- When all running TL-packages are finished, the ETL process will launch only the stopped packages.

There are various ways to measure data transfer speed to decide if the data flow task should be interrupted. The question to consider is: how to interrupt a data flow just at the moment when it is necessary?

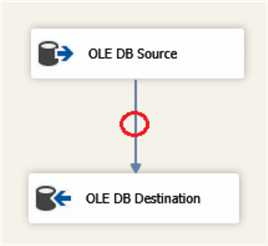

The basic data flow task structure consists of source and destination components as shown in Fig.1.

Fig.1. The basic structure of DF-task.

It is impossible to interrupt a data flow task from within the source or destination component. In fact, it can be done only within the data flow path (red circle in Fig.1). Essentially, it is fairly simple to do this using an OLEDB Command to execute a THROW command to stop the processing of the data flow task.

Example Command: THROW 999999, 'DF-task stopped', 1

The next question is how to stop the data flow at the moment we need it? How to make the process controllable and configurable from outside the package?

Sample SSIS Package

First we look at the Control Flow then the Data Flow.

Control Flow Tasks

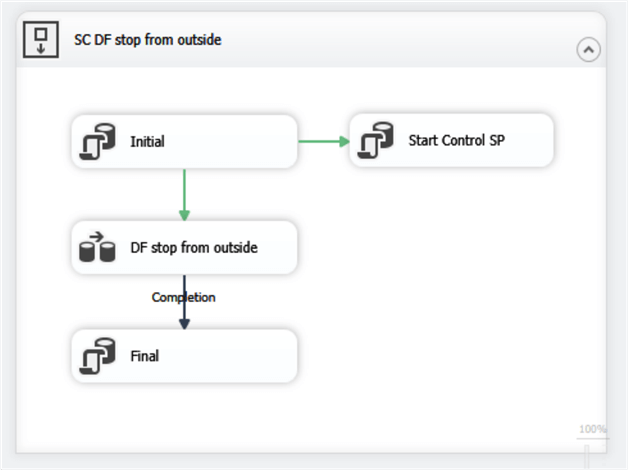

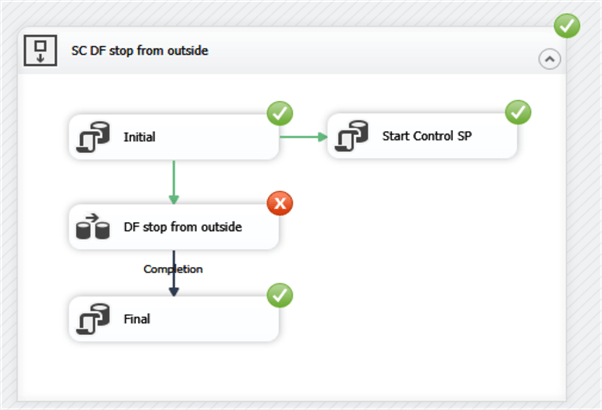

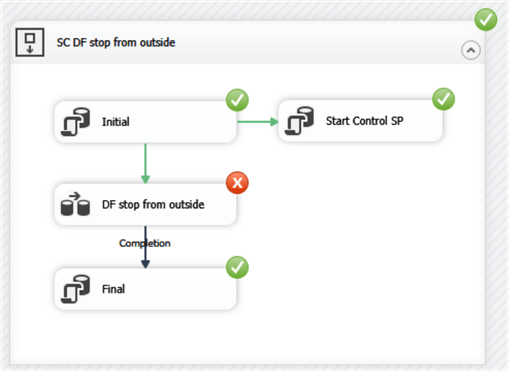

Here is the control flow for the package.

Fig.2. The structure of the SSIS package with the externally controlled DF-task

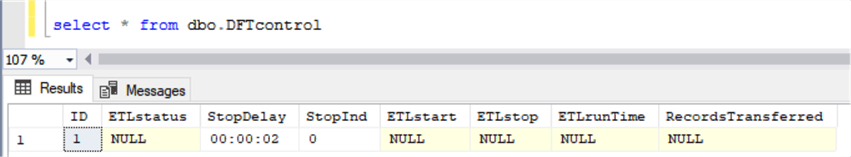

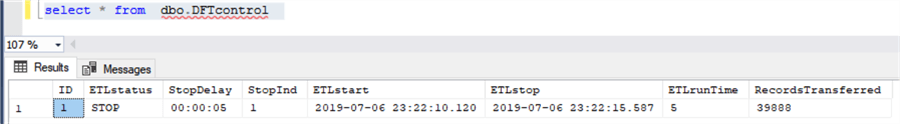

The package uses a control table dbo.DFTcontrol (see below) that allows the process to be stopped:

Fig.3. The structure of the control table

Here is an explanation of the columns in the table.

| Column name | Description | Values |

|---|---|---|

| ETLstatus | ETL process status | START or STOP. |

| StopDelay | Delay time value. It is used in the stored procedure that generates a signal to interrupt the DF-task after the specified delay time. | Current value is 2 seconds. |

| StopInd | The signal to interrupt the DF-task | 0 is the initial value 1 is the signal to interrupt the DF-task. |

| ETLstart | Timestamp of the ETL start | |

| ETLstop | Timestamp of the ETL stop | |

| ETLruntTime | ETL process run time value in seconds | |

| RecordsTransferred | The number of records transferred by the DF-task till it gets interrupted. |

The SSIS package works in the following way. I will describe each task.

- Initial - This truncates the destination table and sets the ETL parameters in the control table as shown below.

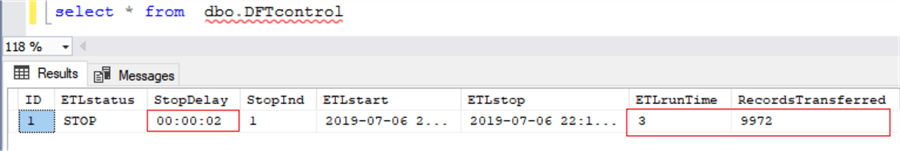

Fig.4. Initial content of the control table.

- Start Control SP- this runs the stored procedure ETLstop which is shown a bit later. This updates the process so we can trigger a failure. In a real world situation, you wouldn't run this task, so one test is to disable this task and run the ETstop stored procedure from within SSMS to trigger the data flow task to stop running.

- DF stop from outside - This is the data flow task to import the data into the database.

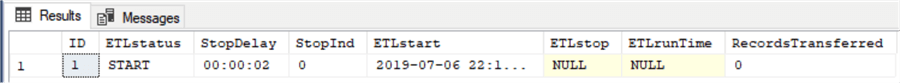

- Final - This updates the control table with the result of the data transfer (see below):

Fig.5. The control table after the ETL completion with the delay of 2 seconds.

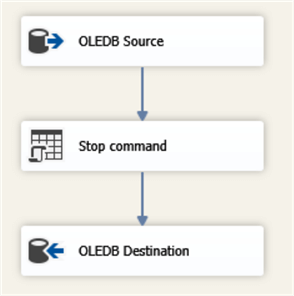

Data Flow Tasks

Here is the data flow.

Fig.6. The DF-task with the stop-component included in the Data Path.

- OLDEB Source - This is a data source that points to a table in a database.

- Stop command - This is an OLE DB Command that checks the status of the control table and if there is a request to stop the process a THROW command is sent. This is checked each time data is passed from the source to the destination.

- OLEDB Destination - This is a data source that points to a table in a database. This includes the mapping of the input and output columns.

In the data flow, the Stop Command component executes the following SQL script:

DECLARE @THROW_STRING VARCHAR(100) = 'DF-task stopped'

IF (SELECT StopInd FROM dbo.DFTcontrol WHERE ID=1) = 1

BEGIN

THROW 999999, @THROW_STRING, 1

END;

During data transfer, the Stop Command component reads the value of StopInd field from the control table that can be updated from the outside (e.g. from a stored procedure). When the command finds StopInd = 1, it throws an error and the dataflow is broken immediately.

The stored procedure dbo.ETLstop generates the interruption signal as shown below. Since this is all being run from the SSIS package, I am using the StopDelay to trigger the failure after so many seconds. As mentioned above, in a real setting you wouldn't have the Start Control SP task executing in the package since this is only used to trigger a failure for this example. This would be done by some outside process to stop the execution.

CREATE PROCEDURE [dbo].[ETLstop]

AS

BEGIN

DECLARE @StopDelay VARCHAR(50);

IF (SELECT ETLstatus FROM dbo.DFTcontrol WHERE ID =1) <> 'STOP'

BEGIN

SELECT @StopDelay = StopDelay FROM dbo.DFTcontrol WHERE ID = 1

WAITFOR DELAY @StopDelay

UPDATE dbo.DFTcontrol SET StopInd = 1 WHERE ID = 1

RETURN

END

END

In the code above, the IF statement is needed only in case the data flow finishes early and the field ETLstatus gets a value of STOP prior to the stored procedure executing.

Sample Execution of the SSIS Package

When the package runs it truncates the destination table, updates the control table that the process has begun. Then it runs the Start Control SP to trigger the stop after 2 seconds. Then it runs the data flow tasks which will stop after 2 seconds. Then the Final task is run that updates the control table. This is all shown below.

Fig.8. ETL execution result with broken data flow task

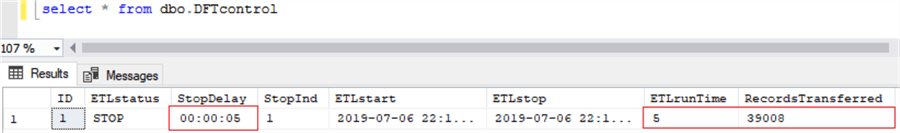

Here is another run if we change the StopDelay to 5 seconds instead of 2 seconds. When the stored procedure is run, a certain number of records get to the destination table during the delay time. The change of the delay value causes changes both of the ETL run time and the number of transferred records (see Fig. 5 compared to Fig.7 below).

Fig.7. ETL run result with the delay of 5 seconds

Note. In order to avoid package failure, the error thrown within the data flow task should not be propagated to upper levels. For this purpose, it needs to set the property MaximumErrorCount = 0 for the sequence container SC DF stop from outside. The result of the package execution in Visual Studio is shown in Fig.8.

Conclusion

The example shows how a dataflow task can be easily interrupted from outside the package depending on the results of the package running. As mentioned above, it can be the data transfer speed measured by a special stored procedure. The data flow gets a signal to stop if the measured data transfer speed is lower than the critical threshold provided in the configuration table. However, it is not the only example of external control of the data flow. For example, it can be a data quality check and the data flow can be interrupted if the quality measure indicator exceeds a certain threshold.

Setup Test Environment

To reproduce what is given in the article download DF_test.zip and perform the following steps.

- Create and populate the training database with the SQL scripts, in CreateTrainingDB.zip.

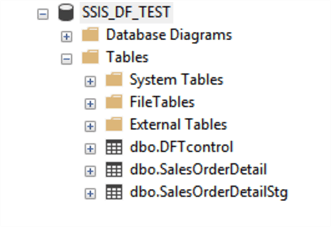

- Run the scripts in order based on the number in the file names. The new DB structure should include the following tables (see Fig.9) and stored procedure dbo. ETLstop.

Fig.9. The structure of dbo.SSIS_DF_TEST.

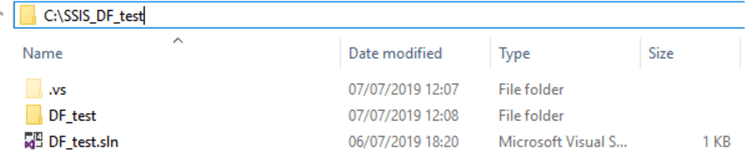

- Create folder C:\SSIS_DF_test and unzip SSIS_DF_test.zip to this folder (see Fig.10).

Fig.10. The unzipped ETL components

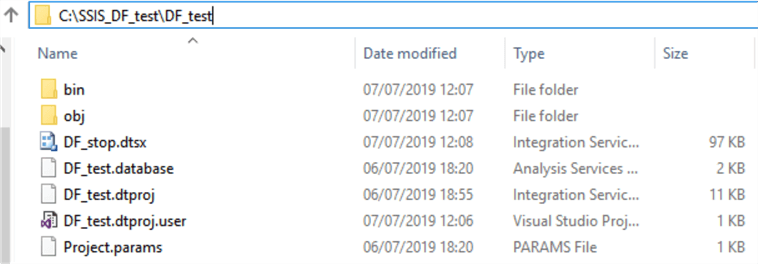

- Open Visual Studio, find and open the solution file C:\SSIS_DF_test\DF_test.sln.

- Expand the solution view, find the package DF_stop.dtsx and execute it. The results should look as below.

- The results in the control table should be similar to below.

Fig.11. The ETL run result

Next Steps

- Check out my prior tip: Parallel Execution of Tasks in SSIS

About the author

Aleksejs Kozmins is a Data Warehousing and Business Intelligence professional, focused on efficient SQL Server Integration Services processes.

Aleksejs Kozmins is a Data Warehousing and Business Intelligence professional, focused on efficient SQL Server Integration Services processes.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips