By: Fikrat Azizov | Comments (2) | Related: > Azure Data Factory

Problem

In these series of posts, I am going to explore Azure Data Factory (ADF), compare its features against SQL Server Integration Services (SSIS) and show how to use it towards real-life data integration problems. In previous posts, we have discussed copy and transformation activities. Some data integration scenarios require iterative and conditional processing capabilities, which could be achieved using ADF’s control flow activities.

In this post, I will provide a high-level description of the control flow related pipeline activities and show an example of how to use the Execute Pipeline activity.

Solution

Azure Data Factory Control Flow Activities

ADF control flow activities allow building complex, iterative processing logic within pipelines.

The following control activity types are available in ADF v2:

- Append Variable: Append Variable activity could be used to add a value to an existing array variable defined in a Data Factory pipeline.

- Set Variable: Set Variable activity can be used to set the value of an existing variable of type String, Bool, or Array defined in a Data Factory pipeline.

- Execute Pipeline: The Execute Pipeline activity allows a Data Factory pipeline to invoke another pipeline.

- If Condition: If Condition activity allows directing pipeline execution, based on evaluation of certain expressions.

- Get Metadata: Get Metadata activity can be used to retrieve metadata of any data in Azure Data Factory.

- ForEach: The ForEach activity defines a repeating control flow in your pipeline.

- Lookup: Lookup activity can retrieve a dataset from any of the Azure Data Factory supported data sources.

- Filter: Filter activity can be used in a pipeline to apply a filter expression to an input array.

- Until: Until activity executes a set of activities in a loop until the condition associated with the activity evaluates to true.

- Wait: Wait activity allows pausing pipeline execution for specified time period.

- Web: Web activity can be used to call a custom REST endpoint from a Data Factory pipeline.

- Azure Function: The Azure Function activity allows you to run Azure Functions in a Data Factory pipeline.

Some of these activities (like Set Variable Activity) are relatively simple, whereas others (like If Condition activity) may contain two or more activities.

We have already covered the Append Variable and Set Variable activities in the Pipeline variables post and I am going to explore the Execute Pipeline activity in the next section.

Azure Data Factory Execute Pipeline Activity Example

The Execute Pipeline activity can be used to invoke another pipeline. This activity’s functionality is similar to SSIS’s Execute Package Task and you can use it to create complex data flows, by nesting multi-level pipelines inside each other. This activity also allows passing parameter values from parent to child pipeline.

To demonstrate an Execute Pipeline activity, I will create an activity pointing to pipeline ExploreSQLSP_PL we created earlier (see Azure Data Factory Stored Procedure Activity Transformation Activities) and pass some parameter values.

The pipeline ExploreSQLSP_PL contains a single activity, which calls SQL stored procedure to store certain static, as well as some run-time values in the ExceptionLogs table. One of the parameters (TableName parameter) for this activity has been originally set to a static string. We will make the following customizations to ExploreSQLSP_PL pipeline, to demonstrate parameter passing between pipelines:

- Add string parameter PL_TableName to ExploreSQLSP_PL pipeline

- Assign activity parameter TableName to pipeline parameter PL_TableName

Once customizations are done, we will create a parent pipeline and add an Execute Pipeline activity, pointing to the ExploreSQLSP_PL pipeline and supply values for the PL_TableName parameter.

Let's follow the below step-by step instructions to create the above-mentioned nested pipelines.

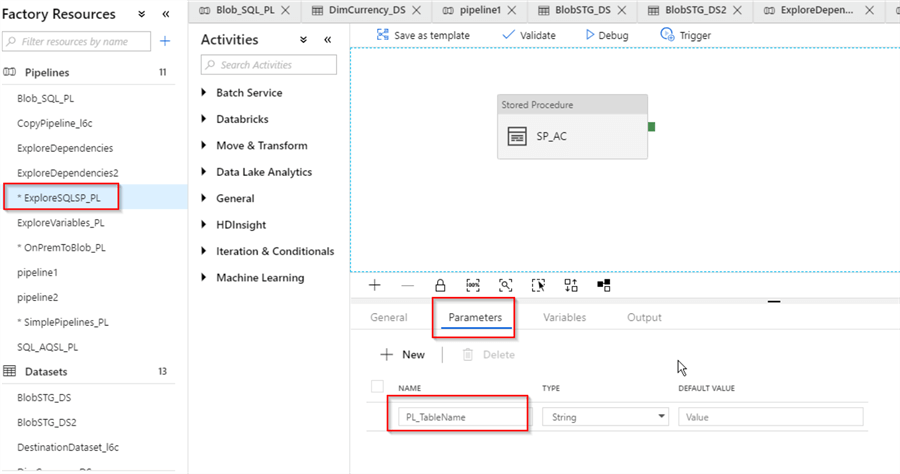

Select ExploreSQLSP_PL pipeline, switch to the Parameters tab and add a new string parameter PL_TableName:

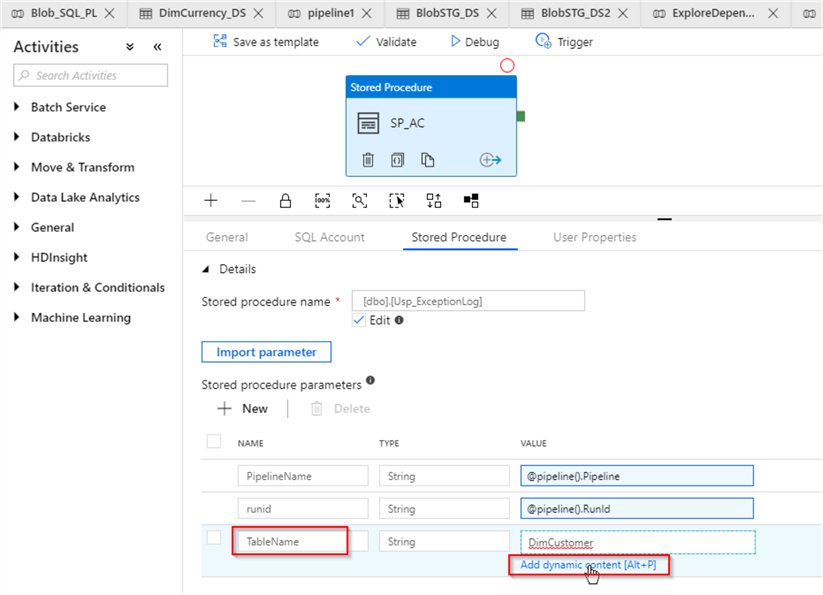

Select activity SP_AC, switch to the Stored Procedure tab, hit value textbox for TableName parameter and click ‘Add dynamic content‘ link under that text box:

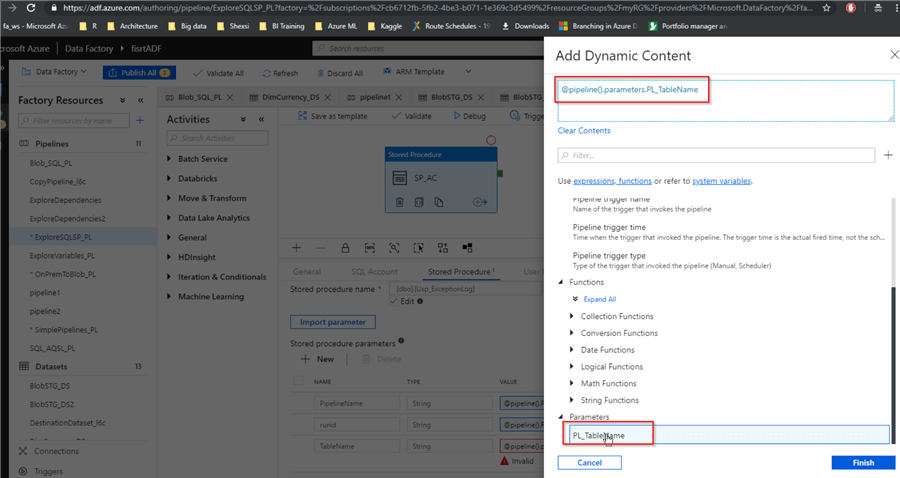

Next, scroll down the screen and select PL_TableName parameter:

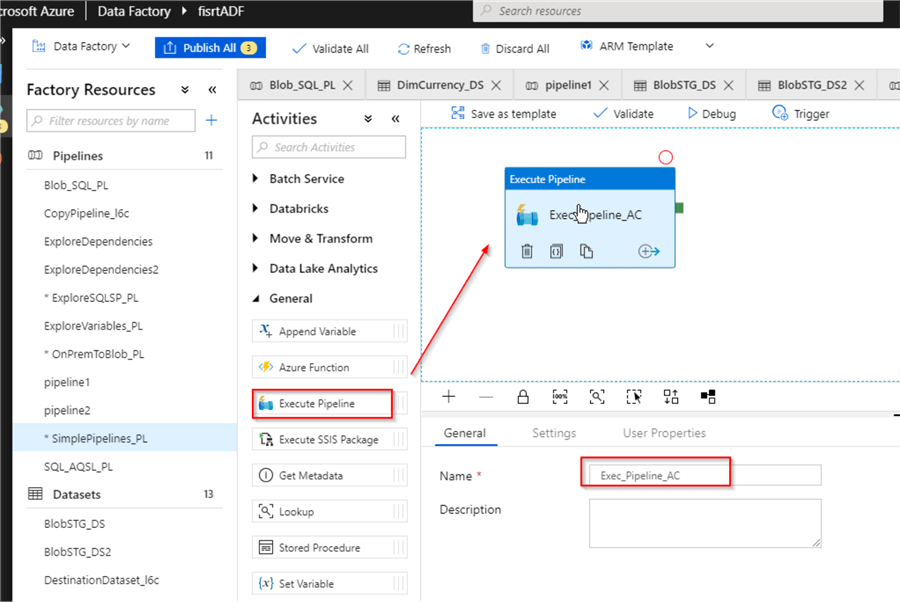

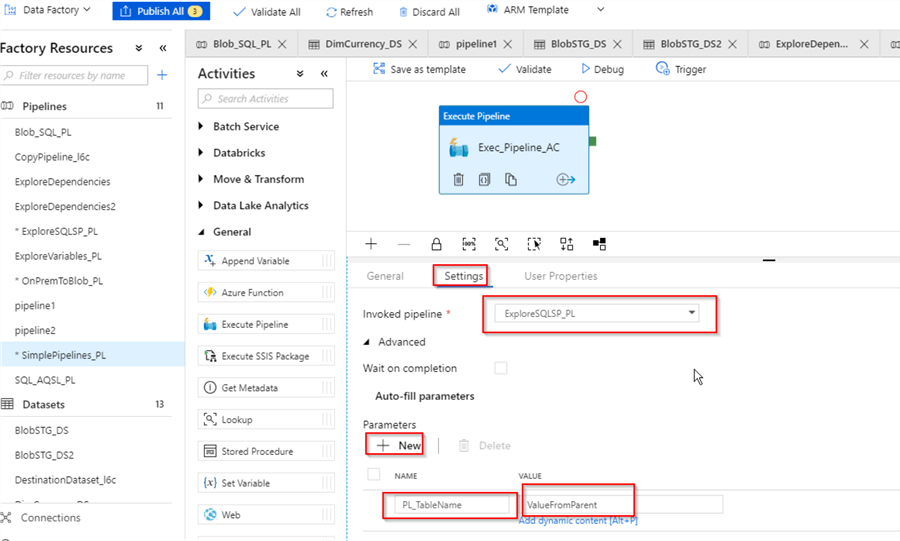

Now that we’ve completed customizations to the child pipeline, let's create the parent pipeline (I named it SimplePipelines_PL) and add an Execute Pipeline activity to it and assign the name (Exec_Pipeline_AC in this example):

Next, switch to Settings tab, select ExploreSQLSP_PL pipeline from Invoked pipeline drop-down list, add new parameter with the name PL_TableName and value ‘ValueFromParent’:

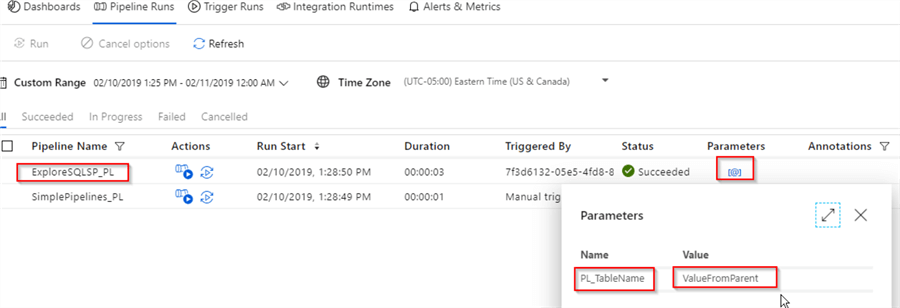

Finally, let’s publish all changes and trigger the parent pipeline SimplePipelines_PL manually and switch to the Monitor screen to examine the execution results:

As you can see from above screen, the child pipeline ExploreSQLSP_PL has been invoked and the string value ‘ValueFromParent’ has been passed to the parameter PL_TableName.

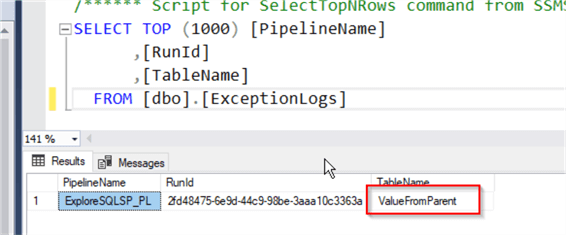

Since the child pipeline’s job is to write into a SQL table, we can examine the table’s content to see the values passed to it from the parent:

Next Steps

- Read: Stairway to Azure Data Factory: Transformations

- Read: Stairway to Azure Data Factory: Variables

- Read: Execute Pipeline activity in Azure Data Factory

About the author

Fikrat Azizov has been working with SQL Server since 2002 and has earned two MCSE certifications. He’s currently working as a Solutions Architect at Slalom Canada.

Fikrat Azizov has been working with SQL Server since 2002 and has earned two MCSE certifications. He’s currently working as a Solutions Architect at Slalom Canada.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips