By: Alejandro Cobar | Comments | Related: 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | > Monitoring

Problem

One of the things that a DBA needs to do is to monitor the status of SQL Server Agent Jobs. This can be done instance by instance, but this takes time. In this article we look at how to collect the last run information for all of the SQL Server instances that are being monitored with this solution.

Solution

This module will collect the information of the very last execution status of each SQL Server Agent Job for all SQL Server instances within the inventory.MasterServerList table.

Prerequisites

In order to use this module, you need to create the core objects found here and also setup this module to collect SQL Agent job information.

Database Objects

One new table will be created and it is a table to store the information of the last execution of the SQL Server Agent Jobs from each instance.

This is the structure of the table, so you can get an idea of what data will be stored.

Note: If you want to add more fields to this table, make sure to adjust the structure within the PowerShell script and adapt the respective logic that will handle the additional fields.

Tables

- monitoring.Jobs

- serverId - serverid ties back to inventory.MasterServerList

- job_name - name of the SQL Agent job

- last_run_date_time - last run date and time

- last_run_duration - length of the run

- last_run_status - status of the last run

- last_run_status_message - any messages from the run

- next_run_date_time - next date and time the job will run

- data_collection_timestamp - when data was last collected.

PowerShell Script

The PowerShell script that creates the above object and inserts data into the monitoring.Jobs table is called:

- Monitor-MSSQL-Instance-Jobs.ps1

The script has some validations that will help you check if some key elements are missing for the script to run successfully. For instance, it will confirm that the inventory.MasterServerList table exists and that it has at least 1 active instance registered to be able to have something to work with, and it will also check that the inventory.Jobs table exists within the central repository database.

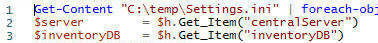

If you have followed along the other modules, you will notice that we have been storing all objects in "C:\temp", but you can use any folder you want. If you make a change to the central folder location, you will need to edit the first line in the following PowerShell script to specify the new folder location.

How to Use

Navigate to the folder where you created the files and you can run the PowerShell script as follows:

Option 1

- Right click on Monitor-MSSQL-Instance-Jobs.ps1 and select Run with PowerShell.

Option 2

- Open a command window and navigate to the folder where you saved the above files and run

powershell "C:\temp\Monitor-MSSQL-Instance-Jobs.ps1"

Option 3

- Schedule this as a SQL Server Agent Job to run the PowerShell script on a predefined basis.

Option 4

- Schedule this as a Windows Task Scheduler job to run the PowerShell script on a predefined basis.

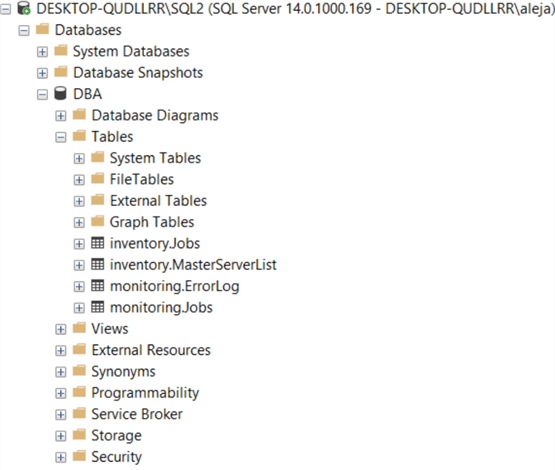

Check Creation of Database and Objects

After running the PowerShell script, we can see the objects that are created.

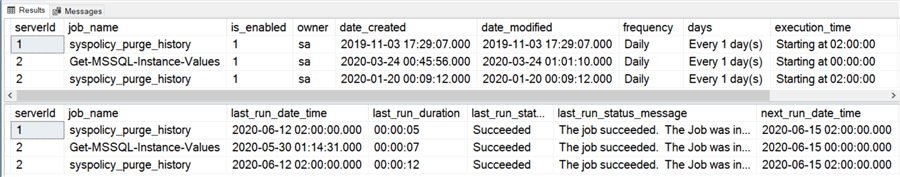

If we query both the inventory.Jobs table and monitoring.Jobs table, we can see the data that has been collected.

Note: The PowerShell script will store only the information from the last execution, no historical data is retained. If you’d like to keep information from previous executions, you would have to modify the script and adapt it to your particular use case.

Checking for Errors

To check for errors query the monitoring.ErrorLog table using the following query:

SELECT * FROM monitoring.ErrorLog WHERE script = Monitor-MSSQL-Instance-Jobs'

If you’d like to know the SQL Server instance that got the errors, you would have to issue the query like this:

SELECT CASE WHEN msl.instance = 'MSSQLSERVER' THEN msl.server_name ELSE CONCAT(msl.server_name,'\',msl.instance) END AS instance, e.script, e.message, e.error_timestamp FROM monitoring.ErrorLog e JOIN inventory.MasterServerList msl ON msl.serverId = e.serverId WHERE e.script = Monitor-MSSQL-Instance-Jobs'

Useful Queries

By collecting all the data related to the execution of jobs across all your instances, you might answer things like:

Which jobs failed the last time that they were executed?

SELECT CASE WHEN msl.instance = 'MSSQLSERVER' THEN msl.server_name ELSE CONCAT(msl.server_name,'\',msl.instance) END AS instance, m.job_name, m.last_run_date_time, m.last_run_duration, m.last_run_status, m.last_run_status_message, m.next_run_date_time FROM monitoring.Jobs m JOIN inventory.Jobs i ON m.job_name = i.job_name AND m.serverId = i.serverId JOIN inventory.MasterServerList msl ON msl.serverId = m.serverId WHERE i.is_enabled = 1 AND m.last_run_status <> 'Succeeded';

Note: Keep in mind that this will report failures only for the jobs within the inventory.Jobs table that are currently enabled. It really doesn’t make sense to know this information for the disabled jobs, but if that’s what you need, then feel free to make the necessary adjustments.

This is a very powerful one, and I highly recommend that you use this as a stepping stone to build a notification mechanism around this data. You could easily have a way to periodically know which jobs are not succeeding, across your entire infrastructure, without necessarily having to configure an email alert within each individual job at each individual instance (imagine you have hundreds/thousands of jobs within your scope without alerts setup).

Which jobs are taking the longest time to complete?

SELECT TOP 10 * FROM monitoring.Jobs ORDER BY last_run_duration DESC

Note: If you are able to identify long running jobs, then probably you have a performance tuning opportunity there. It might not be necessarily the case, but you should be able to tell within your own particular environment.

Download Scripts

Download the scripts for this module

Next Steps

- Download the PowerShell script to collect data for SQL Server Agent Jobs.

- Check out the other parts of this series

About the author

Alejandro Cobar is an MCP, SQL Server DBA and Developer with 10+ years of experience working in a variety of environments.

Alejandro Cobar is an MCP, SQL Server DBA and Developer with 10+ years of experience working in a variety of environments.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips