By: Aaron Bertrand | Updated: 2023-10-10 | Comments | Related: > Query Optimization

Problem

Query performance can fluctuate over time, and it is not necessarily due to a change to the query itself (or to the application code that calls it). Users often ask why a query suddenly got slower even though they haven't published any changes to the application and the underlying data hasn't changed drastically. This article points out some other reasons – and there are many – that a query might be slower today than it was 10 minutes ago, two weeks ago, or last summer.

Solution

Before we dive into possible reasons for performance fluctuations, let's review some background on how SQL Server processes queries.

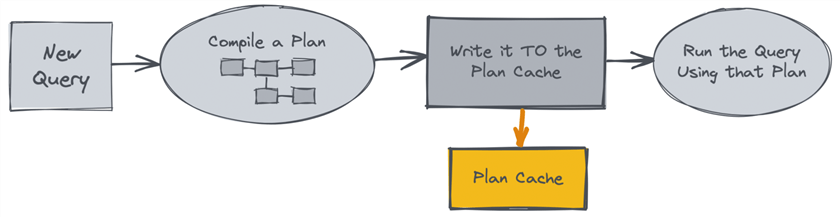

When you submit a query for the first time, the engine produces ("compiles") an execution plan, like a template or flowchart, for how it will satisfy the query. Below is a high-level and quite simplified flowchart:

This isn't always the most optimal plan possible; the optimizer balances minimizing the time it spends with finding a plan that it deems "good enough" to satisfy the given query. The plan includes details like which tables and indexes it will use, what method it will use to perform a join, how it will filter and sort the output, and so on. It makes those decisions based on estimated costs; these guesses are influenced by how many rows exist (according to statistics), how many rows are expected to meet the query criteria, how much memory is available, and other factors. The plan is used to execute the query and stored in the plan cache. This storage is not permanent; a plan will remain in the cache based on a formula involving available memory, complexity, and least recently used (LRU) – if it is a cheap plan and/or doesn't get used frequently enough, it will be evicted from the cache to make room for more complex and/or more "popular" plans. The entire plan cache can be emptied for other reasons, including a service restart, failover, or certain configuration changes or DBCC commands.

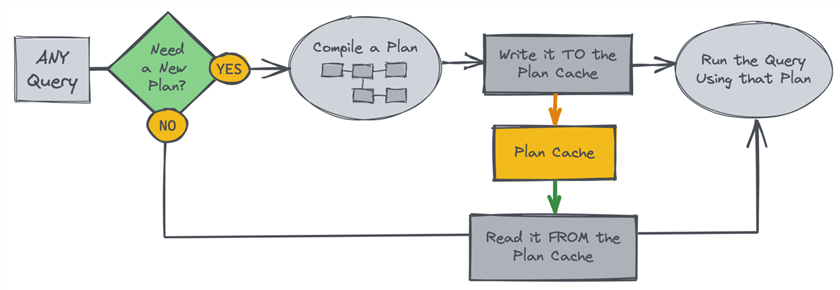

The goal of storing the plan is to promote re-use by subsequent executions of the same query, thereby avoiding paying the compilation cost again. Here is another simplified flowchart:

The downside is that re-using the same plan compiled for one set of parameter values can perform worse given a different set of parameter values (or even if data has changed significantly for the same parameter values). This is because the same approach may not work as well against different data sizes. For many of the query patterns we typically use at Stack Overflow, this compilation cost can often be outweighed by the impact of using a less-than-optimal plan in the wrong scenario.

Imagine a query asking for all the posts (and the comments on those posts for a specific user). If the plan is first compiled for a brand new user (who only has one post) and then later is used for me (over 4,000 posts), it may be optimized for a very low number of reads, leading to sub-optimal processing for the larger data set. In the reverse scenario, it may be optimized for a very large memory grant, which is wasted and can harm concurrency if most executions are for "little fish." In reality, most queries do not have a perfect, one-size-fits-all execution plan, but many will have a "good enough" plan that doesn't have a substantial downside at the extremes.

(Historically, we have called this problem "parameter sniffing," but Microsoft has been trying to push us toward using "parameter sensitivity.")

With that context, the two primary cases we have are:

- The query is slower after building a new, different plan.

- The query is slower even when re-using (or re-creating) the same plan.

New, Different Plan

A query SQL Server has seen before may still get a different plan than last time. While comparing the plans before and after will potentially explain why the different plans have different performance, why the plans are different might be less obvious. Usually, it can be traced to different statistics and/or different parameter values that have changed since the last compilation. This recompile can happen if:

- There is an explicit

OPTION (RECOMPILE)hint on the query. - A table/index/statistics change has invalidated existing plans.

- The new plan could be different based on parameter sensitivity but also due to non-representative statistics sampling (think a migration or backfill that inserts, updates, or deletes a large number of rows).

- The plan has been evicted from the cache due to the algorithm or other influences as described above.

- In typical scenarios, it is very unlikely to be a configuration change, like Optimize for ad hoc workloads, since these changes don't happen often. But if you upgrade the database, change the compatibility level, enable database-scoped features, or patch SQL Server to a new CU level, these can trigger a flurry of new plans.

- Connections have different default schemas or different session settings.

- The query text has changed even though the application code has not – maybe some other change led to different query construction, such as a feature flag or a change to the ORM or API that helps generate the eventual query.

- A query that can't be properly parameterized is considered completely different, requiring its own plan, even though the only difference is a literal - such as a date string embedded in the query. In some cases, every single variation of the query getting passed in may trigger a new compilation. See "Red Flags."

Same Plan

Even if a plan already exists and is the one SQL Server chooses to use, or the plan doesn't exist and the same plan is compiled again, the query can perform worse than before for a variety of reasons (and many of these factors can also come into play when a different plan is created):

- There is higher concurrency, leading to fiercer competition for shared resources such as memory. A lower memory grant can force queries that previously operated entirely in memory to now spill to slower disk, or lack of available memory can force a query to wait for memory to become available.

- There is blocking due to aggressive or wide locks or possibly conflicts with a large data change or cyclical high activity.

- The query is running more frequently than expected – even fast queries can cause problems at scale.

- There's simply more data now (either that the query returns for this specific set of parameter values or overall and has to read past) – certain thresholds (or the "ascending key" problem) can make previous choices less optimal, and they aren't necessarily correlated directly with actual row counts.

- The data may no longer be in the buffer pool like plans, data is also in memory unless it ages out (called "disfavoring"). This means the next time that data is queried, it first has to be lifted from disk into memory, and only then can it be accessed by the query.

- The plan uses an adaptive join or various feedback loop mechanisms, like memory grant feedback, which can cause different operations to occur or different resources to be available for different executions of the same plan.

- Compilation isn't free.

Even generating the same plan over and

over can contribute to decaying query duration for complex queries, so forcing

that through

OPTION (RECOMPILE)is not always ideal either. More complex queries (more joins, CTEs, largeIN()lists, etc.) tend to have longer compilation times, but even for simple queries, this can be amplified in "hot paths" where queries run often and an extra 5ms can be a site-killer. The impact of a hot path query taking even a little more time for any reason is more on the web/application side than the database side.

Mitigation

When a query performs poorly because it now has a sub-optimal plan, the primary goal should be restoring previous performance. One way is to kick the bad plan out of cache, make sure statistics are up to date, and hope it compiles a better plan next time. Unfortunately, this type of immediate mitigation can thwart analysis because you essentially destroy all the evidence. This makes it difficult to determine which of the above factors may have led to the regression. You could consider Query Store plan forcing for queries that repeatedly experience this symptom. Potentially some of the additional performance-related features in SQL Server 2022 will automatically deal with some of these scenarios.

Other scenarios, such as blocking (a necessary part of an OLTP system), tend to resolve in short order. Sometimes, these are due to one-off human errors we almost always learn from.

If data has simply reached thresholds ("tipping points"), it may be impossible to expect SQL Server to generate an efficient enough plan from the given query and existing indexes. We can explore index analysis and/or query enhancements in these cases. For very complex queries (e.g., with many CTEs), one potential fix – as ugly as it seems – is to help the optimizer by materializing some of those CTEs into #temp tables.

Another common case is when we have a one-to-many join (e.g.,

Customers to Orders)

and apply DISTINCT or grouping to the left side when

not actually needing the right side in the output. In this case, it would be better

to use EXISTS and remove the

DISTINCT.

Next Steps

See these tips and other resources:

- SQL Server Performance Tuning Tips

- SQL Server Query Store Tips

- Execution plans 101: (back to) The basics – Hugo Kornelis explains execution plans.

- Multiple Plans for an "Identical" Query – I explain why two seemingly identical queries might generate different plans.

- Different Plans for "Identical" Servers – I talk about different plans for similar servers, but these factors can also explain plan differences for the same server at different points in time.

-

Slow in the Application, Fast in SSMS? – Erland Sommarskog most famously

describes a common reason for this symptom (different

SETsettings). This can happen when you test your query in your local application and again from a web app or API. - Too Many Plans for the Same Query Hash – Eitan Bluman shows how to diagnose when the same query text leads to multiple plans.

- Why Multiple Plans for One Query Are Bad

- What is parameter sniffing?

- Updating Statistics in SQL Server

- How Automatic Updates to Statistics Can Affect Query Performance

- Buffer pool disfavoring

- Plan Caching and Recompilation in SQL Server 2012 – Even though this document was published in 2013, many of the concepts still apply.

About the author

Aaron Bertrand (@AaronBertrand) is a passionate technologist with industry experience dating back to Classic ASP and SQL Server 6.5. He is editor-in-chief of the performance-related blog, SQLPerformance.com, and also blogs at sqlblog.org.

Aaron Bertrand (@AaronBertrand) is a passionate technologist with industry experience dating back to Classic ASP and SQL Server 6.5. He is editor-in-chief of the performance-related blog, SQLPerformance.com, and also blogs at sqlblog.org.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2023-10-10