By: Hristo Hristov | Updated: 2024-02-23 | Comments | Related: > Python

Problem

As a data engineer, you frequently interact programmatically with your database. In some cases, this means constructing a Data Query Language (DQL) command, while in others, it involves Data Definition Language (DDL) statements. As we know, DDL is about modifying the database object and structure. How do you create database objects such as tables programmatically? How do you do so without issuing long string-literal SQL commands from your Python code?

Solution

One way to solve this problem is to use SQLAlchemy, a Python SQL toolkit and Object Relational Mapper (ORM). SQLAlchemy has a handy collection of custom data types, allowing us to define the objects we want to create in a Python script. This article will focus on using SQLAlchemy Core to introduce foundational database metadata objects such as MetaData, Table, and Column and how to use them in your Python project.

Setup

This is a very hands-on article with three major steps to introduce you to working with SQLAlchemy Core:

- Establish a connection using SQLAlchemy

Engine - Define database objects using SQLAlchemy

MetaData - Run the setup

Let's quickly look at the local setup.

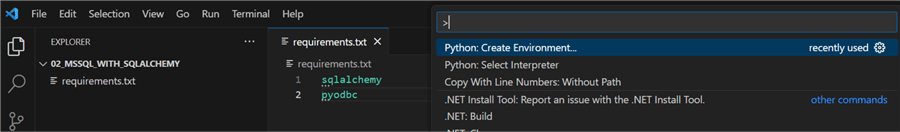

Begin by creating a project folder and open it in VS code. Then, create a requirements.txt

file containing two lines: sqlalchemy

and pyodbc. Next, hit Ctrl+Shift+P

and choose Python: Create Environment.

Follow the prompts for creating a local virtual environment. Make sure to check requirements.txt so the required Python modules will be installed directly:

Checkout my previous tips if you need additional guidance on how to set up your environment. Once the environment has been created, it will be automatically selected for you.

Step 1: Create a Database Connection

To connect to a database, we need an instance of SQLAlchemy

Engine. This is the stepping

stone for all further actions. The

Engine is the basis for

connecting to a database using a specific dialect such as MSSQL, MySQL, or PostgreSQL

(many more are supported). Here, we will use the MSSQL dialect.

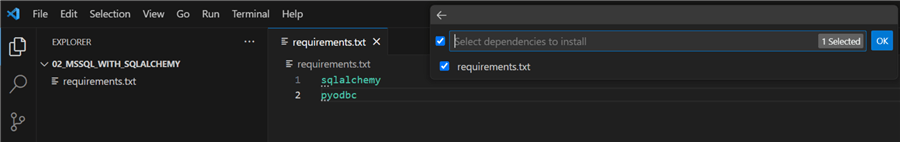

First, let's make a config file containing the connection attributes for an Azure SQL database. In this case, I have chosen a .ini type of file, as it behaves like a dictionary with attributes and allows us to use string data directly without encompassing quotes and escaping (only the % symbol needs escaping in .ini files):

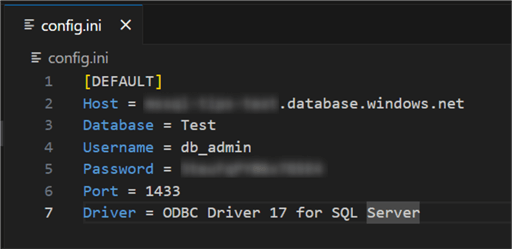

Next, I have a sql_engine.py

file that contains a single class with a single function to create a connection.

The function requires a db_data

argument, which will provide the connection attributes:

01: from configparser import ConfigParser

02: from sqlalchemy import create_engine, URL, Engine

03:

04: class Connector():

05:

06: @classmethod

07: def create_mssql_engine(cls, db_data: ConfigParser) -> Engine:

08: try:

09: params = URL.create(

10: 'mssql+pyodbc',

11: username=db_data['DEFAULT']['Username'],

12: password=db_data['DEFAULT']['Password'],

13: host=db_data['DEFAULT']['Host'],

14: port=db_data['DEFAULT']['Port'],

15: database=db_data['DEFAULT']['Database'],

16: query=dict(driver=db_data['DEFAULT']['Driver']))

17:

18: engine = create_engine(params)

19:

20: return engine

21: except Exception as e:

22: raise ConnectionError(f"Error creating MSSQL engine: {str(e)}")

Let's break it down line by line:

- 01, 02: Import the required external modules.

- 07 – 18: Define a single class method to create a connection. This method uses the URL object, enabling us to pass string configuration values directly without escaping. In this case, the values are coming from our config.ini file.

- 20: Return the engine object.

- 21: Handle exceptions, if any.

With the Connector class in place, we can proceed to the next step.

Step 2: Define Database Objects

To define and create our tables, we will use the SQLAlcehmy

MetaData object, part of

SQLAlchemy Core. This object is a collection of

Table objects. Internally,

it uses a Python dictionary to store the table object data. Having a

MetaData object, we can

define our tables using Table.

Here is an example of how to do that for two tables, Customers and Sales, in a class-friendly

manner. The code is contained in a new file called

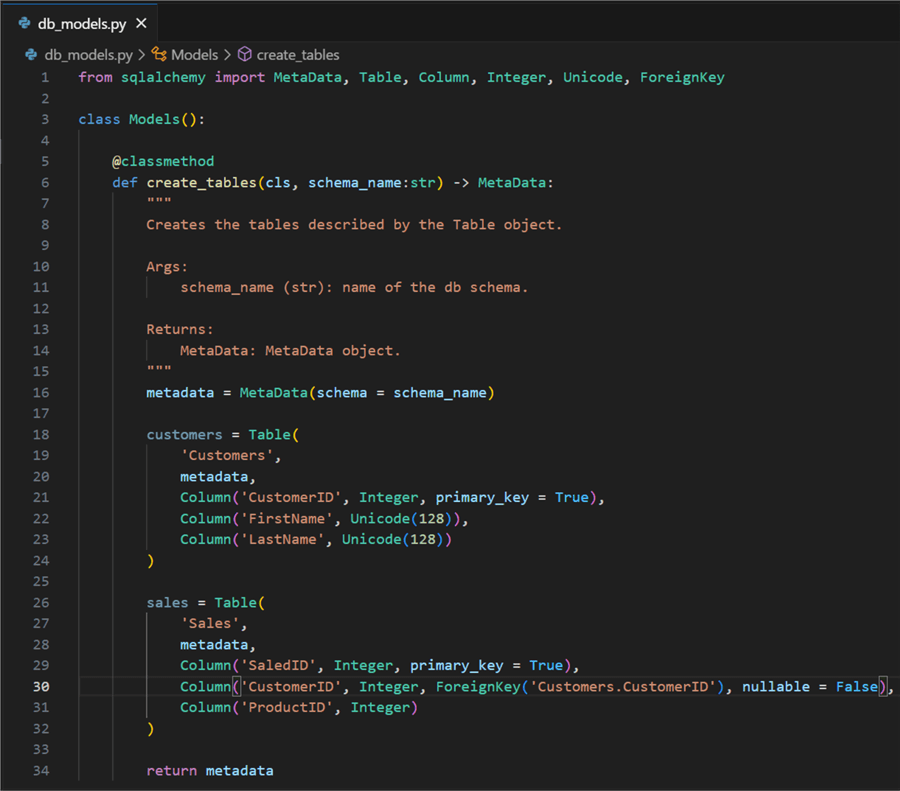

db_models.py:

01: from sqlalchemy import MetaData, Table, Column, Integer, Unicode, ForeignKey

02:

03: class Models():

04:

05: @classmethod

06: def create_tables(cls, schema_name:str) -> MetaData:

07:

08: metadata = MetaData(schema = schema_name)

09:

10: customers = Table(

11: 'Customers',

12: metadata,

13: Column('CustomerID', Integer, primary_key = True),

14: Column('FirstName', Unicode(128)),

15: Column('LastName', Unicode(128))

16: )

17:

18: sales = Table(

19: 'Sales',

20: metadata,

21: Column('SaledID', Integer, primary_key = True),

22: Column('CustomerID', Integer, ForeignKey('Customers.CustomerID'), nullable = False),

23: Column('ProductID', Integer)

24: )

25:

26: return metadata

Let's break it down line by line:

- 01: Import the necessary SQLAlchemy types. Here, we use only a limited number of them. Check this page for a complete list.

- 03 – 06:

We define a class with a single function that will return the

MetaDataobject. - 08: We define

a variable metadata of type

MetaDatathat will act as a container for our tables. - 10 – 16:

Define a table Customers. The

Tableconstructor requires two primary arguments: a name for the table, aMetaDataobject, and a list of column objects. Columns are defined by providing a name, an SQLAlchemy data type, and setting properties such as primary key, foreign, index, etc. In this case, it is a small, basic table. - 18 – 24:

Similar table definition for Sales. It also uses the

ForeignKeytype to make sure the engine issues a foreign key constraint on that column. - 26: Finally,

we return the

MetaDataobject holding our table definition.

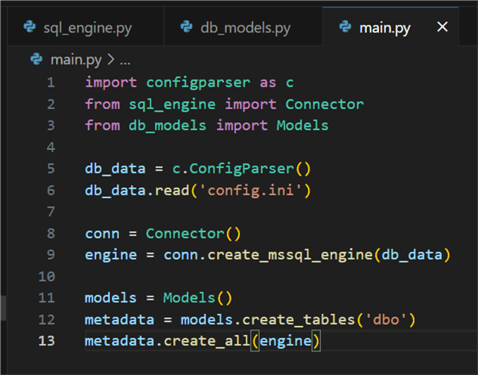

Next, we can create a main.py

file where we can use the code we wrote so far:

01: import configparser as c

02: from sql_engine import Connector

03: from db_models import Models

04:

05: db_data = c.ConfigParser()

06: db_data.read('config.ini')

07:

08: conn = Connector()

09: engine = conn.create_mssql_engine(db_data)

10:

11: models = Models()

12: metadata = models.create_tables('dbo')

13: metadata.create_all(engine)

After having defined our logic for creating a connection engine and database objects, this part of the code is straightforward:

- 05 – 06:

Instantiate a

ConfigParserobject and populate it with our custom config file. - 08- 09: Instantiate

a

Connectorobject and assign theEnginereturn value to a variable. - 11 – 12:

Instantiate a

Modelsobject and assign theMetaDatareturn value to a variable. - 13: Finally, create the database objects.

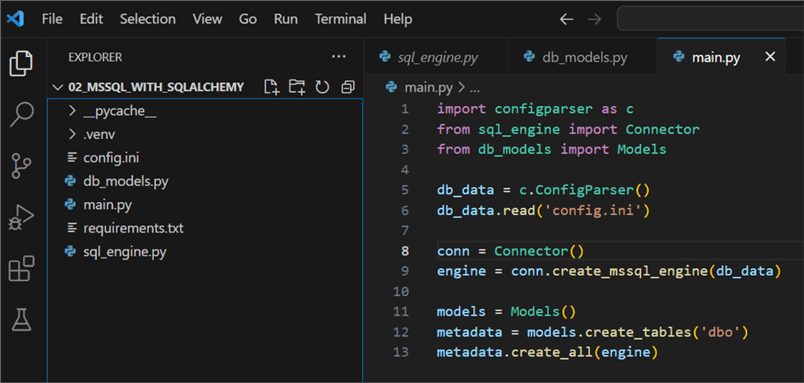

Step 3: Run the Setup

This is what the project namespace looks like:

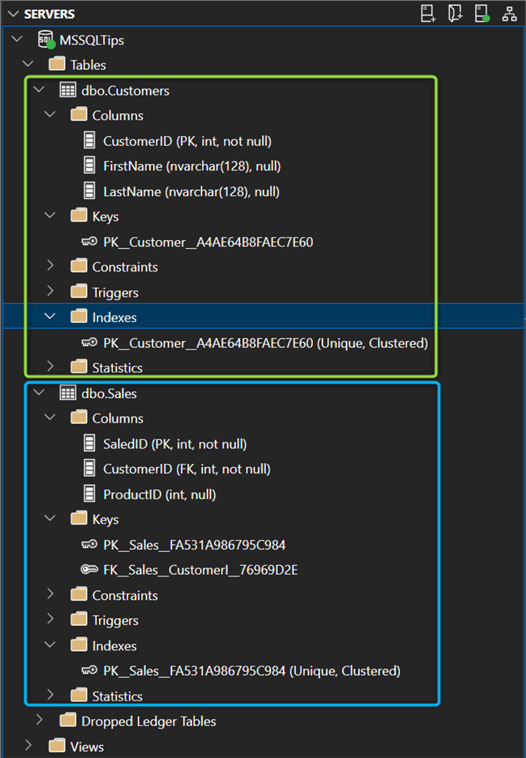

After running main.py, we can check the state of the database from SSMS or Azure Data Studio. The result is we have our two target tables and corresponding primary keys:

Conclusion

We saw how to create an SQLAlchemy

Engine and use database

objects defined by SQLAlchemy types. The setup can be reused and expanded in different

ways, for example, by including it in an Azure function that will run the code automatically

triggered by certain events.

Next Steps

- SQLAlchemy MetaData

- Describing databases with MetaData

- SQLAlchemy Dialects

- SQLAlchemy Table

- SQLAlchemy Column

- How to query SQL with Python pyodbc

About the author

Hristo Hristov is a Data Scientist and Power Platform engineer with more than 12 years of experience. Between 2009 and 2016 he was a web engineering consultant working on projects for local and international clients. Since 2017, he has been working for Atlas Copco Airpower in Flanders, Belgium where he has tackled successfully multiple end-to-end digital transformation challenges. His focus is delivering advanced solutions in the analytics domain with predominantly Azure cloud technologies and Python. Hristo's real passion is predictive analytics and statistical analysis. He holds a masters degree in Data Science and multiple Microsoft certifications covering SQL Server, Power BI, Azure Data Factory and related technologies.

Hristo Hristov is a Data Scientist and Power Platform engineer with more than 12 years of experience. Between 2009 and 2016 he was a web engineering consultant working on projects for local and international clients. Since 2017, he has been working for Atlas Copco Airpower in Flanders, Belgium where he has tackled successfully multiple end-to-end digital transformation challenges. His focus is delivering advanced solutions in the analytics domain with predominantly Azure cloud technologies and Python. Hristo's real passion is predictive analytics and statistical analysis. He holds a masters degree in Data Science and multiple Microsoft certifications covering SQL Server, Power BI, Azure Data Factory and related technologies.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2024-02-23