By: John Grover | Comments | Related: > Amazon AWS

Problem

Three years ago, our organization made the decision to go "all-in" with Amazon Web Services. This was not purely a cost-saving effort, but one that also allowed for far greater flexibility with our IT infrastructure. We have learned a lot during our migration to the cloud, and now that we are in the operational phase we are concentrating on "financial engineering" or how we can use our dollars more efficiently in the cloud to provide a consistent level of service at a lower cost.

Solution

The team jumped into this activity assuming that compute was our biggest expense and began looking at scheduling EC2 instances to run only during business hours, maximizing our use of reserved instances (RIs), and investigating the spot market (buying Amazon's spare computing at significant savings at the expense of instance longevity and stability). Once we started running reports in the AWS billing tool, though, we realized that the biggest percentage of our spend was storage, and all eyes turned to Database Services.

Things to Consider with AWS

There are four main areas to consider when building your database infrastructure in AWS; compute, storage, database as a service, and third-party tools.

Provisioning a database server (we have a mix of SQL Server and Oracle) is an art, combining need for CPU, memory and storage speed based on load, need for HA, etc. Amazon has a wide variety of compute and storage options that can be mixed and matched to meet your performance profile. There is also a database as a service offering (RDS) and many third party tools you can use to analyze what you are spending on cloud services.

AWS Compute

There are "families" of EC2 types that are tuned for processor, memory, and throughput. Cost will vary based on the type and size of the instance you choose. You can go from a t2.nano which is basically a Raspberry Pi-in-the-sky to an x1e.32xlarge with 128 cores and nearly 4TB of RAM. Amazon provides a reference page (https://aws.amazon.com/ec2/instance-types/) to help you choose the type of computing resource you need. Because changing the instance type or size is usually as simple as rebooting the server, take some time to run load tests to optimize your usage, keeping in mind that going up one level in size- large to extra-large or 2xlarge to 4xlarge will double your compute cost. In the cloud, optimizing your usage may mean a compromise between letting a short peak load run a little longer rather than sizing up to that load all the time to cut your cost by 50%.

Saving on compute resources

Reserved instances are a way to save up to 40% by paying for your compute resource up front when you know you will need it long-term rather than paying the on-demand price. This applies especially to database environments. Very rarely do we have a database that we can shut down every evening and start up in the morning to avoid paying for unused compute resource. Our rule of thumb is that if an instance needs to run 40% or more of the time purchasing a reserved instance is the more cost-effective way to go, even for non-production systems.

Spot instances are another way to save money on compute resources. Essentially Amazon places their spare compute up for bid, the caveat is that as soon as someone bids higher than you, your instance will be shut down without much notice and given to the higher bidder. This is an effective strategy in a load-balanced application tier, but really not feasible for a database server (https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/how-spot-instances-work.html).

AWS Storage

Elastic Block Storage (EBS) comes in four flavors: Cold HDD (sc1), throughput optimized HDD (st1), General purpose SSD (gp2), and provisioned IOPS SSD (io1). Early in our move to AWS we provisioned a couple of larger volumes using io2 disk, but did not have monitoring in place to track how many IOPS we really needed for the database. As we entered into a more cost-conscious phase, we realized we were paying thousands of dollars a year for provisioned IOPS we did not need. The lesson here is to keep an eye on your spend even in the beginning of your migration.

Saving on storage resources

Following this, our systems architects suggested that we try st1 storage, at half the cost of gp2 it seemed a logical request. What we quickly learned is that you need to provision volumes at a minimum of 500GB and they are capped at 500 IOPS per volume. Our nightly processing regularly surpasses 1500 IOPS on our data disks so that was a non-starter. The minimum size of 500GB also precluded using st1 as a root volume- our root volumes run about 150GB so even at a 50% savings the requirement to buy 4-5x more disk erased the savings. One of our platforms engineers then pointed out that st1 disk cannot be used as a boot disk anyway. The st1 disk may make sense in a large database that does not require a lot of performance, or you may be able to offset the lack of IOPS by using an instance type with more memory. This is where the ability to build, test, adjust and iterate in the cloud is valuable as you determine which combinations of CPU, memory and storage type will fit your particular requirements without having to spend a lot of money on physical hardware up front. A few hours, a few dollars and a good test suite will allow you to optimize not only performance, but cost as you explore what you can do in the cloud. The lesson here is to be aware of the tradeoff between speed and cost as well as the limitations of the volumes you are provisioning.

Relational Database Service (RDS) Database as a Service on AWS

Amazon offers Database as a Service under the name of Relational Database Service for a variety of database platforms such as Oracle, SQL Server, MySQL, and Postgres. As a managed service you lose some features and flexibility, but if your application just needs a database back end then RDS can save you money over an RDBMS running on an EC2 instance.

Saving on database resources

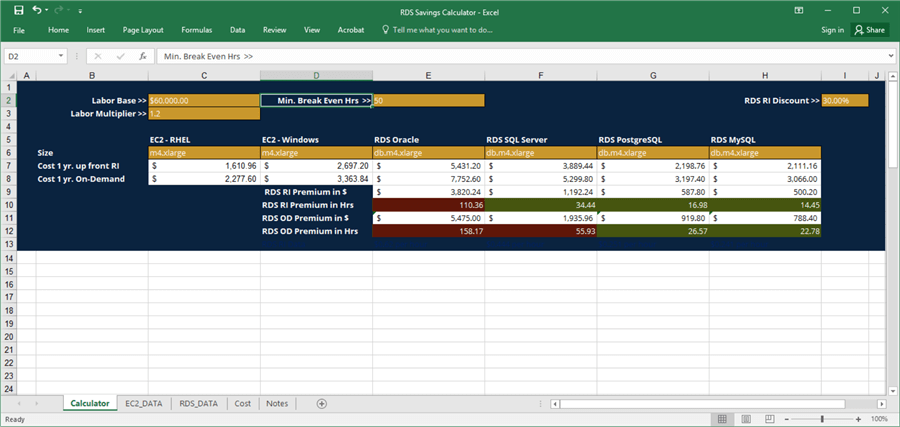

I built a spreadsheet comparing the cost of an RDS instance against and EC2 instance of the same size. From an AWS invoice standpoint RDS looks more expensive, but when you factor in the time your staff will spend patching and maintaining the instance an RDS can show significant savings over a year. Using the formula below, I calculated how many hours a year the managed service needed to save the organization in a year to show cost savings. In some cases it is just a few hours. Using an arbitrary 52 hours a year (1 hour a week) as a cutoff point I was able to identify several families of servers that are far cheaper to run with Amazon doing the maintenance automatically as long as a managed service as long as the required features were available in RDS.

(RDS Annual Cost) - (EC2 Annual Cost + License cost) - (52 * IT Staff hourly salary and benefits) = yearly savings or overage

When this calculation yields a negative number, you will save money using RDS.

The Excel sheet below scrapes AWS cost data from the web and allows the user to adjust the costs for labor and benefits, minimum hours desired to break even on the RDS premium, and the RI discount. By selecting an EC2 instance size in the dropdown in C6 it will calculate the hours required to break even on the RDS premium and color the cells green if it meets the criteria entered. Not all instance sizes have data, but I've found the tool to be useful in presenting to our IT leadership how we can save money (or not) by utilizing Amazon's RDS when it fits our needs. The spreadsheet is available as a download here.

As an example, prices as of the writing of this article, running SQL Server on a Reserves Instance db.m4.xlarge (16GB Memory and 4 CPU) only needs to save 34.44 hours a year in IT staff time to pay for itself, that's an average of about 30 minutes per week. Even a db.r4.2xlarge (60GB memory and 8 CPUs) takes less than 60 hours of staff savings to break even. The lesson here it that when you are evaluating a managed service don't forget to take into account what it costs you to manage your own systems.

Tools for AWS

This is all very easy if you have a dozen servers to deal with, but when your footprint grows to hundreds or thousands of EC2 instances and multi-terabytes of storage you're going to want some help figuring out where you can optimize your cloud spend. There are good third-party tools available to analyze your environment and make recommendations about where you should purchase reserved instances or switch or switch to smaller sized instances based on the wealth of information AWS logs can provide. For the parts of your infrastructure where taking advantage of the spot market is feasible there are also Amazon partners who have been watching the spot market for years and will help you take advantage of huge cost savings where your workload permits. The Google search "aws cost optimization -site:aws.amazon.com" or "aws spot market analysis -site:aws.amazon.com" is a good place to start.

Summary

While cost savings may not be your number one reason for moving to IaaS, you should make informed decisions about the types of infrastructure you choose to build from the very start. The savings can be significant and building a culture of cost efficiency as well as performance efficiency will pay dividends immediately as your engineers and architects look for established or even novel design patterns they may not have considered in an initial, exploratory push to the cloud

Next Steps

- Read and become familiar with the AWS Well-architected Framework, especially the Cost Pillar. Available free in PDF and Kindle format at https://aws.amazon.com/architecture/well-architected/.

- Spend time looking at the different types of compute and storage resources available - https://aws.amazon.com/pricing.

- Search for AWS partners and tools that can help you optimize your cloud environment - i.e. Google search "aws cost optimization -site:aws.amazon.com" or "aws spot market analysis -site:aws.amazon.com".

- Spend time building test servers and running load tests to determine what combination suits your needs.

About the author

John Grover is a 25-year IT veteran with experience in SQL Server, Oracle, clustering and virtualization.

John Grover is a 25-year IT veteran with experience in SQL Server, Oracle, clustering and virtualization.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips