By: Koen Verbeeck | Comments | Related: > Azure Integration Services

Problem

I migrated my SQL Server Integration Services (SSIS) projects to Azure Data Factory, where the SSIS packages are executed in an Azure-SSIS Integration Runtime. The SSIS catalog is hosted in an Azure SQL Database. I’ve noticed the size of the SSISDB database is growing large because of all the logging by the SSIS packages. Is there some sort of maintenance job that I can run in Azure to clean up this database?

Solution

The SSIS catalog stores a lot of information in the SSISDB database: the SSIS packages, projects, folders, environments and so on. It also stores a new version of your SSIS project each time you deploy to the catalog. Furthermore, every SSIS package logs information to SSISDB when it is executing. This means the database will continue to grow in size, unless some of the logging and old projects versions are deleted. In an on-premises database, there is an SSIS maintenance SQL Server Agent Job that take care of this problem. It’s generated when the SSIS catalog is created. You can find more info about the maintenance job in the tip SQL Server Integration Services Catalog Best Practices. The problem is Azure SQL DB doesn’t have SQL Server Agent, so there’s no maintenance job.

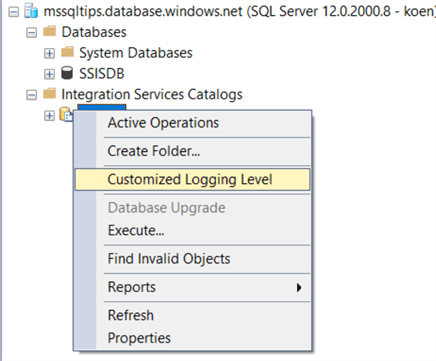

Another problem is the default logging levels of the catalog log too much information to SSISDB, causing it to grow too quickly. Ideally you create a custom logging level which logs less information. If you’re on a version of SSMS lower than 18.0, you need to use a stored procedure to create a custom logging level. Or you can just upgrade to the latest version of SSMS, as you can see in the screenshot (taken with version 18.0):

For more information about the logging levels in SSIS, you can check out the following tips:

- Logging Level Recommendations for the SQL Server Integration Services Catalog

- Integration Services Logging Levels in SQL Server 2016

Let’s now take a look at how we can schedule the maintenance job in Azure.

Creating the Maintenance Job

The easiest option – in my opinion – is to create an Azure Data Factory pipeline with a stored procedure activity. The reason for this is because since you are running SSIS in Azure, you already have an Azure Data Factory v2 (ADF) configured. Executing a stored procedure in ADF is straight forward and you can schedule the pipeline using a trigger.

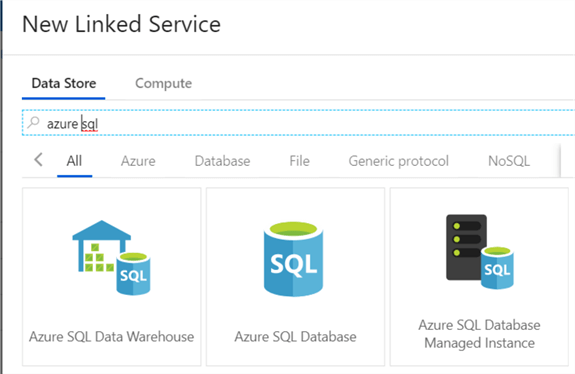

The first step is to add a connection to your SSISDB database in ADF. In the Author pane, go to Connections and then Linked Services. Click on New and select Azure SQL DB from the list of available connectors and click on Continue.

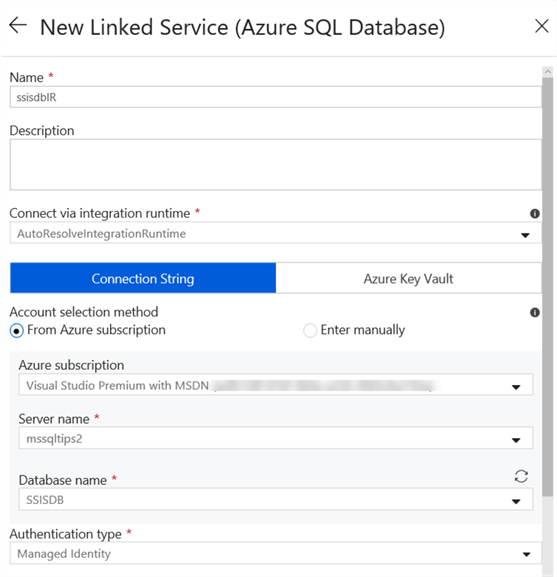

Choose a name for the connection, the integration runtime to connect through (this will normally be the default AutoResolveIntegrationRuntime) and select the subscription, server and database from the dropdowns. You can connect with SQL Authentication, service principal or Managed Identity.

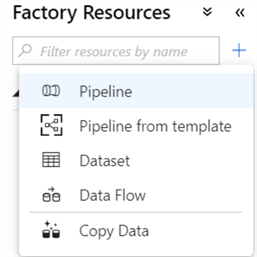

Test the connection and click on OK to create it. The next step is to create a new pipeline in ADF.

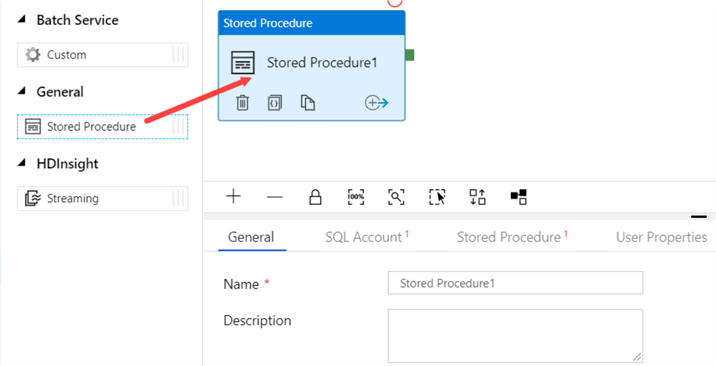

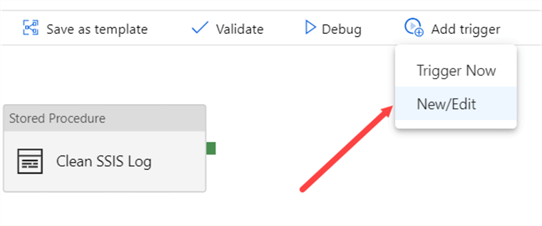

Give it a decent name and add a stored procedure activity to the canvas.

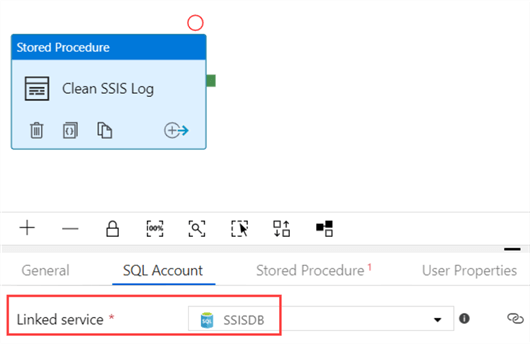

Select the activity, give it a name and go to SQL Account to select the connection we created in the previous steps:

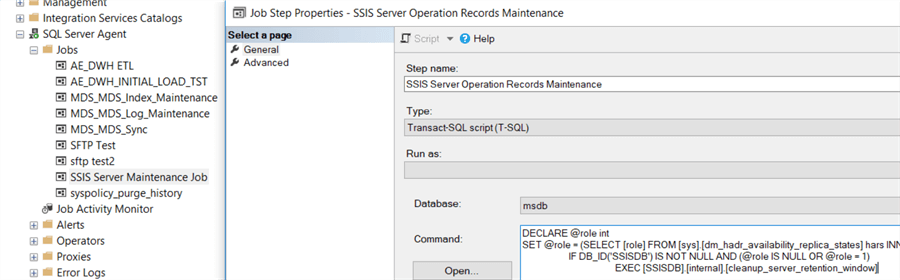

Go to the next tab to configure the Stored Procedure. If you would take a look at an on-premises SSISDB, it has created a SQL Server Agent job, which executes the following script:

DECLARE @role int

SET @role = (SELECT [role] FROM [sys].[dm_hadr_availability_replica_states] hars INNER JOIN [sys].[availability_databases_cluster] adc ON hars.[group_id] = adc.[group_id] WHERE hars.[is_local] = 1 AND adc.[database_name] ='SSISDB')

IF DB_ID('SSISDB') IS NOT NULL AND (@role IS NULL OR @role = 1)

EXEC [SSISDB].[internal].[cleanup_server_retention_window]

We can skip the check for availability replicas and just execute the stored procedure internal.cleanup_server_retention_window.

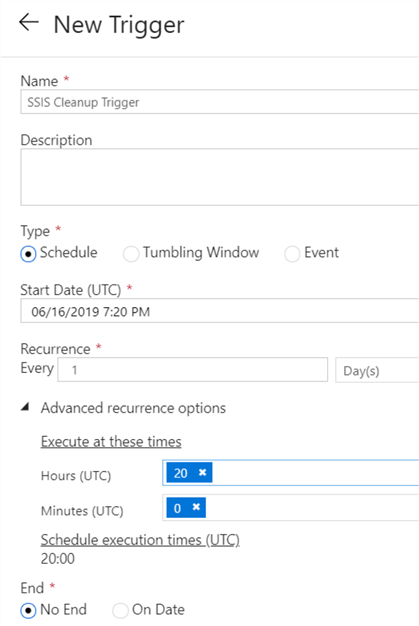

All that is left is to create a trigger for the pipeline.

Choose a name and pick Schedule as the trigger type. Configure the schedule that is appropriate for your environment.

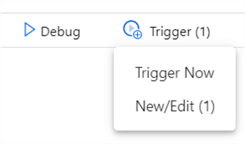

Click on Next and then Finish. Finally publish your pipeline and trigger.

You can trigger the pipeline to test the stored procedure or you can just hit Debug.

Keep in mind if your SSIS catalog has been running for a long time, a lot of logging needs to be cleaned up which can take quite some time.

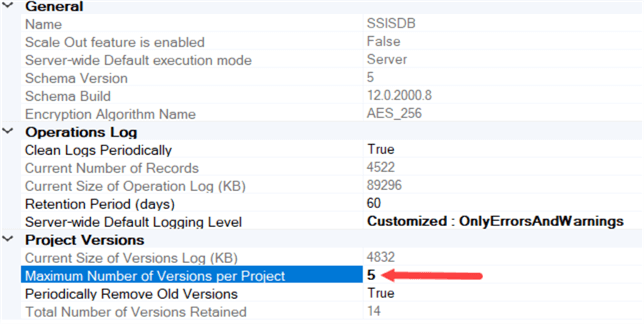

Cleaning Project Versions

Aside from all the logging, there’s an extra overhead in the SSISDB database: old project versions. It’s possible to clean those as well. In the catalog properties, you can configure how many versions the catalog needs to keep before they are removed:

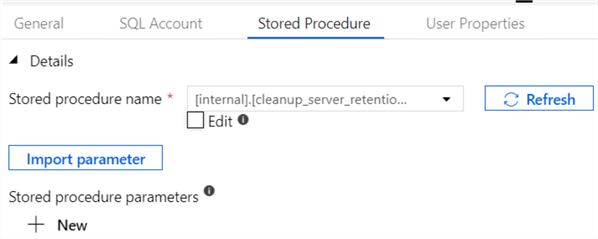

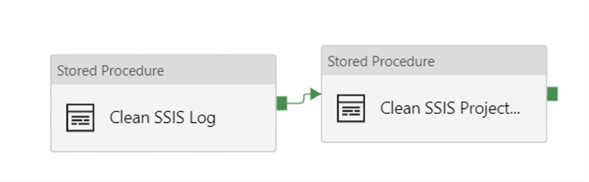

In the ADF pipeline, copy paste the Stored Procedure activity and give it a new name:

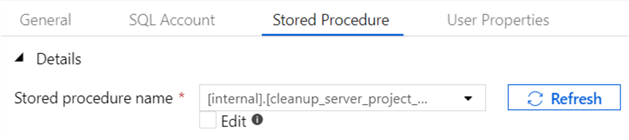

In the Stored Procedure tab, change the stored procedure name to [internal].[cleanup_server_project_version].

Publish the updated pipeline so the changes can take effect.

Alternatives for Azure Data Factory

It’s not a prerequisite to use ADF to schedule the maintenance of your SSISDB database. You can use any tool in Azure that can execute a stored procedure and that can be scheduled. The two best alternatives are:

- Azure Automation, where you execute the same stored procedures in either PowerShell or Python.

- Azure Elastic Database Jobs. In fact, the official documentation gives an example on how to do this in either PowerShell or T-SQL: Clean up SSISDB logs with Azure Elastic Database Jobs. The setup is more difficult though than just using ADF pipelines. For more information about Azure Elastic Database Jobs, check out the tip Introduction to Azure Elastic Database Jobs.

Next Steps

- Try it out yourself! If you have SSIS catalogs in the cloud, it’s certainly a good idea to schedule maintenance to keep the size of the Azure SQL DB small.

- For more information about the Azure-SSIS IR, check out the following tips:

- You can find more Azure tips in this overview.

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips