By: Ron L'Esteve | Comments | Related: > Azure Synapse Analytics

Problem

The numerous new additions to Microsoft's Azure Data platform have been creating both excitement and confusion around a number of similar offerings and their purpose in the Modern Azure Data platform. Azure Synapse Analytics Workspace is one such offering, not to be confused with Azure Synapse Analytics DW, which is a next generation Azure Data warehouse rebranded. While Azure Synapse Analytics DW has been generally available for quite some time now, Azure Synapse Analytics (workspaces) is a relatively new addition to the Azure platform and worth exploring. While customers are beginning to get more comfortable with Azure Synapse Analytics DW, how can they get started with Azure Synapse Analytics (workspaces), which is currently in preview?

Solution

Azure Synapse Analytics (workspaces) is a web-native experience that unifies end-to-end analytics solutions for Data Engineers to empower and enable them to ingest, explore, prepare, orchestrate, and visualize their data through one experience by utilizing either SQL or Spark pools. Additionally, it brings along with it the capabilities for debugging, performance optimization and integration with CI/CD. In this article, I will cover how to get started with Synapse Analytics (workspaces) by leveraging some practical examples, code, and use cases provided by the Synapse Analytics Workspaces Knowledge Center.

Create a Azure Synapse Analytics Workspace

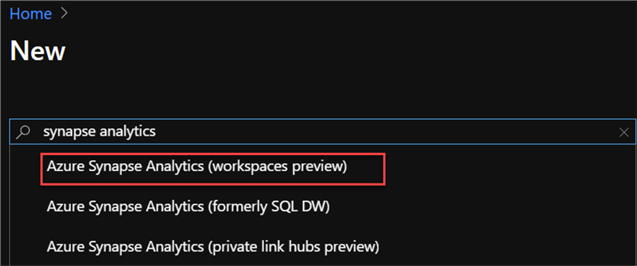

Let's begin by creating a new Azure Synapse Analytics (workspaces preview). Note that there are a few options presented when searching for 'synapse analytics' in the Azure portal. Be sure to choose (workspaces preview) to explore the features presented in this demonstration.

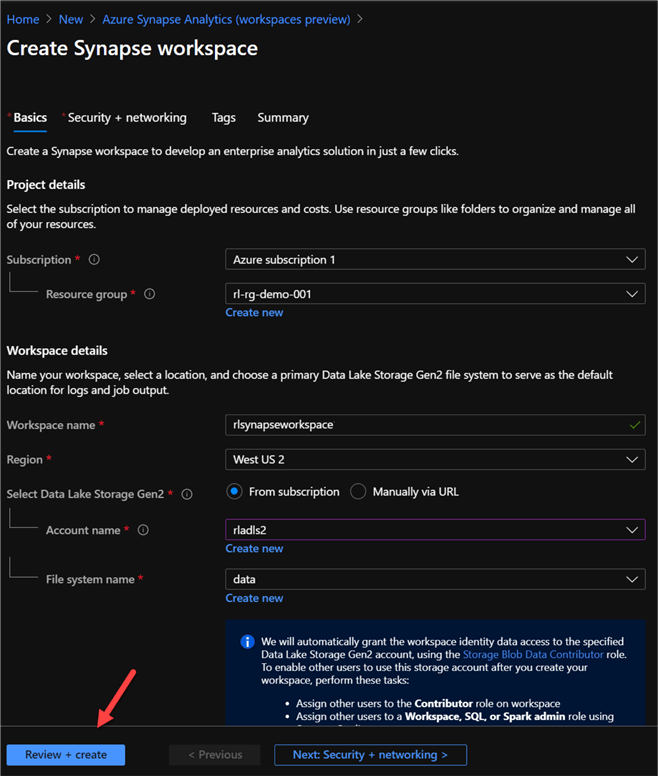

Next, the 'Create Synapse workspace' section will need to be configured and completed. Note that an Azure Data Lake Storage gen2 will need to be created and used in the workspace creation process.

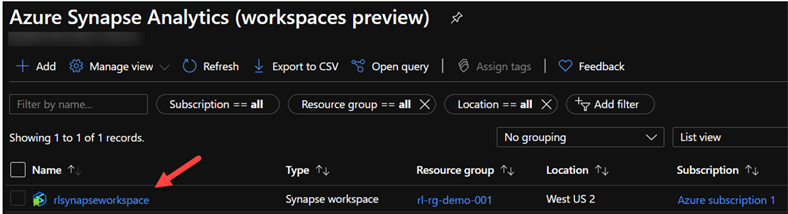

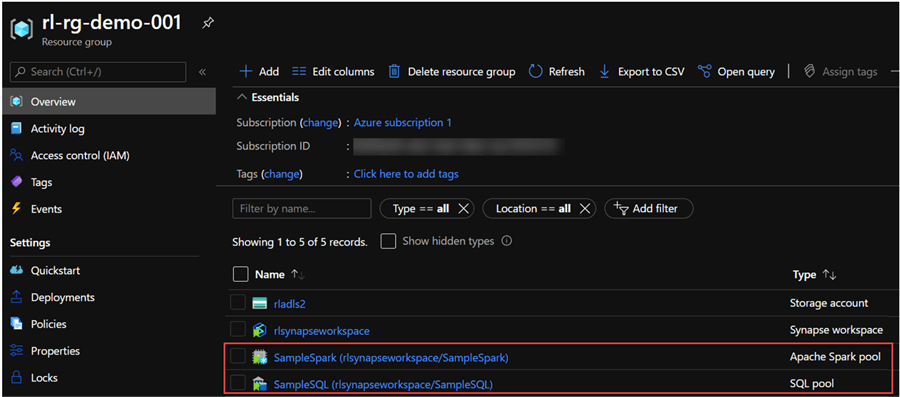

Once the workspace is created, it will be available in the Azure portal in the chosen resource group and appear as follows.

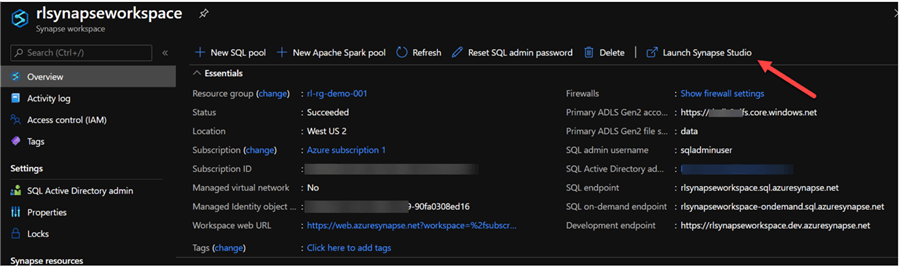

Now that the workspace is created, we are ready to explore the workspace by clicking 'Launch Synapse Studio'.

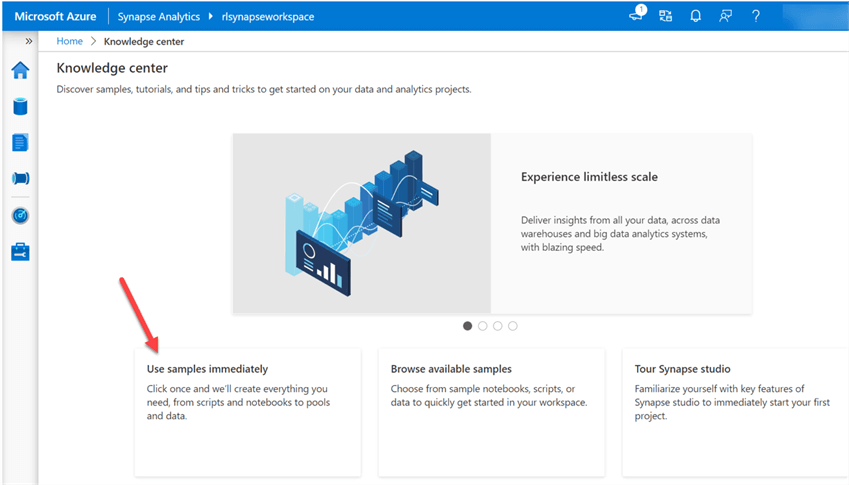

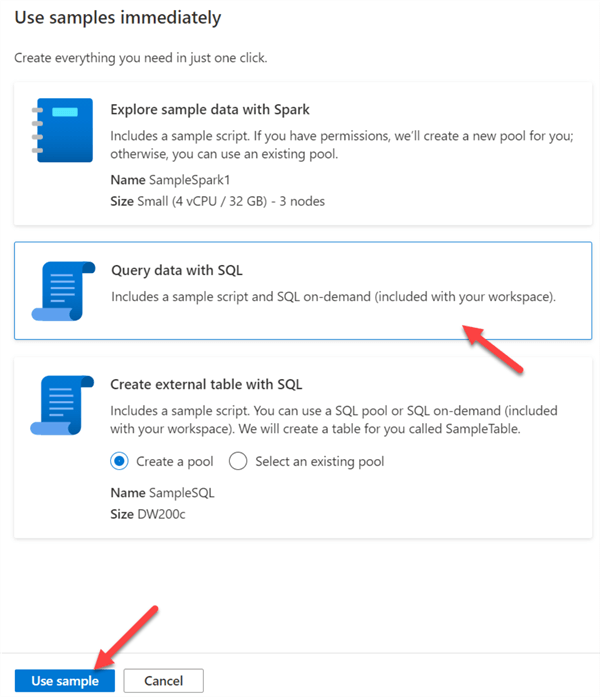

Once the Synapse Analytics Studio workspace launches, navigate to the Knowledge Center and click 'Use samples immediately' to instantly explore scripts, notebooks, datasets, and pools that are automatically provisioned for you.

Explore Sample Data with Spark

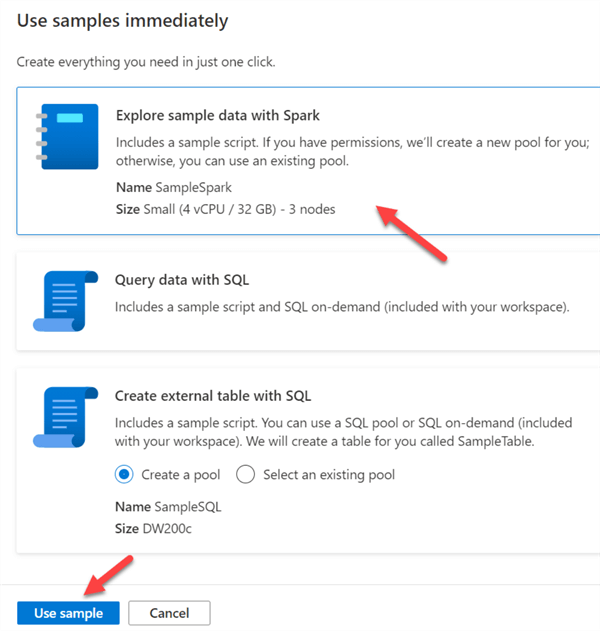

There are three samples included for immediate exploration. The first explores sample data with Spark, includes sample scripts, and even creates a new Spark-pool for you, or you can also bring you own.

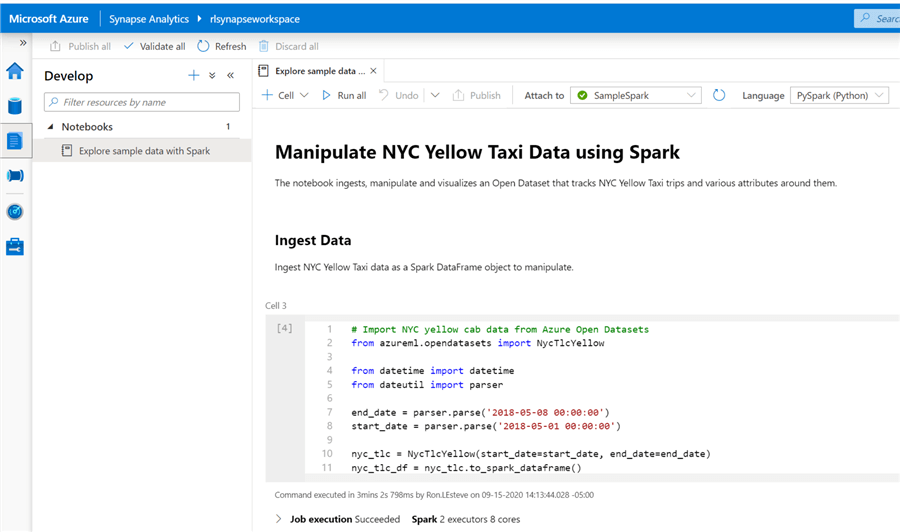

Once the notebook is created, it will contain a number of scripts containing data from the Azure ML OpenDatasets Package. Specifically, this notebook uses the NYC Yellow Taxi Datasets. Once the job completed running, I notice that it used 2 Spark executors and 8 cores to ingest the data.

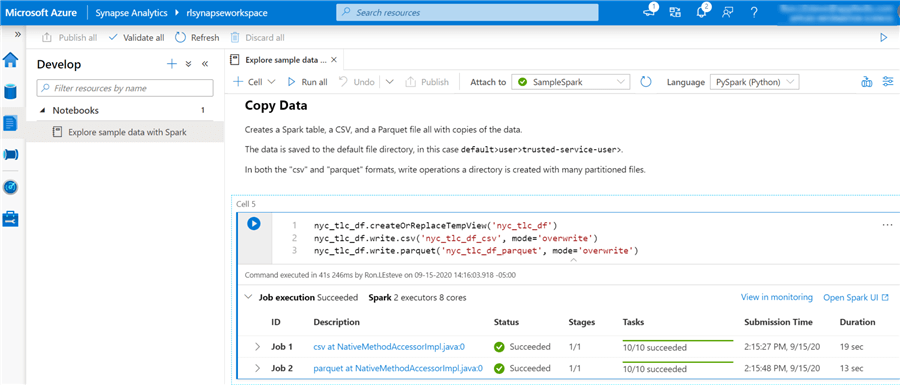

The next step in the process would be to copy the data to the associated Azure Data Lake Store Gen2 account. The script in the notebook will complete this Copy Data task by creating a Spark table, a CSV, and Parquet file with the copied data.

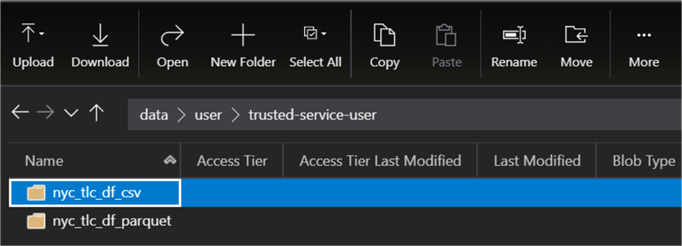

After the jobs completed running, I navigated to the Azure Data Lake Storage gen2 account to verify that both the csv and parquet files had been created. Sure enough, I can see two new folders containing the NYC Taxi datasets.

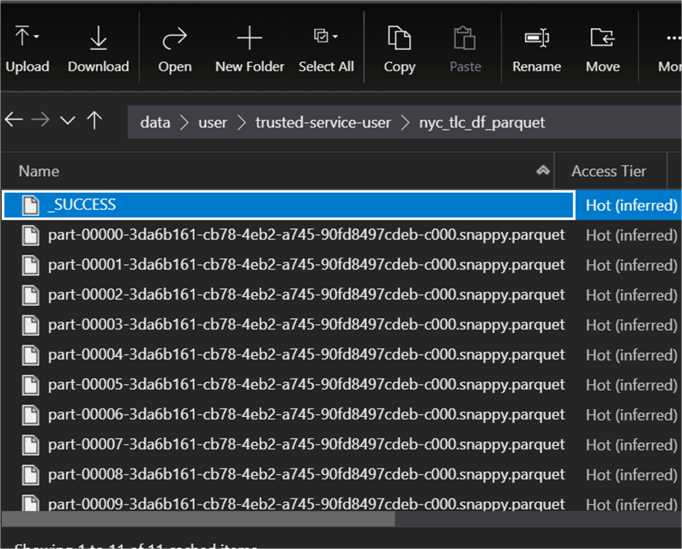

Upon opening the parquet folder, I could see a number of snappy compressed parquet files.

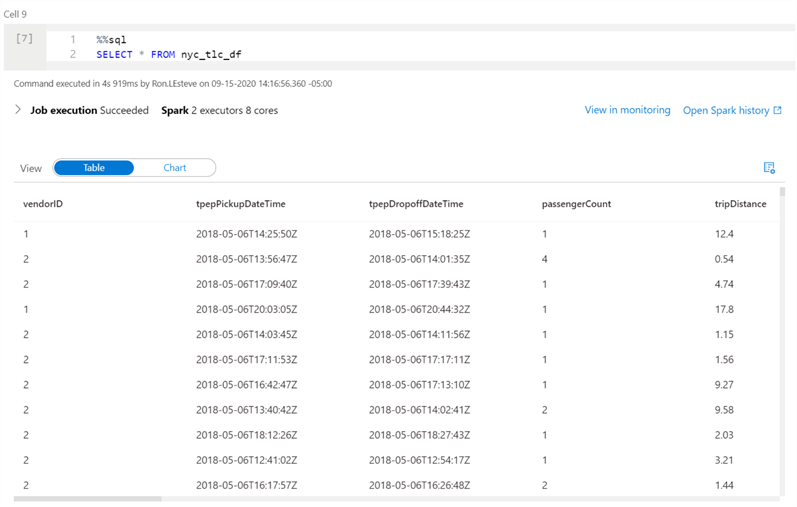

Next, I navigated back to the Azure Synapse Studio workspace and ran the select statement to ensure that the data was also ingested into a Spark table.

Finally, its tile to clean up our resources by ending the connected session to ensure that the Spark instance is shut down. Note that the pool shuts down when the idle time specified in the Apache Spark pool is reached. You can also select end session from the status bar at the bottom of the notebook.

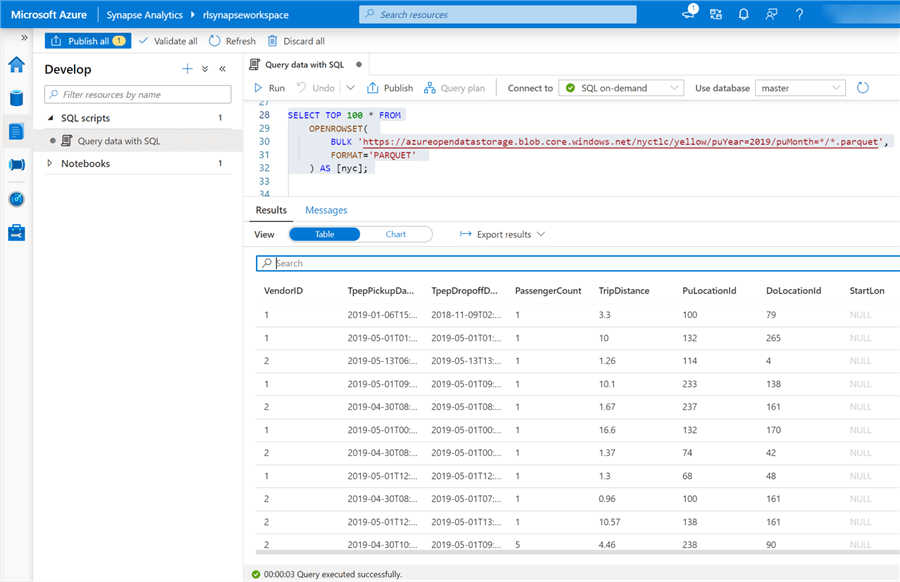

Query Data with SQL

Now that we've completed one the three available samples, let's move on to the second sample which includes sample script and a SQL on demand pool to query data using SQL.

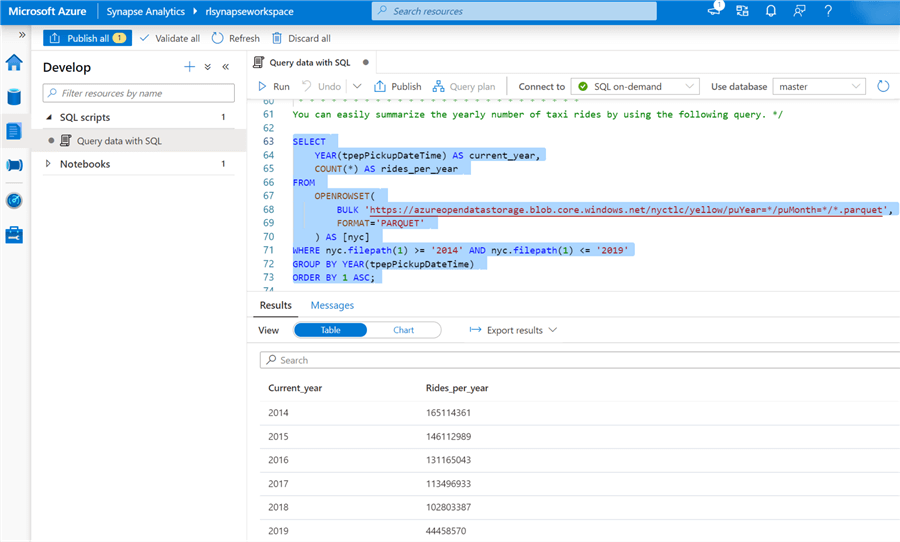

Similar to the first sample, a new SQL script is created which demonstrates how to query the Azure Data Lake Storage gen2 account by using standard SQL commands along with the OPENROWSET function.

The next query adds more complexity by adding additional filters and clauses and does an excellent job at displaying the capabilities of Synapse Studio Workspaces to directly query an Azure Data Lake Storage Gen2 account from a familiar SQL experience. For more detail see Use SQL on-demand to analyze Azure Open Datasets and visualize the results in Azure Synapse Studio.

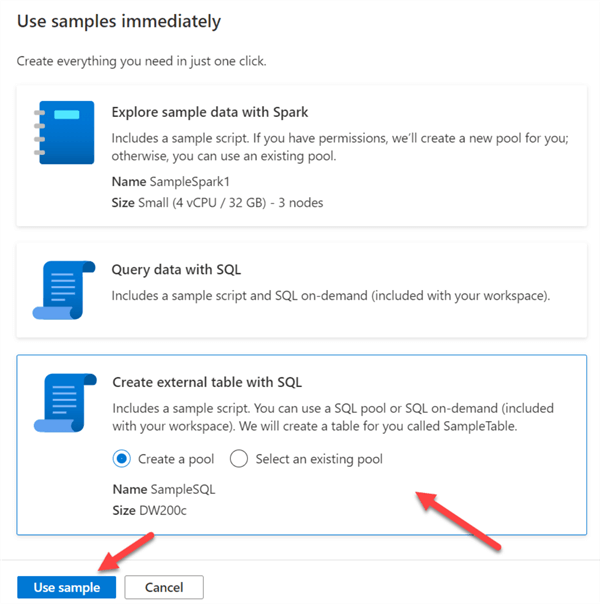

Create External Table with SQL

So far, we've completed two demonstrations: one for exploring sample data with Spark and the second to query data with SQL. The last demonstration in this article includes creating and querying an external table with SQL.

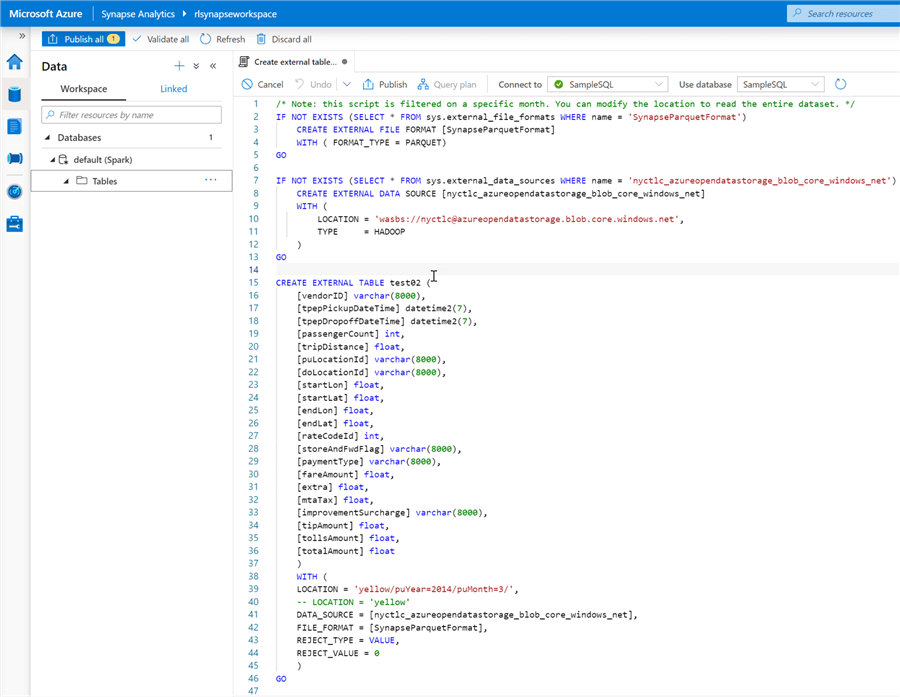

Similar to the previous samples, a sample script and table will be created for you in the workspace.

The following SQL code block included in the sample will demonstrate how to create an external table.

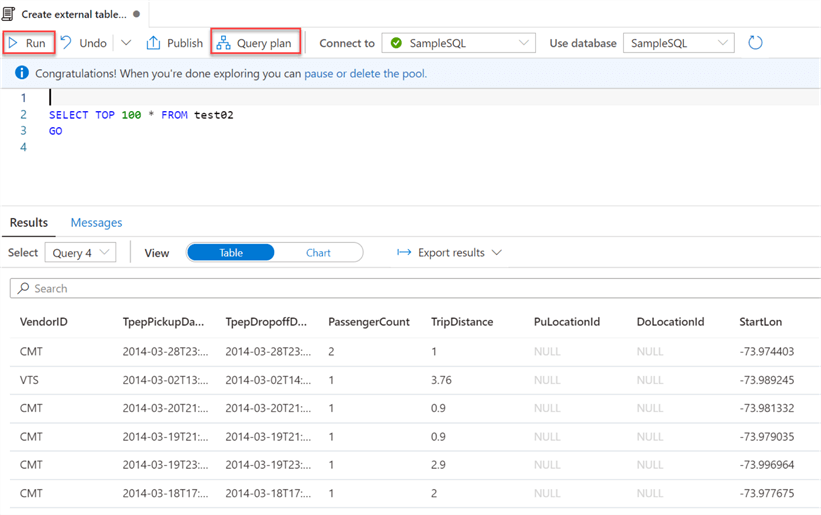

After the table is created, I can go ahead and run a simple SQL select statement to query the newly create external table. Notice that there is also a 'Query plan' button that is available.

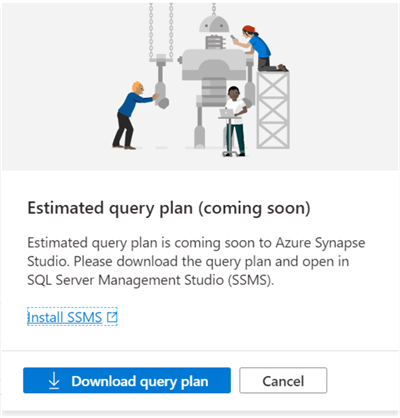

While the query plan is still a bit underbaked and in (coming soon) status, there is the capability of downloading the query plan and opening it in SQL Server Management Studio (SSMS), which could potentially be a useful feature.

In this demo, we explored how to create a new Azure Synapse Analytics Studio workspace and then create three samples from the Knowledge Center: 1) Explore Data with Spark, 2) Query Data with SQL, and 3) Create External table with SQL.

Remember to delete any unused resources and Spark / SQL pools to prevent any additional costs.

Next Steps

- For an overview of the features and benefits of Azure Synapse Analytics Workspace, check out the following MSSQLTips article Azure Synapse Analytics Overview and Microsoft's article What is Azure Synapse Analytics (workspaces preview).

- For more Azure Synapse Analytics Workspace samples, check out the following GitHub branches: Azure-Samples/Synapse and Microsoft Docs/azure-docs.

- For more details on creating a Synapse Workspace, read: Quickstart: Create a Synapse workspace.

About the author

Ron L'Esteve is a trusted information technology thought leader and professional Author residing in Illinois. He brings over 20 years of IT experience and is well-known for his impactful books and article publications on Data & AI Architecture, Engineering, and Cloud Leadership. Ron completed his Master’s in Business Administration and Finance from Loyola University in Chicago. Ron brings deep tec

Ron L'Esteve is a trusted information technology thought leader and professional Author residing in Illinois. He brings over 20 years of IT experience and is well-known for his impactful books and article publications on Data & AI Architecture, Engineering, and Cloud Leadership. Ron completed his Master’s in Business Administration and Finance from Loyola University in Chicago. Ron brings deep tecThis author pledges the content of this article is based on professional experience and not AI generated.

View all my tips