By: Pablo Echeverria | Updated: 2022-09-28 | Comments | Related: > SQL Server and Oracle Comparison

Problem

I've read that adding multiple data files (NDF files) in SQL Server can improve performance for large databases, reducing disk/storage latency metrics, which is a common task performed in TempDB. I've also read that there are benefits on startup, checkpointing, and write operations in Oracle bigfile tablespaces (single datafile) without interfering with parallel operations that access different parts of the file at the same time (backup, restore, full table scan, etc.). How can I test this in my specific environment to determine what works better in terms of performance?

Solution

In this tip, we will run several test scenarios for different configurations to compare the performance:

- Single datafile, one datafile for each CPU, thousands of datafiles.

- Unallocated space (file growth operations), pre-allocated space.

- Single process, concurrent operations.

- INSERT, SELECT, UPDATE and DELETE operations.

Note: My test is on a local file system. You must test and evaluate your results. For example, you may have a different configuration, like Oracle ASM, span files across multiple disks, Oracle RAC, different types of RAID, partitioned tables, mixed storage speeds, SAN or NAS, storage file system types, network speed to storage, etc.

Note: This is a small test and does not reflect my real load, but it was done this way for easy comparison. A real scenario test can help you determine what might work best for you.

Oracle

The documentation shows a performance benefit for bigfiles on startup, checkpointing, and DBWR operations. We will analyze if there are significant differences in test load scenarios and DML operations against different configurations and number of files.

To create a single datafile tablespace with unallocated space and auto extending:

CREATE BIGFILE TABLESPACE MYTBSP DATAFILE 'MYTBSP.ORA' SIZE 6265K AUTOEXTEND ON;

This is simpler than growing datafiles programmatically. Note: Around 6MB is the minimum size for 8K blocks (default database setting).

To create a single datafile tablespace with pre-allocated space:

CREATE BIGFILE TABLESPACE MYTBSP DATAFILE 'MYTBSP.DBF' SIZE 71M;

Note: Oracle auto-grows bigfile to a minimum of 71MB:

To completely erase the tablespace:

DROP TABLESPACE MYTBSP INCLUDING CONTENTS AND DATAFILES;

To create a tablespace with a variable number of datafiles (in this case 8) and unallocated space auto extending:

DECLARE

NUM_FILES INTEGER := 8;

BEGIN

EXECUTE IMMEDIATE 'CREATE TABLESPACE MYTBSP DATAFILE ''MYTBSP.DBF'' SIZE 81K AUTOEXTEND ON';

FOR I IN 2..NUM_FILES LOOP

EXECUTE IMMEDIATE 'ALTER TABLESPACE MYTBSP ADD DATAFILE ''MYTBSP'||I||'.DBF'' SIZE 81K AUTOEXTEND ON';

END LOOP;

END;

/

It can be easily modified to have pre-allocated space; 81K is the minimum size for 8K blocks (default database setting).

To create a test table in the tablespace:

CREATE TABLE T1 ( KEY INTEGER, RANDOM_INT INTEGER, RANDOM_FLOAT FLOAT) TABLESPACE MYTBSP;

To insert from a single process:

SET SERVEROUTPUT ON;

ALTER SESSION SET NLS_DATE_FORMAT='DD-MON-YYYY HH:MI:SS PM';

DECLARE

NUM_ROWS NUMBER := 50000;

I NUMBER := 1;

BEGIN

DBMS_OUTPUT.PUT_LINE(SYSDATE);

WHILE I <= NUM_ROWS LOOP

INSERT INTO T1 (KEY, RANDOM_INT, RANDOM_FLOAT)

SELECT I, DBMS_RANDOM.VALUE(1, 10 + 1), DBMS_RANDOM.VALUE(1, 10 + 1)

FROM DUAL;

--EXECUTE IMMEDIATE 'SELECT KEY, RANDOM_INT, RANDOM_FLOAT FROM T1 WHERE KEY = '||I;

--UPDATE T1 SET RANDOM_INT = RANDOM_INT+1, RANDOM_FLOAT=RANDOM_FLOAT+1 WHERE KEY = I;

--DELETE FROM T1 WHERE KEY = I;

COMMIT;

I := I + 1;

END LOOP;

DBMS_OUTPUT.PUT_LINE(SYSDATE);

END;

/

For the above command, the SELECT/UPDATE/DELETE can be adapted similarly (see the commented rows 12-14). The COMMIT is done per row to compare with a concurrent load and with SQL Server, but the performance is much faster without it.

To insert concurrently using PowerShell script:

$ErrorActionPreference = "Stop"

$NumRows = 2000

$NumRowsStep = 1000

$SleepTimer = 1000

$MaxResultTime = 300

$Maxthreads = 32

$Jobs = @()

$ISS = [system.management.automation.runspaces.initialsessionstate]::CreateDefault()

$RunspacePool = [runspacefactory]::CreateRunspacePool(1, $MaxThreads, $ISS, $Host)

$RunspacePool.Open()

$script = {

Param ([int]$i)

$ErrorActionPreference = "Stop"

$query = "

INSERT INTO T1 (KEY, RANDOM_INT, RANDOM_FLOAT)

SELECT $i, DBMS_RANDOM.VALUE(1, 10 + 1), DBMS_RANDOM.VALUE(1, 10 + 1)

FROM DUAL;"

#$query = "SELECT KEY, RANDOM_INT, RANDOM_FLOAT FROM T1 WHERE KEY = $i"

#$query = "UPDATE T1 SET RANDOM_INT = RANDOM_INT+1, RANDOM_FLOAT=RANDOM_FLOAT+1 WHERE KEY = $i"

#$query = "DELETE FROM T1 WHERE KEY = $i"

$query | sqlplus -s / as sysdba

}

function CreateThread() {

param ([int]$i, [ref]$Jobs)

$PowershellThread = [powershell]::Create().AddScript($script)

$PowershellThread.AddArgument($i) | out-null

$PowershellThread.RunspacePool = $RunspacePool

$Handle = $PowershellThread.BeginInvoke()

$Job = "" | select Handle, Thread, object

$Job.Handle = $Handle; $Job.Thread = $PowershellThread

$Jobs.value += $Job

}

$ResultTimer = Get-Date

for ($j=1; $j -le $NumRows/$NumRowsStep; $j++) {

for ($i=1; $i -le $NumRowsStep; $i++) {

CreateThread ($i+(($j-1)*$NumRowsStep)) ([ref]$Jobs)

}

while (@($Jobs | where {$_.Handle -ne $Null}).count -gt 0) {

foreach ($Job in @($Jobs | where {$_.Handle -ne $Null -and $_.Handle.IsCompleted -eq $True})) {

$results = $Job.Thread.EndInvoke($Job.Handle)

$Job.Thread.Dispose()

$Job.Thread = $Null

$Job.Handle = $Null

}

$inProgress = @($Jobs | where {$_.Handle.IsCompleted -eq $False}).count

$pending = ($NumRows/$NumRowsStep-$j)*1000

Write-Progress `

-Activity "Inserting..." `

-PercentComplete (($NumRows - $inProgress - $pending) * 100 / $NumRows) `

-Status "$inProgress+$pending pending"

$currentTime = Get-Date

if (($currentTime - $ResultTimer).totalseconds -gt $MaxResultTime) {

Write-Error "Child script appears to be frozen, try increasing MaxResultTime"

break

}

Start-Sleep -Milliseconds $SleepTimer

}

}

$RunspacePool.Close() | Out-Null

$RunspacePool.Dispose() | Out-Null

$ResultTimer

$currentTime

The above script was taken from a recent tip, Check current patch levels for all SQL Servers in environment where I explain it better. For this scenario, INSERT 2000 rows doing 1000 at a time, waiting 1 second between completion checks with an overall max duration of 300 seconds (5 minutes), and loading 32 threads at a time. The SELECT/UPDATE/DELETE can be adapted similarly (see the commented rows 18-20).

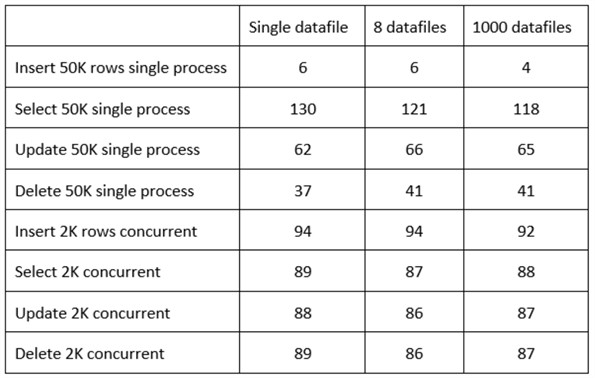

Results. The results are shown below in seconds for comparison:

In the scenario above, I benefited from smallfile tablespaces with thousands of datafiles if I'm doing inserts and selects within a single module (single PL/SQL module or application server). But if I want to benefit from updates and deletes within a single module, I'm better with bigfile tablespaces. And if I have a single application server sending database commands in parallel, it makes more sense to have a single datafile per CPU core.

As you can see, bigfiles have a performance comparable with smallfiles. Thus, we don't need to maintain file-add and growth scripts as in previous Oracle versions, giving us a simplified administration. In my opinion, you should spend your valuable time tuning bad SQL queries rather than monitoring things a machine should do automatically.

SQL Server

According to the documentation, adding multiple NDF files in separate disks will improve performance as the engine spreads queries across all disks. It is the same as having a RAID stripe set. I don't have multiple disks or a RAID, so we will analyze if there are significant differences in test load scenarios and DML operations against different configurations and the number of files in a single disk.

To create a single datafile database with unallocated space and auto extending:

CREATE DATABASE MYDB ON PRIMARY (NAME=MYDBMDF, FILENAME='C:\MYDB.MDF', SIZE=8MB, FILEGROWTH=1MB) LOG ON (NAME=MYDBLOG, FILENAME='C:\MYDB.LDF', SIZE=1MB, FILEGROWTH=1MB);

Note: 8 MB is the minimum size, and 1 MB is the minimum growth.

To create a single datafile database with pre-allocated space:

CREATE DATABASE MYDB ON PRIMARY (NAME=MYDBMDF, FILENAME='C:\MYDB.MDF', SIZE=12MB) LOG ON (NAME=MYDBLOG, FILENAME='C:\MYDBLOG.LDF', SIZE=438MB);

Loading 350K rows will use around 12 MB, and after running all DML operations, the log file size will be about 438 MB.

To drop the database:

DROP DATABASE MYDB;

To create a database with a variable number of datafiles (in this case 8) and unallocated space auto extending:

DECLARE

@NUM_FILES INTEGER = 8,

@I INTEGER = 2,

@SQL NVARCHAR(MAX);

BEGIN

SET @SQL = 'CREATE DATABASE MYDB ON PRIMARY (NAME=MYDBMDF, FILENAME=''C:\MYDB.MDF'', SIZE=8MB, FILEGROWTH=1MB) LOG ON (NAME=MYDBLOG, FILENAME=''C:\MYDB.LDF'', SIZE=1MB, FILEGROWTH=1MB)'

EXEC sp_executesql @SQL;

WHILE @I <= @NUM_FILES

BEGIN

SET @SQL = 'ALTER DATABASE MYDB ADD FILE (NAME=MYDB'+CAST(@I AS VARCHAR)+', FILENAME=''C:\MYDB'+CAST(@I AS VARCHAR)+'.NDF'', SIZE=1MB, FILEGROWTH=1MB)'

EXEC sp_executesql @SQL;

SET @I = @I + 1;

END;

END;

The minimum for the MDF is 8 MB. The minimum for the NDF and LDF files is 1 MB. And 1 MB is the minimum for the file growth. Loading 350K rows will use around 12 MB.

To create a test table in the database:

USE MYDB; CREATE TABLE T1 ( MYKEY INTEGER, RANDOM_INT INTEGER, RANDOM_FLOAT FLOAT);

To insert from a single process:

USE MYDB;

SET NOCOUNT ON

DECLARE

@NUM_ROWS INT = 350000,

@I INT = 1;

BEGIN

PRINT(SYSDATETIME());

WHILE @I <= @NUM_ROWS

BEGIN

INSERT INTO T1 (MYKEY, RANDOM_INT, RANDOM_FLOAT)

SELECT @I, ABS(CHECKSUM(NEWID())) % 10 + 1, RAND(CHECKSUM(NEWID())) * 10 + 1;

--UPDATE T1 SET RANDOM_INT = RANDOM_INT+1, RANDOM_FLOAT=RANDOM_FLOAT+1 WHERE MYKEY = @I;

--DELETE FROM T1 WHERE MYKEY = @I;

SET @I = @I + 1;

END;

PRINT(SYSDATETIME());

END;

SET NOCOUNT OFF

The update/delete can be adapted similarly (see the commented rows 12-13). No transaction is started beforehand.

To perform a SELECT from a single process, it needs to be tweaked a little bit to not return the output:

USE MYDB;

SET NOCOUNT ON

DECLARE

@NUM_ROWS INT = 10000,

@I INT = 1;

BEGIN

SELECT TOP 0 * INTO #TMP FROM T1;

PRINT(SYSDATETIME());

WHILE @I <= @NUM_ROWS

BEGIN

INSERT INTO #TMP SELECT MYKEY, RANDOM_INT, RANDOM_FLOAT FROM T1 WHERE MYKEY = @I;

SET @I = @I + 1;

END;

PRINT(SYSDATETIME());

DROP TABLE #TMP

END;

SET NOCOUNT OFF

To insert concurrently using PowerShell script:

$ErrorActionPreference = "Stop"

$NumRows = 2000

$NumRowsStep = 1000

$SleepTimer = 1000

$MaxResultTime = 300

$Maxthreads = 32

$Jobs = @()

$ISS = [system.management.automation.runspaces.initialsessionstate]::CreateDefault()

$RunspacePool = [runspacefactory]::CreateRunspacePool(1, $MaxThreads, $ISS, $Host)

$RunspacePool.Open()

$script = {

Param ([int]$i)

$ErrorActionPreference = "Stop"

$query = "INSERT INTO T1 (MYKEY, RANDOM_INT, RANDOM_FLOAT) SELECT $i, ABS(CHECKSUM(NEWID())) % 10 + 1, RAND(CHECKSUM(NEWID())) * 10 + 1"

#$query = "SELECT MYKEY, RANDOM_INT, RANDOM_FLOAT FROM T1 WHERE MYKEY=$i"

#$query = "UPDATE T1 SET RANDOM_INT = RANDOM_INT+1, RANDOM_FLOAT=RANDOM_FLOAT+1 WHERE MYKEY=$i"

#$query = "DELETE FROM T1 WHERE MYKEY = $i"

sqlcmd -d MYDB -Q $query

}

function CreateThread() {

param ([int]$i, [ref]$Jobs)

$PowershellThread = [powershell]::Create().AddScript($script)

$PowershellThread.AddArgument($i) | out-null

$PowershellThread.RunspacePool = $RunspacePool

$Handle = $PowershellThread.BeginInvoke()

$Job = "" | select Handle, Thread, object

$Job.Handle = $Handle; $Job.Thread = $PowershellThread

$Jobs.value += $Job

}

$ResultTimer = Get-Date

for ($j=1; $j -le $NumRows/$NumRowsStep; $j++) {

for ($i=1; $i -le $NumRowsStep; $i++) {

CreateThread ($i+(($j-1)*$NumRowsStep)) ([ref]$Jobs)

}

while (@($Jobs | where {$_.Handle -ne $Null}).count -gt 0) {

foreach ($Job in @($Jobs | where {$_.Handle -ne $Null -and $_.Handle.IsCompleted -eq $True})) {

$results = $Job.Thread.EndInvoke($Job.Handle)

$Job.Thread.Dispose()

$Job.Thread = $Null

$Job.Handle = $Null

}

$inProgress = @($Jobs | where {$_.Handle.IsCompleted -eq $False}).count

$pending = ($NumRows/$NumRowsStep-$j)*1000

Write-Progress `

-Activity "Inserting..." `

-PercentComplete (($NumRows - $inProgress - $pending) * 100 / $NumRows) `

-Status "$inProgress+$pending pending"

$currentTime = Get-Date

if (($currentTime - $ResultTimer).totalseconds -gt $MaxResultTime) {

Write-Error "Child script appears to be frozen, try increasing MaxResultTime"

break

}

Start-Sleep -Milliseconds $SleepTimer

}

}

$RunspacePool.Close() | Out-Null

$RunspacePool.Dispose() | Out-Null

$ResultTimer

$currentTime

The above script was taken from a recent tip, Check current patch levels for all SQL Servers in environment. For this scenario, insert 2000 rows doing 1000 at a time, waiting 1 second between completion checks with an overall max duration of 300 seconds (5 minutes), and loading 32 threads at a time. The SELECT/UPDATE/DELETE can be adapted similarly (see the commented rows 15-17).

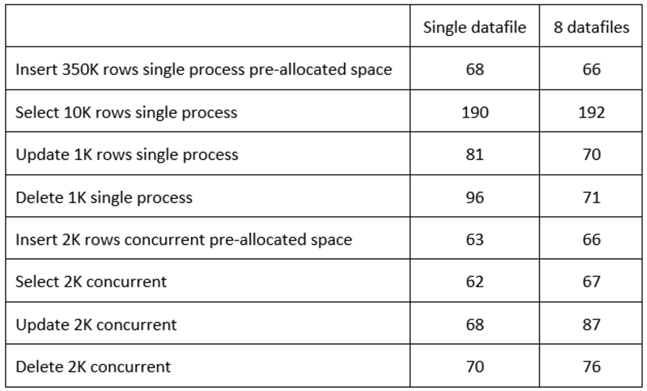

Results. The results are shown below in seconds for comparison. I couldn't test with 1000 datafiles because that requires about 1 GB of information, and based on the results, that would take about 3 hours:

In the scenario above, I benefit from a single datafile if I'm doing concurrent DML or single process SELECT. But, if I want to benefit from single process data manipulation, I'm better with multiple datafiles.

Another thing you may notice is that Oracle is faster than SQL Server for single processes but is slower for concurrent operations. The only operation with almost the same performance is when there is one datafile per CPU, and the operation is an update.

Next Steps

Check out these tips to learn more:

- SQL Server tempdb one or multiple data files

- Splitting a SQL Server Table Over Multiple Files

- How to Add Database File to a Log Shipped database in SQL Server

- How to Remove a SQL Server Data File for a Multi-data File Database

- Renaming Physical Database File Names for a SQL Server Database

- How to Shrink SQL Server Database Files

About the author

Pablo Echeverria is a talented database administrator and C#.Net software developer since 2006. Pablo wrote the book "Hands-on data virtualization with Polybase". He is also talented at tuning long-running queries in Oracle and SQL Server, reducing the execution time to milliseconds and the resource usage up to 10%. He loves learning and connecting new technologies providing expert-level insight as well as being proficient with scripting languages like PowerShell and bash. You can find several Oracle-related tips in his LinkedIn profile.

Pablo Echeverria is a talented database administrator and C#.Net software developer since 2006. Pablo wrote the book "Hands-on data virtualization with Polybase". He is also talented at tuning long-running queries in Oracle and SQL Server, reducing the execution time to milliseconds and the resource usage up to 10%. He loves learning and connecting new technologies providing expert-level insight as well as being proficient with scripting languages like PowerShell and bash. You can find several Oracle-related tips in his LinkedIn profile.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2022-09-28