By: Levi Masonde | Updated: 2023-01-17 | Comments | Related: > Python

Problem

You want to know more about your Twitter account and followers, like the number of followers, how many tweets have been posted, and when they began using Twitter or to see if you can pick up on patterns. This information can be used to make a strong analysis by investigating what impacts the number of followers or can be used to familiarize yourself with Twitter's APIs before diving in deeper.

Solution

Twitter has an excellent API for developers, students, and enterprises. Using Tweepy to boil down the interaction with the APIs, you can get comfortable making requests and storing the results for later use.

Prerequisites

- SQL Server instance - you can create one following this guide

- Python, Version 3.11.0

- Visual Studio Code, Version 1.72.1

- Windows 10 PC or Windows Server 2019/2022

- Twitter Developer Account - create one here

Get Twitter API

To get Twitter's API token, you must log in to the Developer Portal and create a Twitter app (not an actual app, but a project).

Create a Simple App

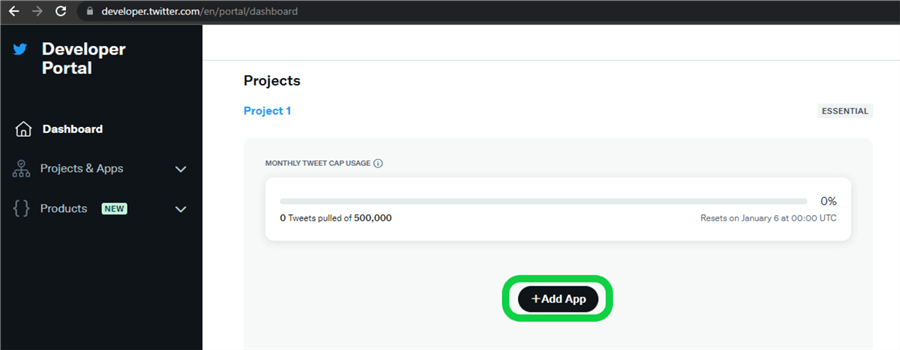

Log in to Twitter's Developer Portal and click on +Add App.

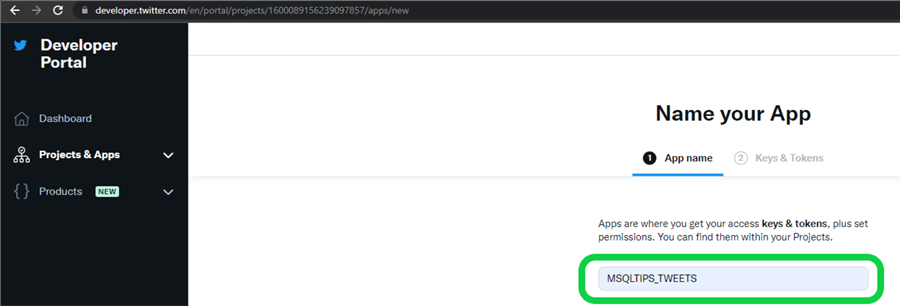

Name the new app.

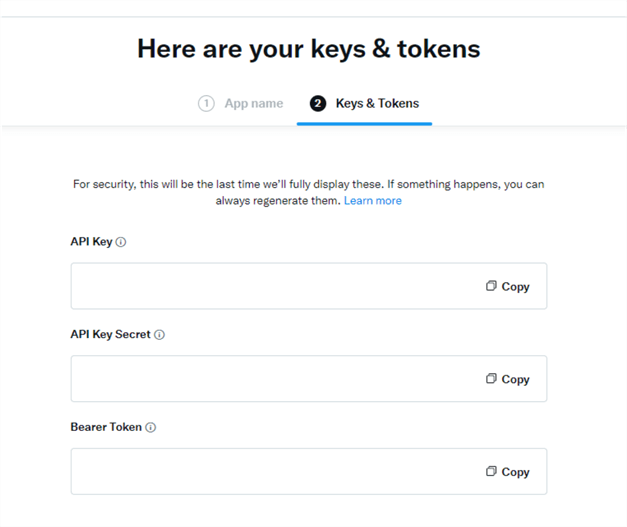

After creating the app, you will receive API keys and tokens attached to your project. Copy the keys and store them in a safe location.

Accessible Information

Now that you have API keys and tokens to access Twitter's API, what data can you retrieve, and what do you use it for?

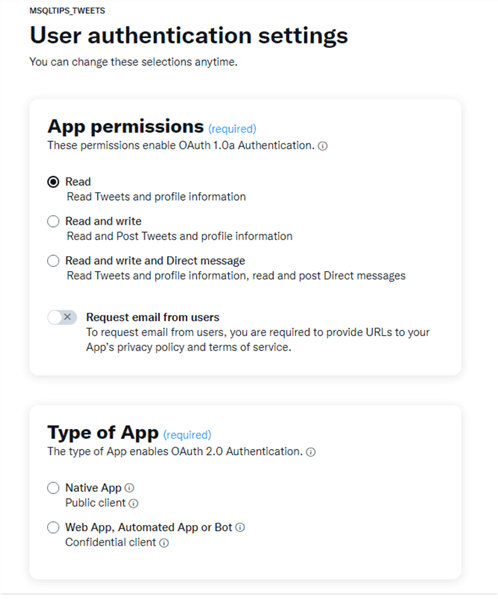

User Authentication

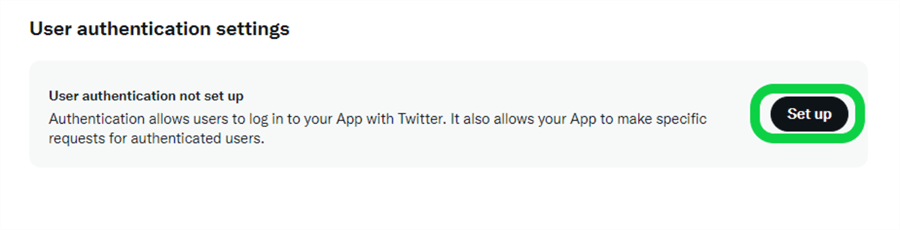

Authentication allows users to log in to your app with Twitter and will enable you to make specific requests for authenticated users.

On your app settings, click on Set up.

The Authentication API enables users in your app to read and write tweets and access direct messages.

You also need to specify the type of app you will be using to interact with the API.:

- Native App: Apps developed for a specific operating system such as Windows, IOS, Android, or MacOS.

- Web App, Automated App, or Bot: Web apps have many levels of UI, supported by a backend server.

Automated Apps and Bots are built to perform tasks automatically. Bots on Twitter often post information or perform actions automatically based on data inputs or triggers.

Search Tweets

Searching for tweets is an important feature used to surface Twitter conversations about a specific topic or event. These search queries are created with operators matching tweets and user attributes, such as message keywords, hashtags, and URLs.

Twitter offers two endpoints that allow you to search for tweets:

- Get historical: Requests are for a period of interest, with no focus on the real-time nature of the data. A single request is made, and all matching data is delivered using pagination as needed. This is the default mode for Search Tweets.

- Polling or listening: Requests are made in an "any new Tweets since my last request?" mode. Requests are made continually, and typically there is a use case focused on near real-time "listening" for Tweets of interest.

There are plenty more endpoints that will not be covered in this tutorial. Learn more about the endpoints here.

Limitations

| Essential | Elevated | Elevated+ (coming soon) |

Academic Research |

|---|---|---|---|

| Getting access | Sign up | Apply for additional access within the developer portal Need more? Sign up for our waitlist |

Apply for additional access |

| Price | Free | Free | Free |

| Access to Twitter API v2 | ✔ | ✔ | ✔ |

| Access to standard v1.1 | ✔ (Limited access - only media endpoints) | ✔ | ✔ |

| Access to premium v1.1 | ❌ | ✔ | ✔ |

| Access to enterprise | ❌ | ✔ | ✔ |

| Project limits | 1 Project | 1 Project | 1 Project |

| App limits | 1 App per Project | 3 Apps per Project | 1 App per Project |

| Tweet caps | Retrieve up to 500k Tweets per month | Retrieve up to 2 million Tweets per month | Retrieve up to 10 million Tweets per month |

| Filtered stream rule limit | 5 rules | 25 rules | 1000 rules |

| Filtered stream rule length | 512 characters | 512 characters | 1024 characters |

| Filtered stream POST rules rate limit | 25 requests per 15 minutes | 50 requests per 15 minutes | 100 requests per 15 minutes |

| Search Tweets query length | 512 characters | 512 characters | 1024 characters |

| Access to full-archive search Tweets | ❌ | ❌ | ✔ |

| Access to full-archive Tweet counts | ❌ | ❌ | ✔ |

| Access to advanced filter operators | ❌ | ❌ | ✔ |

| Option to manage a team in the developer portal | ❌ | ✔ (Requires an organization type account) | ❌ |

| Access to the Ads API | ✔ (Requires additional application) | ✔ (Requires additional application) | ❌ |

| Authentication methods |

OAuth 2.0 with PKCE App only |

OAuth 2.0 with PKCE OAuth 1.0a App only |

OAuth 2.0 with PKCE OAuth 1.0a App only |

Connecting to the API with Python

Create a file named APIKEY.py and add all the API keys as shown below:

API_key_public = "<YourKey>" API_key_Secret = "<YourKey>" Bearer_Token = "<YourKey>" Access_token = "<YourKey>" Access_token_secret = "<YourKey>"

Tweepy

Tweepy is a free Python wrapper that makes it easier to authenticate and interact with the Twitter API. Tweepy maps Twitter's endpoints into manageable functions. In this tutorial, you will use the home_timeline API reference. Take a look at all available API references.

Use the pip command pip install Tweepy to install the Tweepy library.

Make sure to elevate your account on the portal.

Create a file named HomeTimeLine.py and add this code as shown below:

from APIKEY import API_key_public

from APIKEY import API_key_Secret

from APIKEY import Bearer_Token

from APIKEY import Access_token

from APIKEY import Access_token_secret

import tweepy

import pandas as pd

import json

auth = tweepy.OAuth1UserHandler(

API_key_public, API_key_Secret, Access_token, Access_token_secret

)

api = tweepy.API(auth)

public_tweets = api.home_timeline()

data1 = []

for tweet in public_tweets:

user_names = tweet.user.name

followers = tweet.user.followers_count

num_of_tweets = tweet.user.statuses_count

created = tweet.user.created_at

data = {'user_names':user_names,'followers':followers,'number of tweets':num_of_tweets,'created':created}

data1.append(data)

df = pd.DataFrame(data1)

print(df)

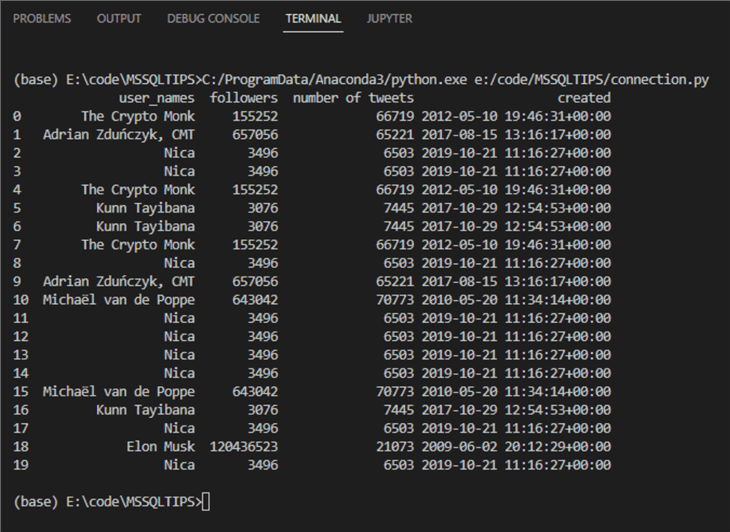

The code above retrieves the home timeline tweets, the information of the tweet's owner, and much more. There is a lot of data to go through. The data is stored in a dataframe for now and will print an output as shown below:

Now I know Elon Musk has been on Twitter for at least 12 years and has posted 21073 tweets since. Fun facts! Next, we will create a SQL Server table to write this to.

Getting SQL Server Ready

Establish a connection with SQL Server to Create, Read, Update, or Delete items on the server. There is a method to establish a connection to the server in a straightforward way.

To write to a SQL Server database, you either need to create one or have an existing one. Let's assume you will be creating a new one.

SQLCMD

To query the Server, you will be using SQLCMD.

Now, fire up your Visual Studio Code to start coding.

Click " CTRL + SHFT + ` " to open a new terminal.

Use the SQLCMD command sqlcmd -S <yourServerName> -E to

login to the SQL Server instance. Once logged in, create a new database using

the CREATE DATABASE command.

sqlcmd -S <yourServerName> -E 1> CREATE DATABASE TwitterData; 2> GO

Engine Configuration

The engine describes the connection pool and dialect for the BDAPI (Python Database API Specification) which communicates with the database specified.

Click " CTRL + SHFT + ` " in Visual Studio Code to open a new terminal.

Use the npm command npm install sqlalchemy in your terminal to

install the sqlalchemy module and create a python file DbConn.py.

// DbConn.py

import sqlalchemy as sa

from sqlalchemy import create_engine

import urllib

import pyodbc

conn = urllib.parse.quote_plus(

'Data Source Name=MssqlDataSource;'

'Driver={SQL Server};'

'Server=POWERSERVER\POWERSERVER;'

'Database=TwitterData;'

'Trusted_connection=yes;'

)

try:

coxn = create_engine('mssql+pyodbc:///?odbc_connect={}'.format(conn))

print("Passed")

except:

print("failed!")

Create a New Table in the Database

After creating a database and connecting to it, you need a table to store the data. Use SQLCMD again to accomplish this.

Open the command terminal.

Use the command USE TwitterData to tell SQL which database to use.

USE TwitterData; GO

The command prompt will notify you of the changes.

Now use the CREATE TABLE command to create a table on the database using the SQLCMD code shown below:

1> CREATE TABLE TWTTER_TIMELINE(ID int NOT NULL PRIMARY KEY); 2> GO

Writing Twitter Data to SQL Server

Now that you have created the database table, it's time to populate it with the Twitter data. Create a Python script file called HomeTimeLine.py to add data every time you run the script with an "append" parameter:

from APIKEY import API_key_public

from APIKEY import API_key_Secret

from APIKEY import Bearer_Token

from APIKEY import Access_token

from APIKEY import Access_token_secret

import tweepy

import pandas as pd

import json

from tweepy import OAuthHandler

from DbConn import coxn

auth = tweepy.OAuth1UserHandler(

API_key_public, API_key_Secret, Access_token, Access_token_secret

)

api = tweepy.API(auth)

public_tweets = api.home_timeline()

data1 = []

for tweet in public_tweets:

user_names = tweet.user.name

followers = tweet.user.followers_count

num_of_tweets = tweet.user.statuses_count

created = tweet.user.created_at

data = ({'user_names':user_names,'followers':followers,'number of tweets':num_of_tweets,'created':created})

data1.append(data)

df = pd.DataFrame(data1)

print(df)

try:

df.to_sql('TWITTER_TIMELINE',con=coxn,if_exists='append')

except:

pass

print("Failed!")

print(coxn.execute("SELECT * FROM TWITTER_TIMELINE").fetchall())

else:

print("saved in the table")

Conclusion

Great! You have set up your SQL Server to receive data from Twitter's API data using Python.

Consider this simple Twitter API connection as a gateway to do more with the API. There is still so much data to be retrieved and used. There are many applications to work with the Twitter API. One of the most popular applications is using the data to create Twitter semantic analysis. This topic will be covered in a later article.

Next Steps

- Check out these other Python articles.

- Get an Introduction to SQL Server's sqlcmd utility to learn more about SQLCMD.

About the author

Levi Masonde is a developer passionate about analyzing large datasets and creating useful information from these data. He is proficient in Python, ReactJS, and Power Platform applications. He is responsible for creating applications and managing databases as well as a lifetime student of programming and enjoys learning new technologies and how to utilize and share what he learns.

Levi Masonde is a developer passionate about analyzing large datasets and creating useful information from these data. He is proficient in Python, ReactJS, and Power Platform applications. He is responsible for creating applications and managing databases as well as a lifetime student of programming and enjoys learning new technologies and how to utilize and share what he learns.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2023-01-17