By: Sergiu Onet | Updated: 2023-04-18

Problem

In a previous tip, we saw Google Cloud Platform (GCP) options for database services and storage. As data professionals and DBAs, we also need insights into monitoring and billing.

Solution

This tip continues the GCP series and we will learn how to manage and monitor cloud resources and discuss billing.

GCP Resource Management

Resource Manager

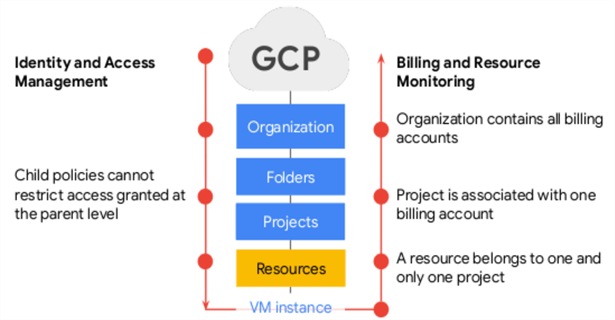

In GCP, resources are organized using Resource Manager. With Resource Manager, we can manage resources hierarchically by project, folder, and organization. The image above gives a high-level view of the flow of policies and the relationship between these concepts.

Identity and Access Management (IAM) handles access and security—basically, 'who can do what' on 'which resource.'

The organization node is the root node for all GCP resources. It contains all the billing accounts, and the policies used at this level are also applied to all the resources inside the organization.

Projects help to organize infrastructure resources in GCP. A project covers all the services we use (Compute Engine instances, Cloud Storage buckets, networks, APIs, permissions, and so on). Also, a resource belongs to one project only, and a project accumulates the consumption of all the resources inside it.

All the resources from projects have quotas (limits) on them, but if needed, the limits can be raised with a ticket to Google.

Policies are collections of roles and members and are set on resources. A resource inherits the policies of its parent, so the effective policy on a resource is the union of the policy set on it and the policies inherited from its ancestors. One thing to consider here: because of this union, a less restrictive parent policy overrides a more restrictive policy set on the resource.
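The inheritance rule above can be sketched in a few lines of Python. This is only an illustrative model, not the IAM API: the `Node` class, the `effective_policy` function, and the organization, folder, project, and member names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class Node:
    """A node in the hierarchy: organization, folder, project, or resource."""
    name: str
    parent: Optional["Node"] = None
    # Policy set directly on this node: role -> set of members (hypothetical data).
    policy: Dict[str, Set[str]] = field(default_factory=dict)


def effective_policy(node: Node) -> Dict[str, Set[str]]:
    """Union of the node's own policy and every policy inherited from its ancestors."""
    merged: Dict[str, Set[str]] = {}
    current: Optional[Node] = node
    while current is not None:
        for role, members in current.policy.items():
            merged.setdefault(role, set()).update(members)
        current = current.parent
    return merged


org = Node("example-org", policy={"roles/viewer": {"group:auditors@example.com"}})
folder = Node("finance", parent=org, policy={"roles/editor": {"user:alice@example.com"}})
project = Node("billing-app", parent=folder, policy={"roles/viewer": {"user:bob@example.com"}})

# The project grants viewer only to bob, but alice still ends up with editor
# because the folder-level grant is inherited.
for role, members in sorted(effective_policy(project).items()):
    print(role, sorted(members))
```

Because the result is a union, nothing set on the project can take away the editor role that the folder grants. That is the "less restrictive parent wins" behavior in practice.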

Quotas

All resources in GCP are subject to project quotas, or limits. Quotas can be changed, and their main purposes are to:

- Prevent consumption in case of an error or an attack

- Prevent billing from spiking

- Force sizing consideration and periodic review
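To make the first point concrete, here is a minimal sketch of how a limit stops a runaway script. It is a toy model, not how GCP enforces quotas: the `Quota` class, the `cpus` metric name, and the limit of 8 are assumptions chosen for illustration.

```python
from dataclasses import dataclass


class QuotaExceeded(Exception):
    """Raised when a request would push usage past the limit."""


@dataclass
class Quota:
    metric: str
    limit: int
    usage: int = 0

    def consume(self, amount: int = 1) -> None:
        # Reject the request instead of letting usage grow without bound.
        if self.usage + amount > self.limit:
            raise QuotaExceeded(
                f"{self.metric}: requested {amount}, "
                f"but only {self.limit - self.usage} of {self.limit} left"
            )
        self.usage += amount


cpu_quota = Quota(metric="cpus", limit=8)
created = 0
try:
    for _ in range(100):      # a buggy script trying to create 100 two-CPU VMs
        cpu_quota.consume(2)
        created += 1
except QuotaExceeded as err:
    print(f"stopped after {created} VMs ({err})")
```

The loop is cut off as soon as the limit is reached, which also caps the cost the mistake can generate.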

Labels

Labels are key-value pairs that can be attached to resources like disks or VMs. Labels propagate through billing, and this can be useful if you want to break down the billed charges by label.

An example of using a label would be to create one to describe the environment for our virtual machines and set the label for each of the instances as either production or dev. Then we can use this label to search and find all the production resources for an inventory or just to have an overview of the resources.

Here's an example of a label:

environment: prod

environment: dev
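The inventory search and the billing breakdown by label can be sketched as follows. The resource names, costs, and the two helper functions are made up for illustration. In practice this data would come from your resource inventory and billing export.

```python
from collections import defaultdict

# Hypothetical inventory: each resource carries its labels and a monthly cost.
resources = [
    {"name": "vm-web-1", "labels": {"environment": "prod"}, "cost": 120.0},
    {"name": "vm-web-2", "labels": {"environment": "prod"}, "cost": 80.0},
    {"name": "vm-test-1", "labels": {"environment": "dev"}, "cost": 25.5},
    {"name": "disk-scratch", "labels": {}, "cost": 4.5},
]


def with_label(items, key, value):
    """Names of all resources whose label `key` equals `value`."""
    return [r["name"] for r in items if r["labels"].get(key) == value]


def cost_by_label(items, key):
    """Total cost per value of label `key`; unlabeled resources are grouped together."""
    totals = defaultdict(float)
    for r in items:
        totals[r["labels"].get(key, "unlabeled")] += r["cost"]
    return dict(totals)


print(with_label(resources, "environment", "prod"))
print(cost_by_label(resources, "environment"))
```

The "unlabeled" bucket is worth watching. Resources that nobody labeled are the ones that are hardest to attribute when the bill arrives.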

Billing

Because a project accumulates the consumption of all its resources, it can be used to track resources and quota usage. Specifically, projects let you enable billing, manage permissions and credentials, and enable services and APIs. Billing is accumulated from the bottom up, as seen on the right side of the image above. The consumption of a resource is measured in quantities, like the rate of use.

Each project is linked to a billing account (one billing account can pay for several projects) and has its own reporting. You can set up a budget and associate it with a project, create alerts, and receive notifications when spending exceeds the budget or reaches a certain percentage of it.
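The logic behind a budget alert is simple enough to sketch. The function below and its 50%, 90%, and 100% thresholds are assumptions for illustration only, not the Cloud Billing API. It reports which alert thresholds the current spend has already reached.

```python
def triggered_thresholds(budget, spend, thresholds=(0.5, 0.9, 1.0)):
    """Return the threshold fractions of the budget that current spend has reached."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in sorted(thresholds) if ratio >= t]


# With a budget of 1000 and 920 already spent, the 50% and 90% alerts have fired.
print(triggered_thresholds(budget=1000, spend=920))
```

Note that in this sketch reaching a threshold only produces a notification. Nothing is shut down automatically, so someone still has to act on the alert.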

GCP Resource Monitoring

An essential part of any infrastructure and service is monitoring. Let's look at the GCP products that handle monitoring and logging.

Google Cloud's Operations Suite (Formerly Stackdriver)

The Operations Suite contains all the products used for monitoring, logging, error reporting, and application performance monitoring. We will not cover how to configure the products. Instead, we'll take a general look at each product's features, what it is used for, and its main strengths and benefits.

Cloud Monitoring

Cloud Monitoring collects metrics and events from Google Cloud, AWS, application instrumentation, and on-premises and hybrid cloud systems. It ingests the data and generates insights, and you can create custom dashboards containing charts of the metrics you want to monitor.

You can set uptime and health checks to look at the availability of a GCP resource like a Compute Engine instance or even an AWS EC2 instance. Uptime checks can be private, for checking internal GCP resources, or public, in which case they can check a publicly available URL in addition to GCP resources. Uptime checks can use the TCP, HTTP, or HTTPS protocol. If an uptime check fails, you can set up an alert to be notified of this event. For more information, check out uptime checks.
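Conceptually, a TCP uptime check boils down to "can I open a connection to this host and port within a timeout?". The sketch below shows that idea with Python's standard library. It is not how Cloud Monitoring implements its checks, and the `tcp_check` function name is our own.

```python
import socket


def tcp_check(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

For example, `tcp_check("10.0.0.5", 1433)` would tell us whether a SQL Server instance at that (hypothetical) address is accepting connections. A managed uptime check adds the parts that are tedious to build yourself: running the probe on a schedule, from several locations, and wiring the result to an alert.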

You can also set up alert policies to monitor service-level objectives (SLOs). For example, you may want to be notified when the budget for a project reaches a certain percentage of its allocation or when the latency of a service exceeds a desired value.
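A latency alert usually should not fire on a single slow request. A common pattern, sketched below under our own assumptions (the function name, the 300 ms threshold, and the sample values are illustrative, not Cloud Monitoring settings), is to require the threshold to be exceeded for several consecutive samples.

```python
def breaches(samples, threshold_ms, min_consecutive):
    """True if at least `min_consecutive` samples in a row exceed the threshold."""
    run = 0
    for value in samples:
        run = run + 1 if value > threshold_ms else 0
        if run >= min_consecutive:
            return True
    return False


sustained = [120, 310, 320, 305, 150]   # three slow samples in a row
spiky = [120, 310, 150, 320, 305]       # slow samples, but never three in a row

print(breaches(sustained, threshold_ms=300, min_consecutive=3))
print(breaches(spiky, threshold_ms=300, min_consecutive=3))
```

Requiring a sustained breach trades a slightly later notification for far fewer false alarms.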

The Cloud Monitoring agent (Ops Agent) can be installed on Compute Engine and AWS EC2 instances to capture more system and application events and to collect metrics from third-party applications. Several third-party applications can be integrated with the Ops Agent. You can also create monitoring charts from log outputs from Cloud Logging, set up alerts for certain events, and receive notifications through channels like email or SMS. More about alert policies can be found here: Alerting.

Cloud Monitoring is subject to quotas.

Cloud Logging

Cloud Logging is a fully managed log management service that stores logs from GCP, AWS, on-premises systems, and application logs. You can read, write, analyze, search, and alert on logging events. Check out how to read and write logs using various methods and APIs: Setting Up Language Runtimes.
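One lightweight way to write logs that are easy to search is to emit one JSON object per line. The sketch below assumes an environment where lines written to standard output are collected as structured log entries and where the `severity` and `message` fields receive special treatment. Verify this against the documentation for your runtime before relying on it. The `log_entry` helper and the extra fields are hypothetical.

```python
import json


def log_entry(severity: str, message: str, **fields) -> str:
    """Build one structured log line: severity, message, plus any extra fields."""
    entry = {"severity": severity, "message": message, **fields}
    return json.dumps(entry)


# Extra fields such as the database name become searchable attributes.
print(log_entry("ERROR", "backup failed", database="sales", attempt=3))
```

Structured fields are what make later filtering practical, for example finding every failed backup for one database, instead of pattern matching on free text.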

BigQuery can be used to analyze the logs with SQL, and logs can be exported to Cloud Storage for longer retention.

Cloud Audit Logs can be used to audit administrative activities, system events, and policy-denied events, such as when someone is denied access to a resource because of a security policy.

Cloud Logging also has limits and quotas.

Error Reporting

Error Reporting lets you collect the errors from services you run in the cloud. You can filter and aggregate them using dashboards and get notifications for new errors. At the time of writing, Error Reporting is available for many platforms, including Compute Engine and AWS EC2, and for many programming languages.
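The value of aggregation is that a thousand occurrences of the same bug show up as one entry with a count. The sketch below illustrates the idea with a simple grouping key of our own choosing (exception type plus the function that raised it). It is not the grouping algorithm Error Reporting uses, and the sample functions are hypothetical.

```python
import traceback
from collections import Counter


def fingerprint(exc: BaseException) -> str:
    """Group key: exception type plus the function where it was raised."""
    frames = traceback.extract_tb(exc.__traceback__)
    where = frames[-1].name if frames else "<unknown>"
    return f"{type(exc).__name__} in {where}"


def parse_port(value):
    return int(value)


def first_item(items):
    return items[0]


groups = Counter()
failing_calls = (
    lambda: parse_port("abc"),
    lambda: parse_port("x1"),
    lambda: first_item([]),
)
for call in failing_calls:
    try:
        call()
    except Exception as exc:
        groups[fingerprint(exc)] += 1

for key, count in groups.most_common():
    print(f"{count}x {key}")
```

The two `parse_port` failures have different messages but collapse into one group, which is what keeps an error dashboard readable.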

See 'Collect Error Data by Using Error Reporting' for a complete list of available environments and programming languages, and specific guides on collecting error data from your services.

There are some limits and quotas for Error Reporting and a retention period of 30 days.

Cloud Trace

Cloud Trace is a tool that gathers latency data and tracks relationships between services like App Engine or load balancers. It can show your application's dependencies and help investigate performance-related issues like latency by providing reports in near real-time.
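To show what latency data with relationships looks like, here is a minimal tracing sketch. It is a toy model using only the standard library, not the Cloud Trace client. The `Tracer` and `Span` classes and the span names are hypothetical, and the `time.sleep` calls stand in for real work.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Span:
    name: str
    parent: Optional[str]   # name of the enclosing span, if any
    duration_ms: float


class Tracer:
    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[str] = []

    @contextmanager
    def span(self, name: str):
        parent = self._stack[-1] if self._stack else None
        self._stack.append(name)
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            self._stack.pop()
            # Spans are recorded when they finish, so children come before parents.
            self.spans.append(Span(name, parent, elapsed_ms))


tracer = Tracer()
with tracer.span("handle_request"):
    with tracer.span("query_database"):
        time.sleep(0.02)    # stand-in for a slow query
    with tracer.span("render_page"):
        time.sleep(0.005)   # stand-in for rendering

for s in tracer.spans:
    print(f"{s.name} (parent: {s.parent}): {s.duration_ms:.1f} ms")
```

Reading the output, it is immediately visible that most of the request time is spent in the database call. That is the kind of question a tracing tool answers across real services.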

Here is documentation on how to set up Cloud Trace and the supported programming languages: Instrument for Cloud Trace.

Like other GCP services, Cloud Trace also has quotas.

Cloud Profiler

Another performance analysis tool is Cloud Profiler. It focuses on collecting CPU and memory statistics and attributing them to the application's source code, helping you better understand which parts of the application consume which resources.

You can profile certain languages and environments in different configurations. To find the available configurations and profiles for each supported programming language and how to set up the Cloud Profiler agent for a specific language, check out Cloud Profiler Overview. The Profiler interface lets you visualize and analyze the data in the form of flame graphs and compare profiles for a certain timeframe. See 'Compare Profiles' for how to compare profiles and 'Select the Profiles to Analyze' for how to select a profile to analyze.

The Profiler API has some quotas.

Next Steps

- Google Cloud Operations Suite services are free to use, but charges apply if thresholds are exceeded for some features. Check here for details: Google Cloud's Operations Suite Pricing.

About the author

Sergiu Onet has been a SQL Server Database Administrator for the past 10 years and counting, focusing on automation and making SQL Server run faster.

This author pledges the content of this article is based on professional experience and not AI generated.


Article Last Updated: 2023-04-18