By: Hristo Hristov | Updated: 2023-06-16 | Comments (1) | Related: > Python

Problem

Working with two or more different datasets in a Python environment, you may need to combine them, like to perform a join on a key column or condition. What are ways to do that in a Python notebook?

Solution

In this tutorial, we explore three separate ways to join data in Python:

- The pandas merge method

- The pandas join method

- The pandasql library

We will explore each method in detail and list some pros and cons.

Dataset

To display the three methods, I will use the AdventureWorksLT2019 database. You can restore it from back up from here. After I import the database, I will export the tables Product, SalesOrderDetail, and SalesOrderHeader to CSV files. This way, I can use them in my Jupyter notebook and simulate a scenario without access to an SQL Server environment.

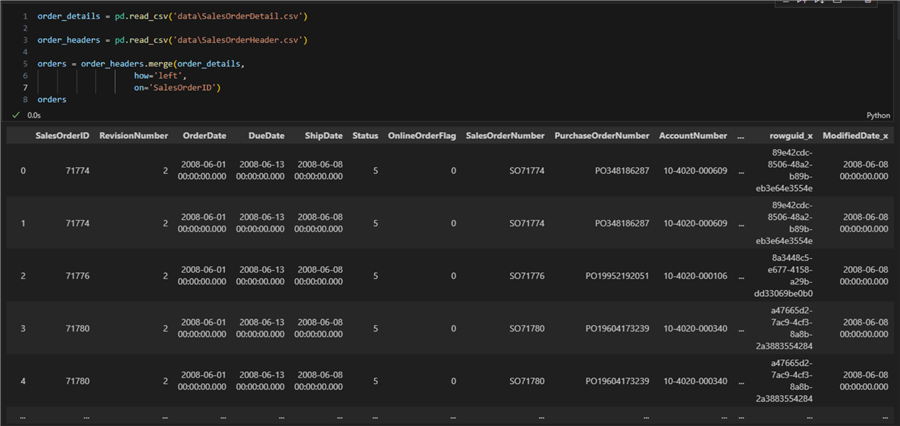

pandas Merge

You call the merge method on a pandas dataframe. As parameters you pass the other dataframe to be joined, the type of join and column to join on, in case your dataframe has no index columns. The merge method returns a dataframe so you can assign it to a new variable.

For example:

order_details = pd.read_csv('data\SalesOrderDetail.csv')

order_headers = pd.read_csv('data\SalesOrderHeader.csv')

orders = order_headers.merge(order_details,

how='left',

on='SalesOrderID')

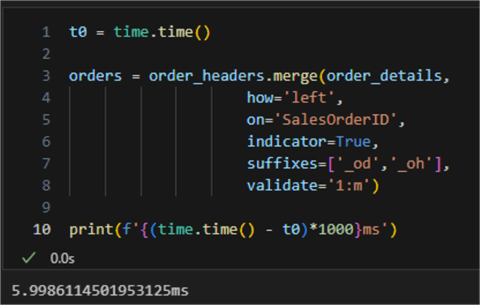

Additionally, we can pass a suffix to columns from the different dataframes, have an indicator column indicating the source of each row, and validate the type of merge (join) we did.

This is how the code can be expanded:

orders = order_headers.merge(order_details,

how='left',

on='SalesOrderID',

indicator=True,

suffixes=['_oh','_od'],

validate='1:m')

If I check the list columns from the dataframe, I will see that the ones that overlap have a suffix indicating which dataframe they belong to:

Index(['SalesOrderID', 'RevisionNumber', 'OrderDate', 'DueDate', 'ShipDate',

'Status', 'OnlineOrderFlag', 'SalesOrderNumber', 'PurchaseOrderNumber',

'AccountNumber', 'CustomerID', 'ShipToAddressID', 'BillToAddressID',

'ShipMethod', 'CreditCardApprovalCode', 'SubTotal', 'TaxAmt', 'Freight',

'TotalDue', 'Comment', 'rowguid_od', 'ModifiedDate_od',

'SalesOrderDetailID', 'OrderQty', 'ProductID', 'UnitPrice',

'UnitPriceDiscount', 'LineTotal', 'rowguid_oh', 'ModifiedDate_oh',

'_merge'],

dtype='object')

If I check the head of the new dataframe, I still see the indicator column ('_merge').

I did not get an error; therefore, the merge (join)

is one to many. If I change the validate argument to

'1:1', I will get a validation error:

pandas Merge Pros

- Straightforward to use

- The type of join can be defined easily

- The join can be validated, handy when in doubt or working with large datasets.

pandas Merge Cons

- Only two dataframes can be joined at a time; if you have more than two, let's say four, you will have to call merge three times: once on the first dataframe and two times on the output dataframes. You could nest the multiple merge calls, though, e.g., pd.merge(pd.merge(df1,df2,on='name'),df3,on='name')

- Merge does not support conditions; it just uses a column to join on, whether an index or non-index column

- If both key columns null values, those rows will be matched against each other. This is different from usual SQL join behavior and can lead to unexpected results.

Execution Time

Timing the execution of the merge method reveals it took about 6ms for the dataframes we used:

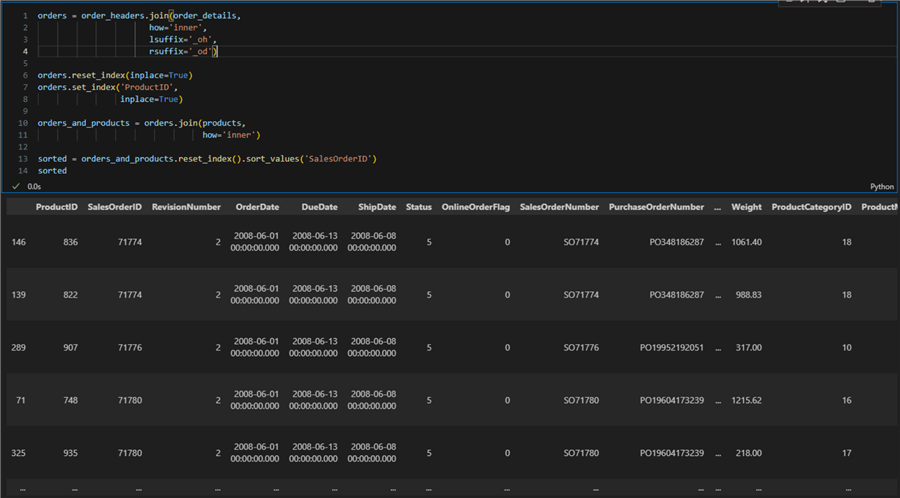

pandas Join

To get the same result as with

merge, we can also

use join. The difference is that you can prefer to

use join when you have an index defined on your dataframes. Therefore, I will first

make sure each dataframe has an index column. Then, I will join the order details

to the order headers and finally join the products data frame to the previous result:

products = pd.read_csv('data\Product.csv')

products.set_index('ProductID',

inplace=True)

order_details = pd.read_csv('data\SalesOrderDetail.csv')

order_details.set_index('SalesOrderID',

inplace=True)

order_headers = pd.read_csv('data\SalesOrderHeader.csv')

order_headers.set_index('SalesOrderID',

inplace=True)

orders = order_headers.join(order_details,

how='inner',

lsuffix='_oh',

rsuffix='_od')

orders.reset_index(inplace=True)

orders.set_index('ProductID',

inplace=True)

orders_and_products = orders.join(products,

how='inner')

sorted = orders_and_products.reset_index().sort_values('SalesOrderID')

pandas Join Pros

- Even more straightforward to use than merge because you work with the predefined index column

- Stimulates the use of index columns. May feel natural to SQL-minded people, faster in this case

- Supports multi-level indexes.

pandas Join Cons

- Must take care to reset the index and set a new one to get the correct results

- Join conditions cannot be defined; it matches indexes directly

- Need to join n dataframes? You will have to call join n-1 times.

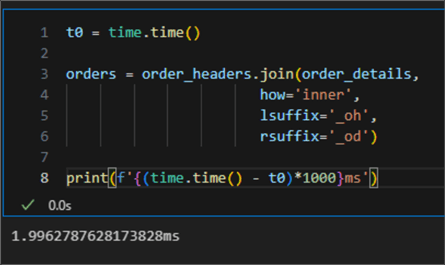

Execution Time

Timing the join method reveals it is faster than merge with about 2ms of execution time which is about three times faster:

This difference may be more noticeable when dealing with more than one large dataset.

pandasql

pandasql is a Python package that allows you to

query dataframes using SQL syntax. First, you must install pandasql in your environment:

pip install -U pandasql

There is one main function called sqldf. It accepts

two parameters: an SQL query string and a set of session/environment variables (denoted

by locals()

or globals()). For example:

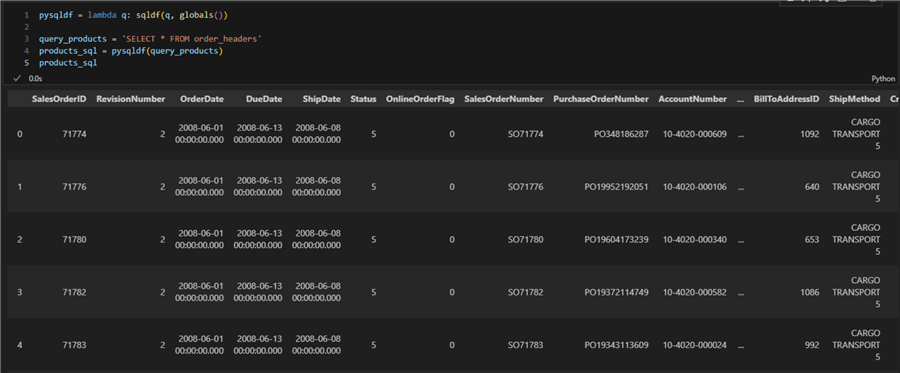

pysqldf = lambda q: sqldf(q, globals()) query_products = 'SELECT * FROM order_headers' products_sql = pysqldf(query_products)

Note: The query is defined as a string literal. So, to perform a join, we can query our dataframes as we would with SQL Server. For example, joining all three tables we have been using so far:

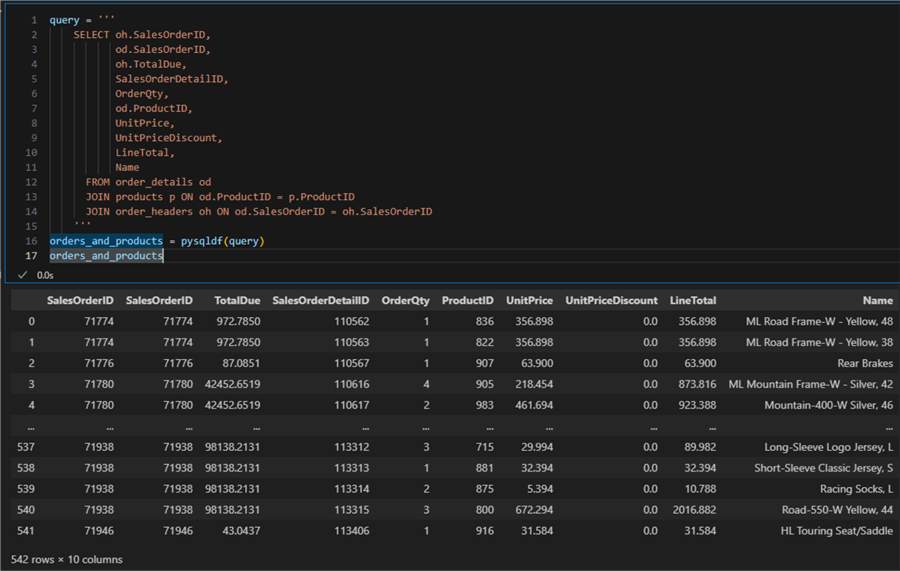

query = '''

SELECT oh.SalesOrderID,

od.SalesOrderID,

oh.TotalDue,

SalesOrderDetailID,

OrderQty,

od.ProductID,

UnitPrice,

UnitPriceDiscount,

LineTotal,

Name

FROM order_details od

JOIN products p ON od.ProductID = p.ProductID

JOIN order_headers oh ON od.SalesOrderID = oh.SalesOrderID

'''

orders_and_products = pysqldf(query)

orders_and_products

The result is a pandas dataframe.

pandasql Join Pros

- Intuitive and familiar SQL join syntax that only needs to be passed to the sqldf function

- Join multiple dataframes at once and select the necessary columns without extra lines of code

- Complex join conditions can be defined using SQL.

pandasql Join Cons

- Slower

- SQL query as string may be difficult to debug

- The package is not supported anymore.

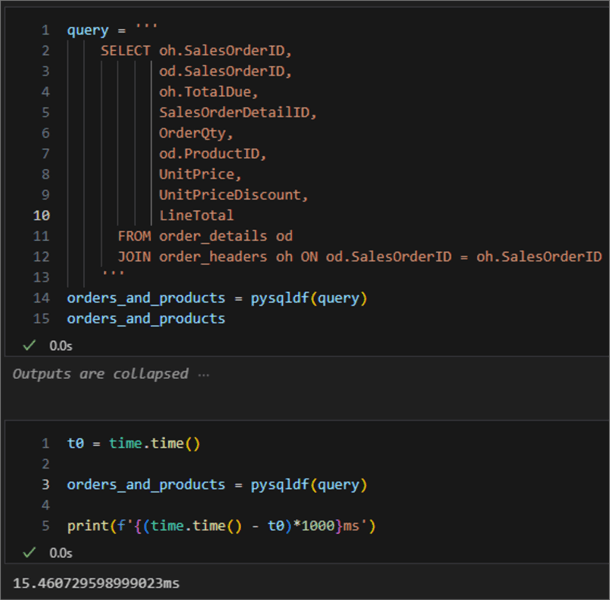

Execution Time

If we time the execution of joining just the order_headers

to the order_details, we get about 15ms:

Conclusion

This article explored three diverse ways to join dataframes: using the pandas

merge or join functions

or the pandasql package. Join

is the fastest and most convenient as it prioritizes working with dataframe indexes.

Merge enables you to use non-index columns too. Finally,

pandasql offers a convenient SQL-based syntax at the

cost of execution time.

Next Steps

About the author

Hristo Hristov is a Data Scientist and Power Platform engineer with more than 12 years of experience. Between 2009 and 2016 he was a web engineering consultant working on projects for local and international clients. Since 2017, he has been working for Atlas Copco Airpower in Flanders, Belgium where he has tackled successfully multiple end-to-end digital transformation challenges. His focus is delivering advanced solutions in the analytics domain with predominantly Azure cloud technologies and Python. Hristo's real passion is predictive analytics and statistical analysis. He holds a masters degree in Data Science and multiple Microsoft certifications covering SQL Server, Power BI, Azure Data Factory and related technologies.

Hristo Hristov is a Data Scientist and Power Platform engineer with more than 12 years of experience. Between 2009 and 2016 he was a web engineering consultant working on projects for local and international clients. Since 2017, he has been working for Atlas Copco Airpower in Flanders, Belgium where he has tackled successfully multiple end-to-end digital transformation challenges. His focus is delivering advanced solutions in the analytics domain with predominantly Azure cloud technologies and Python. Hristo's real passion is predictive analytics and statistical analysis. He holds a masters degree in Data Science and multiple Microsoft certifications covering SQL Server, Power BI, Azure Data Factory and related technologies.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2023-06-16