By: Ron L'Esteve | Updated: 2022-04-13

Problem

Organizations increasingly need to share data in real time with customers, partners, suppliers, and others. Historically, the difficulty of sharing data securely across traditional systems has prevented seamless sharing, and the few available methods were limited to JDBC, ODBC, or SQL connections. Customers are seeking secure, flexible, and open ways of connecting to a variety of data stores, types, and languages. Cloud providers have taken note of this need and have begun bringing new data sharing features to market. Snowflake offers Data Sharing and a marketplace, which let you share selected objects in a database in your account with other Snowflake accounts. Databricks Delta Sharing provides similar features with the added advantage of a fully open protocol with Delta Lake support. Customers are interested in understanding how to get started with Delta Sharing.

Solution

Delta Sharing is a secure, open-source protocol for sharing live data in your Lakehouse, including for data science use cases. It is not restricted to SQL, supports a variety of open data formats, and can scale efficiently to large datasets. Delta Sharing supports Delta Lake, which brings a wide variety of features of its own. Numerous data providers have committed to making thousands of datasets accessible through Delta Sharing, and the ease of accessing this shared data promotes self-service for both data providers and consumers. In this article, you will learn how Delta Sharing works and what its benefits are. You will also learn how to get started with creating, altering, describing, and sharing Delta Lakehouse data using Databricks, along with a few options for consuming Delta Lakehouse data that has been shared.

Architecture

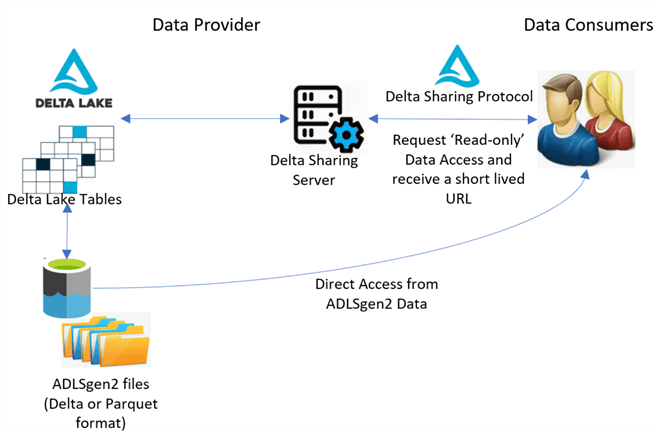

With Delta Sharing, large live datasets in Delta or Parquet format within your ADLS Gen2 account can be shared without being copied. Because Delta Sharing is open source, it supports open data formats for a variety of clients, and it brings robust security, auditing, and governance features. The architecture follows a simple paradigm with two key stakeholders: providers and consumers. A data provider shares data in their ADLS Gen2 account by setting up a Delta Sharing Server that grants access permissions to data recipients via the Delta Sharing Protocol. A variety of clients can then reach the shared Delta table through the Delta Sharing Protocol and the Delta Sharing Server. More information about the open-source project can be found in the GitHub repository at https://github.com/delta-io/delta-sharing and at https://delta.io/sharing/

Once access is verified and granted, the data consumer receives a short-lived, read-only URL. The initial request goes through the Delta Sharing Server, but subsequent requests for the data go directly from the client to the ADLS Gen2 account where the shared data is stored. With this segregated approach, providers can selectively share tables or partitions, which can be updated with ACID transactions in real time. Clients that support Delta Sharing include Spark, pandas, Hive, Tableau, and Power BI, along with major cloud platforms such as Azure. Also, a growing number of large organizations are adopting Delta Sharing as data providers, which supports the monetization of their data assets while giving their clients quicker time to insight. The architecture discussed in this section is shown in the figure above.
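The request flow described in this section can be sketched in a few lines of Python. This is a minimal illustration rather than a reference client: the function names `build_query_request` and `extract_file_urls` are hypothetical, the endpoint path and the newline-delimited JSON response shape follow my reading of the published Delta Sharing protocol, and the sample response and URLs are made up for the example.

```python
import json
from urllib.request import Request


def build_query_request(endpoint, bearer_token, share, schema, table, limit_hint=None):
    """Build (but do not send) the initial table query to a sharing server."""
    url = f"{endpoint.rstrip('/')}/shares/{share}/schemas/{schema}/tables/{table}/query"
    body = {} if limit_hint is None else {"limitHint": limit_hint}
    return Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {bearer_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_file_urls(ndjson_text):
    """Collect the short-lived file URLs from a newline-delimited JSON response."""
    urls = []
    for line in ndjson_text.splitlines():
        if not line.strip():
            continue
        record = json.loads(line)
        if "file" in record:
            urls.append(record["file"]["url"])
    return urls


# Made-up response for illustration: one protocol line, one metadata line,
# then one line per data file with its pre-signed, read-only URL.
SAMPLE_RESPONSE = "\n".join([
    json.dumps({"protocol": {"minReaderVersion": 1}}),
    json.dumps({"metaData": {"id": "example-table-id"}}),
    json.dumps({"file": {"id": "1", "url": "https://example.invalid/part-0.parquet?sig=abc"}}),
    json.dumps({"file": {"id": "2", "url": "https://example.invalid/part-1.parquet?sig=def"}}),
])

request = build_query_request(
    "https://example.invalid/delta-sharing/", "example-token",
    "my_share", "my_schema", "my_table", limit_hint=10,
)
file_urls = extract_file_urls(SAMPLE_RESPONSE)
```

Only the first call is addressed to the sharing server. A client would then read each returned URL directly from storage, which is the split between the initial and subsequent requests described above.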

Share Data

To share data within your ADLS Gen2 account from a Databricks notebook, you'll first need to create a share using the `create share <MyShareName>` command and then add a table to the share using `alter share <MyShareName> add table <MyDataTable>`. Once a table or collection of tables has been added to the share, a recipient can be created using the `create recipient <MyRecipient>` command, which generates an activation link that can be shared with the respective data recipient. The following final command grants read-only permissions on the share to the specified recipient: `grant select on share <MyShareName> to recipient <MyRecipient>`.
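The sequence of commands above can be assembled programmatically, which helps when several tables go into one share. The sketch below only builds the SQL strings. The helper name `build_share_statements` and the example share, table, and recipient names are hypothetical, and running the statements assumes a Databricks notebook where a `spark` session is already defined.

```python
def build_share_statements(share, tables, recipient):
    """Return the SQL statements, in order, that share `tables` with `recipient`."""
    statements = [f"CREATE SHARE {share}"]
    # One ALTER SHARE statement per table added to the share.
    statements += [f"ALTER SHARE {share} ADD TABLE {table}" for table in tables]
    statements.append(f"CREATE RECIPIENT {recipient}")
    statements.append(f"GRANT SELECT ON SHARE {share} TO RECIPIENT {recipient}")
    return statements


statements = build_share_statements(
    "my_share", ["sales.orders", "sales.customers"], "my_recipient"
)

# In a Databricks notebook (assumption: `spark` is the predefined session):
# for statement in statements:
#     spark.sql(statement)
```

Keeping the statements as plain strings makes it easy to review exactly what will be granted before anything is executed.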

Access Data

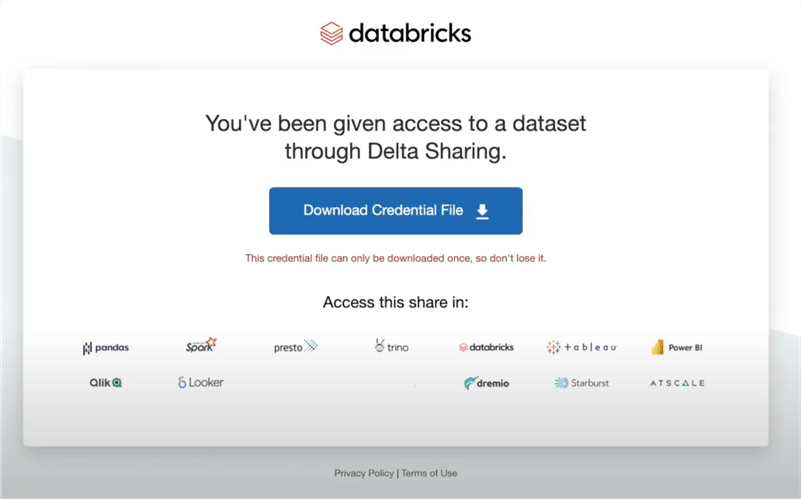

When a data provider grants access, the data recipient receives an activation link similar to the one shown in the figure above. By clicking 'Download Credential File', the recipient downloads a JSON file locally. Note that for security purposes, the credential file can only be downloaded once, after which the download link is deactivated. For certain technologies such as Tableau, you may need to upload this credential file in addition to providing the URL. For other technologies, you may need credentials from this file, such as the 'Bearer token'.
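For tools that ask for the server URL and bearer token separately, both values can be read from the downloaded credential file. The sketch below assumes the file follows the profile format of the open Delta Sharing protocol, with `shareCredentialsVersion`, `endpoint`, and `bearerToken` fields. The helper names and the sample values are hypothetical.

```python
import json

REQUIRED_FIELDS = ("shareCredentialsVersion", "endpoint", "bearerToken")


def parse_profile(text):
    """Return (endpoint, bearer_token) from the JSON text of a credential file."""
    profile = json.loads(text)
    missing = [field for field in REQUIRED_FIELDS if field not in profile]
    if missing:
        raise ValueError(f"credential file is missing fields: {missing}")
    return profile["endpoint"], profile["bearerToken"]


def read_profile(path):
    """Read a credential file from disk and return (endpoint, bearer_token)."""
    with open(path, encoding="utf-8") as handle:
        return parse_profile(handle.read())


# Made-up credential content for illustration only.
sample_text = json.dumps({
    "shareCredentialsVersion": 1,
    "endpoint": "https://example.invalid/delta-sharing/",
    "bearerToken": "example-token",
})
endpoint, bearer_token = parse_profile(sample_text)
```

Because the file contains a live token, treat it like a password: keep it out of source control and out of shared notebook output.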

After the credential file is downloaded, it can be referenced from a variety of notebook types, including Jupyter and Databricks, to retrieve the shared data into data frames. The following commands install and import the delta-sharing client within your notebook; the client is published on PyPI as the 'delta-sharing' package. After that, you can use the previously downloaded credential profile file to list and access all the shared tables within your notebook.

```python
# Install Delta Sharing (notebook shell command)
!pip install delta-sharing

# Import Delta Sharing
import delta_sharing

# Point to the profile file that was previously downloaded. It can be a file
# on the local file system or a file on remote storage.
profile_file = "<profile-file-path>"

# Create the SharingClient and list all shared tables.
client = delta_sharing.SharingClient(profile_file)
tables = client.list_all_tables()

# Create a URL to access a shared table.
# A table path is the profile file path followed by `#` and the fully
# qualified name of a table (`<share-name>.<schema-name>.<table-name>`).
table_url = profile_file + "#<share-name>.<schema-name>.<table-name>"

# For PySpark code, use `load_as_spark` to load the table as a Spark DataFrame.
df = delta_sharing.load_as_spark(table_url)
```
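Since `list_all_tables()` returns one entry per shared table, the table URL can be built for each entry instead of being typed by hand. The sketch below assumes each entry exposes `share`, `schema`, and `name` attributes, as the table objects in the delta-sharing Python client do. `SharedTable` and `table_urls` are hypothetical names, and the stand-in tuple lets the example run without a sharing server.

```python
from collections import namedtuple

# Hypothetical stand-in for the entries returned by list_all_tables().
SharedTable = namedtuple("SharedTable", ["share", "schema", "name"])


def table_urls(profile_file, tables):
    """Build '<profile>#<share>.<schema>.<table>' for every shared table."""
    return [f"{profile_file}#{t.share}.{t.schema}.{t.name}" for t in tables]


example_tables = [
    SharedTable("sales_share", "retail", "orders"),
    SharedTable("sales_share", "retail", "customers"),
]
urls = table_urls("config.share", example_tables)
```

In a notebook with a real profile file, you could pass `client.list_all_tables()` as `tables` and load any of the resulting URLs with `delta_sharing.load_as_pandas(url)` when a pandas DataFrame is preferred over Spark.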

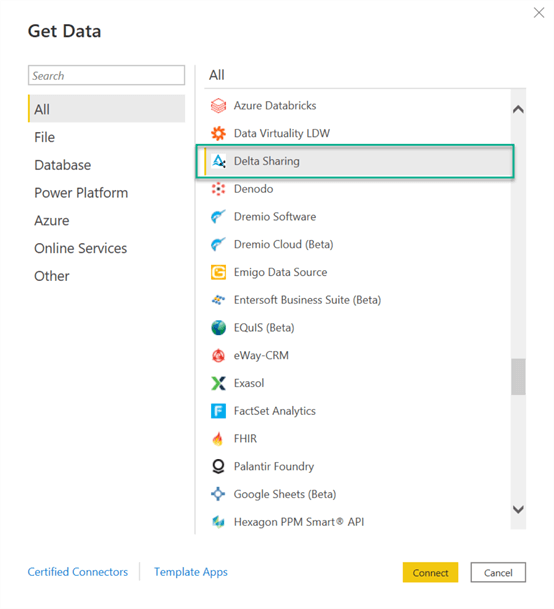

Within Power BI, you can connect to a Delta Sharing source by selecting 'Delta Sharing' from the built-in Power BI data source options and clicking 'Connect', as shown in the figure above.

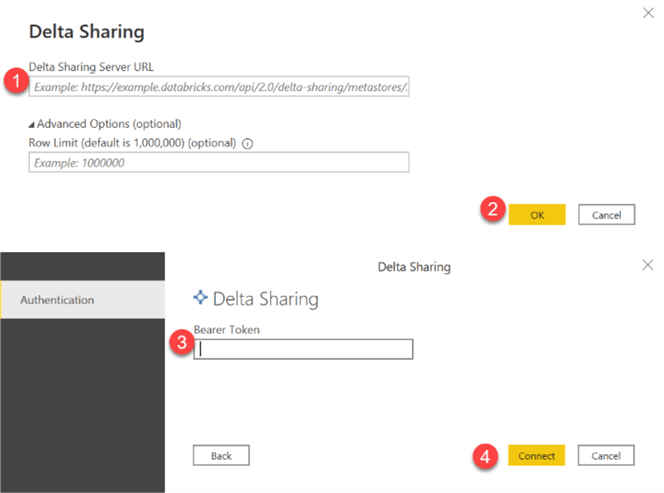

In the configuration section, you'll then need to enter the Delta Sharing Server URL and the Bearer Token for authentication, as shown in the figure above. Also notice the optional Advanced Options setting for a row limit, which caps the number of rows that can be retrieved from the source dataset.

Summary

In this article, you learned about the capabilities of Delta Sharing and how it keeps data providers and data recipients closely aligned through access to live data residing in a variety of sources on multiple cloud platforms, including Azure Data Lake Storage Gen2. Because the project is open source, contributors from the global developer community can propose or add connectors and other contributions as it continues to grow. Delta Sharing reduces the complexity of ELT and manual sharing and avoids lock-in to a single platform. Its roadmap aims to extend sharing to other use cases, including ML models, streaming for incremental data consumption (building on its existing support for the Delta format), sharing of views, and much more. Together, these secure, live data sharing capabilities promote a scalable and closely connected interaction between data providers and consumers within the Lakehouse paradigm.

Next Steps

- Read more about Delta Sharing - Delta Lake

- Explore the Delta Sharing GitHub Repository GitHub - delta-io/delta-sharing

- Read the following article: Databricks Unveils Delta Sharing, the World's First Open Protocol for Real-Time, Secure Data Sharing and Collaboration Between Organizations - Databricks

- Read more about Delta Sharing - Databricks

- Read the following article: Databricks introduces Delta Sharing, an open-source tool for sharing data | TechCrunch

About the author

Ron L'Esteve is a trusted information technology thought leader and professional Author residing in Illinois. He brings over 20 years of IT experience and is well-known for his impactful books and article publications on Data & AI Architecture, Engineering, and Cloud Leadership. Ron completed his Master’s in Business Administration and Finance from Loyola University in Chicago. Ron brings deep tec

This author pledges the content of this article is based on professional experience and not AI generated.