By: Koen Verbeeck | Updated: 2021-11-04 | Comments | Related: More > Professional Development Skills Development

Problem

In this tip, we'll walk you through a typical day of a business intelligence developer. More specifically, a developer using the Microsoft Data Platform stack. If you're considering a career change into this direction, this tip will hopefully give you some insight on the day-to-day responsibilities.

Solution

Of course, the day is fictional and all the common tasks are put together. In a typical day, you might perhaps work the whole day on a piece of ETL for example, instead of covering the entire stack at once.

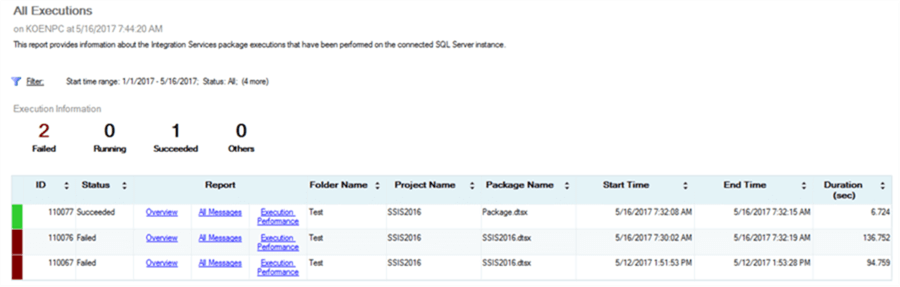

Checking the Logs

First in the morning, we typically check the logs or the monitoring solution if something went wrong during the night. Maybe an email was sent with an error message, or a custom dashboard was created showing metrics about the business intelligence platform.

This was especially important during the days of the "nightly batch load". Typically, ETL processes ran after office hours to not disturb any business processes. The first person arriving in the morning had to check if this process had failed, so it could be fixed and restarted before the business people looked at their reports. Businesses have become more international due to globalization and the continuous online presence, so nowadays running a batch job during "office hours" has become more difficult since the business is now 24/7. In this case, long batch jobs have shifted to shorter jobs running more frequently, to micro-batch jobs running almost continuously, to finally event-driven or streaming data for data analysis. In all those cases, monitoring and logging is crucial to ensure smooth business.

The following tips can get you started on this topic:

- How to setup SQL Server alerts and email operator notifications

- Sending email from SQL Server Integration Services

- Integrated Logging with the Integration Services Package Log Providers

- Integration Services Logging Levels in SQL Server 2016

- Reporting with the SQL Server Integration Services Catalog

- SQL Server Reporting Services Log Files for Troubleshooting

- Monitoring SQL Server Analysis Services with Extended Events

- Setting up Azure Log Analytics to Monitor Performance of an Azure Resource

- Azure Data Factory Pipeline Logging Error Details

- Query Audit data in Azure SQL Database using Kusto Query Language (KQL)

Interviews with the Business

After ensuring everything is running as expected, we have some interviews with the business which is where our communication skills and problem-solving strategies are key. That's right, the "business" side of "business intelligence". It's not all about writing SQL queries and creating BI reports in Microsoft Power BI or Tableau, you have to know what the business actually wants to see in those reports - business requirements. During those interviews, you try to determine what kind of reports/dashboards the business want (and how they intend to use them). What kind of data is involved, where that data might come from, if any calculations are involved at all et cetera. A BI developer will eventually know a lot about the various processes going on in a company.

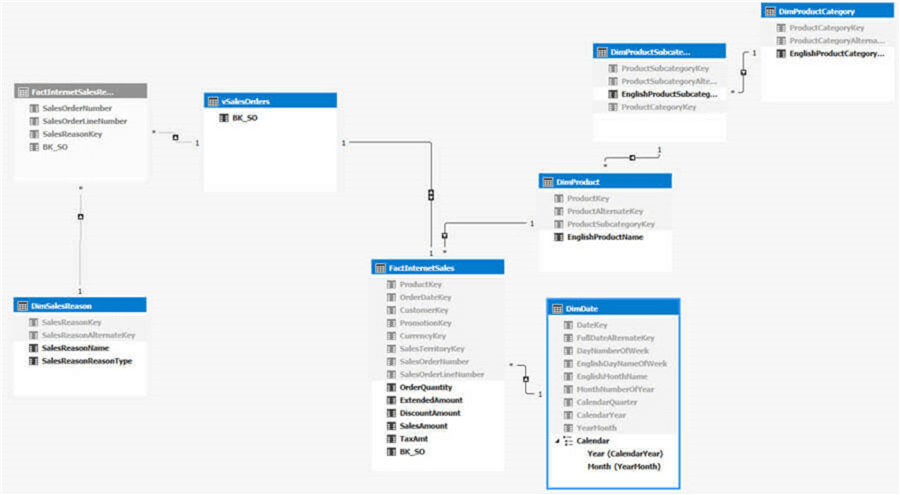

After or during the interview, you can condense the information into a star schema. What are the dimensions involved, what are the fact tables? It's a good idea to involve the business in this modelling process, as they will be the ones directly using it (especially if they for example connect live to a model with an Excel PivotTable. They will interact directly with the dimensions and fact tables).

You can model your data warehouse in SQL Server of course, but also in Azure SQL DB or Azure Managed Instance if you're working in Azure, or Azure Synapse Analytics for the bigger workloads.

Having a Stand-up

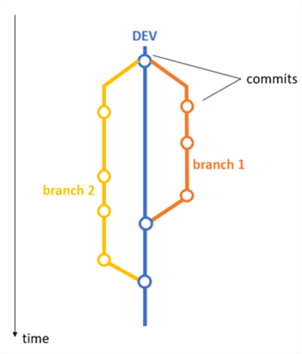

Many IT departments are starting to embrace an agile mindset when it comes to delivering software. Business intelligence has been lacking behind a bit compared with "more traditional" software development, but luckily, it's become common to work with tools supporting agile development and application lifecycle management for BI projects. For example, Azure Devops supports agile boards, work items, CI/CD pipelines, git integration and much more.

No matter what methodology you're using, daily standups have become part of our routines. Somewhere during the morning, the team (or squad) gets together and everybody has a brief moment of time to explain what they've been doing, what they're planning on doing and if there are any issues. Synchronizing helps the team to stay on track.

To help you get started with an agile lifestyle:

- Azure DevOps for Agile Projects

- Azure DevOps CI CD using GitHub Repo and Visual Studio Azure SQL Database Project

- How to Add SQL Server Integration Services Projects to Azure Devops for Version Control

- Deploy Azure Data Factory CI/CD Changes with Azure DevOps

- Branching and Merging Strategy in Git for SSIS Projects

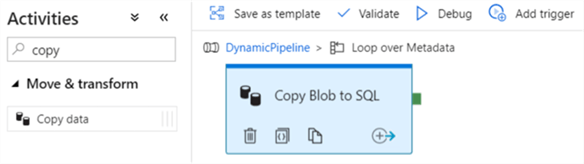

Writing the ETL

Whether it's ETL or ELT, working with a BI tool like SSIS, Azure Data Factory or Azure Databricks, at some point data has to move from one data source to another relational database. Possibly with some transformations as well. Typically, writing the ETL and everything that comes with it (data modeling, data manipulations, data quality, data governance, writing SQL queries, creating storage, et cetera) takes up the biggest chunk of a project. Aside from writing the ETL, you also have to automate it and build some monitoring/logging (as mentioned in the first paragraph).

Writing SQL is an important aspect, but recently other languages are becoming more important as well. For example, if you use Azure Databricks, you can write Python, Java or Scala. Or if you use an Azure Function for a specific data manipulation, you have even more languages to choose from. On the other hand, some tools are low-code (e.g. SSIS) to even no-code solutions (e.g. Azure Logic Apps and Azure Data Factory). Choosing the correct tool for the task at hand will always stay very important, but it doesn't hard to have a broad knowledge of the tools and their programming languages.

- To get started with SSIS, check out this tutorial.

- For working with master data, you can use the tool SQL Server Master Data Services.

- You can find an overview of Azure Data Factory here, and a list of all related tips here.

- An introduction to Azure Databricks is given in the tip What is Azure Databricks?.

- You can find an example on Azure Functions here, and one on Azure Logic Apps here.

Creating a Model

Often a semantic layer is built on top of the data warehouse as it makes it much more intuitive for end users to slice and dice the data. The most commonly used models nowadays are Analysis Services Tabular and the Power BI model. Both are actually the same technology behind the scenes.

You load the data into a star schema, again in close collaboration with the business. They are going to use it after all. It is important to use clear and user-friendly names for the columns and measures. The semantic model is a user interface for your data.

If necessary, extra calculations and measures can be added into the model. It's a good practice to do calculations as early as possible in the process (for example in the data warehouse itself or in the ETL tool), but some measures can only be written in the model itself, such as time intelligence calculations.

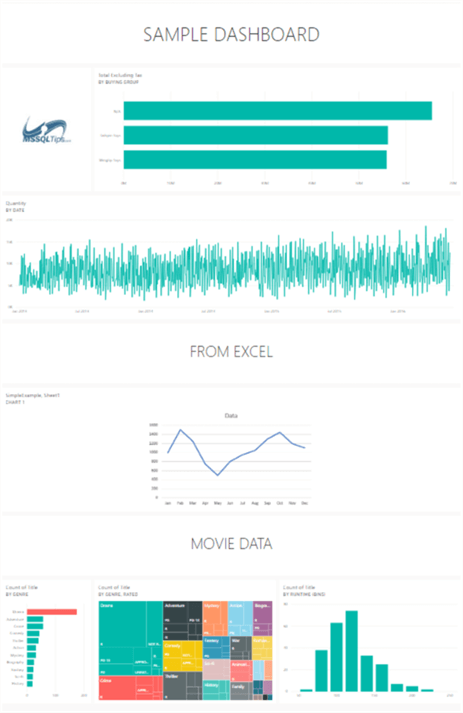

Developing Reports and Dashboards

After all that hard work and coding, it's time to let the data shine. You can build reports directly on the data warehouse using SSRS report builder for example, or you can connect to the model using Power BI Desktop. If you want to crunch numbers, you can also use Excel to analyze the data from the model.

After your data visualizations are finished, you need to find a way to share them with you company. Are you going to use SSRS Report Server? Or the Power BI service? What about subscriptions? Maybe people want their report email to them. There are many options and it's up to you to figure it out with the business what the requirements are.

- Here are some tips on how to create specific types of charts in SSRS:

- An overview of Power BI Tips

- How to Build your First Power BI Dashboard

- Analyzing Power BI data with Excel

Giving Support

Once everything is put into production and the users are happily using their dashboards, it doesn't end there. Before our day is finished, it's possible you have to provide some support. These can be a myriad of possible tasks:

- Users don't understand how to use the reporting tool and you might need to explain it to them. It might be a good idea to create training material and technical documentation to capture recurring questions.

- There might be a bug. The ETL is crashing or maybe some number in a report doesn't seem to be correct. It's up to you to figure out what's going on and fix it as soon as possible.

- Change requests will keep coming. A data warehouse is never finishes. Maybe users want extra reports or dashboards, or they want smaller changes like an extra column in a report, a different color here, a bigger font size there.

- Performance tuning. This can be done in any layer of your business intelligence solution. The report can be slow (believe me, users will quickly let you know that is the case), the ETL might be running for a long time or the model takes forever to process. As a BI developer, you'll need to know about performance tuning techniques and it definitely won't hurt to know how indexes work.

Some tips to get you started:

- Reduce the Size of an Analysis Services Tabular Model – Part 1 and Part 2. The advice given there is also valid for Power BI models.

- SQL Server Performance Tuning and Monitoring Tutorial

- SSIS Catalog Maintenance in the Azure Cloud

- Improve SSIS data flow buffer performance

- You can find a webinar about SQL Server BI Best Practices (SSIS, SSAS, OLAP and SSRS) here.

- SQL Server Index Tutorial Overview

Next Steps

- How does your day look like? What's your work experience like? Let us know in the comments!

- My First SQL Server Business Intelligence Project Tutorial

- How To Become a Successful BI Developer

About the author

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.

Koen Verbeeck is a seasoned business intelligence consultant at AE. He has over a decade of experience with the Microsoft Data Platform in numerous industries. He holds several certifications and is a prolific writer contributing content about SSIS, ADF, SSAS, SSRS, MDS, Power BI, Snowflake and Azure services. He has spoken at PASS, SQLBits, dataMinds Connect and delivers webinars on MSSQLTips.com. Koen has been awarded the Microsoft MVP data platform award for many years.This author pledges the content of this article is based on professional experience and not AI generated.

View all my tips

Article Last Updated: 2021-11-04